A few years ago VAST added a tabular capability to a growing list of supported data presentations. That capability, called the VAST DataBase, works as the data layer for massively parallel processing (MPP) engines like Spark and Trino. It accelerates read-intensive analytic workloads by executing scans and filters on data structures that are optimized for native flash and integrated into an architecture built for the AI era.

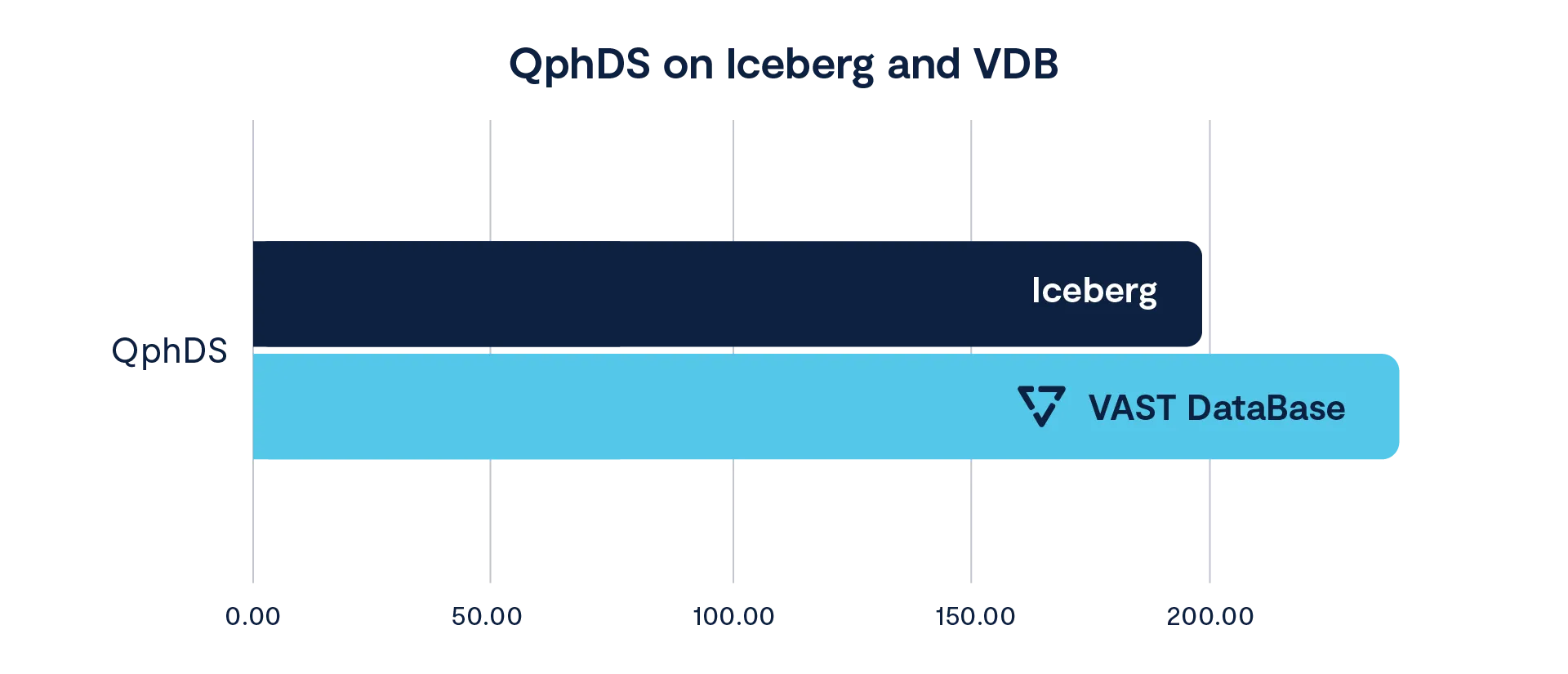

By itself, the VAST DataBase (VDB) accelerates real-world workloads. A manufacturer that recently moved its analytics solution onto VAST accelerated its primary workloads 18% beyond Iceberg by using VDB for its tabular data.

These are real-world savings, not the result of an abstract benchmark that was tuned ahead of time. Taken on its own, this is a major improvement to a class of solution that can almost be described as settled science. But talking about old-school warehousing performance in the abstract ignores whole classes of capability that could have a major impact on your business.

Operational Data Stores and Transactional Data Warehousing and Streaming Change-Data Capture (Oh My!)

In recent years the functions of operational data stores (ODS) have roosted in a range of products, each focusing on specific needs - Pinot or Druid for low-latency dashboard analysis, RocksDB for feature stores, maybe some side Iceberg tables for various materialized views, et cetera. The common thread is real-time access. The flexible nature of VDB makes it well suited to combining these functions into a single system that simplifies complex environments. Hierarchical sorted projections enable low-latency key/value retrieval, while the fine-grained columnar nature of the physical format excels at analytics involving full table scans. Put those two capabilities together and you can run reports over a full dataset, perform fast lookups on a feature store, and do everything in between.

VAST’s flexibility has ramifications across a range of workflows. Let's look at a few capabilities, with some examples:

Fast Lookups

Sorted tables have been in VAST since March 2025. A sorted table is an optimized, live presentation of a fully mutable table where VAST maintains the physical data layout according to the user's selected sorting hierarchy. This approach to data optimization gives us logarithmic time complexity for accessing the rows in a table: O(log(n)), where n is the number of rows in the table. The analogous “data lake” optimization is partitioning (or bucketing), which differs from sorting in some subtle and not-so-subtle ways:

Partitioning works great for low-cardinality data but breaks down on high-cardinality data, which requires a bucketing approach that, in turn, requires work-arounds to handle effectively.

Partitioning and bucketing create a lot of stress during write operations, using a lot of memory and CPU and often forcing multiple partitioning stages.

Partitions can only go so far in guiding your engine to the desired data - last-mile scans of entire row groups are still needed to fish out small numbers of records.

Partitions are great for allowing processing engines to efficiently distribute several types of processing operations - joins in particular. Table sorting cannot affect these operations, so there’s a win for partitioning! (Partitions are available in VAST 5.5.)

Partitions in Iceberg can be “sorted”; however, this nomenclature is somewhat deceptive. It involves minimizing the overlap between the row groups of selected columns within Parquet files. This is an incremental optimization that doesn’t approximate a sorted table.
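To make the contrast between sorting and bucketing concrete, here is a minimal sketch in plain Python. It is a toy model and not VAST or Iceberg code: the function names are hypothetical, the "table" is an in-memory list, and `key % n_buckets` stands in for a bucketing hash. It only illustrates why a sorted layout gives an O(log(n)) lookup while a bucketed layout still needs a last-mile scan of the chosen bucket.

```python
import math


def build_sorted_table(n_rows):
    """Return parallel lists: keys in sorted order and one value per key."""
    keys = list(range(n_rows))
    values = [k * 2 for k in keys]
    return keys, values


def build_buckets(keys, values, n_buckets):
    """Distribute rows into buckets by key modulo n_buckets (stand-in for a hash)."""
    buckets = [[] for _ in range(n_buckets)]
    for k, v in zip(keys, values):
        buckets[k % n_buckets].append((k, v))
    return buckets


def sorted_lookup(keys, values, key):
    """Binary search over sorted keys; returns (value or None, loop probes)."""
    lo, hi, probes = 0, len(keys), 0
    while lo < hi:
        mid = (lo + hi) // 2
        probes += 1
        if keys[mid] < key:
            lo = mid + 1
        else:
            hi = mid
    if lo < len(keys) and keys[lo] == key:
        return values[lo], probes
    return None, probes


def bucketed_lookup(buckets, key):
    """Jump to one bucket, then scan it; returns (value or None, rows examined)."""
    examined = 0
    for k, v in buckets[key % len(buckets)]:
        examined += 1
        if k == key:
            return v, examined
    return None, examined


if __name__ == "__main__":
    n_rows, n_buckets = 100_000, 64
    keys, values = build_sorted_table(n_rows)
    buckets = build_buckets(keys, values, n_buckets)
    print("sorted lookup (value, probes):    ", sorted_lookup(keys, values, 99_999))
    print("bucketed lookup (value, examined):", bucketed_lookup(buckets, 99_999))
    print("ceil(log2(n_rows)):", math.ceil(math.log2(n_rows)))
```

In this model the sorted lookup needs roughly log2(n) probes, while the bucketed lookup can examine up to n / n_buckets rows. Adding buckets shrinks the scan but never removes it.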

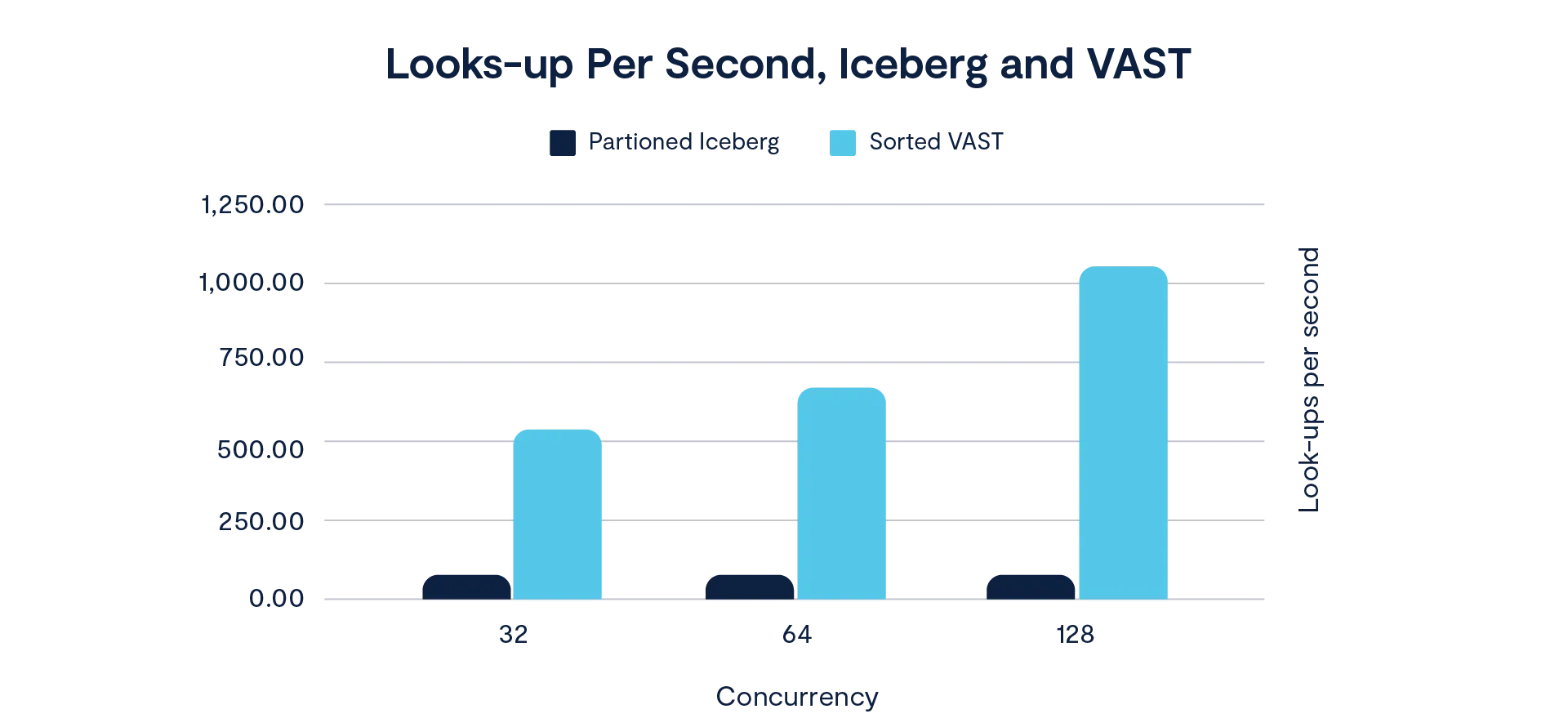

Sorting in VDB is simple to use and understand, but how well does it work? We ran a point-query workload in which very small result sets - often a single row - were selected from large tables (10 billion rows) at high concurrency. This is very much like a key/value workload where data is searched on a high-cardinality column at high concurrency. The Iceberg table was partitioned into 1024 buckets along the search column; the VAST tables were sorted on the same column.

Lookup times per thread were 120 ms for VAST at the highest concurrency factor, with the Iceberg searches clocking in at 1.6 seconds. This is due to VAST's ability to sort tables and return data projections from storage using predicate push-downs - a process where precise data is requested of (and returned from) the storage layer. The term “predicate push-down,” when used in the context of Iceberg, refers to the filter being applied at the Parquet-reader level, not the storage layer. Even when the filters are “pushed down” to Parquet data, the Parquet reader still has to pull entire row groups across the network, forcing clients (the data processing engine) to render the requested projection. In extremely large tables this can lead to bandwidth exhaustion as well as extra compute overhead in the processing cluster.
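The difference between the two meanings of “predicate push-down” can be sketched with a toy model. Everything below is hypothetical and simplified: `RowGroup`, `reader_level_filter` and `storage_level_filter` are illustrative names, not Parquet, Iceberg or VAST APIs. The model counts only the rows that would cross the network in each case.

```python
from dataclasses import dataclass


@dataclass
class RowGroup:
    min_key: int
    max_key: int
    rows: list  # (key, payload) tuples


def make_row_groups(n_rows, group_size):
    """Split keys 0..n_rows-1 into consecutive row groups with min/max statistics."""
    groups = []
    for start in range(0, n_rows, group_size):
        end = min(start + group_size, n_rows)
        rows = [(k, f"payload-{k}") for k in range(start, end)]
        groups.append(RowGroup(rows[0][0], rows[-1][0], rows))
    return groups


def reader_level_filter(row_groups, key):
    """Prune row groups by statistics, ship each surviving group whole,
    and let the client filter. Returns (matches, rows shipped)."""
    matches, shipped = [], 0
    for group in row_groups:
        if group.min_key <= key <= group.max_key:
            shipped += len(group.rows)  # the entire row group crosses the network
            matches.extend(row for row in group.rows if row[0] == key)
    return matches, shipped


def storage_level_filter(row_groups, key):
    """Evaluate the predicate where the data lives and ship only matching rows.
    Returns (matches, rows shipped)."""
    matches = [
        row
        for group in row_groups
        if group.min_key <= key <= group.max_key
        for row in group.rows
        if row[0] == key
    ]
    return matches, len(matches)


if __name__ == "__main__":
    groups = make_row_groups(n_rows=10_000, group_size=1_000)
    print("reader-level: ", reader_level_filter(groups, 4242)[1], "rows shipped")
    print("storage-level:", storage_level_filter(groups, 4242)[1], "rows shipped")
```

Both functions return the same answer. What differs is how much data moves to get it, and that gap is what grows with table size and concurrency.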

Bottom line: when referencing a VAST table, if you have a key, you get the value. In Iceberg, you get a bunch of data with what you want somewhere in it.

Mutable Data

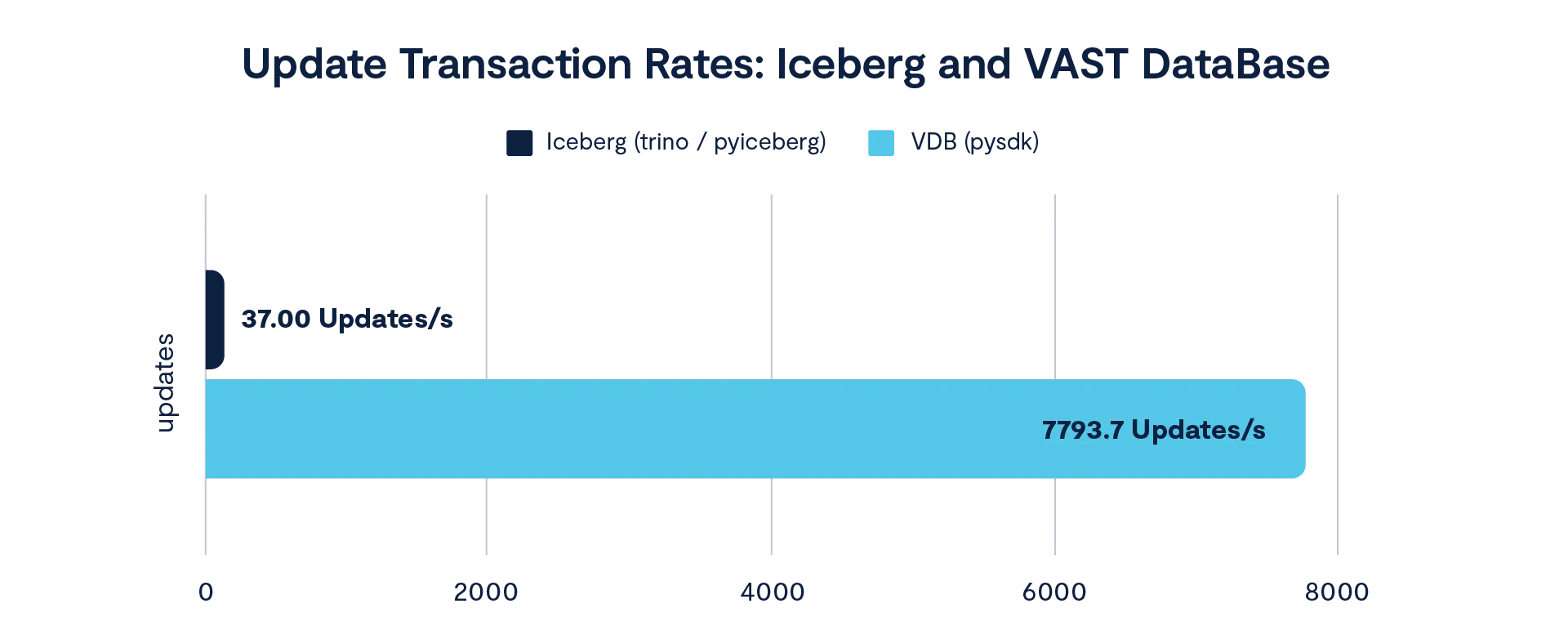

Another nicety of structured data on VAST is that it is fully transactional and supports high-speed updates. OLTP-like SQL operations can be executed in parallel, resulting in high transaction rates even on the columnar physical layout. Even better, VAST’s Python SDK can be used to execute large numbers of transactions in parallel from streaming sources. Here are some performance figures from a modest 3x1 VAST cluster (three stateless compute front-ends and a single flash enclosure). The workload was as follows:

A 1-billion-row table with 10 columns was updated.

Updates were batched in groups of 500, each targeting a random row.

Each update changed a random set of columns, with a 20% chance of update per column.

Trino was used to execute MERGE updates on the Iceberg table.

The vastdb Python SDK was used to update the VAST table by collating Arrow data frames (resulting in dozens of effective batches within the outer batches).
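The shape of this workload can be sketched in plain Python. This is not the benchmark code and it does not call the vastdb SDK. The names below are hypothetical, and grouping updates by the set of columns they touch is only one plausible reading of “collating” - an assumption on our part. Each such group is rectangular, so it could be expressed as a single Arrow table.

```python
import random
from collections import defaultdict

N_COLUMNS = 10            # columns in the table
BATCH_SIZE = 500          # updates per outer batch
UPDATE_PROBABILITY = 0.2  # chance that any given column is changed


def make_update_batch(n_rows, rng):
    """Return BATCH_SIZE updates as (row_id, {column_name: new_value})."""
    batch = []
    for _ in range(BATCH_SIZE):
        row_id = rng.randrange(n_rows)
        changes = {
            f"c{i}": rng.random()
            for i in range(N_COLUMNS)
            if rng.random() < UPDATE_PROBABILITY
        }
        batch.append((row_id, changes))
    return batch


def collate_by_columns(batch):
    """Group updates touching the same column set (assumed collation strategy).

    Updates that selected no columns are dropped because they change nothing.
    """
    groups = defaultdict(list)
    for row_id, changes in batch:
        if changes:
            groups[tuple(sorted(changes))].append((row_id, changes))
    return dict(groups)


if __name__ == "__main__":
    rng = random.Random(0)
    batch = make_update_batch(1_000_000_000, rng)
    groups = collate_by_columns(batch)
    print(f"{len(batch)} updates collated into {len(groups)} column-set groups")
```

Because each row draws its own column set, one outer batch of 500 updates fans out into many smaller homogeneous groups. That is the sense in which an outer batch contains many effective batches.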

The very first observation you’ll make when seeing a comparison like this might be one of three things:

This looks like one result (look carefully, there are two)

This compares an SQL engine (Trino) to a lower-level SDK

You would never use this update methodology to interact with an Iceberg table

These last two observations are related. Iceberg is intrinsically coupled with a containerized object back-end that requires a metastore for coordination. A Hive metastore was used in these tests to coordinate both the Trino and PyIceberg tests (both yielding similar results). Iceberg's low update rates are due principally to the serial behavior needed to keep data coherent using a handful of manifest objects on high-latency object storage. It’s impossible to parallelize small discrete transactions in this context, so lower-level interfacing isn’t even an option. Which takes us to the third observation: “you would never do this anyway” - this is the most pertinent. You’ll never do this. In reality, you’ll accumulate changes over time in another table and occasionally merge them in a single long-running transaction, which is not a real-time, in-band operation.

This comparison is lopsided and unfair because high-speed, operational updates aren’t an intended function of Iceberg. They are an intended function of VDB.

Streaming Transaction Target

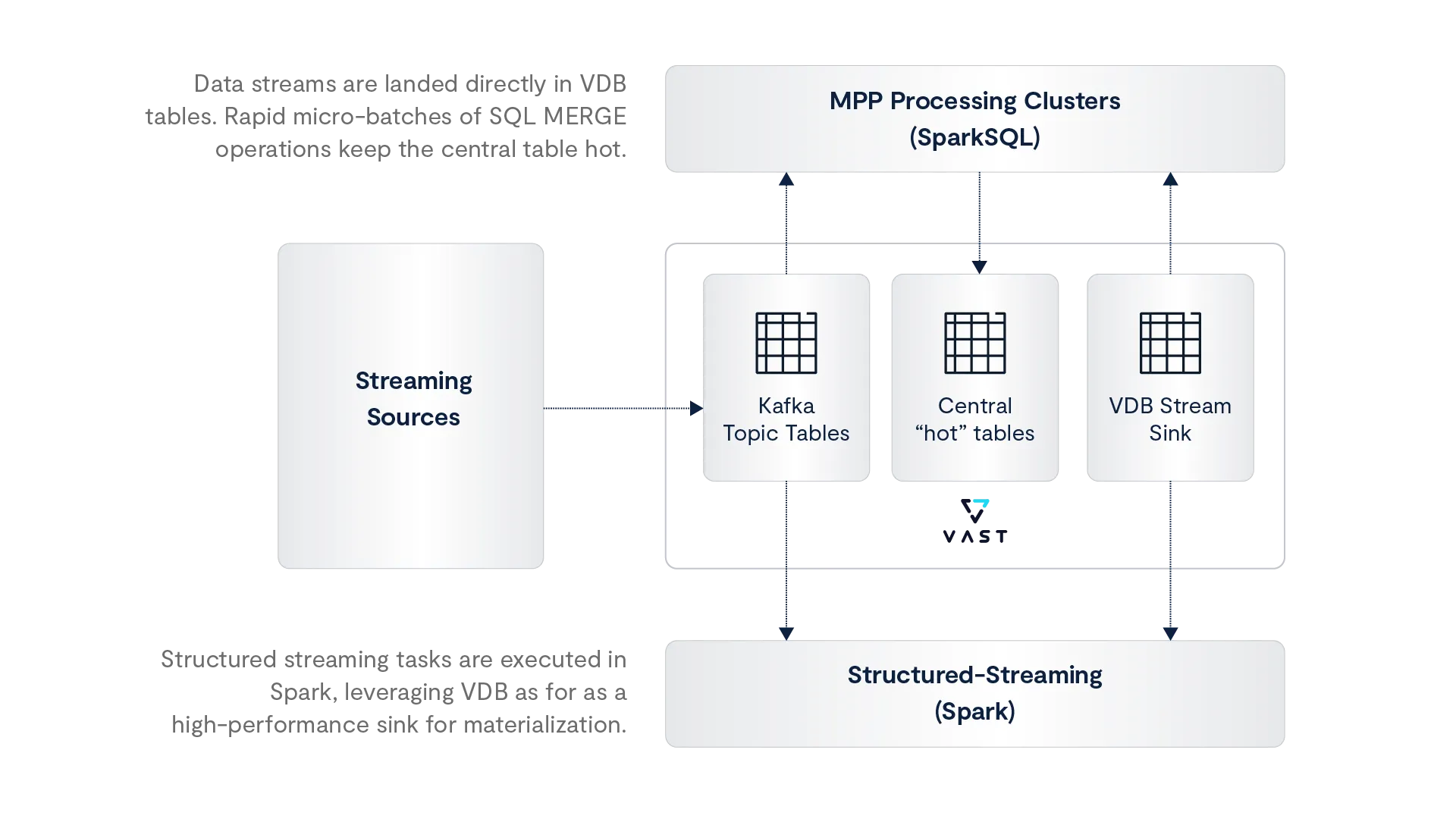

So we can transact. Now what? Let’s stream. In one anonymized use case, web transactions and telemetry data are applied to VDB tables in real time. Since VAST itself is a Kafka-compatible message broker, the vastdb Python SDK is used to subscribe to change-data topics and apply updates to a hot central table. Now, let’s discuss another workflow optimization: since the VAST message broker uses VDB tables for topic state, these tables can be used to directly update other tables, micro-batching narrow time windows courtesy of table sorting:

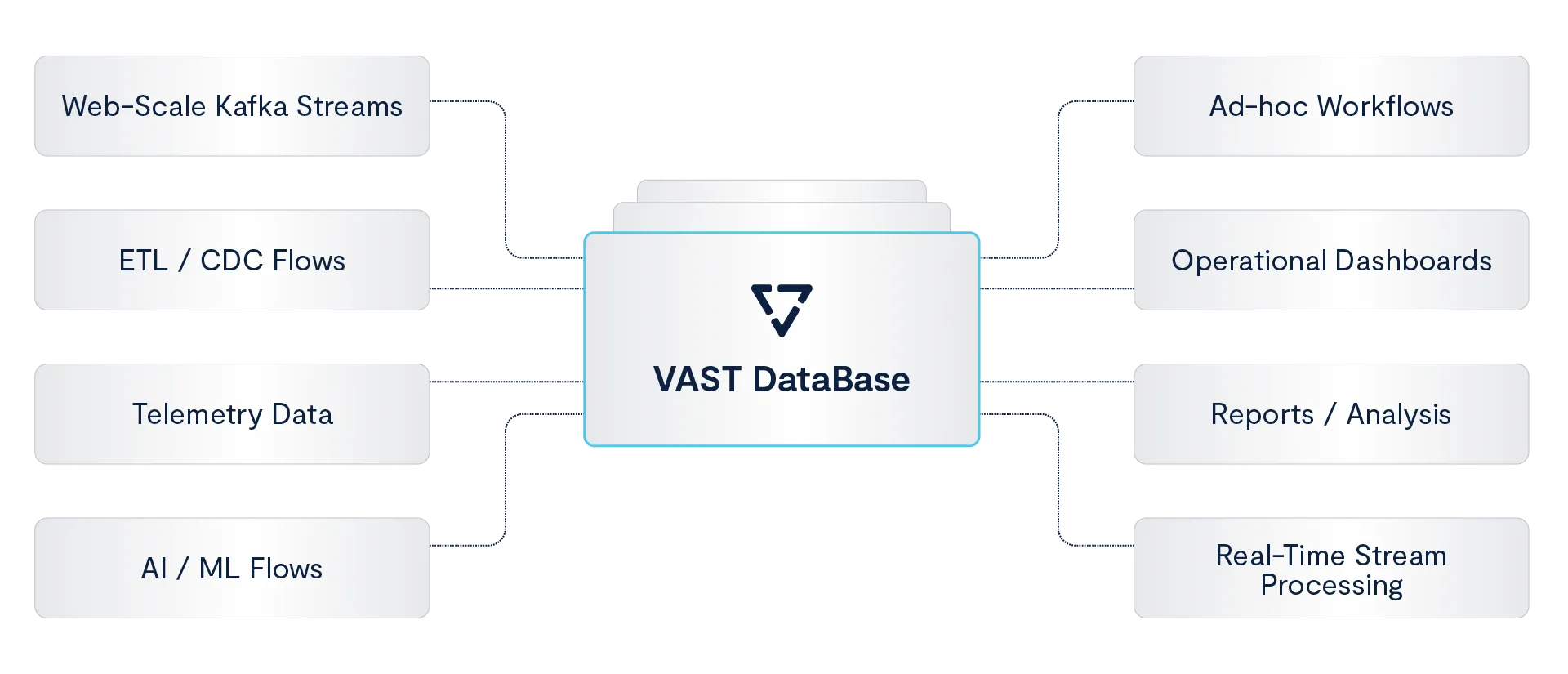
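The micro-batching idea can be sketched in a few lines of plain Python. This is a toy model under stated assumptions, not the VAST broker or SDK: the topic table is a list of events sorted by timestamp, the hot table is a dictionary, and both function names are hypothetical. Because the events are sorted, a narrow time window is located with two binary searches rather than a scan.

```python
import bisect


def window_slice(timestamps, events, start_ts, end_ts):
    """Return events with start_ts <= timestamp < end_ts.

    `timestamps` must be sorted and parallel to `events`, which mirrors a
    topic table kept sorted on its timestamp column.
    """
    lo = bisect.bisect_left(timestamps, start_ts)
    hi = bisect.bisect_left(timestamps, end_ts)
    return events[lo:hi]


def apply_micro_batch(hot_table, micro_batch):
    """Upsert each (timestamp, key, value) event; later events win."""
    for _, key, value in micro_batch:
        hot_table[key] = value
    return len(micro_batch)


if __name__ == "__main__":
    events = [(0, "a", 1), (5, "b", 2), (10, "a", 3), (15, "c", 4), (20, "b", 5)]
    timestamps = [ts for ts, _, _ in events]
    hot_table = {}
    applied = apply_micro_batch(hot_table, window_slice(timestamps, events, 5, 20))
    print(applied, hot_table)
```

Advancing the window bounds on each pass applies every event once, and each pass touches only the rows inside its window.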

The above workflow demonstrates the ability to simplify an ecosystem that needs to scale seamlessly with growing business demands and workflows. Implementing the workflow with traditional tools would have required separate Kafka clusters, NiFi clusters for data transformation, additional databases for housing operational data (RocksDB or PostgreSQL), additional table formats for the central lakehouse, and split pipelines for real-time analysis - all of which would have necessitated more workflows with cascading dependencies. With low-latency transactional tables that also serve analytics, plus in-VAST Kafka support, the environment is distilled down to VDB tables and a single processing engine. This de-risks the environment by making it simple, performant and predictable.

The Big Picture

This is a small part of the VAST ecosystem, and this writing concerns itself with details. The broader context is the VAST DataEngine, where data operations are orchestrated, bringing streaming, structured and unstructured data together into a managed execution layer for high-performance analytics, AI workflows and everything in between. These are exciting times at VAST. Contact us to see how we can help you.