Traditional data platforms force you to choose between performance and scale, creating complexity as your requirements evolve. VAST DataBase removes that trade-off with a unified architecture that handles diverse workloads and delivers performance at scale without adding operational complexity.

Scale & Speed

Achieve exabyte-scale and sub-millisecond performance with VAST DataBase. Our DASE architecture eliminates traditional bottlenecks, delivering linear scaling that grows with your needs.

Built for Performance, Engineered for Growth

Exabyte Scale

DASE Architecture: Shared-Everything Approach

VAST's Disaggregated Shared-Everything (DASE) architecture separates compute from storage, allowing independent scaling without traditional limitations. Stateless compute nodes (CNodes) connect to all-flash data storage nodes (DNodes) over high-speed NVMe-oF fabric, creating a "shared-everything" model where every node can access the entire global dataset directly.

Linear Performance at Scale

Adding compute nodes (CNodes) immediately increases processing capacity without bottlenecks or re-partitioning. VAST’s all-flash architecture with Storage Class Memory buffers enables consistent performance, even at exabyte scale. Throughput scales directly with concurrency, allowing organizations to grow data infrastructure without operational complexity.

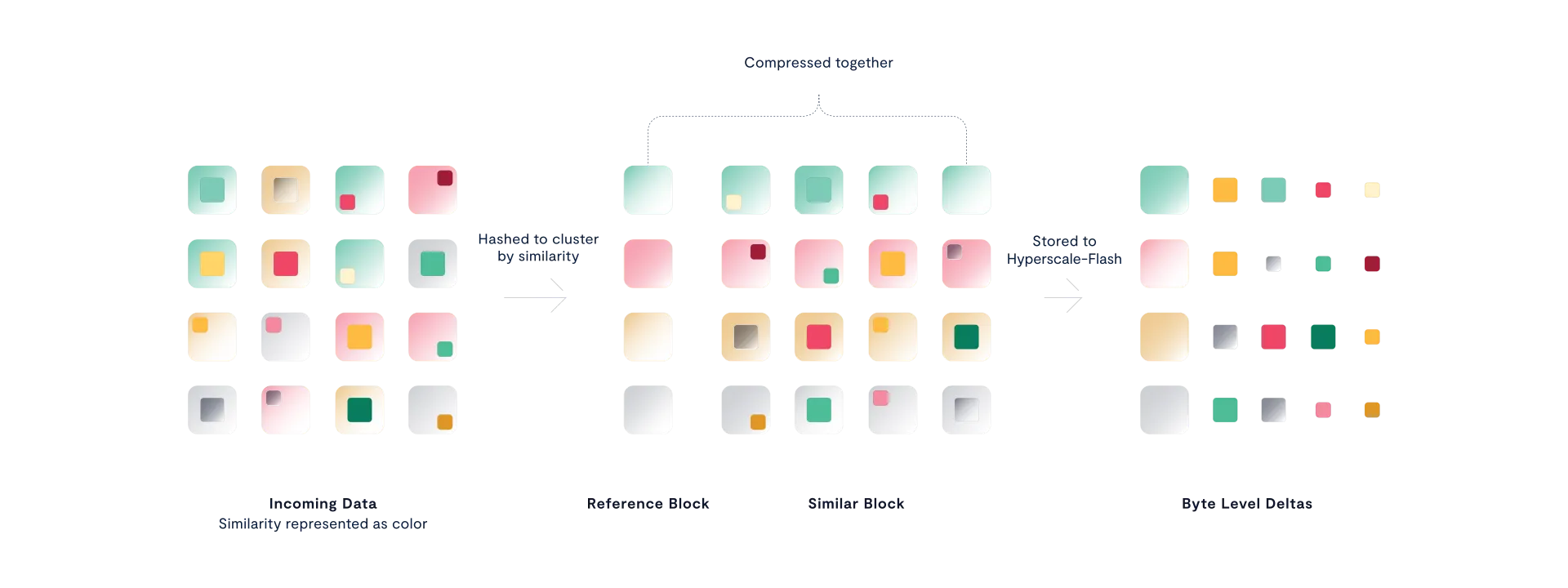

Similarity-Based Reduction

Global Pattern Recognition

Unlike traditional compression that works on individual files, VAST's Similarity-based Data Reduction operates across the entire data store—all files, objects, and tables. It identifies similar (not just identical) data blocks at two-byte granularity and compresses them together using advanced algorithms like Zstandard. This global approach finds patterns that single-file methods miss entirely.
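
To make the idea concrete, here is a minimal sketch of why compressing similar blocks together can beat compressing each one alone. It is an illustration only, not VAST's implementation: it uses Python's standard `zlib` in place of Zstandard, it skips the similarity-detection step entirely by generating blocks that are already known to be similar, and the helper names (`make_similar_blocks`, `compressed_separately`, `compressed_together`) are hypothetical.

```python
import random
import zlib


def make_similar_blocks(count: int, size: int, seed: int = 0) -> list[bytes]:
    """Build `count` blocks that are similar but (almost surely) not identical.

    Each block is a copy of one random base block with a few bytes changed,
    so exact-match deduplication would find little or nothing to remove.
    """
    rng = random.Random(seed)
    base = bytes(rng.getrandbits(8) for _ in range(size))
    blocks = []
    for _ in range(count):
        block = bytearray(base)
        for _ in range(8):  # overwrite 8 random positions per block
            block[rng.randrange(size)] = rng.getrandbits(8)
        blocks.append(bytes(block))
    return blocks


def compressed_separately(blocks: list[bytes]) -> int:
    """Total size when every block is compressed on its own."""
    return sum(len(zlib.compress(b)) for b in blocks)


def compressed_together(blocks: list[bytes]) -> int:
    """Total size when similar blocks share one compression stream."""
    return len(zlib.compress(b"".join(blocks)))


blocks = make_similar_blocks(count=16, size=1024)
separate = compressed_separately(blocks)
together = compressed_together(blocks)
print(f"separate: {separate} bytes, together: {together} bytes")
```

Each block on its own looks like random noise and barely compresses. Grouped together, the compressor can encode most of every later block as a reference to an earlier one, which is the effect that a global, similarity-aware approach aims to exploit.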

Minimum 3:1 Reduction on Any Data

VAST delivers minimum 3:1 compression ratios even on challenging datasets like pre-compressed files, encrypted data, or large AI embeddings. Traditional compression struggles with this data because exact patterns are rare, but similarity compression finds correlations across the entire namespace, dramatically reducing storage footprint while maintaining sub-millisecond read performance.

Automatic Storage Optimization

The system continuously optimizes compression as data flows through Storage Class Memory buffers to all flash, requiring zero manual configuration. This eliminates the data engineering overhead of sizing files or managing partitions common with formats like Parquet, while making all-flash storage as affordable as traditional disk drives.

Small Chunks, Smart Filtration

Small Chunks, Big Acceleration

VAST DataBase stores data in 32KB variable-length chunks for ultra-granular filtering directly within the storage layer. This Smart Filtration approach ensures only precise, highly selective data—not full, unfiltered blocks—moves across the network. The result: up to 20× faster selective queries and dramatically lower compute and bandwidth costs.

The Filtration Advantage

By breaking data into microscopic 32KB chunks, VAST DataBase turns “needle-in-a-haystack” queries into instant lookups. Only the relevant sections are scanned—never the full dataset. This design delivers 5–20× faster selective query performance while using up to 90% less CPU than traditional object-based systems. Precision filtering replaces brute-force scans, enabling true real-time responsiveness at scale.
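
The effect of chunk size on selective queries can be sketched in a few lines. This example assumes each chunk carries minimum and maximum values as metadata so that non-matching chunks can be skipped; that mechanism, the `Chunk` class, and the chunk sizes (counted in rows rather than kilobytes) are illustrative assumptions, not a description of VAST DataBase internals.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    """A small run of column values plus hypothetical min/max metadata."""
    values: list[int]
    lo: int = field(init=False)
    hi: int = field(init=False)

    def __post_init__(self) -> None:
        self.lo = min(self.values)
        self.hi = max(self.values)


def split_into_chunks(values: list[int], chunk_len: int) -> list[Chunk]:
    """Cut a column into fixed-length chunks (the last one may be shorter)."""
    return [Chunk(values[i:i + chunk_len]) for i in range(0, len(values), chunk_len)]


def filtered_scan(chunks: list[Chunk], target: int) -> tuple[list[int], int]:
    """Return matching values and the number of chunks actually scanned."""
    matches: list[int] = []
    scanned = 0
    for chunk in chunks:
        if target < chunk.lo or target > chunk.hi:
            continue  # metadata proves this chunk cannot contain the target
        scanned += 1
        matches.extend(v for v in chunk.values if v == target)
    return matches, scanned


values = list(range(100_000))  # assumed: values arrive in ascending order
small = split_into_chunks(values, chunk_len=100)
large = split_into_chunks(values, chunk_len=10_000)

matches_small, scanned_small = filtered_scan(small, target=54_321)
matches_large, scanned_large = filtered_scan(large, target=54_321)

rows_read_small = scanned_small * 100
rows_read_large = scanned_large * 10_000
print(rows_read_small, rows_read_large)
```

Both layouts find the same row, but the small-chunk layout reads 100 rows to do it while the large-chunk layout reads 10,000. Smaller chunks mean less irrelevant data is touched per match.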

Sorted Tables for Log-Time Search

Sort Once, Query Forever

Unlike traditional systems that require continuous re-indexing or complex partitioning, VAST sorted tables need sorting only once at ingestion. This eliminates ongoing maintenance while enabling near-logarithmic (O(logN)) query speeds that remain consistent even as datasets grow.
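
Logarithmic lookup on sorted data is the same principle as a binary search. The sketch below uses Python's standard `bisect` module on an in-memory list as a stand-in for a sorted table; `sorted_lookup` and `worst_case_probes` are hypothetical helper names, and nothing here reflects how VAST stores or searches sorted tables internally.

```python
import bisect


def sorted_lookup(keys: list[int], target: int) -> int:
    """Return the index of `target` in sorted `keys`, or -1 if absent."""
    i = bisect.bisect_left(keys, target)
    return i if i < len(keys) and keys[i] == target else -1


def worst_case_probes(n: int) -> int:
    """Upper bound on binary-search probes for n sorted keys.

    For n > 0 this equals floor(log2(n)) + 1, computed without floats.
    """
    return n.bit_length()


keys = list(range(0, 2_000_000, 2))  # one million sorted even numbers
print(sorted_lookup(keys, 123_456))  # present
print(sorted_lookup(keys, 123_457))  # absent (odd number)

for n in (1_000_000, 1_000_000_000):
    print(f"{n:>13,} keys -> at most {worst_case_probes(n)} probes")
```

Growing the key set a thousandfold, from one million to one billion, raises the worst case from 20 probes to only 30. That is why lookup time on sorted data stays nearly flat as the dataset grows.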

100× Faster Point Queries

Benchmarks demonstrate 100× acceleration on single-key queries and 25× faster results for multi-key access patterns. Throughput scales linearly from 50,000 to 100,000 results per second as client concurrency increases. Traditional unsorted tables, by contrast, see query times rise from 2,500 to 10,000 milliseconds as datasets grow. This makes sorted tables essential for real-time AI inference, RAG applications, and fast analytics.

Predicate Pushdown

Query Processing at the Storage Layer

Instead of transferring entire datasets for external filtering, VAST pushes query logic directly to its storage nodes. Popular engines like Trino and Spark send their filtering operations to VAST, which executes the logic locally and returns only the exact results requested, not the raw data.

Faster Analytics with Lower Costs

Predicate pushdown minimizes the amount of data that must be read, transferred, and processed by applying filters as close to storage as possible. This reduces CPU usage and accelerates selective queries, improving overall workload efficiency.
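
The difference between filtering at the engine and filtering at storage can be sketched with a toy model. `StorageNode`, `read_all`, and `read_where` are hypothetical names invented for this illustration; they are not part of any VAST, Trino, or Spark API, and the "network" is just a counter of rows returned.

```python
from typing import Callable

Row = dict[str, int]
Predicate = Callable[[Row], bool]


class StorageNode:
    """Hypothetical stand-in for a storage layer that can evaluate filters."""

    def __init__(self, rows: list[Row]) -> None:
        self._rows = rows
        self.rows_sent = 0  # rows that crossed the simulated network

    def read_all(self) -> list[Row]:
        """No pushdown: ship every row to the query engine."""
        self.rows_sent += len(self._rows)
        return list(self._rows)

    def read_where(self, predicate: Predicate) -> list[Row]:
        """Pushdown: evaluate the filter locally and ship only matches."""
        result = [r for r in self._rows if predicate(r)]
        self.rows_sent += len(result)
        return result


rows = [{"id": i, "status": i % 10_000} for i in range(100_000)]


def is_match(row: Row) -> bool:
    """A highly selective filter: 10 of 100,000 rows (0.01%) qualify."""
    return row["status"] == 0


without_pushdown = StorageNode(rows)
client_filtered = [r for r in without_pushdown.read_all() if is_match(r)]

with_pushdown = StorageNode(rows)
pushed = with_pushdown.read_where(is_match)

print(without_pushdown.rows_sent, with_pushdown.rows_sent)
```

Both paths return the same 10 rows. Without pushdown, all 100,000 rows travel to the engine first. With pushdown, only the 10 matches do.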

In benchmark testing, VAST DataBase demonstrated exceptional performance on highly selective workloads: for a 0.01% selectivity query, VAST DataBase returned ~245,000 rows per second, 4.7× faster than traditional object-based systems (Apache Iceberg on S3), which returned ~52,000 rows per second for the same workload.
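
The 4.7× figure follows directly from the two quoted throughput numbers. As a quick check, treating both approximate values as exact:

```python
vast_rows_per_sec = 245_000     # approximate figure quoted above
iceberg_rows_per_sec = 52_000   # approximate figure quoted above

speedup = vast_rows_per_sec / iceberg_rows_per_sec
print(f"speedup: {speedup:.1f}x")
```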