The language of modern infrastructure is saturated with familiar claims: disaggregation, composability, scale-out. Nearly every storage or data platform provider now promises some version of these ideas. But as architectures converge rhetorically, the underlying implementations are beginning to diverge in ways that matter.

Nowhere is that clearer than in how vendors interpret a deceptively simple phrase: shared everything.

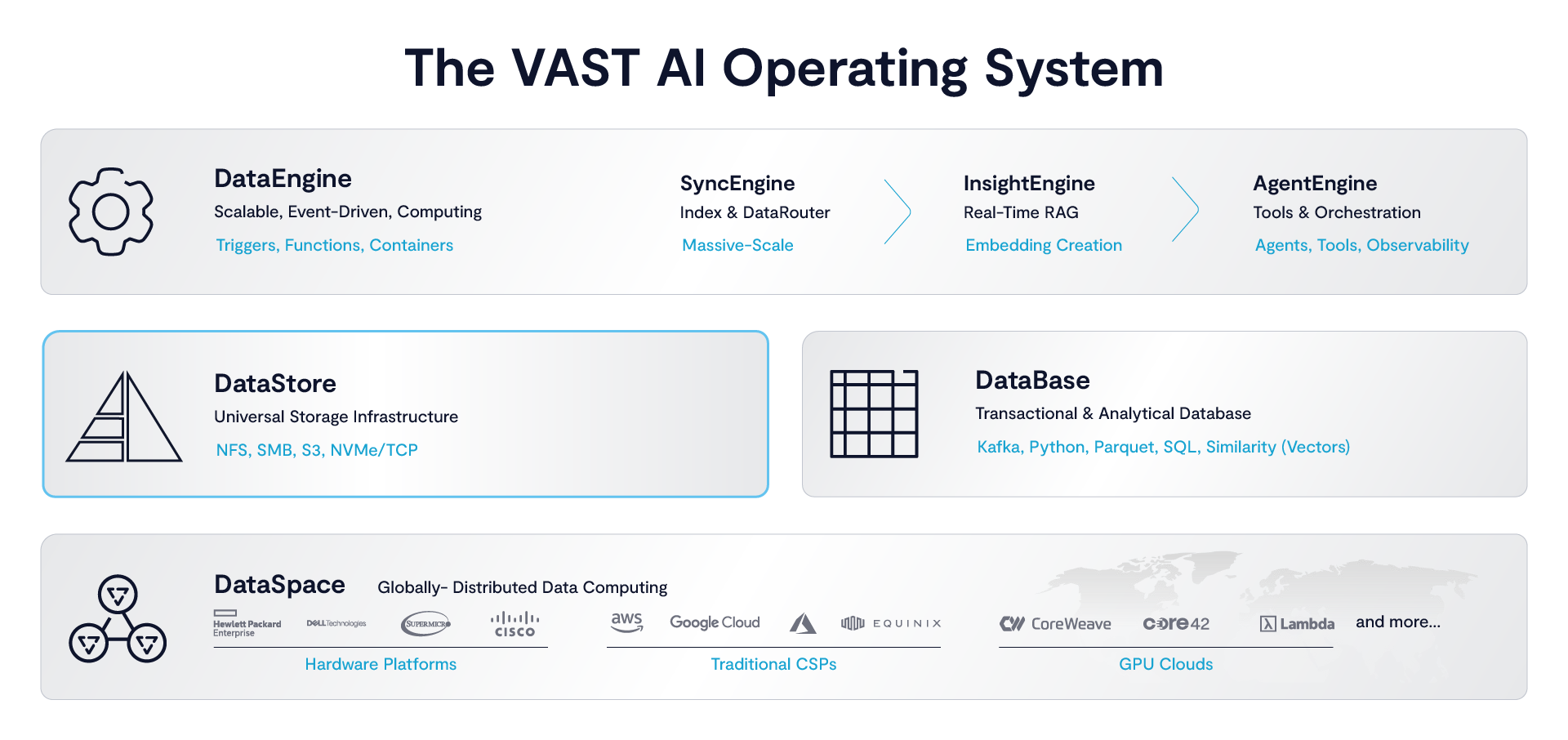

In a recent talk, Scott Howard, Field CTO at VAST, offered a definition that cuts through the ambiguity. Most systems, he noted, treat “shared” as a property of hardware: shared enclosures, shared disks, shared access paths. VAST’s DataStore, by contrast, extends that concept to its logical extreme. “We’re actually sharing the data and the metadata,” Howard said. “Any one of our storage controllers can access any of the SSDs, any of the data or metadata on any of those SSDs.”

That distinction between sharing infrastructure and sharing the entire data structure is where the architectural divergence begins.

The Limits of Disaggregation

Disaggregation has become the default design pattern for modern infrastructure. Separating compute from storage allows each to scale independently, improving utilization and flexibility. It is a necessary evolution from tightly coupled shared-nothing systems.

But disaggregation alone does not solve the coordination problem. In most implementations, it simply moves it.

When data is partitioned across nodes, even in a disaggregated system, ownership and locality still matter. Metadata services track where data lives. Access requires indirection. Operations like deduplication, compression, or protection are constrained by the boundaries of those partitions. As systems scale, so do the coordination costs - manifesting as metadata bottlenecks, rebalancing overhead, and inconsistent performance under load.
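To make the rebalancing cost concrete, here is a minimal sketch of what happens to ownership when a partitioned cluster grows. It assumes the simplest possible placement rule, a hash of the key modulo the node count; `owner` and `moved_fraction` are hypothetical helpers written for this illustration, not any vendor’s API. Real systems often use consistent hashing or placement maps to move far less data, so treat this as the worst case rather than the norm.

```python
import hashlib

def owner(key: str, num_nodes: int) -> int:
    """Node that owns `key` under naive hash-modulo placement (an assumption)."""
    digest = hashlib.sha256(key.encode()).digest()
    return int.from_bytes(digest[:8], "big") % num_nodes

def moved_fraction(keys, old_nodes: int, new_nodes: int) -> float:
    """Fraction of keys whose owner changes when the node count changes."""
    moved = sum(1 for k in keys if owner(k, old_nodes) != owner(k, new_nodes))
    return moved / len(keys)

# Hypothetical workload: 1,000 object names, cluster grows from 4 to 5 nodes.
keys = [f"object-{i}" for i in range(1000)]
print(f"keys that change owner: {moved_fraction(keys, 4, 5):.0%}")
```

With a uniform hash, about four in five keys would be expected to change owner in this 4-to-5 expansion. Every one of those moves is data that has to be migrated and metadata that has to be updated, which is the coordination cost the paragraph above describes.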

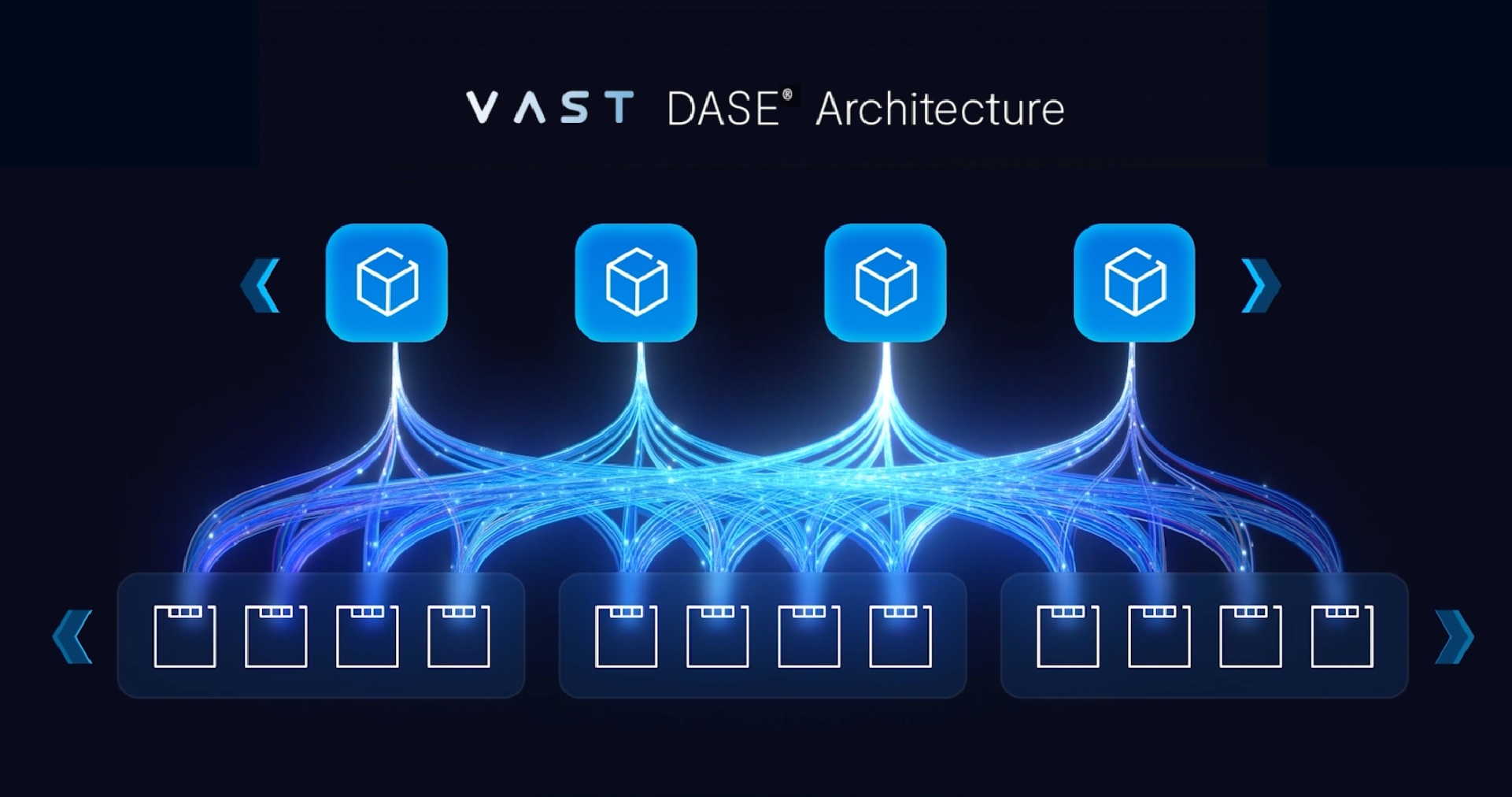

This is where VAST’s Disaggregated Shared-Everything (DASE) architecture departs from the pack. Disaggregation is only the first step. The second, and more difficult, step is eliminating the concept of partitioned ownership altogether.

In the VAST DataStore, compute nodes (CNodes) are stateless, and storage nodes (DNodes) hold the data. But critically, all data and metadata are globally accessible across the system. There are no shards to rebalance, no volumes to migrate, no locality constraints to manage.

Howard is clear about the difference. While competitors may call their system “shared” because multiple controllers can access the same disk enclosure, he counters: “Sure… but it’s actually a lot more than that.”

True shared-everything means sharing not only the storage medium and the data, but the metadata as well: the structures that give the data its meaning and define how it’s used across the entire system.

The result is a system that behaves less like a distributed cluster and more like a single, parallel data structure.

Why Global Visibility Changes Everything

This architectural choice unlocks capabilities that are difficult or impossible to achieve in partitioned systems.

Data reduction is a clear example. Compression is straightforward, but global deduplication and VAST’s proprietary similarity reduction require visibility across the entire dataset. Without shared metadata, those techniques degrade into localized optimizations with diminishing returns at scale. With it, they become system-wide operations.
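The gap between local and global deduplication can be sketched in a few lines. This toy example assumes fixed-size blocks identified by a SHA-256 hash and a partitioned system that places blocks round-robin by arrival order; both are assumptions made for illustration, and the function names are hypothetical. It does not model VAST’s similarity reduction, which the talk does not describe in detail.

```python
import hashlib

def unique_blocks(blocks):
    """Set of content hashes for an iterable of byte blocks."""
    return {hashlib.sha256(b).hexdigest() for b in blocks}

def stored_blocks_partitioned(blocks, num_shards):
    """Blocks kept when each shard deduplicates only the blocks it owns.

    Placement is round-robin by arrival order (an assumption), so identical
    blocks can land on different shards and be stored more than once.
    """
    shards = [[] for _ in range(num_shards)]
    for i, block in enumerate(blocks):
        shards[i % num_shards].append(block)
    return sum(len(unique_blocks(shard)) for shard in shards)

def stored_blocks_global(blocks):
    """Blocks kept when a single index covers the whole dataset."""
    return len(unique_blocks(blocks))

# 12 logical blocks, but only 3 distinct payloads.
blocks = [b"A", b"B", b"C"] * 4
print(stored_blocks_partitioned(blocks, 4))  # 12: every shard keeps its own A, B, C
print(stored_blocks_global(blocks))          # 3
```

Partitioned systems that place blocks by content hash avoid this particular failure, at the cost of routing every write through the owning shard. The point of the sketch is only that the achievable reduction depends on how much of the dataset the deduplication index can see.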

The same applies to data protection. Because every controller can reach every data block, VAST can build extremely wide erasure coding stripes, which allow for high durability with minimal overhead. “The only reason we can do that,” Howard explains, “is because every one of our storage controllers can access every single SSD and every block of data.”
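The arithmetic behind “wide stripes, minimal overhead” is simple enough to check directly. The sketch below computes the share of raw capacity spent on parity for a stripe of data strips plus parity strips. The stripe widths are illustrative values chosen for this example, not figures from the talk, and `parity_overhead` is a hypothetical helper.

```python
def parity_overhead(data_strips: int, parity_strips: int) -> float:
    """Fraction of raw capacity consumed by parity in a data+parity stripe."""
    return parity_strips / (data_strips + parity_strips)

# Illustrative widths only: a narrow 8+2 stripe versus a wide 100+4 stripe.
for data, parity in [(8, 2), (100, 4)]:
    print(f"{data}+{parity}: {parity_overhead(data, parity):.1%} parity, "
          f"tolerates {parity} lost strips")
```

In this example the wider stripe tolerates more simultaneous failures while spending a smaller share of capacity on parity. The catch is that a stripe this wide has to span many devices, which is only practical when the controller writing it can reach all of them.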

Performance follows a similar pattern. When any node can service any request without coordination, throughput can scale close to linearly as resources are added. In one example, the system demonstrated over 11 TB/s of aggregate throughput, a figure that is less interesting in isolation than for what it implies: the absence of the bottlenecks that partitioning typically introduces.

This is the deeper implication of shared-everything. It is not just about scale; it is about removing the mechanisms that limit scale.

Collapsing the Protocol Boundary

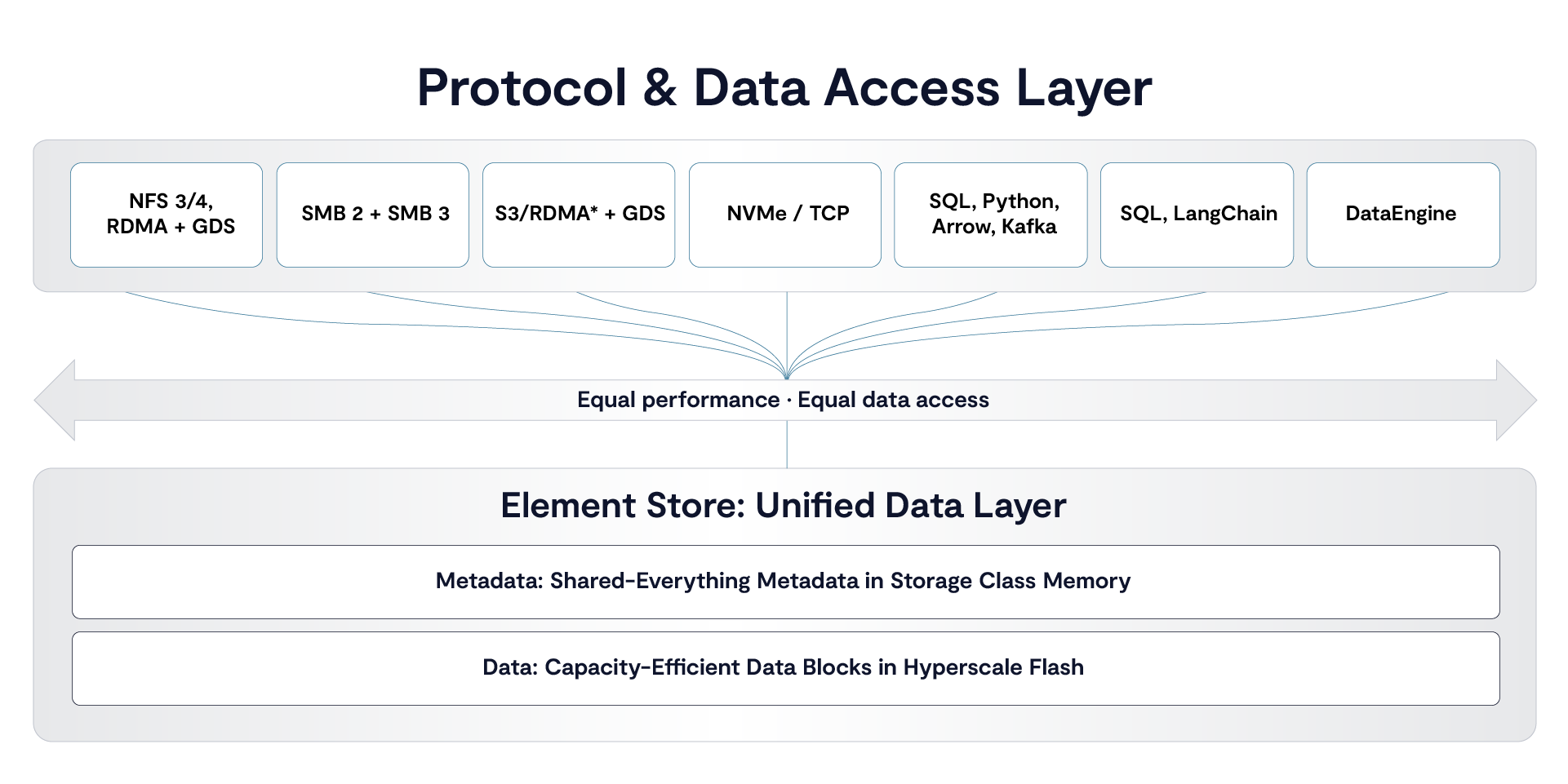

If shared data eliminates boundaries within the system, VAST’s internal data model eliminates boundaries at the interface.

Traditional storage architectures force a choice: file, object, or block. Each protocol implies a different storage backend, with its own semantics and operational model. Moving data between them requires copying, reformatting, or introducing gateways.

The VAST DataStore sidesteps this entirely through what it calls the “element” model. Internally, data is not stored as files or objects, but as a unified structure that can be presented through any protocol.

“You can write a file as an object, you can read it as SMB, you can read it over NFS,” Howard explains. “An element is not an NFS file or an S3 object. It’s a superset of all of them.”

This is not multi-protocol in the conventional sense. It’s a decoupling of storage from access semantics.

The practical effect is immediate. In one demonstration, a photo captured on a mobile device is uploaded via an S3-compatible interface, processed via NFS, and accessed via SMB without any data movement or translation layers. Each stage uses the protocol best suited to it, while operating on the same underlying data.
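One way to picture the element idea is as a single namespace with several thin access views on top of it. The toy class below is a sketch under that assumption: `ElementStore` and its methods are hypothetical names invented for this illustration, the object view stands in for S3, and the file view stands in for NFS or SMB. It says nothing about how VAST actually implements elements internally.

```python
class ElementStore:
    """Toy single namespace: one copy of each element, several access views."""

    def __init__(self):
        self._elements = {}  # path -> bytes; the only copy of the data

    # Object-style view (stand-in for S3): bucket + key.
    def put_object(self, bucket: str, key: str, body: bytes) -> None:
        self._elements[f"/{bucket}/{key}"] = body

    def get_object(self, bucket: str, key: str) -> bytes:
        return self._elements[f"/{bucket}/{key}"]

    # File-style view (stand-in for NFS or SMB): a path.
    def read_file(self, path: str) -> bytes:
        return self._elements[path]

    def write_file(self, path: str, data: bytes) -> None:
        self._elements[path] = data

store = ElementStore()
store.put_object("photos", "cat.jpg", b"raw pixels")         # upload as an object
raw = store.read_file("/photos/cat.jpg")                      # read as a file
store.write_file("/photos/cat.jpg", raw.upper())              # process in place
print(store.get_object("photos", "cat.jpg"))                  # b'RAW PIXELS'
```

Each view is just a different way of naming the same entry, so nothing is copied or converted between the upload, the processing step, and the final read. A real system also has to reconcile permissions, locking, and metadata semantics across protocols, which this sketch leaves out entirely.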

For AI pipelines, where data flows between ingestion services, preprocessing steps, training environments, and inference systems, this removes an entire class of complexity. There is no need to stage data between systems or maintain multiple copies optimized for different tools.

The pipeline collapses into a shared substrate.

From Storage System to Execution Surface

In the demonstration above, the workflow is orchestrated externally - a script polling for new data and triggering processing. But the underlying system already supports a more advanced model, where events within the data layer trigger computation directly.

Howard alludes to this when contrasting the manual approach with what could be done using the platform’s data engine: instead of polling, “the whole process [could] trigger automatically.”

This is a subtle but important transition. When data arrival, transformation, and access all occur within the same system, orchestration becomes an intrinsic property of the platform rather than an external concern.
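The difference between the two orchestration styles can be sketched with a toy data layer. Everything here is hypothetical: `DataLayer`, `on_write`, and `poll_once` are names made up for this illustration and do not correspond to the VAST data engine’s interface, which the talk only alludes to.

```python
class DataLayer:
    """Toy data layer that can notify registered handlers on every write."""

    def __init__(self):
        self._objects = {}
        self._handlers = []

    def on_write(self, handler) -> None:
        """Register a callback invoked as handler(path, data) after each write."""
        self._handlers.append(handler)

    def write(self, path: str, data: bytes) -> None:
        self._objects[path] = data
        for handler in self._handlers:
            handler(path, data)

    def list_paths(self):
        return sorted(self._objects)

def poll_once(layer: DataLayer, seen: set, process) -> None:
    """External orchestration: scan the namespace for paths not seen before."""
    for path in layer.list_paths():
        if path not in seen:
            seen.add(path)
            process(path)

layer = DataLayer()

# Event-driven: processing runs as part of the write itself.
layer.on_write(lambda path, data: print(f"event: process {path}"))
layer.write("/incoming/photo-1.jpg", b"...")

# Polling: a separate script has to keep state and rescan on a timer.
seen = set()
poll_once(layer, seen, lambda path: print(f"poll: process {path}"))
```

The polling version needs its own bookkeeping (`seen`) and only notices new data on its next scan, while the event-driven version reacts at the moment of the write. That is the sense in which orchestration moves from an external script into the platform.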

For AI workloads, this aligns with a broader shift toward continuous systems. Training, inference, and data processing are no longer discrete stages. They operate concurrently, driven by streams of new data and feedback loops that refine models in real time.

In that context, moving data between systems is not just inefficient; it’s fundamentally misaligned with how the workload behaves.

Why This Matters Now

The significance of these ideas extends beyond storage.

As enterprises scale AI deployments, the bottlenecks are shifting away from compute and toward data coordination. Systems built from loosely integrated components - object stores, file systems, databases, streaming platforms - struggle to maintain consistency and performance under continuous load.

The industry response has often been incremental: faster interconnects, better caching, more sophisticated orchestration. But these approaches treat the symptoms rather than the cause.

VAST’s approach is more structural. By eliminating boundaries between nodes, between protocols, and increasingly between storage and execution, it removes the need for coordination in the first place.

That is why VAST’s founders regularly describe the company as an architectural outlier, one that challenges assumptions that have defined data infrastructure for decades.

The Road Ahead

The question is no longer whether these ideas are viable (VAST’s valuation reached $30 billion this month, a sign of market confidence if not of technical proof) but whether they represent a new default.

If shared-everything architectures can deliver consistent performance without the operational complexity of partitioned systems, and if unified data models can replace protocol-specific silos, the implications are significant. Entire categories of infrastructure, including data movement tools, integration layers, and even aspects of orchestration, could become redundant.

At the same time, the model raises new challenges. Global visibility requires rigorous control. Unified systems must accommodate diverse workloads without reintroducing fragmentation. And organizations must adapt to a paradigm where infrastructure is no longer assembled from discrete components, but delivered as an integrated whole.

What is clear is that the center of gravity has shifted. The defining question is no longer how to scale individual parts of the system, but how to design a system where those parts no longer need to be separate.

In that sense, the most important innovation in AI infrastructure may not be a new capability, but the removal of boundaries that once made those capabilities necessary.