Vector database benchmarks are often presented as headline numbers: queries per second, latency, recall. What those numbers rarely explain is the architectural model that produced them.

In our evaluation, we compared VAST’s native vector index to Milvus 2.6 using its disk-based AISAQ backend at the 1-billion-vector scale (128-dimensional embeddings). Under comparable multi-process query workloads, Milvus delivered approximately 89 queries per second, while VAST delivered approximately 1,000 queries per second, an improvement of more than 11×. At 50 billion vectors, this translated into approximately 91% lower cost per 1,000 searches.

We also evaluated the VAST DataBase at 50 billion vectors on 8 CNodes, sustaining stable throughput under concurrency. The Milvus evaluation was conducted at 1B and 5B scales on the same hardware configuration and was not extended to 50B due to scaling limits encountered on the 8-node test cluster.

This post explains why those differences emerge.

The answer is architectural.

The Structural Limits of Sharded ANN Systems

Most vector databases evolved from two assumptions:

The index should be memory-resident.

Scale is achieved by sharding.

At moderate scale, this model works. Graph-based ANN structures such as HNSW perform well when the full index fits comfortably in DRAM. However, as vector collections grow into the billions, maintaining memory residency becomes prohibitively expensive. When indexes spill to disk, performance drops sharply because the graph traversal pattern becomes I/O-bound.

Sharding attempts to solve this by partitioning the vector space across multiple nodes. But sharding introduces secondary effects:

Query fan-out across shards

Shard routing and coordination overhead

Rebalancing when datasets grow

Operational complexity under continuous ingest

Metadata detachment from the vector system

The 50B benchmark exposed those limits.

VAST’s Hierarchical Clustering Architecture

VAST does not use shard-based scaling or memory-resident ANN graphs. Instead, it uses a hierarchical clustering architecture embedded directly inside the VAST DataBase.

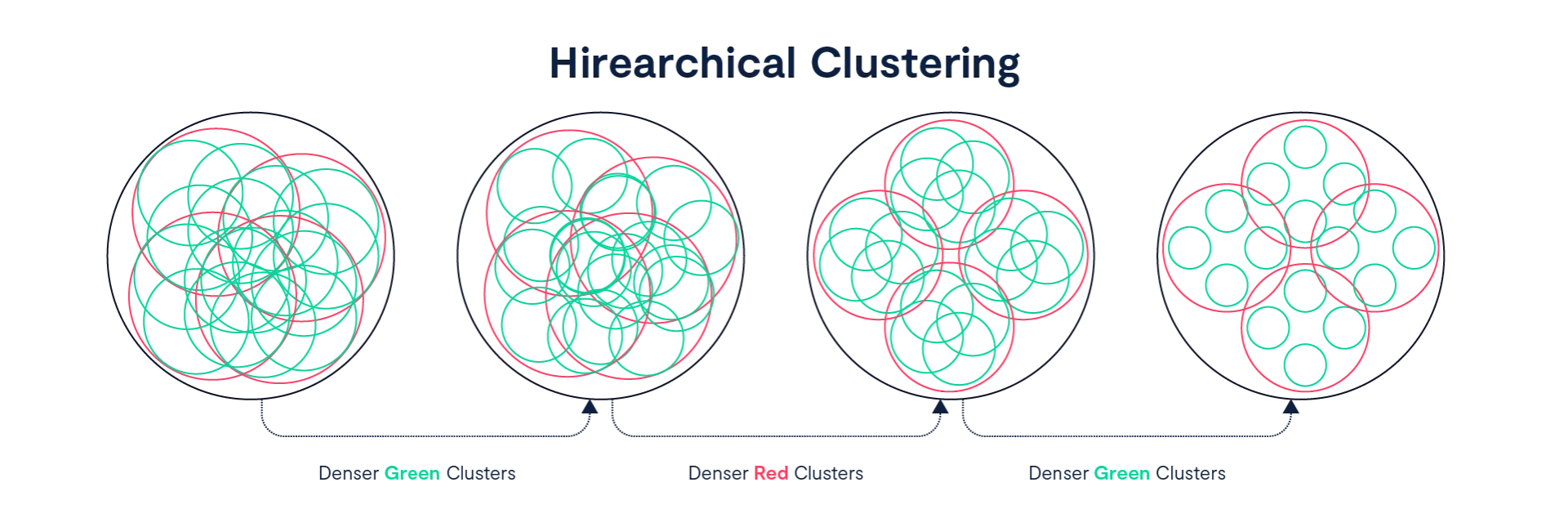

The vector index stores quantized vectors organized into a recursive, multi-level hierarchy of clusters. At the lowest level (Level 0), individual vectors are grouped into Level 1 clusters of up to 1,000 vectors. Each Level 1 cluster is represented by a centroid vector summarizing its region of the vector space.

Every 1,000 Level 1 centroids are then grouped into a Level 2 cluster, and a new centroid is computed to represent that aggregate region. This process repeats recursively: 1,000 Level 2 centroids form a Level 3 cluster, and so on.

Level 0: Individual vectors

Level 1: Up to 1,000 vectors per cluster

Level 2: Up to 1,000 Level 1 centroids (≈1 million vectors)

Level 3: Up to 1,000 Level 2 centroids (≈1 billion vectors)

Level 4: Up to 1,000 Level 3 centroids (≈1 trillion vectors)

Level 5: Up to 1,000 Level 4 centroids (≈1 quadrillion vectors)

Each level increases namespace capacity by a factor of 1,000, so the number of levels grows logarithmically with corpus size rather than linearly with shard count.
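The capacity arithmetic above can be checked with a short sketch. It assumes every cluster is filled to the 1,000-entry maximum, so the figures are upper bounds; `FANOUT`, `capacity_at_level`, and `levels_needed` are illustrative names, not part of any VAST API.

```python
FANOUT = 1_000  # maximum children per cluster, as described above


def capacity_at_level(level: int, fanout: int = FANOUT) -> int:
    """Upper bound on vectors covered by a single cluster at `level`."""
    return fanout ** level


def levels_needed(num_vectors: int, fanout: int = FANOUT) -> int:
    """Smallest level whose single-cluster capacity covers `num_vectors`."""
    level = 1
    while capacity_at_level(level, fanout) < num_vectors:
        level += 1
    return level


for n in (1_000_000, 1_000_000_000, 50_000_000_000, 1_000_000_000_000):
    print(f"{n:>17,} vectors -> {levels_needed(n)} levels")
```

Under these assumptions, going from 1 billion to 50 billion vectors adds a single level to the hierarchy, and that same level has headroom up to 1 trillion.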

Because upper levels consist only of centroids rather than raw vectors, the top of the hierarchy remains compact. Clustering is performed using an optimized K-means approach (K=1000 on representative samples), with balanced constraints to avoid skewed partitions. Reclustering occurs in the background and operates locally within each level of the hierarchy. At lower levels, quantized vectors are redistributed into new subclusters. At higher levels, centroid groups are reorganized into new parent clusters. This localized restructuring avoids global index rebuilds and eliminates shard migration events. This recursive centroid hierarchy allows the index to scale without requiring the full vector corpus to reside in memory.

Search as Progressive Narrowing

Search in VAST follows a deterministic narrowing pattern through the hierarchy.

A query begins at the top-level cluster and recursively selects the closest centroids at each level using quantized vector comparisons. Only those candidate subclusters are explored further. At the lowest level, quantized vectors within the selected clusters are compared, and final reranking produces the top-K results.
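The narrowing pattern can be sketched in a few lines of Python. This is a simplified illustration rather than VAST's implementation: it uses full-precision squared distances instead of quantized comparisons, skips the final reranking step, and assumes every leaf sits at the same depth. The `Cluster` type and the `probes` parameter (how many clusters to keep per level) are hypothetical.

```python
import heapq
from dataclasses import dataclass, field

Vector = tuple[float, ...]


@dataclass
class Cluster:
    centroid: Vector
    children: list["Cluster"] = field(default_factory=list)  # upper levels
    vectors: list[tuple[int, Vector]] = field(default_factory=list)  # Level 1 only


def sq_dist(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def search(root: Cluster, query: Vector, top_k: int, probes: int) -> list[int]:
    """Descend the hierarchy, keeping the `probes` closest clusters per level."""
    frontier = [root]
    # Narrow level by level until the frontier holds only leaf clusters.
    while any(c.children for c in frontier):
        candidates = [child for c in frontier for child in c.children]
        frontier = heapq.nsmallest(
            probes, candidates, key=lambda c: sq_dist(c.centroid, query)
        )
    # Compare the query only against vectors inside the selected leaf clusters.
    hits = [(sq_dist(v, query), vid) for c in frontier for vid, v in c.vectors]
    return [vid for _, vid in heapq.nsmallest(top_k, hits)]


leaf_a = Cluster((0.0, 0.0), vectors=[(1, (0.1, 0.0)), (2, (0.0, 0.3))])
leaf_b = Cluster((5.0, 5.0), vectors=[(3, (5.1, 4.9)), (4, (4.8, 5.2))])
leaf_c = Cluster((9.0, 0.0), vectors=[(5, (9.0, 0.1))])
root = Cluster(
    (4.0, 2.0),
    children=[
        Cluster((2.5, 2.5), children=[leaf_a, leaf_b]),
        Cluster((9.0, 0.0), children=[leaf_c]),
    ],
)

print(search(root, query=(5.0, 5.0), top_k=2, probes=1))  # prints [3, 4]
```

The work per query depends on the number of levels and on `probes`, not on the total number of leaf clusters, which is the property the section relies on. Raising `probes` trades more comparisons for higher recall.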

This process has several properties:

It avoids full graph traversal across a distributed shard topology.

It avoids global fan-out across partitions.

It limits memory usage to targeted portions of the index.

It maintains predictable behavior as the dataset exceeds DRAM capacity.

The key difference is that search cost scales with tree depth and cluster selection, not shard count. In this benchmark, VAST delivered its throughput at 99% recall.

At 50 billion vectors, that difference becomes measurable.

Continuous Ingest and Background Clustering

Real-world AI systems do not operate on static datasets. VAST’s index is designed for continuous ingest.

Vectors are initially inserted into a landing table in non-clustered form. Background clustering organizes them into Level 2 clusters (~1M vectors per cluster), and further reclustering refines density and balance as the corpus grows.

In our large-scale testing:

Sustained ingest reached approximately 1 million vectors per second via Parquet import.

Background clustering sustained approximately 290,000 vectors per second.

Query performance remained stable during clustering operations.

There is no index freeze during reclustering. There is no shard rebalance event. The index evolves incrementally.
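As a back-of-envelope illustration of what the reported rates imply, the sketch below assumes both rates are constant and run concurrently, which the test results do not state. The function names are illustrative.

```python
INGEST_RATE = 1_000_000   # vectors/second via Parquet import (reported above)
CLUSTER_RATE = 290_000    # vectors/second of background clustering (reported above)


def hours_to_process(num_vectors: int, rate_per_sec: int) -> float:
    """Hours needed to push `num_vectors` through a stage at a constant rate."""
    return num_vectors / rate_per_sec / 3600


def backlog_after(seconds: float) -> int:
    """Unclustered vectors accumulated after ingesting at full rate for `seconds`."""
    return int(max(0, INGEST_RATE - CLUSTER_RATE) * seconds)


corpus = 50_000_000_000
print(f"ingest:     {hours_to_process(corpus, INGEST_RATE):.1f} h")
print(f"clustering: {hours_to_process(corpus, CLUSTER_RATE):.1f} h")
print(f"backlog after 60 s of peak ingest: {backlog_after(60):,} vectors")
```

Under these assumptions clustering trails ingest during a bulk load, which is why it matters that queries remain stable while clustering is still catching up.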

This matters because many systems show strong search performance only when ingest is idle. Mixed workloads reveal architectural weaknesses.

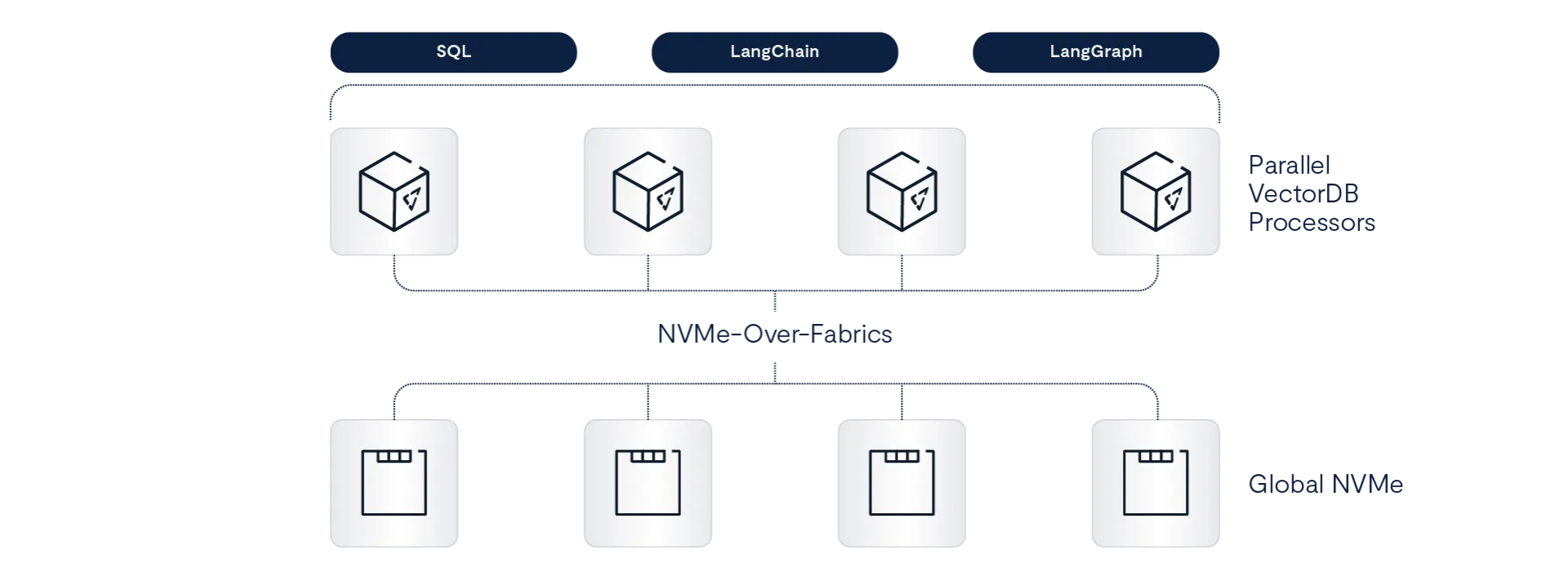

Hybrid Retrieval Without Architectural Friction

Because the vector index is embedded in VAST DataBase, vectors live alongside structured and semi-structured data.

Vector-indexed tables support secondary indexes with up to four user-defined sort keys. Each vector maintains an up-to-date pointer into its location within the vector hierarchy. As reclustering moves vectors, these pointers are updated automatically using the database’s sorted table capabilities.

This enables:

Efficient point lookups

Fast updates and deletes

Hybrid queries combining vector similarity with SQL predicates

Joins against structured metadata

There is no external metadata synchronization layer and no separate vector governance model. Access controls remain atomic with the underlying data.
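To make the idea of a hybrid query concrete, here is a minimal sketch that uses Python's built-in `sqlite3` module as a stand-in. It is not VAST's SQL dialect or API: the `docs` table, the `tenant` column, and the `l2_distance` function are hypothetical, and the query scans every row that passes the predicate rather than using a vector index. It only shows a metadata predicate and a similarity ranking expressed in one statement against one table.

```python
import json
import sqlite3


def l2_distance(a_json: str, b_json: str) -> float:
    """Euclidean distance between two JSON-encoded vectors."""
    a, b = json.loads(a_json), json.loads(b_json)
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


conn = sqlite3.connect(":memory:")
conn.create_function("l2_distance", 2, l2_distance)  # expose the function to SQL
conn.execute("CREATE TABLE docs (id INTEGER PRIMARY KEY, tenant TEXT, embedding TEXT)")
conn.executemany(
    "INSERT INTO docs VALUES (?, ?, ?)",
    [
        (1, "acme", json.dumps([0.0, 0.1])),
        (2, "acme", json.dumps([0.9, 0.8])),
        (3, "globex", json.dumps([0.0, 0.0])),
        (4, "acme", json.dumps([0.2, 0.1])),
    ],
)


def hybrid_search(query: list[float], tenant: str, k: int) -> list[int]:
    """Filter by a SQL predicate and rank by vector distance in one statement."""
    rows = conn.execute(
        "SELECT id FROM docs WHERE tenant = ? "
        "ORDER BY l2_distance(embedding, ?) LIMIT ?",
        (tenant, json.dumps(query), k),
    ).fetchall()
    return [row[0] for row in rows]


print(hybrid_search([0.0, 0.0], "acme", 2))  # prints [1, 4]
```

Row 3 is the closest vector overall but is excluded by the predicate. Because filter and ranking run against the same table, there is no second system to keep in sync.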

This integration eliminates an entire category of operational overhead common in detached vector systems.

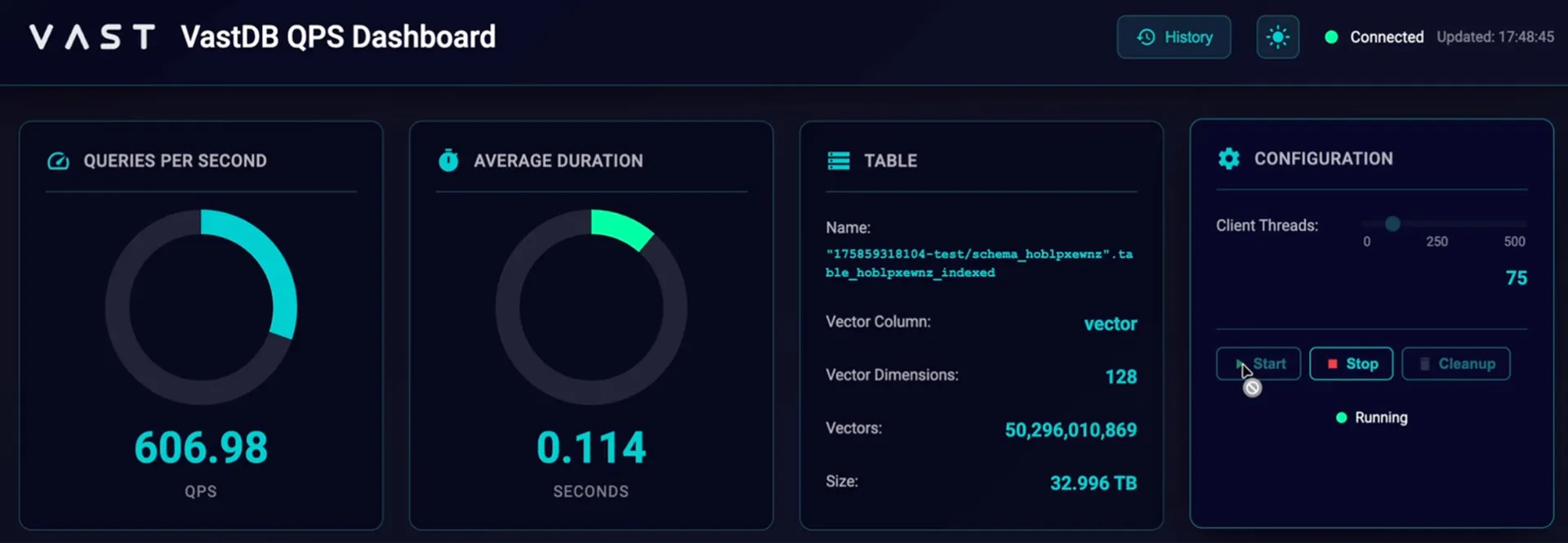

Measured Query Throughput at Scale

Beyond the 1B benchmark comparison, we also evaluated large-scale performance on a 50-billion-row table (128-dimensional vectors).

Under concurrency:

1,026 QPS at ~317 ms average latency (375 client threads)

606 QPS at ~114 ms average latency (75 client threads)

These results were achieved without shard routing layers and without requiring the entire namespace to be memory-resident.
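One way to sanity-check throughput and latency figures like these is Little's law, which relates average concurrency to throughput multiplied by average latency. The sketch below applies it to the two reported runs; the function name is illustrative, and the interpretation assumes a closed-loop client in which each thread issues one query at a time.

```python
def implied_in_flight(qps: float, avg_latency_ms: float) -> float:
    """Little's law: average requests in flight = throughput x average latency."""
    return qps * avg_latency_ms / 1000.0


runs = [
    {"threads": 375, "qps": 1026, "latency_ms": 317},
    {"threads": 75, "qps": 606, "latency_ms": 114},
]

for run in runs:
    in_flight = implied_in_flight(run["qps"], run["latency_ms"])
    print(f'{run["threads"]} threads: ~{in_flight:.0f} queries in flight')
```

The implied concurrency (roughly 325 and 69) is slightly below the client thread counts in both runs, which is what one would expect if threads spend a small share of their time outside the measured query path.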

The 1B Milvus comparison, showing ~89 QPS versus VAST’s ~1,000 QPS, reflects this structural difference. The performance delta is not a tuning artifact. It arises from the elimination of shard fan-out and disk-bound ANN traversal.

Scaling Envelope Observations

In this evaluation, Milvus was benchmarked at 1 billion and 5 billion vectors using the AISAQ disk-based index on an 8-node query cluster. These configurations produced the QPS results described earlier.

VAST was evaluated at 50 billion vectors on 8 CNodes, sustaining stable query throughput under concurrent workloads.

We did not extend the Milvus evaluation to 50 billion vectors in the same configuration. As dataset size increased from 1B to 5B, Milvus throughput decreased significantly under identical hardware conditions, and indexing and operational complexity increased correspondingly. Extrapolating from the observed scaling behavior between 1B and 5B, extending the same Milvus configuration to 50B would likely require a proportional increase in cluster resources. Based on index size and throughput trends, this would imply on the order of a 10× increase in query node count, from 8 nodes to roughly 80, to maintain similar performance envelopes.
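The node-count estimate follows from simple proportional scaling. The sketch below assumes node count grows linearly with corpus size from the 5B baseline on 8 nodes; that baseline is one reading of the extrapolation described above, not a measured result, and the function name is illustrative.

```python
import math


def nodes_for_corpus(baseline_nodes: int, baseline_vectors: int, target_vectors: int) -> int:
    """Nodes needed if node count scales proportionally with corpus size."""
    return math.ceil(baseline_nodes * target_vectors / baseline_vectors)


print(nodes_for_corpus(8, 5_000_000_000, 50_000_000_000))  # prints 80
```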

This highlights an important distinction: beyond per-query performance, the practical scaling envelope of an index architecture determines how far a system can grow before requiring structural changes.

The VAST hierarchical clustering model increases namespace capacity by expanding cluster depth and count while keeping per-query traversal bounded. In contrast, shard-based graph systems must increase memory footprint, coordination overhead, and disk traversal cost as the corpus expands.

At moderate scale, these differences may not dominate. At tens of billions of vectors, they shape what is operationally achievable on a fixed cluster size.

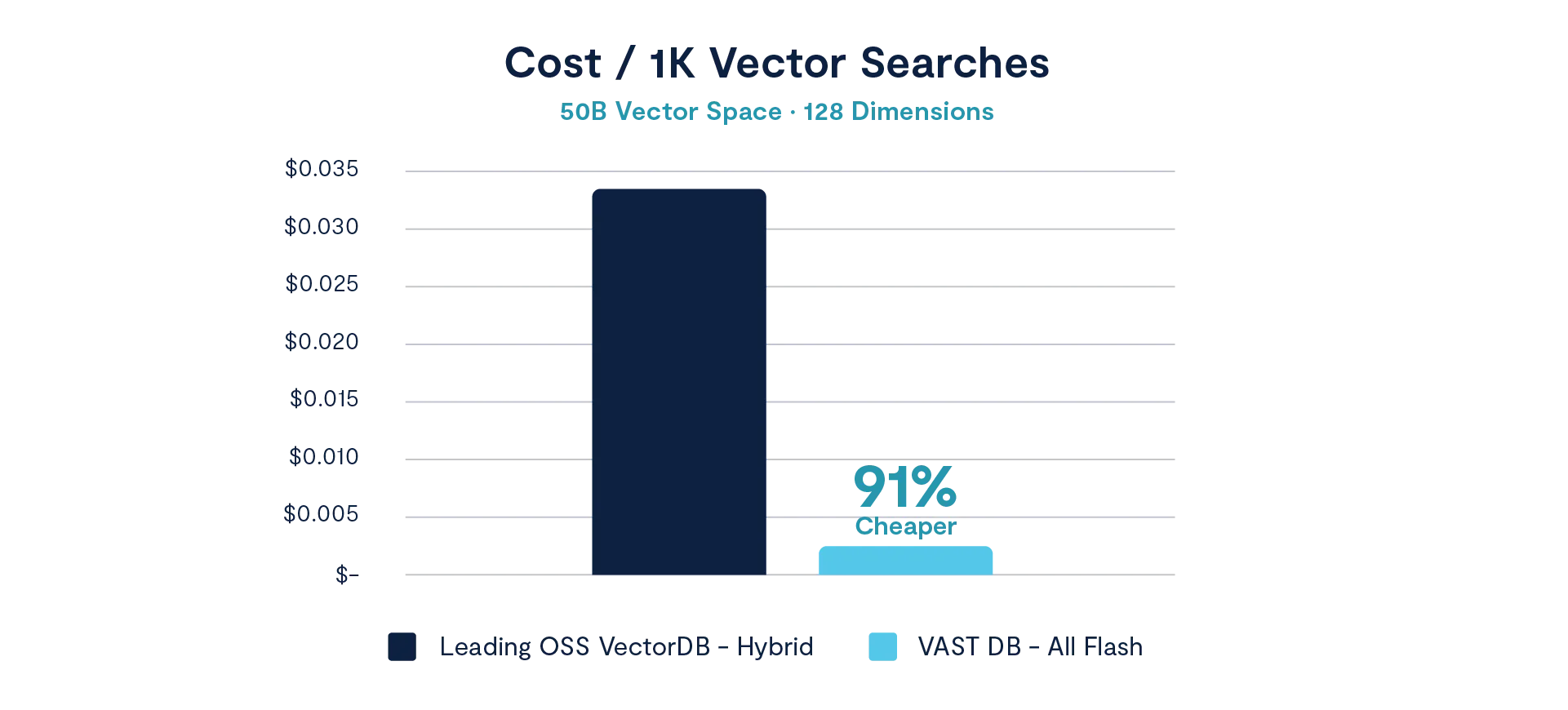

Price-Performance at 50 Billion Scale

Raw throughput is only part of the picture. Infrastructure efficiency determines the practical viability of large vector deployments.

At a 50-billion-vector namespace (128 dimensions), we evaluated cost per 1,000 vector searches under sustained concurrency. The leading OSS hybrid vector system required approximately $0.033 per 1,000 searches. Under the same scale conditions, VAST delivered approximately $0.003 per 1,000 searches, a 91% reduction in cost per query.
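The headline ratios can be reproduced from the figures quoted in this post. The sketch below uses only those published numbers; the function names are illustrative.

```python
def percent_reduction(baseline: float, improved: float) -> float:
    """Relative reduction from `baseline` to `improved`, in percent."""
    return (baseline - improved) / baseline * 100


def speedup(baseline_qps: float, improved_qps: float) -> float:
    """Throughput ratio of the improved system over the baseline."""
    return improved_qps / baseline_qps


cost_cut = percent_reduction(0.033, 0.003)  # USD per 1,000 searches at 50B
qps_gain = speedup(89, 1000)                # QPS at 1B vectors

print(f"cost reduction: {cost_cut:.1f}%")
print(f"throughput gain: {qps_gain:.1f}x")
print(f"cost per 1M searches: ${0.033 * 1000:.2f} vs ${0.003 * 1000:.2f}")
```

At one million searches, the quoted per-1,000 prices work out to roughly $33 versus $3.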

This delta reflects architectural differences rather than hardware tuning. In shard-based systems, scaling corpus size increases memory pressure, coordination overhead, and disk traversal costs. As node count grows to sustain throughput, cost scales with it.

In the hierarchical clustering model, search remains bounded and localized. Memory footprint per query remains stable as namespace size grows. The result is not only higher throughput, but materially lower infrastructure cost per unit of work.

At tens of billions of vectors, performance and efficiency become inseparable metrics.

Why the 11× Gap Exists

At 50 billion vectors, architecture determines performance more than algorithm selection.

In sharded ANN systems:

Query execution must coordinate across partitions.

Disk access patterns become irregular under graph traversal.

Memory pressure increases non-linearly with corpus size.

In VAST’s hierarchical model:

Search depth is bounded.

Only targeted subclusters are loaded.

Memory usage is proportional to query scope.

Namespace growth does not increase shard coordination complexity.

The result is a widening performance gap as datasets scale.

From Billion-Scale to Trillion-Scale

The hierarchical index is explicitly designed for namespaces extending into trillions of vectors. The cluster tree depth accommodates exponential growth without rearchitecting the system or increasing shard count.

More importantly, performance behavior remains predictable as datasets exceed memory capacity. The index was built for memory-bounded execution rather than memory-resident operation.

That distinction is what enabled the 11× benchmark result.

Architecture Determines the Outcome

At billion-vector scale and beyond, the performance gap between VAST and Milvus reflects architectural design choices, not incremental optimization.

Vector systems built around sharded, memory-resident ANN graphs inherit the scaling properties of that design. As datasets grow, coordination and memory constraints become first-order performance factors.

VAST’s hierarchical clustering architecture avoids those constraints by treating vector search as a progressive narrowing problem inside a distributed database, not as a graph traversal problem across shards.

Enterprise RAG systems are still in early deployment stages, but their trajectory is clear. As organizations embed retrieval into customer support, compliance review, engineering workflows, and video intelligence pipelines, vector namespaces will grow from billions into the trillions. At that scale, architectural assumptions that seem acceptable today begin to surface as constraints. The systems that perform well in early pilots may struggle under sustained, enterprise-wide adoption.