When VAST Data cofounder Jeff Denworth took the stage at the VAST FWD conference recently, all eyes were on him. Few people combine technical depth and energy the way Denworth does, and his talk did not disappoint.

His vision, which followed closely on the heels of VAST Data CEO and founder Renen Hallak’s keynote about where the heart of AI systems will live, is that AI is becoming a continuous system…and continuous systems cannot be built on fragmented infrastructure.

Just as Hallak outlined, we’ve spent several years explaining AI via models. Bigger models, better models, faster GPUs to train them, and so forth. And while that narrative is clean and easy to repeat, it’s increasingly detached from where the real system is forming.

What is emerging now, according to both VAST leads, is not a better model stack but a different kind of system altogether. If AI is becoming continuous instead of episodic, then the systems beneath it have to reflect that continuity.

That distinction seems subtle until you trace what actually happens inside modern AI environments. In the model-centric view, intelligence is something that’s produced, evaluated, and deployed. It has clear phases, boundaries, and ownership. In the system Denworth describes, those boundaries dissolve: there is no clean separation between training and inference, and no moment where the system stops and hands off to something else.

As he told the crowd, intelligence is shaped continuously by the data it encounters, the actions it takes, and the feedback it generates, and the infrastructure beneath it has to sustain that loop without interruption. This is a dataflow system that can pull “inputs from all around the world and then use those inputs to improve the intelligence of a model.”

That loop might sound abstract until you map it onto the way systems are actually built today. Data arrives in streams, lands in object stores, is copied into databases, transformed into feature sets, indexed for search, and staged for inference. Each of those steps is optimized in isolation, and each layer introduces its own latency, its own consistency model, and its own failure modes. The pipeline works only because the workload is treated as discrete, where it is acceptable for minutes or hours to pass between ingestion and action.

The moment that workload becomes continuous, the pipeline becomes the bottleneck.

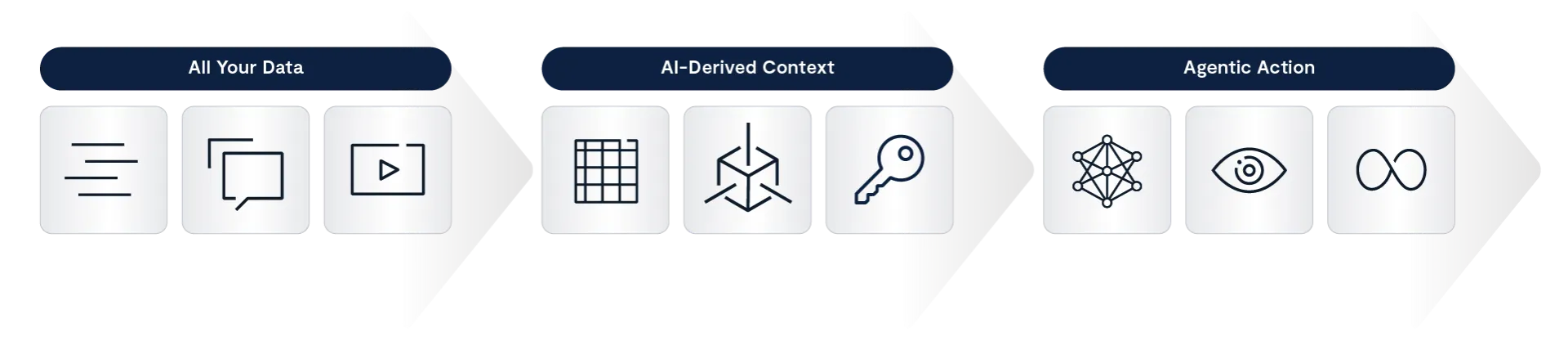

The first constraint he calls out is the data itself. Modern infrastructure is organized around types: unstructured data in file and object systems, structured data in databases, events in streaming platforms, vectors in specialized search engines. Each of these exists because of a historical tradeoff between performance, scale, and flexibility. That separation has been manageable only because applications were forced to adapt to it. Agents, unfortunately, don’t.

For example, think about an agent resolving a supply chain disruption. Do you think it cares whether its inputs are emails, inventory tables, sensor logs, or call transcripts? It doesn’t. It needs all of them, in context, at the same time. And as Denworth argues, that requirement immediately exposes the inefficiency of maintaining separate systems for each modality. Data has to be duplicated, synchronized, and translated across boundaries that were never designed for real-time interaction.

At small scale, this is pretty much just overhead. But at the scale of thousands of agents per employee, which Denworth explicitly references, it becomes systemic drag. “You can’t just solve one problem. You have to solve all of them,” Denworth adds.

The response, he says, isn’t to optimize the boundaries, but to remove them. This is where the architectural foundation he references, the disaggregated and shared-everything model, becomes critical.

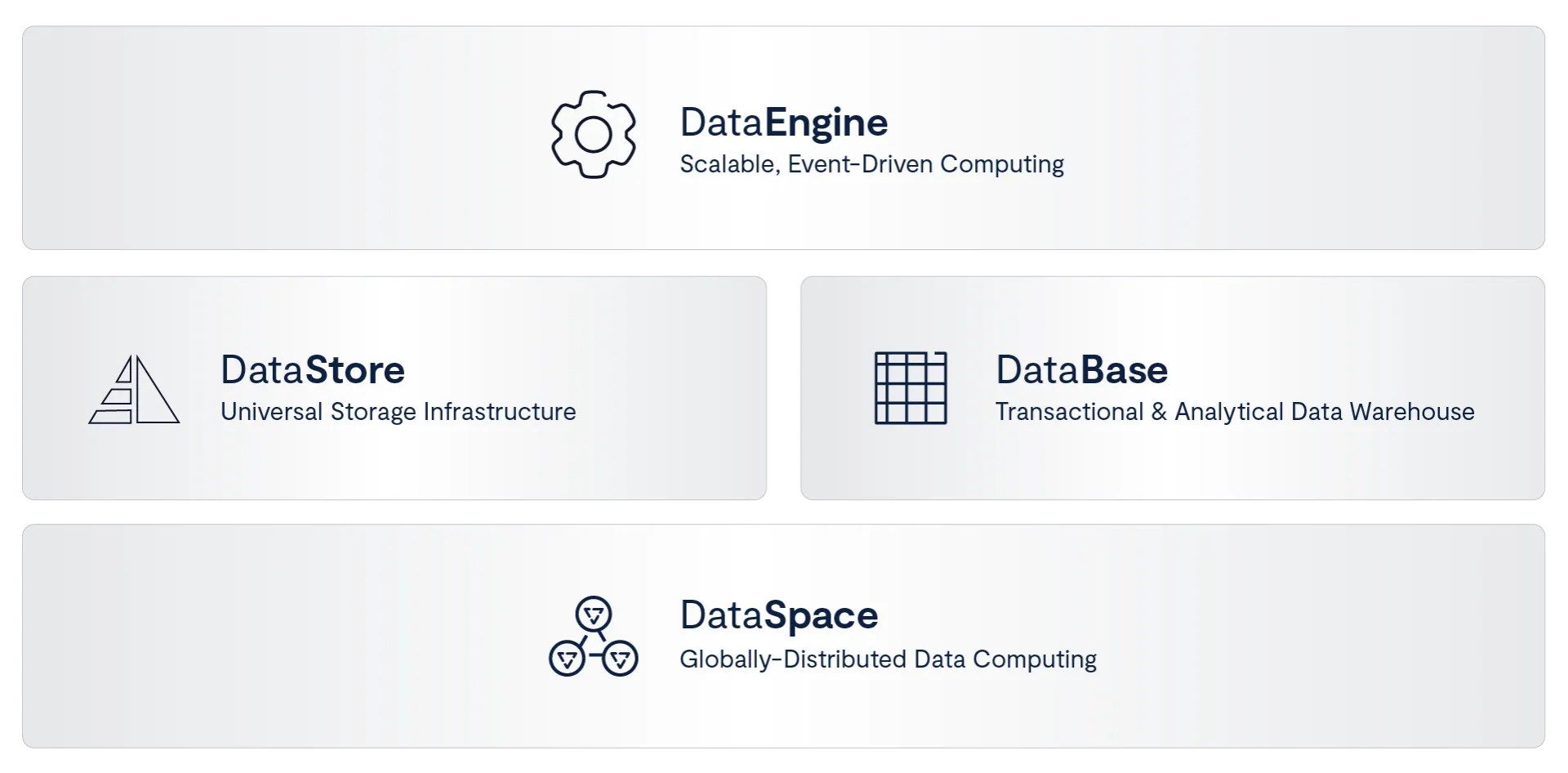

In this paradigm, data is no longer partitioned into systems with different capabilities. It exists within a single parallel architecture that can ingest streams, store objects, serve files, and maintain structured tables simultaneously. Event streams can be written directly into tables. Tables can be queried in real time without staging. Vector indices can be built alongside both structured and unstructured data. The distinction between systems collapses into a single data substrate.

All of this only works because of how it’s built. The system is designed for parallelism at every level, allowing data to be ingested and processed without intermediate layers. There aren’t gateways between ingestion and computation, and there are no separate systems for streaming and storage. When Denworth talks about delivering multiple times the performance of existing event brokers, or enabling queries directly against live data streams, what he’s describing is this very removal of boundaries. Data doesn’t have to be moved to where it can be processed. It is processed where it lives.
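To make “processed where it lives” concrete, here is a deliberately tiny Python sketch, assuming a single in-memory store. Stream events land directly as table rows, and queries read those same rows with no staging copy or second system in between. The `LiveTable` class and its `ingest` and `query` methods are hypothetical illustrations, not VAST’s API. A real system would add durability, indexing, and parallelism across many nodes.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class LiveTable:
    """Hypothetical sketch: one store that is both the stream sink and the queryable table."""

    columns: tuple[str, ...]
    rows: list[dict[str, Any]] = field(default_factory=list)

    def ingest(self, event: dict[str, Any]) -> None:
        # A stream write lands directly as a row; columns missing from the event become None.
        self.rows.append({c: event.get(c) for c in self.columns})

    def query(self, predicate: Callable[[dict[str, Any]], bool]) -> list[dict[str, Any]]:
        # Queries read the rows the stream just wrote: no ETL job, no copy into another system.
        return [r for r in self.rows if predicate(r)]


inventory = LiveTable(columns=("sku", "site", "qty"))
inventory.ingest({"sku": "A-100", "site": "dallas", "qty": 4})
inventory.ingest({"sku": "A-100", "site": "reno", "qty": 0})
low_stock = inventory.query(lambda r: r["qty"] < 5)
print([r["site"] for r in low_stock])  # ['dallas', 'reno']
```

The design choice being illustrated is the absence of a hop. The moment an event is ingested, it is already visible to queries.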

This leads directly to the second constraint, which is compute. In traditional architectures, compute is external. Applications request data, perform operations, and write results back. That model assumes that moving data is acceptable and that compute resources can be provisioned independently of storage. In a continuous system, both assumptions break.

Compute gets embedded within the data system itself. Functions are triggered by events as they occur. Pipelines are defined as part of the infrastructure rather than orchestrated across it. Denworth’s description of the data engine, with triggers, functions, and Kafka-compatible events operating directly on data as it arrives, is not just a feature set. It is a redefinition of where computation lives. The system stops waiting for applications to ask questions and starts reacting autonomously to changes in state.
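The trigger model can be sketched in a few lines of Python. Functions are registered against a topic, and every write to that topic is persisted and then immediately runs those functions inside the data layer, so no application has to poll for changes. The `TriggerEngine` class, its `on` decorator, and `write` are hypothetical names. The engine Denworth describes is Kafka-compatible, but this sketch deliberately uses plain in-process dispatch and implements no Kafka protocol.

```python
from collections import defaultdict
from typing import Any, Callable

Handler = Callable[[dict[str, Any]], None]


class TriggerEngine:
    """Hypothetical sketch: functions fire when data arrives, not when an app asks."""

    def __init__(self) -> None:
        self.log: dict[str, list[dict[str, Any]]] = defaultdict(list)
        self.triggers: dict[str, list[Handler]] = defaultdict(list)

    def on(self, topic: str) -> Callable[[Handler], Handler]:
        # Decorator that registers a function to run on every write to `topic`.
        def register(fn: Handler) -> Handler:
            self.triggers[topic].append(fn)
            return fn
        return register

    def write(self, topic: str, event: dict[str, Any]) -> None:
        # Persist first, then react to the change in state.
        self.log[topic].append(event)
        for fn in self.triggers[topic]:
            fn(event)


engine = TriggerEngine()
alerts: list[str] = []


@engine.on("sensor")
def flag_overheat(event: dict[str, Any]) -> None:
    if event["temp_c"] > 90:
        alerts.append(event["device"])


engine.write("sensor", {"device": "pump-7", "temp_c": 95})
engine.write("sensor", {"device": "pump-8", "temp_c": 40})
print(alerts)  # ['pump-7']
```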

That autonomy increases the pressure on performance in ways that aren’t obvious until inference reaches a certain scale. This takes us into one of the most technically dense parts of the keynote, and arguably the most important: a discussion of key-value caching, which reframes storage as memory for models rather than as a repository for data.

In distributed inference, context is everything. Every token generated depends on what came before it. Recomputing that context is expensive, both in latency and GPU cycles. “An AI model has a memory… if you can store it somewhere, you don’t have to go recreate that context,” he explains.

What Denworth describes here goes beyond caching: it’s persistent, distributed model memory integrated directly into the infrastructure. By embedding storage logic into the compute nodes themselves and leveraging parallel access across the system, each GPU can retrieve the context it needs instead of recomputing it. That’s a significant gain in both efficiency and latency.
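Key-value caching of this kind is commonly implemented as prefix reuse. If two requests share the same leading tokens, the attention keys and values for that prefix only need to be computed once. The Python sketch below is a toy version under stated assumptions. A dict stands in for the shared, persistent store Denworth describes, strings stand in for KV tensors, and `PrefixKVCache` with its methods is hypothetical.

```python
import hashlib


class PrefixKVCache:
    """Hypothetical sketch: KV state for a token prefix is stored once and reused."""

    def __init__(self) -> None:
        # Maps hash(prefix) -> per-token KV entries for that prefix (stand-in for shared storage).
        self.store: dict[str, list[str]] = {}
        self.tokens_computed = 0  # counts simulated GPU work

    @staticmethod
    def key(tokens: list[str]) -> str:
        return hashlib.sha256("\x00".join(tokens).encode()).hexdigest()

    def _compute_kv(self, token: str, position: int) -> str:
        # Stand-in for the attention key/value tensors a real model would produce.
        self.tokens_computed += 1
        return f"kv({token}@{position})"

    def get_kv(self, tokens: list[str]) -> list[str]:
        # Reuse the longest prefix already in the cache...
        cut = 0
        kv: list[str] = []
        for n in range(len(tokens), 0, -1):
            cached = self.store.get(self.key(tokens[:n]))
            if cached is not None:
                cut, kv = n, list(cached)
                break
        # ...and compute only the tokens that follow it, caching each new prefix.
        for i in range(cut, len(tokens)):
            kv.append(self._compute_kv(tokens[i], i))
            self.store[self.key(tokens[: i + 1])] = list(kv)
        return kv


cache = PrefixKVCache()
system_prompt = ["You", "are", "a", "supply-chain", "agent"]
cache.get_kv(system_prompt + ["Q1"])  # cold: computes all 6 tokens
cache.get_kv(system_prompt + ["Q2"])  # warm: reuses 5, computes 1
print(cache.tokens_computed)  # 7
```

Storing a full copy per prefix keeps the sketch short but is quadratic in memory. Production prefix caches typically store fixed-size blocks and chain them instead.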

This is where the architecture intersects directly with NVIDIA’s inference stack, where BlueField DPUs effectively become distributed storage servers, and where the cost and power implications of eliminating redundant compute become significant. Storage is no longer downstream of compute. It is on the critical path of inference.

The third constraint Denworth unpacked for the crowd is locality. Even if data and compute are unified within a system, the system itself is still distributed. Most enterprises operate across multiple datacenters, multiple clouds, and increasingly across edge environments.

Data is generated everywhere, intelligence is applied everywhere, and the loop does not respect the boundaries of a single deployment.

“The data space allows us to federate all of your environments into one unified namespace… it kind of defies data gravity,” Denworth says.

If you pull back for the bigger picture, this is really where the architecture extends beyond a single cluster into a global system. Data doesn’t get replicated blindly across environments but instead gets presented through a unified namespace that allows compute to be routed dynamically based on cost, performance, or policy. This is not just a convenience for the hybrid cloud set either. It’s a requirement for sustaining continuous workloads that span regions and environments. The system has to behave as if it is local, even when it is not.
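Routing compute “based on cost, performance, or policy” can be sketched as a filter followed by a score. The sketch assumes that the unified namespace makes the same data path resolvable at every site, so every site is a candidate. It further assumes a policy that restricts which regions may run the job, and a simple weighted sum that trades GPU price against latency to the data. The `Site` fields, prices, latencies, and the `route` function are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    name: str
    region: str
    gpu_cost_per_hour: float  # hypothetical pricing
    latency_ms: float         # hypothetical latency to the data's home location


def route(sites: list[Site], allowed_regions: set[str], latency_weight: float = 0.1) -> Site:
    """Pick where to run a job: policy filters first, then cost and latency are traded off."""
    # With a unified namespace, every site sees the same data path, so all are candidates.
    eligible = [s for s in sites if s.region in allowed_regions]
    if not eligible:
        raise ValueError("no site satisfies the placement policy")
    return min(eligible, key=lambda s: s.gpu_cost_per_hour + latency_weight * s.latency_ms)


sites = [
    Site("on-prem-dc", "us", gpu_cost_per_hour=2.0, latency_ms=1.0),
    Site("cloud-east", "us", gpu_cost_per_hour=1.2, latency_ms=20.0),
    Site("cloud-eu", "eu", gpu_cost_per_hour=0.9, latency_ms=90.0),
]
print(route(sites, {"us"}).name)                       # on-prem-dc
print(route(sites, {"us"}, latency_weight=0.01).name)  # cloud-east
```

Lowering `latency_weight` makes the cheaper but more distant cloud site win. This is the kind of dynamic placement decision the passage describes.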

At this point, the system begins to resemble something more than infrastructure. It is not just storing and processing data. It is coordinating activity across a distributed environment. Agents operate continuously within it, ingesting data, generating insights, triggering actions, and interacting with one another.

That shift introduces the fourth constraint, which is control. As systems become autonomous, the absence of control is not just a governance issue; it is a failure of the architecture itself.

“Nothing can happen within a system without being completely controlled,” says Denworth in his description of the PolicyEngine. He emphasizes that this isn’t an external compliance layer but an in-band system that mediates every single interaction. Agent-to-agent communication, access to tools, retrieval of data, and even the flow of information through pipelines are subject to policy enforcement. This includes not just binary allow-or-deny decisions but more nuanced controls such as redaction, transformation, and context-aware filtering.
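An in-band policy check with outcomes beyond allow and deny can be sketched in Python. Every interaction passes through `enforce`, which returns either the payload the caller may see (possibly redacted) or `None`, and records the decision in an audit log. The `Rule` and `enforce` names, the first-match-wins ordering, and the default-deny fallback are all assumptions for illustration. They are not documented PolicyEngine behavior.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    principal: str                 # agent or user id; "*" matches anyone
    action: str                    # e.g. "read", "call_tool", "send"
    effect: str                    # "allow", "deny", or "redact"
    pattern: Optional[str] = None  # regex to mask when effect == "redact"

    def __post_init__(self) -> None:
        if self.effect == "redact" and not self.pattern:
            raise ValueError("redact rules need a pattern")


AUDIT_LOG: list[tuple[str, str, str]] = []


def enforce(rules: list[Rule], principal: str, action: str, payload: str) -> Optional[str]:
    """Mediate one interaction in-band. First matching rule wins; no match means deny."""
    decision, result = "deny", None
    for rule in rules:
        if rule.principal in (principal, "*") and rule.action == action:
            decision = rule.effect
            if rule.effect == "allow":
                result = payload
            elif rule.effect == "redact":
                result = re.sub(rule.pattern, "[REDACTED]", payload)
            break
    AUDIT_LOG.append((principal, action, decision))  # every decision is recorded
    return result


rules = [
    Rule("billing-agent", "read", "redact", pattern=r"\b\d{3}-\d{2}-\d{4}\b"),
    Rule("*", "read", "allow"),
]
doc = "Customer 123-45-6789 disputed invoice 88."
print(enforce(rules, "billing-agent", "read", doc))  # Customer [REDACTED] disputed invoice 88.
print(enforce(rules, "support-agent", "read", doc))  # Customer 123-45-6789 disputed invoice 88.
print(enforce(rules, "support-agent", "send", doc))  # None (no rule matches, default deny)
```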

Every action is observable, auditable, and governed in real time.

Even with control in place, one final constraint remains, and it brings the system back to the loop where it began. If models are still trained outside the system and deployed into it, intelligence evolves in discrete steps. The system adapts, Denworth says, but he cautions that it does not adapt continuously.

“What are they being fine-tuned with?” he asks. “Do I have full confidence in this idea that I trust what’s being done?”

The answer is to move tuning inside the system. His description of the TuningEngine, where model performance and behavior are captured, evaluated, and fed into continuous fine-tuning pipelines, completes the loop. Models are no longer external artifacts. They are part of the system’s runtime, evolving alongside the data and processes that shape them.
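The capture, evaluate, and feed-back loop can be sketched in Python. Each interaction arrives with a score from some evaluator, and low-scoring examples are kept out of training data but still counted. Accepted examples accumulate until a batch is ready, and each full batch is handed to a fine-tuning launcher. `Interaction`, `TuningLoop`, the `submit` callback, the 0.8 threshold, and the batch size of 3 are all hypothetical. The source does not specify how the TuningEngine scores or batches data.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Interaction:
    prompt: str
    response: str
    score: float  # assumed to come from an evaluator (grader model, human feedback, etc.)


@dataclass
class TuningLoop:
    """Hypothetical sketch: capture behavior, evaluate it, batch it for fine-tuning."""

    submit: Callable[[list[Interaction]], None]  # stand-in for a fine-tuning job launcher
    min_score: float = 0.8
    batch_size: int = 3
    pending: list[Interaction] = field(default_factory=list)
    rejected: int = 0

    def capture(self, item: Interaction) -> None:
        if item.score < self.min_score:
            self.rejected += 1  # excluded from training data, but counted for audit
            return
        self.pending.append(item)
        if len(self.pending) >= self.batch_size:
            self.submit(self.pending)  # hand off a full batch
            self.pending = []          # start a fresh batch


jobs: list[list[Interaction]] = []
loop = TuningLoop(submit=jobs.append)
for i, s in enumerate([0.9, 0.5, 0.95, 0.85, 0.7]):
    loop.capture(Interaction(f"q{i}", f"a{i}", s))
print(len(jobs), loop.rejected)  # 1 2
```

Keeping the rejection count alongside the submitted batches is one way to address Denworth’s trust question: what went into each tuning run, and what was left out, stays inspectable.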

Seen in isolation, each of these capabilities can be understood as an incremental improvement: higher-performance storage, faster databases, better vector search, integrated pipelines, stronger security. That’s a lot already. But taken together, they reveal a pattern. Each constraint forces the system to eliminate a boundary that was previously assumed to be necessary.

Data unifies because fragmentation breaks the loop. Compute embeds because movement breaks the loop. Infrastructure globalizes because locality breaks the loop. Control becomes intrinsic because autonomy breaks the loop. And models evolve in-system because static intelligence breaks the loop.

What emerges from that sequence is a system that looks increasingly familiar. Storage becomes a core service. A database layer organizes structured and unstructured information together, an execution layer coordinates computation, and a control plane enforces policy and identity. All of this sits under a global namespace that connects environments into a single system.

These are, by the way, all components that have historically defined an operating system.

This is where Hallak’s keynote theme comes full circle, intersecting with Denworth’s. The operating system for AI is not something that appears fully formed at the top of the stack. It emerges from the bottom, as infrastructure is forced to adapt to the realities of continuous intelligence.

If all that holds, then the center of gravity in AI shifts away from the models themselves and toward the system that keeps them running, coordinating, and evolving over time. And that system becomes the operating layer for intelligence itself.