The conversation around AI is still largely centered on models: how they perform, how large they are, and how efficiently they can be trained. That focus made sense when training was the dominant challenge, one that required massive datasets, significant compute, and careful iteration to produce meaningful results.

What is becoming increasingly clear, however, is that the model is no longer the hardest part of the problem.

What matters now is everything that surrounds it.

In a recent talk, VAST CTO Alon Horev and VAST Field CTO Andy Pernsteiner outlined how this shift is taking shape in real-world environments, not as a future vision, but as something organizations are already working through today.

Training Is No Longer the Bottleneck

The discussion begins with training, grounding the conversation in familiar territory. LLMs are built by iterating over massive datasets, tuning parameters over time to improve output quality. As Alon explained, the process is “very compute intensive” but also inherently scalable.

What often goes unspoken, but is critical in practice, is how that training process is managed. Models are not trained in a single continuous run. They rely on checkpoints, periodic snapshots of the model state that allow training to resume, recover from failures, and scale across distributed environments. These checkpoints create a constant flow of data being written, read, and reloaded at high speed. Even at the training layer, the system is not just compute-bound. It is deeply dependent on how efficiently data can be handled.
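The checkpoint-and-resume pattern described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular training framework or VAST component implements it: the function names (`save_checkpoint`, `load_checkpoint`, `train`), the JSON file format, and the toy "weights" update are all assumptions made for the example.

```python
import json
import os
import tempfile

def save_checkpoint(path, step, weights):
    """Write training state to a temp file, then rename it over the target.

    The rename means a crash mid-write never leaves a half-written checkpoint.
    """
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump({"step": step, "weights": weights}, f)
    os.replace(tmp_path, path)

def load_checkpoint(path):
    """Return (step, weights) from the last checkpoint, or a fresh state."""
    if not os.path.exists(path):
        return 0, [0.0, 0.0]
    with open(path) as f:
        state = json.load(f)
    return state["step"], state["weights"]

def train(path, total_steps, checkpoint_every, fail_at=None):
    """Toy training loop that resumes from the latest checkpoint."""
    step, weights = load_checkpoint(path)
    while step < total_steps:
        if fail_at is not None and step == fail_at:
            raise RuntimeError("simulated node failure")
        weights = [w + 0.5 for w in weights]  # stand-in for a real parameter update
        step += 1
        if step % checkpoint_every == 0:
            save_checkpoint(path, step, weights)
    return step, weights
```

If a run fails between checkpoints, calling `train` again with the same path picks up from the last saved step rather than from zero. Every checkpoint is a full write and every recovery is a full read, which is why storage throughput matters even in the training phase.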

That dependency only increases as the nature of data changes. As Alon notes, customers are rapidly moving “from text to multimodal…from petabytes to exabytes in less than a year.” Video, images, and audio introduce both scale and complexity that traditional pipelines were never designed to handle.

At that point, training becomes just one workload in a much larger system.

From Pipelines to Systems

Around training sit data preparation, streaming pipelines, inference, memory layers such as the KV cache, and search systems that allow models to access and reason over data in real time. These stages aren’t discrete; they are concurrent, interdependent, and continuously active.

Alon frames the consequence of this clearly: “every AI factory is practically a multi-tenant environment… a single pool of hardware that should be leveraged across workloads.” What used to be isolated pipelines has become a shared system.

This is where many architectures begin to break down. When storage, databases, and processing are separated into different systems, data must be copied, moved, and reprocessed at each step. At scale, that coordination becomes the bottleneck.

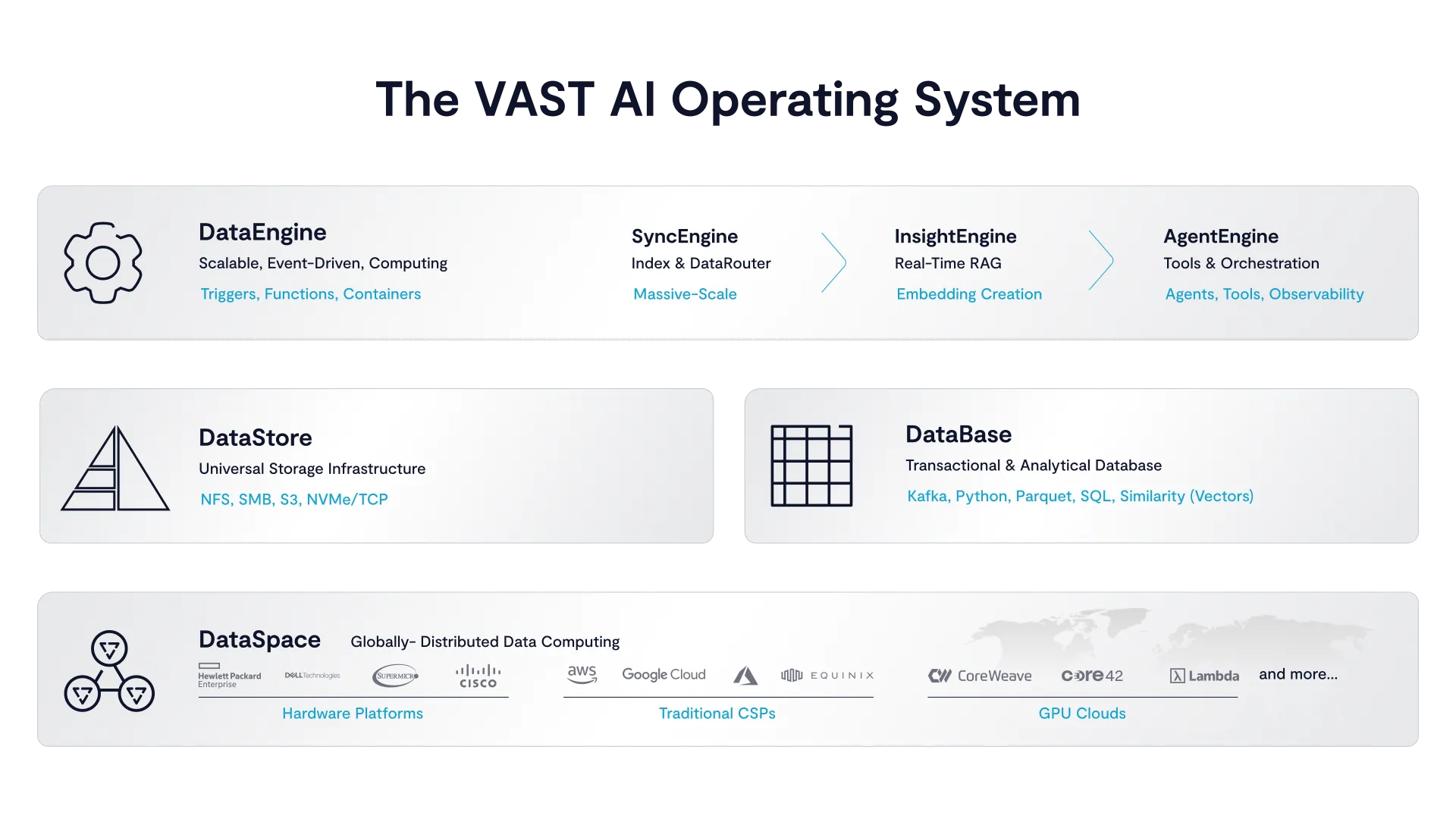

What VAST presents is a different approach. Instead of assembling these capabilities across layers, the VAST AI Operating System integrates them into a single system where data, processing, and execution operate together. The VAST DataStore provides a shared, high-performance data layer. The VAST DataBase unifies structured, unstructured, and vector data. The VAST DataEngine enables event-driven execution across workflows.

The System as the Runtime

The impact of that design becomes clear in the demo.

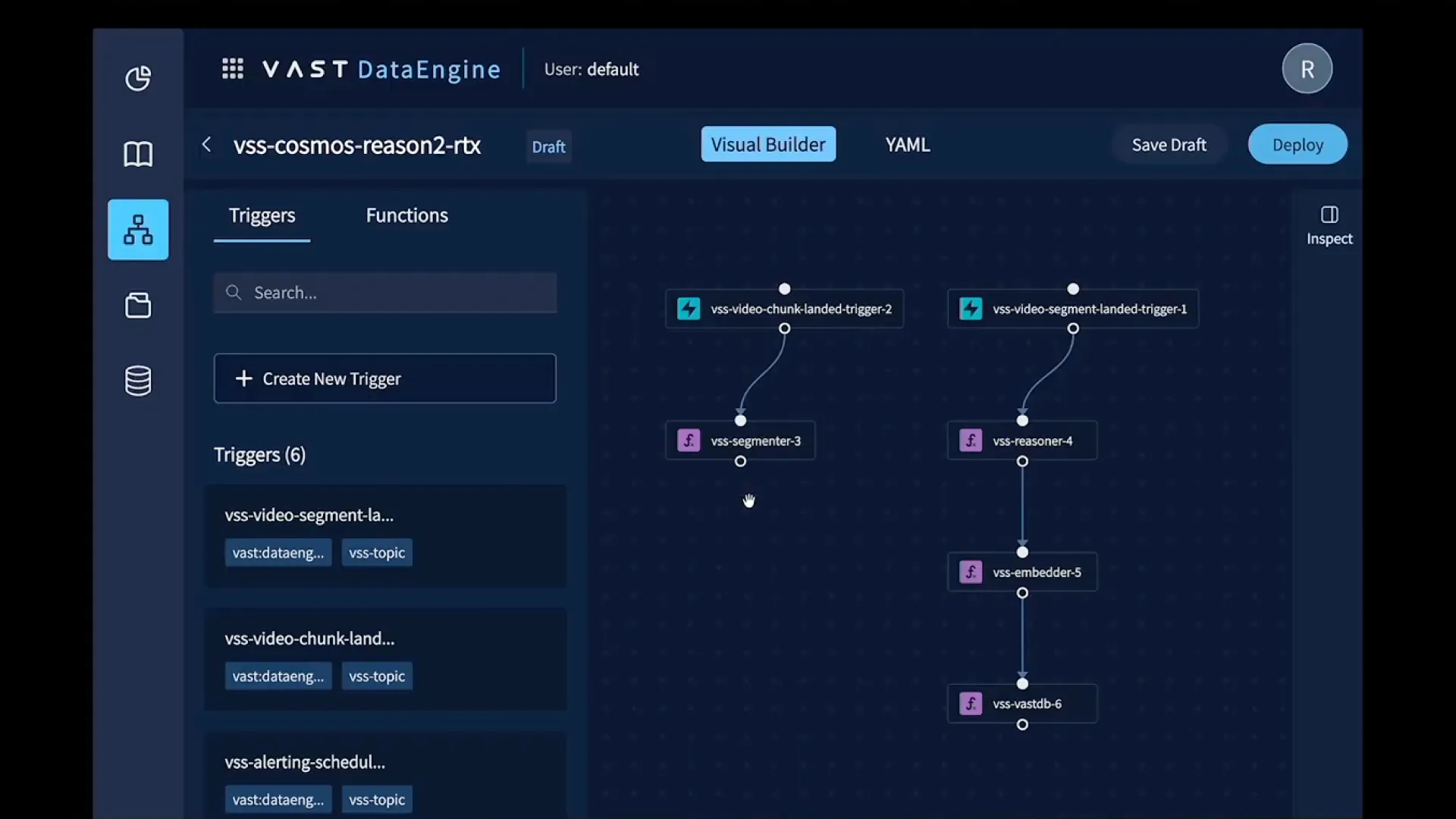

Andy walks through a use case that appears simple, making video searchable, but quickly exposes the complexity underneath. A video is ingested into the system and immediately segmented into smaller units, because, as he notes, “you can’t take a two-hour long video and just create a quick summary on it. You need segments.” Those segments are processed through a vision-language model to generate summaries, embedded into vectors, and indexed for semantic search.

What is important is not just what happens, but how it happens.

The video lands in the VAST DataStore, and the VAST DataEngine reacts automatically. Each step (segmentation, model inference, embedding, indexing) is triggered by the previous one, without requiring external orchestration or moving data between systems. The results are stored in the VAST DataBase, making them immediately searchable.

This reflects a broader principle. The system itself serves as the runtime. Pipelines are not stitched together across services, but executed directly within the system through events. Data changes trigger actions, and those actions produce new data, all within the same environment.
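To make the event-driven idea concrete, here is a minimal in-process sketch in Python of the chain described above: ingest, segment, summarize, embed, index. It is an illustration only and does not use or represent the VAST DataEngine API. The `EventBus` class, the event names, and the placeholder "summary" and "embedding" logic are all hypothetical stand-ins for real model calls.

```python
from collections import defaultdict, deque

class EventBus:
    """Tiny event dispatcher: handlers subscribe to event types (hypothetical)."""

    def __init__(self):
        self.handlers = defaultdict(list)
        self.queue = deque()

    def on(self, event_type):
        def register(fn):
            self.handlers[event_type].append(fn)
            return fn
        return register

    def emit(self, event_type, payload):
        self.queue.append((event_type, payload))

    def run(self):
        # Drain the queue; handlers may emit further events while running.
        while self.queue:
            event_type, payload = self.queue.popleft()
            for handler in self.handlers[event_type]:
                handler(payload)

bus = EventBus()
index = {}  # segment id -> record; stands in for a searchable table

@bus.on("video.ingested")
def segment(video):
    length = video["segment_seconds"]
    for start in range(0, video["duration_seconds"], length):
        end = min(start + length, video["duration_seconds"])
        seg_id = f"{video['name']}:{start}-{end}"
        bus.emit("segment.created", {"id": seg_id, "start": start, "end": end})

@bus.on("segment.created")
def summarize(seg):
    # Placeholder for vision-language model inference.
    summary = f"summary of seconds {seg['start']} to {seg['end']}"
    bus.emit("summary.created", {**seg, "summary": summary})

@bus.on("summary.created")
def embed(seg):
    # Placeholder embedding: a toy 2-d vector, not real model output.
    vector = [float(len(seg["summary"])), float(seg["start"])]
    bus.emit("embedding.created", {**seg, "vector": vector})

@bus.on("embedding.created")
def store(seg):
    index[seg["id"]] = seg

# One ingest event drives the whole chain; no step calls the next directly.
bus.emit("video.ingested",
         {"name": "demo.mp4", "duration_seconds": 150, "segment_seconds": 60})
bus.run()
```

The point of the design is that each handler knows only the event it consumes and the event it produces. Adding a step, such as transcription, means registering one more handler rather than editing a central orchestration script.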

The Visual Builder within the VAST DataEngine offers simple, intuitive steps for building AI data pipelines.

Pernsteiner underscores this point directly: “Everything I’m going to show today, you can do today.” The emphasis is not just on capability, but on the fact that this model of execution is already operational.

Observability in Real Time

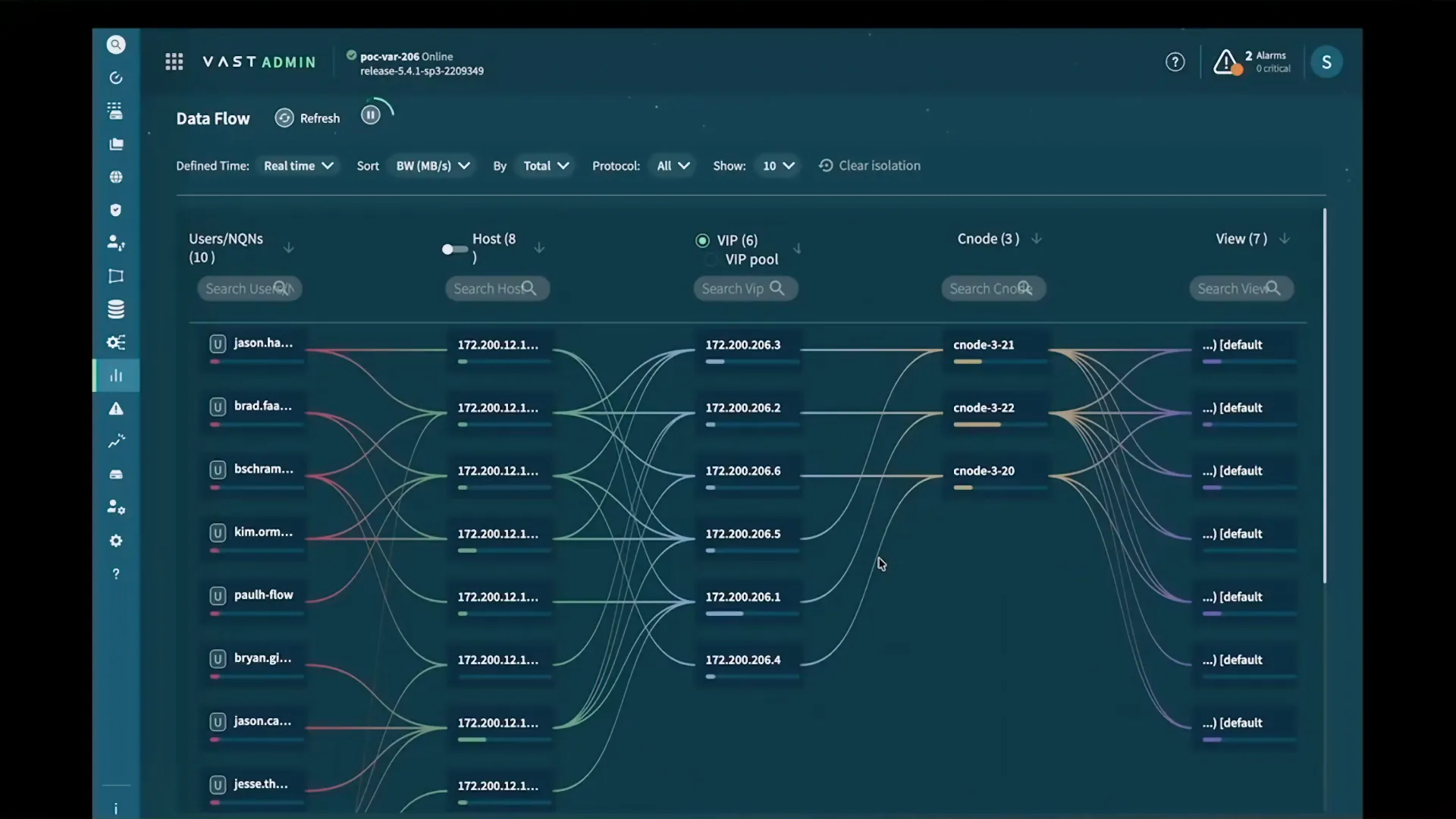

As the system expands to support multiple workloads, the need for visibility becomes critical.

Training jobs, inference workloads, pipelines, and analytics are all running concurrently, often across different teams. Understanding what is happening inside the system at any given moment is no longer optional.

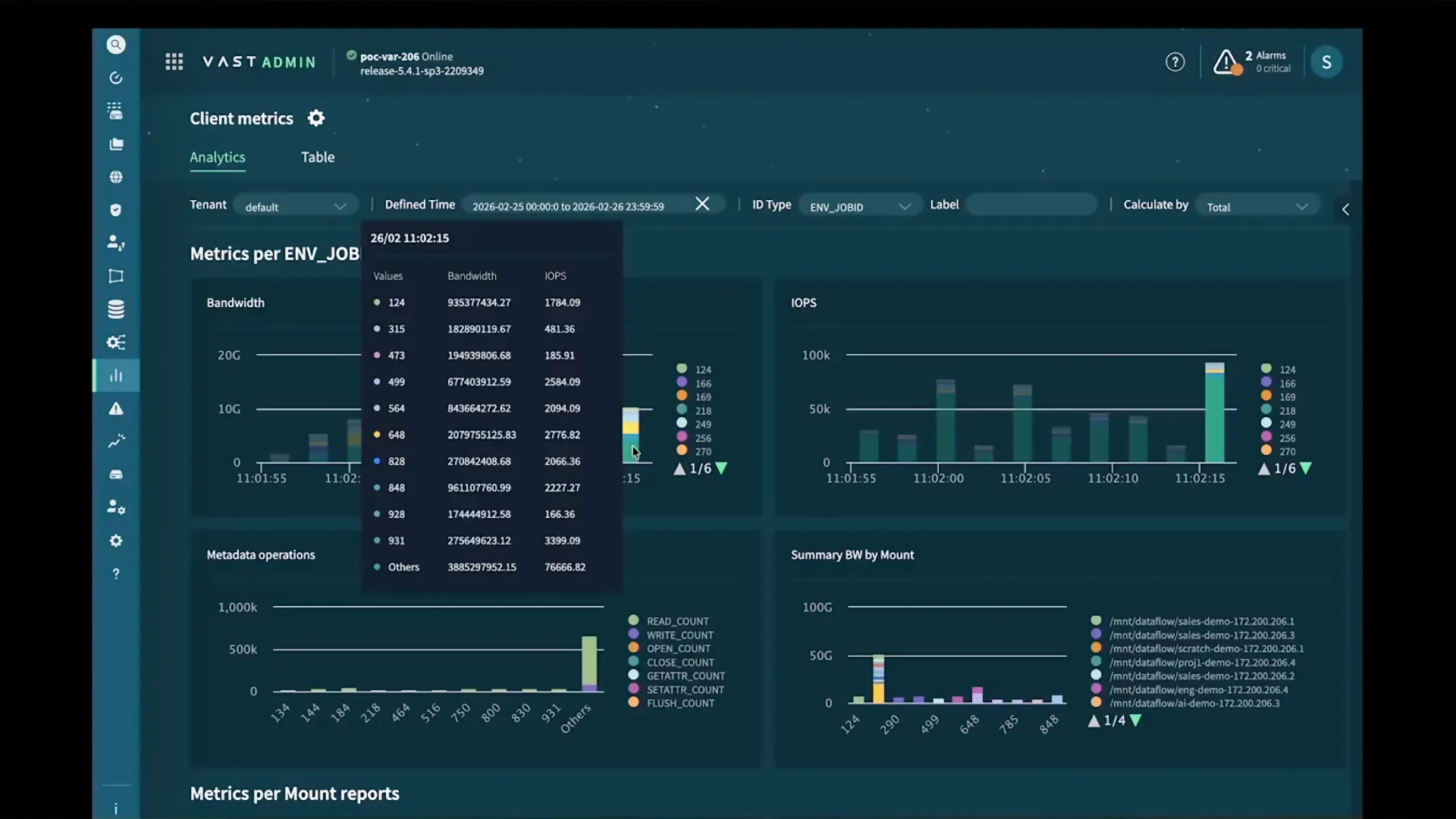

VAST’s Data Flows feature gives administrators a clearer view of how the system is being utilized.

Pernsteiner frames it simply: “Who is trashing my system right now?”

In most environments, answering that question requires exporting logs, processing them externally, and reconstructing events after the fact. In the approach demonstrated here, observability is built directly into the system. Activity can be queried in real time through the VAST DataBase, using the same system that stores the data itself. Teams can immediately see which workloads are consuming resources, how data is being accessed, and where bottlenecks are forming.

Andy demonstrates how admins can view I/O by JobID within the cluster dashboard.
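The "who is consuming my system" question is, at its core, an aggregate query over activity records. The sketch below shows the shape of such a query using Python's built-in `sqlite3` module as a stand-in. The `io_events` table, its columns, and the sample rows are invented for illustration and are not the schema VAST exposes.

```python
import sqlite3

def build_demo_db():
    """Create an in-memory table of I/O events (hypothetical schema and data)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE io_events (job_id TEXT, op TEXT, num_bytes INTEGER)"
    )
    conn.executemany(
        "INSERT INTO io_events VALUES (?, ?, ?)",
        [
            ("train-17", "write", 4000),
            ("train-17", "read", 1000),
            ("infer-3", "read", 500),
            ("etl-9", "write", 2500),
            ("infer-3", "read", 700),
        ],
    )
    return conn

def top_consumers(conn, limit=3):
    """Return (job_id, total_bytes, op_count) rows, heaviest job first."""
    return conn.execute(
        """
        SELECT job_id, SUM(num_bytes) AS total_bytes, COUNT(*) AS op_count
        FROM io_events
        GROUP BY job_id
        ORDER BY total_bytes DESC
        LIMIT ?
        """,
        (limit,),
    ).fetchall()
```

Calling `top_consumers(build_demo_db())` ranks the sample jobs by bytes moved. When activity records live in the same queryable store as the data, this kind of question can be asked directly instead of being reconstructed from exported logs.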

Agents and Event-Driven Execution

This becomes even more important as systems move toward agent-based execution.

Alon describes the distinction clearly: “if you think about an LLM as a brain, an agent is a person.” Agents introduce intent. They take actions, use tools, and operate continuously toward a goal. But they also depend heavily on the system around them.

Agents need access to real-time data, memory, and execution. They need to respond to changes in the environment, not operate in isolation. This is where the event-driven model and observability intersect.

In an event-driven system, agents are not constantly running. They react to events: new data, changes in state, and triggers within the system. Observability ensures that these actions are visible as they happen, allowing the system to be monitored and managed in real time. Together, they create a feedback loop where the system can both act and be understood simultaneously.

This is what allows agent systems to scale.

As Alon explains, “you can go from one agent to 10,000 agents, not a single line of code changes.” That level of scalability is only possible when execution, data access, and visibility are all part of the same system.

A System That Improves Over Time

At the center of all of this is data. Models are limited to what they were trained on, which makes real-time access to fresh data essential. The VAST DataBase enables retrieval, semantic search, and vector operations within the same environment as the data itself. This is particularly important for complex data types such as video, where understanding meaning requires more than simple indexing.
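For readers unfamiliar with vector search, the core operation is ranking stored embeddings by similarity to a query embedding. The following Python sketch shows that idea with a brute-force cosine-similarity scan. It is a teaching example under simplifying assumptions (tiny hand-written vectors, an in-memory dict as the index, hypothetical function names) and says nothing about how the VAST DataBase implements search internally.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as matching nothing
    return dot / (norm_a * norm_b)

def search(index, query_vector, k=2):
    """Return the ids of the k stored vectors most similar to the query."""
    scored = [
        (cosine_similarity(vector, query_vector), item_id)
        for item_id, vector in index.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [item_id for _, item_id in scored[:k]]
```

In a real deployment the vectors would come from an embedding model and the scan would be replaced by an approximate nearest-neighbor index, but the contract is the same: a query vector goes in, the closest items come out.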

As these systems operate, they generate new data that can be used to improve performance over time. Models can be fine-tuned, retrieval mechanisms refined, and agent behavior adapted. Horev describes this as a continuous loop, where systems can be “fine-tuned… daily or even hourly,” creating an environment that improves through use.

A Different Approach to AI Infrastructure

The broader takeaway is that AI is no longer defined by individual components, but by the system that brings them together. Success depends less on the performance of a single model and more on the ability of the underlying infrastructure to support continuous, coordinated activity across data, compute, and execution.

This is where VAST is taking a fundamentally different approach. Rather than layering new capabilities onto existing architectures, the VAST AI Operating System rethinks the system itself, collapsing storage, database, and execution into a unified platform designed for how AI actually runs.

For those looking to understand how these ideas translate into real systems, the full talk provides a clear view into how these components come together and why that level of integration is becoming essential.