When we set out to create an architecture and development platform for AI and high-throughput data workflows, we knew we needed to support data in all of its forms: unstructured, structured and streaming. On the unstructured front, VAST built file and object services over an all-flash architecture that nullified price/performance trade-offs in that space. For structured data, the VAST DataBase came along and is doing much the same, leveraging the same architecture.

For structured and unstructured data, we have a solution that sits comfortably as the standard for the most demanding high-throughput HPC and AI workloads operating today, while also serving as a feature-rich enterprise data layer for highly disparate workloads and running web-scale analytics on structured data. It covers the bases. So it goes with streaming:

VAST Message Broker

The VAST Message Broker completes a picture for high-throughput ingest and for low-latency messaging that’s been in planning for some time. We recently released the VAST DataEngine, and while the Message Broker sits at the core of that offering as a low-latency message bus (communicating arbitrary events to serverless functions), its spirit is that of a high-throughput data ingestion monster aimed at web-scale click data, industrial telemetry, high-volume transaction data and data-processing transports - variations on the “messaging” theme, but with a lot more data.

So what is the Message Broker? Put simply, it is a Kafka protocol implementation. Kafka is the easy choice given how established it is and how flexible it has already proven to be. It has several existing implementations that have been around for years. Adopting one would be easy; there are many high-quality routes to go: Apache/Confluent Kafka, Redpanda, AutoMQ - they all have their strengths and weaknesses. But they also all include fundamental trade-offs at the data layer. This is just a long way of saying that none of them is built on a Disaggregated Shared-Everything (DASE) architecture, as our structured and unstructured offerings are.

Disaggregated Shared Everything

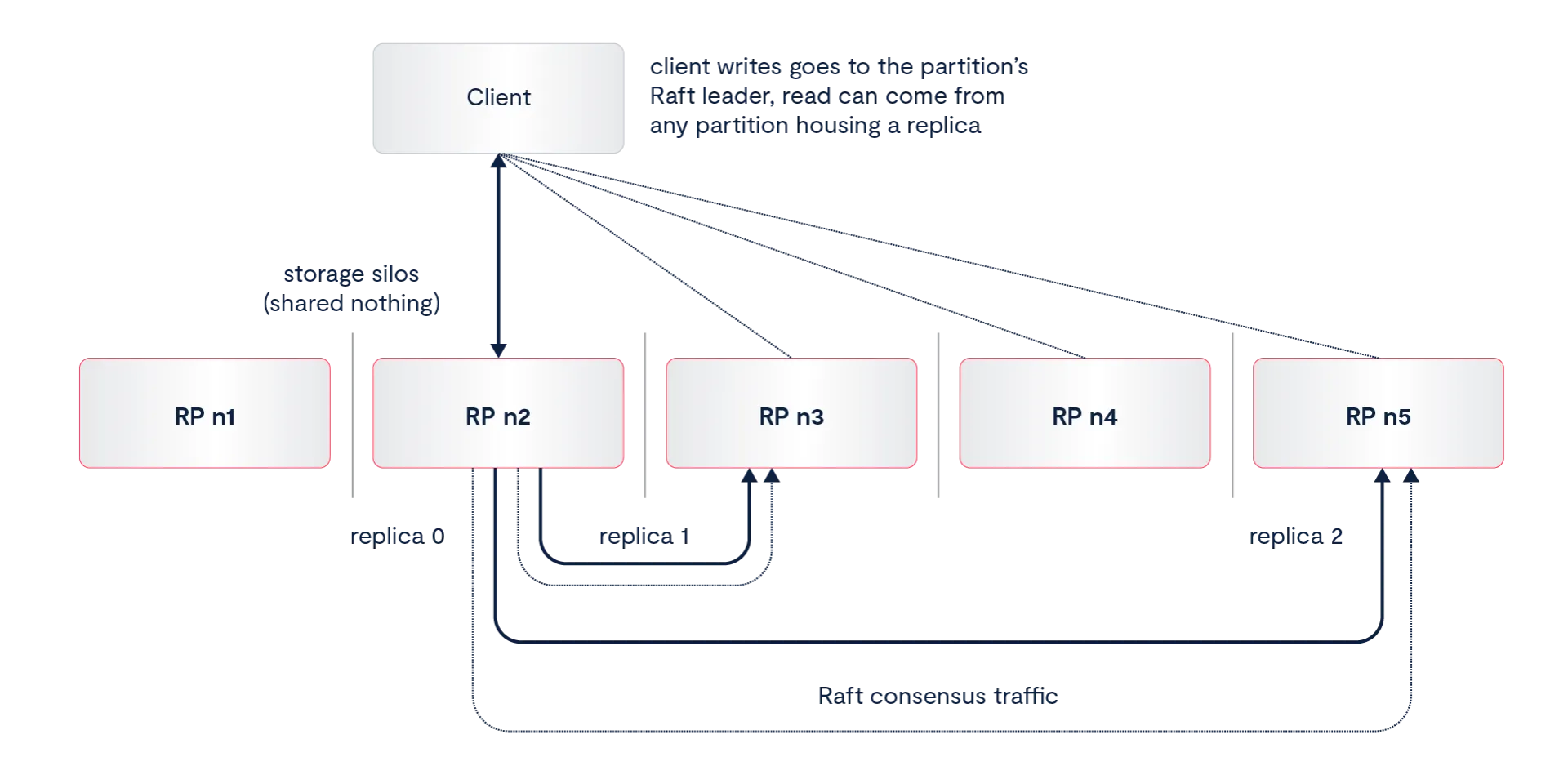

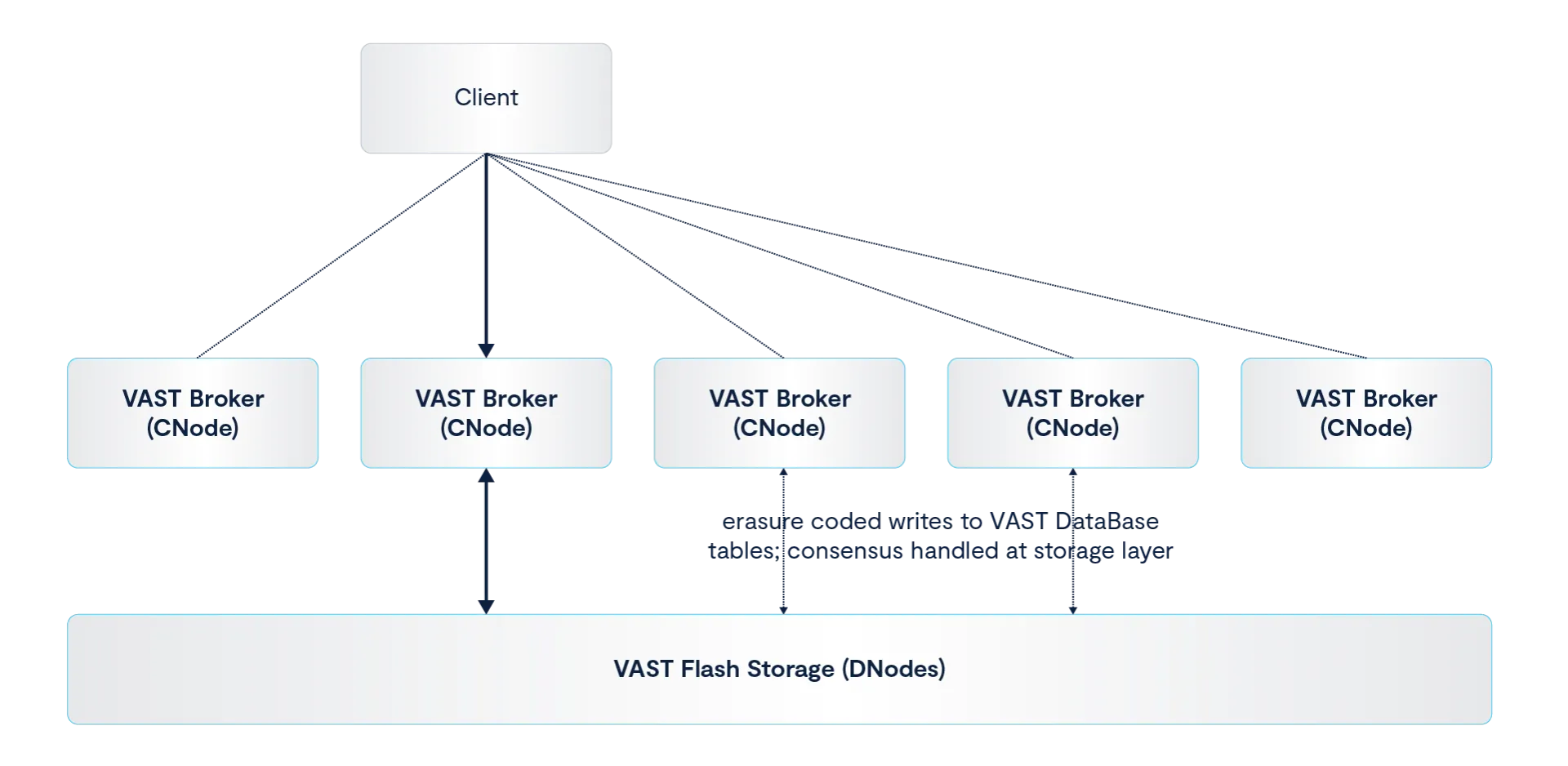

There are plenty of artifacts that describe DASE, but in short, it is the core of the VAST architecture. It combines high-speed commodity networks (RDMA technologies), very-high performance/endurance NAND devices (sometimes referred to as storage-class memory), read-intensive flash (QLC NVMe today, but covering a class of low-cost, low-endurance flash), and redundant hardware capabilities to build a distributed system that operates like a single computing system. This is in contrast to shared-nothing architectures, where hardware owns slices of data, requiring layers of operations for establishing consensus, rebalancing traffic in failure scenarios, and complex processes for dealing with skew and CPU/storage imbalances. Those artifacts have the details, but the bottom line for DASE is: flash cost reduction, independent scaling of CPU and storage resources, and exceptionally high performance.

VAST Message Broker Architecture

What were we talking about? Right, Kafka. Our implementation directly benefits from DASE. The VAST DataBase (VDB) mentioned earlier is a tabular data presentation in VAST that can be used transparently in existing data ecosystems to run high-performance workloads on structured data. Tables in VDB are simply database tables like you already know and love and they are also the storage layer for the Message Broker. Each topic is a VDB table housing the Kafka metadata (offset data, timestamps, etc.) and the message itself.

To be clear, there are no protocol extensions, nor anything different about how you produce to and consume from Message Broker topics; the usual client libraries are how you talk to it (librdkafka, the official Java SDK, etc.). VAST is intended as a drop-in for anything that produces to, or consumes from, Kafka APIs. What’s different is the data layer.
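As a minimal sketch of what "drop-in" means in practice, the snippet below uses the stock confluent-kafka Python client (a librdkafka wrapper). The helper names are ours, and the endpoint and topic in the usage note are placeholders rather than values from VAST documentation; the only thing that would change relative to any other Kafka deployment is where `bootstrap.servers` points.

```python
import json


def client_config(bootstrap_servers: str) -> dict:
    """Build a plain Kafka client config; nothing here is VAST-specific."""
    return {"bootstrap.servers": bootstrap_servers}


def encode_event(event: dict) -> bytes:
    """Serialize an event as compact UTF-8 JSON."""
    return json.dumps(event, separators=(",", ":")).encode("utf-8")


def produce_events(bootstrap_servers: str, topic: str, events: list) -> None:
    """Send events with the standard confluent-kafka producer."""
    # Imported lazily so the helpers above work without the client installed.
    from confluent_kafka import Producer

    producer = Producer(client_config(bootstrap_servers))
    for event in events:
        producer.produce(topic, value=encode_event(event))
        producer.poll(0)  # serve any pending delivery callbacks
    producer.flush()  # block until all queued messages are delivered
```

A call such as `produce_events("vast-broker.example.com:9092", "clickstream", [{"user_id": 42, "action": "click"}])` would then behave the same way it does against any Kafka-compatible endpoint.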

Streaming to Tables

Targeting a tabular format for streaming data came easily. Structurally, Kafka message batches align well with our DataBase table design, which resides on a storage layer that includes erasure coding, deduplication, similarity deduplication (system-wide compression), compression, encryption, a mess of enterprise features, and all-flash performance - as a matter of course. Most Kafka implementations use replication and sharding mechanisms for handling log resilience, which involve lots of back-end traffic that you’ll never witness directly - triplication at a minimum. They are shared-nothing systems with east/west consensus traffic and rebalancing traffic related to failure scenarios - in addition to the aforementioned triplication.
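To put a rough number on that hidden traffic, here is a simple model of leader/follower replication, assuming every follower copies each byte the leader accepts. The function name and the 1 GB/s ingest figure are illustrative only, and the model deliberately ignores consensus and rebalancing traffic.

```python
def replication_gb_per_s(ingest_gb_per_s: float, replication_factor: int) -> float:
    """East/west traffic created by copying each byte to every follower."""
    if replication_factor < 1:
        raise ValueError("replication_factor must be at least 1")
    # The leader holds one copy; the remaining copies cross the network.
    return ingest_gb_per_s * (replication_factor - 1)


# With triplication, 1 GB/s of producer traffic adds 2 GB/s between brokers.
extra = replication_gb_per_s(1.0, 3)
print(f"{extra:.1f} GB/s of replication traffic")
```

In other words, under this model a triplicated log moves three bytes over the network for every byte a producer sends, before any consensus or recovery traffic is counted.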

The Message Broker’s path to storage is more direct, and the underlying storage architecture takes care of resilience and availability.

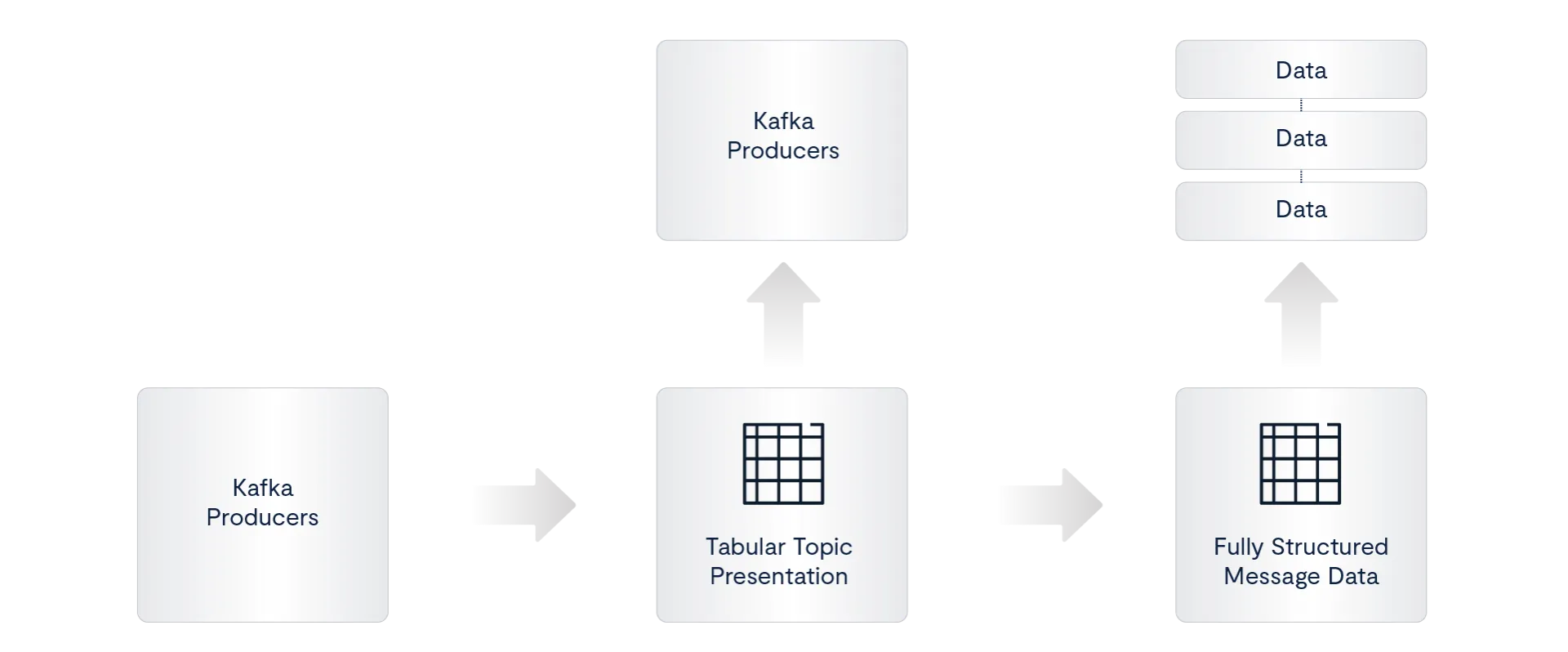

Another thing: because we use a tabular back-end, data written to the Message Broker is immediately available as semi-structured data to processing engines like Spark and Trino. In VAST 5.5 (releasing soon), Kafka messages will be immediately available as fully structured data.

Blob-to-Table

Starting with JSON data (protobuf is next), Message Broker topics can be configured to extract Kafka messages into fully structured tables. This is done in real time but does not affect the root Message Broker tables.

When blob2table is enabled on a topic, messages are immediately extracted into a sibling table that can be used by data processing engines for real-time processing. This has obvious benefits, but there are some less obvious ones that are even more interesting. A feature of tabular data on VAST is the ability to fully sort tables. Data arrives in the topic along the temporal domain (time being what it is), but the target tables can be sorted according to whatever columns you would like: user IDs, geographic locations, etc. - the right choice will depend on your use case.
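Conceptually, extraction plus sorting looks something like the sketch below. This is plain Python that illustrates the idea of turning JSON blobs into columns and re-ordering them by a chosen column; it is not VAST's implementation, and the function names and fields (`ts`, `user_id`, `region`) are made up for the example.

```python
import json


def extract_rows(messages: list, columns: list) -> list:
    """Parse JSON message bodies into rows with a fixed set of columns."""
    rows = []
    for raw in messages:
        record = json.loads(raw)
        # Missing fields become None so that every row has the same shape.
        rows.append({name: record.get(name) for name in columns})
    return rows


def sort_rows(rows: list, sort_key: str) -> list:
    """Order rows by one column; assumes that column is set in every row."""
    return sorted(rows, key=lambda row: row[sort_key])


# Messages arrive in time order...
topic = [
    b'{"ts": 1, "user_id": 30, "region": "eu"}',
    b'{"ts": 2, "user_id": 10, "region": "us"}',
    b'{"ts": 3, "user_id": 20, "region": "eu"}',
]
# ...but the extracted table can be ordered by user_id instead.
table = sort_rows(extract_rows(topic, ["ts", "user_id", "region"]), "user_id")
print([row["user_id"] for row in table])
```

A query that filters on `user_id` can then skip most of the sorted table rather than scanning it in arrival order, which is where the downstream compute savings would come from.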

So - jumping ahead - while this may not entirely solve your ETL pipelines from soup to nuts (and who knows, it might), it absolutely will eliminate large swaths of compute needed for subsequent pipeline processes. And, as a side note, if you do run structured streaming workloads in your environment, you can use VAST DataBase as the sink for similar benefits (but that’s another article: “Ask me about my sink”).

Performance

I’d love to keep going on about use cases and capabilities (see the Transactional Warehousing blog for more), but we need to take a breath and talk about Message Broker performance before this blog ends. It’s my favorite topic.

In November of last year we released a write-up based on early-stage benchmarks on beta code. A lot has happened since then and we have some figures to share based on release code (5.4). There’s not a lot of nuance here: streaming is complicated; demonstrating the capability of a streaming system is not.

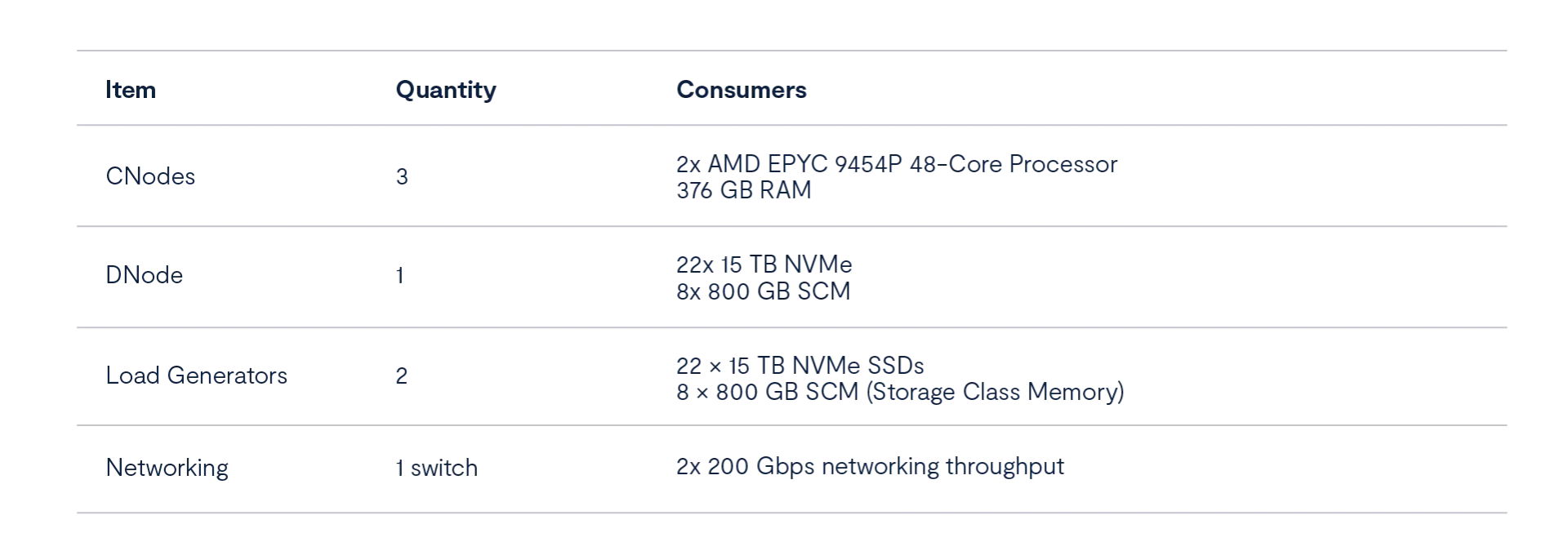

A typical test harness for a streaming system will consist of a set of brokers: VMs in the cloud, pods in Kubernetes or bare metal. Our brokers run directly on hardware in these tests. The cluster in this case consists of 3 compute nodes (we call them CNodes) with AMD Turin processors front-ending a single flash enclosure housing 22 x 15 TB NVMe drives and 8 x 800 GB SCM devices.

Tests and Configuration

Producer Settings

Throughput load profile uses 1024 byte messages

Kafka producers are set to a 4 ms linger time with a 1 MB batch size

Idempotence is off (not currently supported)

All resilience and consistency settings and thresholds are ignored on VAST. There is no concept of an unsafe write.

Consumer Settings

Auto commit is off and consumers read from the earliest offset

Topic Configuration

192 partitions

Message timestamps are set to production time
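For readers who want to reproduce a similar setup, the settings above map onto standard Kafka properties roughly as follows. The property names are the stock Kafka/librdkafka ones; reading "1MB" as 1,048,576 bytes, mapping "production time" to `CreateTime`, and the consumer group name are our assumptions.

```python
MESSAGE_SIZE_BYTES = 1024

# Producer: 4 ms linger, 1 MB batches, idempotence off.
PRODUCER_CONFIG = {
    "linger.ms": 4,
    "batch.size": 1024 * 1024,  # assumption: "1MB" read as 1 MiB
    "enable.idempotence": False,
}

# Consumer: manual commits, start from the earliest available offset.
CONSUMER_CONFIG = {
    "group.id": "throughput-test",  # placeholder name
    "enable.auto.commit": False,
    "auto.offset.reset": "earliest",
}

# Topic: 192 partitions, timestamps assigned when the message is produced.
TOPIC_PARTITIONS = 192
TOPIC_CONFIG = {"message.timestamp.type": "CreateTime"}  # assumed mapping

# Upper bound on messages per full batch, ignoring per-record overhead.
MESSAGES_PER_FULL_BATCH = PRODUCER_CONFIG["batch.size"] // MESSAGE_SIZE_BYTES
print(MESSAGES_PER_FULL_BATCH)
```

These dictionaries can be merged with a `bootstrap.servers` entry and passed to a standard Kafka client; the acknowledgement and replication settings are left out because, as noted above, they are ignored on VAST.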

Workload Settings

These are throughput tests exclusively. Low-latency tests are currently underway (perhaps we’ll do a follow-up).

Read/write workload: aggregate cluster ingress and egress (production and consumption) are both driven to maximum throughput while being kept equal. This is a stress test, so latency under this maximum load can be as high as 3-4 seconds.

Write-only workload: pure write (producer) load, no consumption on the topic

Read-only workload: pure read (consumer) load, no production on the topic

Throughput Results

Notes/Analysis

Read/Write Workload

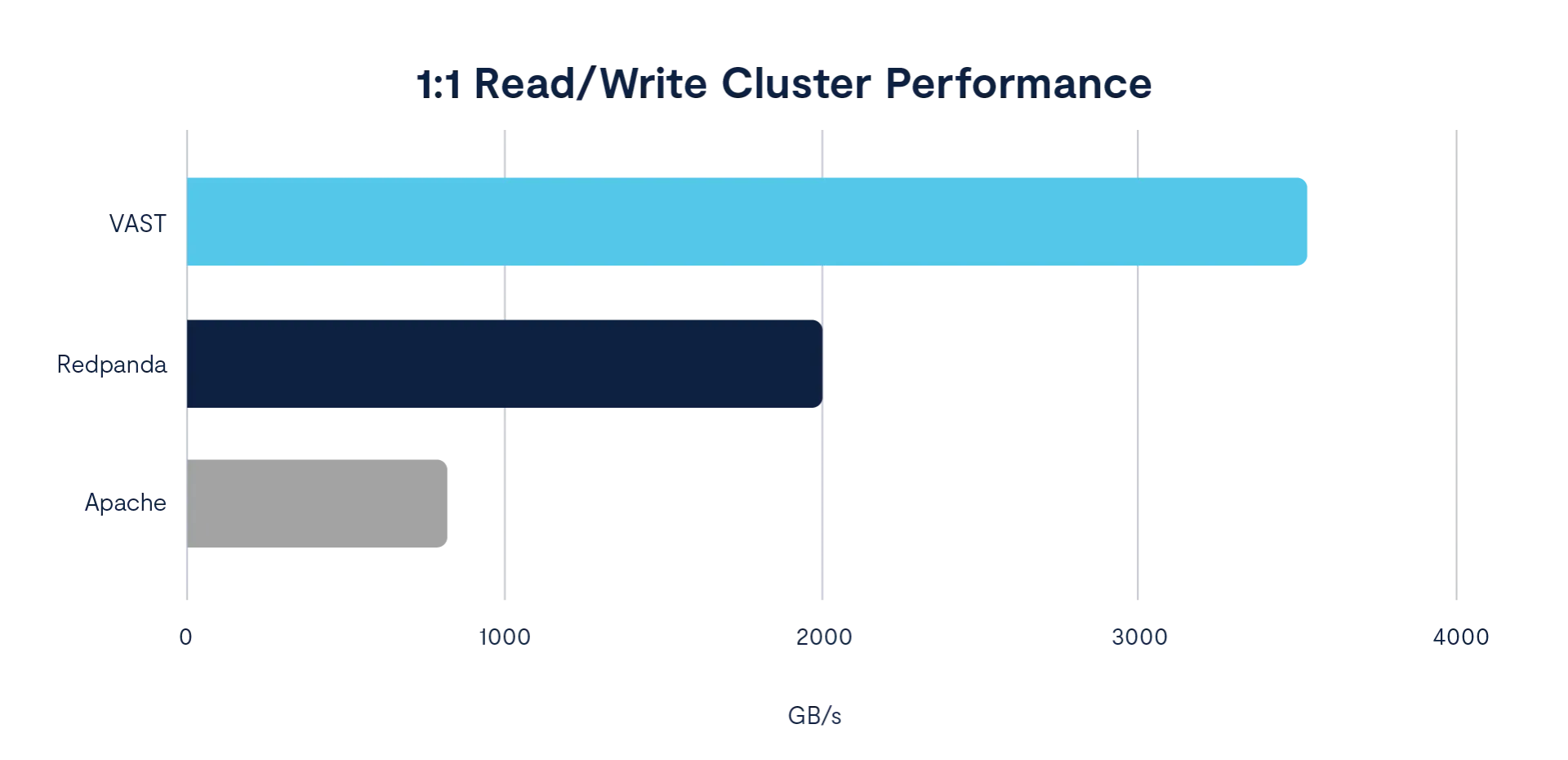

During the read/write workload (the most important one) the Message Broker achieves a whopping 1.1 GB/s of streaming throughput (read and write) per broker. For comparison, Redpanda throughput is roughly 666 MB/s per node on high-end AWS instances. Apache Kafka sustained about 275 MB/s per node in our own lab tests for this same workload. Here’s a visual:

Write-only Workload

This small cluster can sustain burst traffic of roughly 17 million messages per second (~6 GB/s per node) for over thirty minutes - that’s over 30 TB of burst traffic. Sustained traffic holds at 4.75 million messages per second of pure ingestion.

Read-only Workload

VAST sustains a read-only rate of greater than 12 million messages per second. Industrial and engineering profiles, where bursts of telemetry data need to unload fast and then be read fast as separate steps, will benefit from VAST’s bursting capabilities.
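The message rates quoted for the write-only and read-only workloads can be converted to bandwidth with some back-of-the-envelope arithmetic, assuming the 1024-byte messages and 3 CNodes described in the test setup and decimal units (1 GB = 10^9 bytes). The helper names are ours, and the results are approximations derived from the published figures, not additional measurements.

```python
MESSAGE_BYTES = 1024  # from the producer settings
BROKERS = 3           # CNodes in the test cluster


def aggregate_gb_per_s(messages_per_s: float) -> float:
    """Cluster-wide bandwidth for a given message rate."""
    return messages_per_s * MESSAGE_BYTES / 1e9


def per_broker_gb_per_s(messages_per_s: float) -> float:
    """Bandwidth per broker, assuming load is spread evenly."""
    return aggregate_gb_per_s(messages_per_s) / BROKERS


burst = 17e6  # messages per second during the burst
burst_tb_30_min = aggregate_gb_per_s(burst) * 30 * 60 / 1e3

print(f"burst: {per_broker_gb_per_s(burst):.1f} GB/s per broker")
print(f"burst over 30 minutes: {burst_tb_30_min:.1f} TB")
print(f"sustained write: {per_broker_gb_per_s(4.75e6):.1f} GB/s per broker")
print(f"read-only: {per_broker_gb_per_s(12e6):.1f} GB/s per broker")
```

Under these assumptions the burst works out to roughly 5.8 GB/s per broker and a little over 31 TB in thirty minutes, which lines up with the ~6 GB/s per node and "over 30 TB" quoted above.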

Where now?

There’s so much more to do. VAST is busy building the Message Broker into the ultimate Kafka implementation. Many of the new capabilities on the horizon are related to the tabular back-end. In an upcoming release we will have support for multiple simultaneous sorted projections: Kafka topics automatically exploded into several subtables, each projecting only the data you want, sorted how you want. Additional interfaces and engines are on the way for processing your topics directly: ADBC support and a new SQL engine.

But we’re also hard at work on the Kafka parts, with additional consumer group capabilities, more authentication options and aggressive performance improvements - even from where we are today.

We’ve talked about performance and capabilities for pages now, but it’s important to mention our core rationale: reducing costs through raw performance and user experience. None of this does anyone any good unless it enables you to do more with less; that’s the very definition of technological advancement. Contact VAST to see if we can help you.