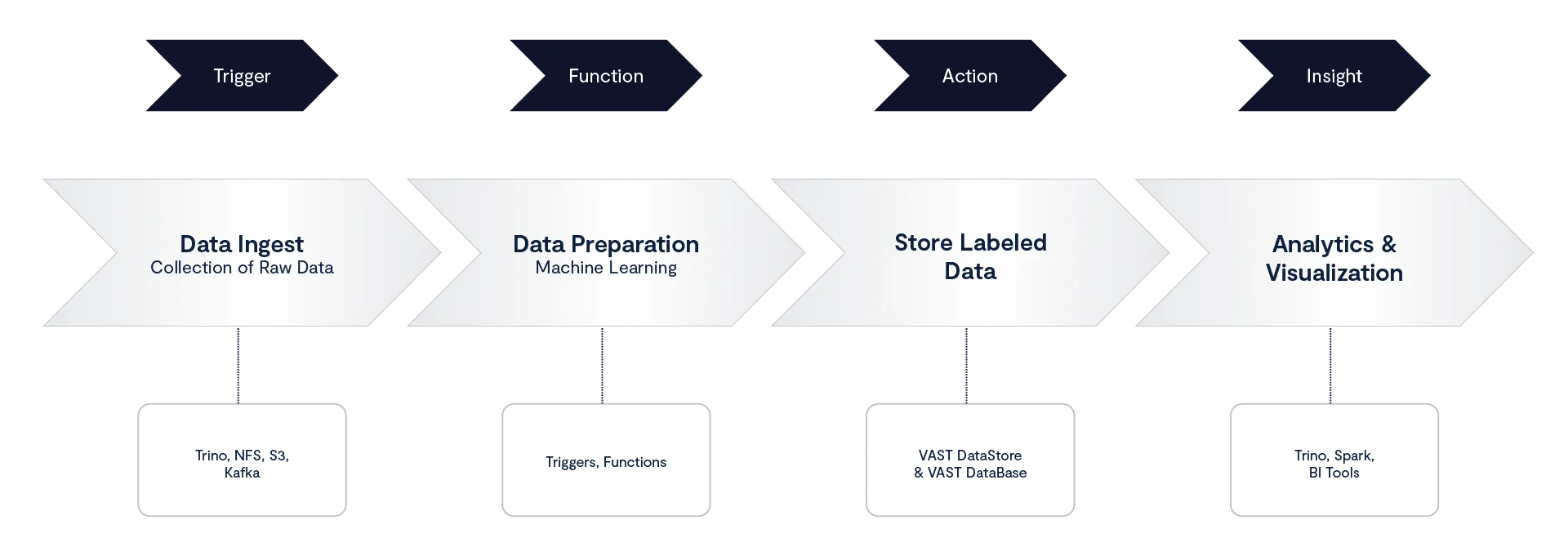

Managing event-driven workflows in enterprise data environments has traditionally required a patchwork of schedulers, scripts, and external services. Pipelines often involve brittle connectors, manual orchestration, and constant operational overhead. With the general availability of serverless functions on the VAST AI Operating System, these workflows can now run natively, directly where the data resides.

Serverless functions and triggers are part of the VAST DataEngine, an integrated programming environment built into the platform. This environment turns passive data into an active automation fabric, eliminating the need to move data between tools or manage additional infrastructure.

The VAST DataEngine: Compute Where the Data Lives

The VAST DataEngine brings compute to the data itself. Functions run on the VAST AI OS, co-located with the data, which reduces latency, simplifies management, and improves security.

The primary programming model is serverless Python functions. Functions are lightweight, containerized units of logic that scale elastically and execute only when triggered by an event. This model makes it possible to automate data processing, enrichment, and AI pipelines without the operational burden of provisioning or maintaining servers.

The VAST Event Broker: Triggers for Automation

At the center of this event-driven architecture is the VAST Event Broker. Compatible with the Kafka API, the Event Broker acts as the backbone for all asynchronous communication inside the platform.

Event Producers: Data events such as new files, metadata changes, and log messages

Event Broker: Captures, persists, and routes events with sub-second latency

Triggers: Rules that detect specific event patterns and invoke functions

Consumers: Serverless functions or downstream pipelines that act on the events

This decoupled design supports scalability, reliability, and flexibility. Functions are never hardwired to data sources; instead, the broker routes each event to the consumers whose triggers match it.
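To make the producer, broker, trigger, and consumer roles concrete, here is a minimal in-memory sketch in plain Python. It does not use the VAST Event Broker or any Kafka client; the `Trigger` and `Broker` classes, the `ObjectCreated` event type, and the event fields (`type`, `key`) are all assumptions made for illustration, not the platform's actual schema or API.

```python
from dataclasses import dataclass
from fnmatch import fnmatch
from typing import Callable

# Hypothetical event shape: the real event schema is not shown in this article.
Event = dict


@dataclass
class Trigger:
    """A rule that matches events by type and object-key pattern."""
    event_type: str                      # e.g. "ObjectCreated" (assumed name)
    key_pattern: str                     # shell-style pattern, e.g. "*.jpg"
    function: Callable[[Event], None]    # the consumer to invoke on a match

    def matches(self, event: Event) -> bool:
        return (event.get("type") == self.event_type
                and fnmatch(event.get("key", ""), self.key_pattern))


class Broker:
    """Toy in-memory stand-in for an event broker."""

    def __init__(self) -> None:
        self.triggers: list[Trigger] = []

    def register(self, trigger: Trigger) -> None:
        self.triggers.append(trigger)

    def publish(self, event: Event) -> int:
        """Route the event to every matching trigger; return the match count."""
        matched = [t for t in self.triggers if t.matches(event)]
        for trigger in matched:
            trigger.function(event)
        return len(matched)


seen: list[str] = []
broker = Broker()
broker.register(Trigger("ObjectCreated", "*.jpg", lambda e: seen.append(e["key"])))

broker.publish({"type": "ObjectCreated", "key": "photos/cat.jpg"})   # matches
broker.publish({"type": "ObjectCreated", "key": "docs/report.pdf"})  # ignored
print(seen)
```

The producer only publishes events and the function only receives them; the trigger is the single place where the two are connected, which is the decoupling described above.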

Two Paths to Building Automation

The VAST DataEngine is designed for both developers who want full control and analysts who prefer a low-code interface.

Code-First Workflow: Bring Your Own Functions

For developers, data scientists, and MLOps engineers, the DataEngine supports custom, user-defined functions written in Python. Any code or library can be packaged, deployed, and triggered natively within the platform.

For developers:

Code in the IDE: Write your custom Python function and dependencies locally, using your preferred development environment.

Containerize & Push: Package the code and its dependencies into a standard container image (e.g., Docker) and push it to your private registry.

Register the Function: Register the container image in the VAST platform’s management interface, making the function cluster-ready.

Configure Data Event Trigger: Define the specific data event (e.g., a new file write or an object update) that will automatically initiate the function.

Execute In-Situ: When the trigger fires, the VAST DataEngine runs the function container against the new data in place, without moving the data, automating the entire transformation or inferencing pipeline.

This process makes it simple to extend the VAST AI OS with custom logic, from enrichment scripts to domain-specific AI models.
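As a rough idea of what the function written in the first step might look like, the sketch below defines a small enrichment handler. The `handler(event)` entry point and the event fields (`bucket`, `key`, `size_bytes`) are hypothetical; the actual function signature and event payload expected by the DataEngine are not described in this article.

```python
import json
import os
from datetime import datetime, timezone


def handler(event: dict) -> dict:
    """Hypothetical entry point: takes one data event, returns an enrichment record."""
    key = event["key"]
    # Normalize the file extension so downstream consumers can filter on it.
    extension = os.path.splitext(key)[1].lower().lstrip(".")
    return {
        "bucket": event["bucket"],
        "key": key,
        "extension": extension,
        "size_mb": round(event["size_bytes"] / (1024 * 1024), 3),
        "processed_at": datetime.now(timezone.utc).isoformat(),
    }


# Local smoke test with a made-up event, before containerizing the function.
sample = {"bucket": "raw-images", "key": "uploads/Scan_001.JPG", "size_bytes": 2_621_440}
result = handler(sample)
print(json.dumps(result, indent=2))
```

Keeping the handler a plain function of its input event makes it easy to test locally before it is packaged into a container image and registered.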

Low-Code Workflow

The VAST DataEngine also supports a low-code approach, making it possible to build event-driven pipelines without writing Python code.

For analysts:

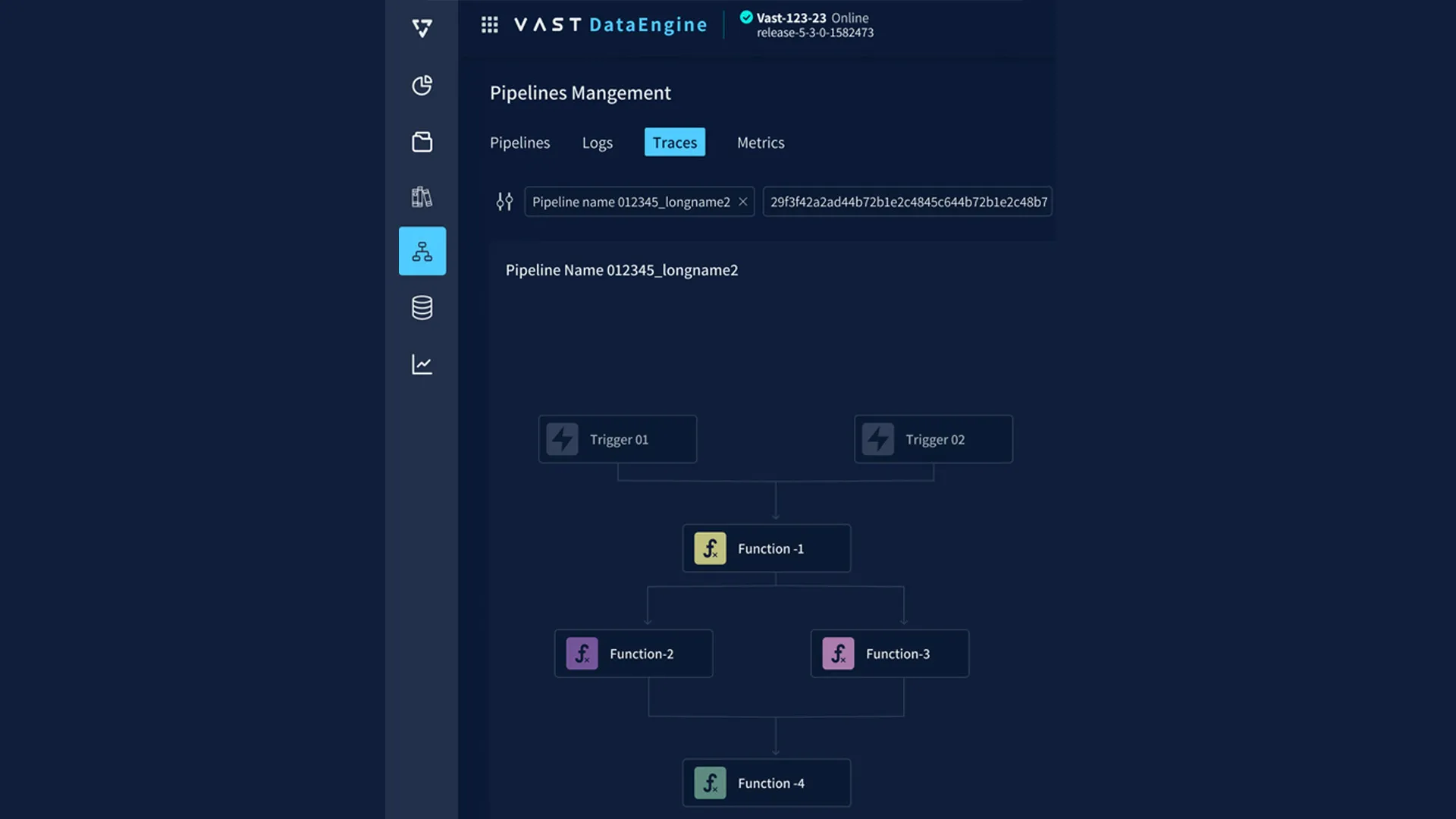

Use VAST DataEngine’s drag-and-drop UI to connect triggers and functions

Build pipelines visually without writing code

Manage deployments, observability, and logs directly from the UI

Both approaches feed into the same execution environment, with unified monitoring and governance.

Example: Building a Data Pipeline

To illustrate how triggers and functions work in practice, consider a pipeline for image processing and enrichment.

Trigger: A .jpg file is uploaded into a VAST DataStore S3 bucket. The Event Broker detects the new object event.

Function 1 (Resize): A serverless Python function resizes the image (using Pillow, for example) and emits a metadata event describing the resized image.

Function 2 (Inference): A second function consumes that metadata event, classifies the image with an inference model (e.g., one served through NVIDIA NIM), and tags it with relevant keywords.

Storage: The resized image is written back to the VAST DataStore, and the enriched metadata is written to the VAST DataBase. Vector embeddings can also be generated and stored in the VAST DataBase for semantic search.

Observability: Logs, metrics, and execution traces are automatically captured in the VAST UI for debugging and performance monitoring.

This workflow demonstrates the efficiency of serverless design: functions execute only when triggered, scale independently, and pass events seamlessly through the broker without manual orchestration.
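The chaining of the two functions can be sketched with a simple in-memory event queue. This is an illustration only: the event type names, the `ROUTES` table, and the 512-pixel target size are assumptions, the resize step shows just the aspect-ratio arithmetic rather than a real Pillow call, and the classifier is a stub standing in for a real inference model.

```python
from collections import deque

MAX_SIDE = 512  # assumed target size for the longest edge


def resize_function(event: dict) -> dict:
    """Function 1: compute the resized dimensions and emit a metadata event.

    A real function would open the object and call an imaging library such as
    Pillow; only the aspect-ratio arithmetic is shown here.
    """
    width, height = event["width"], event["height"]
    scale = min(1.0, MAX_SIDE / max(width, height))  # never upscale
    return {
        "type": "ImageResized",
        "key": event["key"],
        "width": round(width * scale),
        "height": round(height * scale),
    }


def inference_function(event: dict) -> dict:
    """Function 2: tag the image. The classifier is a stub, not a real model."""
    orientation = "landscape" if event["width"] >= event["height"] else "portrait"
    return {"type": "ImageTagged", "key": event["key"], "tags": [orientation]}


# Route each event type to the function that consumes it (hypothetical names).
ROUTES = {"ObjectCreated": resize_function, "ImageResized": inference_function}


def run_pipeline(first_event: dict) -> list[dict]:
    """Drain a queue of events, feeding each function's output back in."""
    queue, history = deque([first_event]), []
    while queue:
        event = queue.popleft()
        history.append(event)
        consumer = ROUTES.get(event["type"])
        if consumer is not None:
            queue.append(consumer(event))
    return history


events = run_pipeline(
    {"type": "ObjectCreated", "key": "uploads/cat.jpg", "width": 2048, "height": 1024}
)
for e in events:
    print(e["type"], e.get("tags", ""))
```

Neither function calls the other directly. Each one only consumes an event and emits a new one, so a stage can be added or replaced by changing the routing rather than the function code.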

Technical Advantages of VAST Serverless Functions

Native Integration with the Data Platform: Functions run where the data lives. There’s no need to ETL events into an external compute environment or manage sidecar services for enrichment.

Unified Governance: All triggers, functions, and pipelines inherit the platform’s governance model. Access controls, entitlements, and audit logs apply consistently across raw objects, metadata, vectors, and results.

Scalability and Elasticity: Because compute nodes (CNodes) in the Disaggregated, Shared-Everything (DASE) architecture are stateless, functions can scale elastically. Each new node can immediately consume events and execute functions without rebalancing or sharding.

Event-Driven Design: Any event, such as a file creation, metadata change, or external system signal, can be used to trigger a pipeline. The system supports both high-frequency streams (millions of events per second) and low-volume scheduled jobs.

Code-First and Low-Code Flexibility: The same runtime supports both Python-based workflows and visual low-code pipelines, ensuring broad accessibility across technical teams.

The Serverless Architectural Advantage

Serverless does not mean there are no servers; it is an architectural paradigm that moves the burden of application deployment from the development team to the platform.

In this programming model, developers focus exclusively on the function’s code (the business logic), while the underlying platform dynamically manages the execution environment. The model abstracts away the complexity of provisioning, scaling, patching, and maintaining servers or container orchestration systems. By shifting this operational burden to the platform, serverless lets teams deliver new features and automate entire pipelines faster, with far less infrastructure management overhead.

Build Where Your Data Lives

Serverless functions and triggers extend the VAST DataEngine into a complete automation fabric. By combining an event broker, serverless execution, and unified governance, VAST turns raw events into governed, actionable outcomes in real time.

For developers, this means writing Python functions and deploying pipelines directly where the data lives. For analysts and operators, it means building low-code automations without touching infrastructure. For enterprises, it delivers a resilient and scalable foundation to transform data workflows, from ingestion and enrichment to AI-powered pipelines.

The result: a unified, event-driven programming model that makes enterprise data active, automated, and AI-ready by default.
