There’s little debate these days that the interface question is already settled, even if most people are still framing it as a storage decision.

AWS S3 has graduated from one of many options to the de facto standard, the default way apps expect to interact with data. Whether systems run in the cloud or on prem, the access model is converging on the same pattern because developers, tools, and services are already built around S3 as the interface.

What’s less clear is whether the systems underneath can actually keep up. The model works cleanly at small scale, but as workloads become continuous, distributed, and shared across teams, the gap between the API and the infrastructure starts to show.

Alon Horev, CTO at VAST, and his colleague Scott Howard, Field CTO and S3 specialist at VAST, traced S3's journey from one storage choice among many to de facto standard in front of the large crowd gathered at the recent VAST FWD user conference.

“The simple fact is, every single one of us is using S3 almost every single minute of every single day, on your phone, on your computer and everywhere,” Scott reminded the crowd. His point was that S3 is no longer just a backend for storing large datasets. As it has scaled, it has become part of how modern systems operate at the most basic level, sitting behind everything from web apps and content delivery to data pipelines and, increasingly, complex AI workflows.

Once you see it that way, he says, the conversation shifts. The real question these days is whether the systems underneath can actually support how widely S3 is being used.

If the interface has already been decided, the next question is why this one held when there were many alternatives for a long time. The answer comes down to how much complexity it removes from building large systems. “Basically building massively scalable systems is hard. And presenting primitives that are simpler, like saying, you know, you're going to write objects and there's no renames, you can't mutate them… Everything is immutable. It just makes life easier for architects and engineers to build such systems,” Alon Horev said.

The key is not immutability as a conceptual benefit so much as everything it removes. If data is written once and never changed, you avoid locking, partial updates, and the messy coordination problems that make distributed systems a bear to run.

That same simplicity carries through to how S3 is accessed. Because it’s built on HTTP, anything that can speak HTTP can interact with it, with no need for specialized clients or mounted file systems. A bonus is that security is handled with the same mechanisms that already exist for web traffic, with authentication and encryption applied to each request.
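As a rough illustration of authentication travelling with the request, the sketch below derives a signing key the way AWS Signature Version 4 chains HMAC-SHA256 over the date, region, and service, using only the Python standard library. The secret, date, region, and string to sign are made-up placeholders, and the canonical-request and string-to-sign steps of a full signature are omitted.

```python
import hashlib
import hmac


def _hmac_sha256(key: bytes, msg: str) -> bytes:
    """One HMAC-SHA256 step, returning raw bytes."""
    return hmac.new(key, msg.encode("utf-8"), hashlib.sha256).digest()


def derive_signing_key(secret_key: str, date: str, region: str, service: str = "s3") -> bytes:
    """Derive a SigV4-style signing key scoped to a date, region, and service."""
    k_date = _hmac_sha256(("AWS4" + secret_key).encode("utf-8"), date)
    k_region = _hmac_sha256(k_date, region)
    k_service = _hmac_sha256(k_region, service)
    return _hmac_sha256(k_service, "aws4_request")


def sign(signing_key: bytes, string_to_sign: str) -> str:
    """Hex signature that a client would place in the Authorization header."""
    return hmac.new(signing_key, string_to_sign.encode("utf-8"), hashlib.sha256).hexdigest()


# Placeholder inputs, not real credentials.
key = derive_signing_key("EXAMPLE-SECRET", "20240101", "us-east-1")
signature = sign(key, "example string to sign")
```

Because the signature is computed per request from a shared secret, the server can verify each call on its own without a mounted session or a long-lived connection.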

Alon describes this as an “internet first” model, where access and control are exposed directly to the user instead of requiring administrative setup. Developers can create and access data using the same patterns they use for APIs, which lowers the barrier to adoption and makes the model consistent across environments.

What matters just as much is what goes away. File systems assume you know where the data lives, that you can mount it, and that permissions are centrally managed. S3 removes all of that. Access is a single request, and permissions are handled through policies instead of filesystem rules. That simpler model is what let S3 scale across so many use cases and become the default way systems interact with data.

Atomic by Design, Not by Convention

Once you see why S3 is simpler, the next step is what that simplicity enforces, which really marks where it behaves very differently from file systems. “The object either exists or it doesn't, and it exists in its final state or not at all,” Scott explains. That means no partial reads or half-written data, and importantly, no need to coordinate access just to keep things consistent. What you read is always complete.

File systems work the opposite way. Writes happen in pieces, and those pieces are visible as they happen. A process can open a file mid-write and only see part of it. That is why file systems rely on locking, temp files, and careful sequencing. S3 removes that by making writes atomic and objects immutable. If something changes, it is replaced, not edited in place, which makes behavior more predictable when multiple processes are involved.
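To make the contrast concrete, here is a minimal sketch of the temp-file-and-rename sequencing that file-based applications typically use to keep readers from seeing a half-written file. The function name is illustrative. With an object store, a single PUT gives the same all-or-nothing visibility without this choreography.

```python
import os
import tempfile


def atomic_write(path: str, data: bytes) -> None:
    """Write data so readers see either the old file or the complete new one."""
    directory = os.path.dirname(os.path.abspath(path))
    # The temp file must live on the same filesystem for the rename to be atomic.
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    try:
        with os.fdopen(fd, "wb") as tmp:
            tmp.write(data)
            tmp.flush()
            os.fsync(tmp.fileno())  # push bytes to stable storage before publishing
        os.replace(tmp_path, path)  # swap the finished file into place in one step
    except BaseException:
        # Clean up the partial temp file if anything failed before the rename.
        if os.path.exists(tmp_path):
            os.unlink(tmp_path)
        raise
```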

This matters more as workloads become continuous. When many processes are reading and writing at the same time, consistency cannot be handled cleanly at the application level. It has to come from the system. The atomic model does that by default, ensuring every read is against a complete object, which is exactly what modern distributed workloads need.

The Gap Between the API and the System

S3 looks clean at the interface, but problems show up underneath it.

Alon makes the key distinction. “I think it's really important to make a distinction between a protocol and an implementation, right? As Scott was saying, S3 is a protocol based on HTTP. AWS S3 is an implementation, right?” S3 defines how you access data, not how it is stored or handled at scale, and that difference matters.

The issues appear as workloads grow. Small objects add overhead because every request carries authentication and headers, and listing large numbers of objects requires a ton of API calls. Some systems make this worse with centralized metadata that becomes a bottleneck or with inefficient storage layouts that waste capacity. You don’t see any of this at the API level, but it defines how the system actually performs.
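A little arithmetic shows how quickly these costs add up. The sketch below uses the 1,000-key page limit of the S3 ListObjectsV2 call. The per-request overhead figure is an assumed round number for illustration, not a measurement.

```python
LIST_PAGE_SIZE = 1000  # ListObjectsV2 returns at most 1,000 keys per response


def list_calls_needed(object_count: int, page_size: int = LIST_PAGE_SIZE) -> int:
    """Number of paginated list requests needed to enumerate a bucket."""
    return max(1, (object_count + page_size - 1) // page_size)


def overhead_fraction(payload_bytes: int, per_request_overhead_bytes: int) -> float:
    """Share of bytes on the wire that is request overhead rather than payload."""
    return per_request_overhead_bytes / (payload_bytes + per_request_overhead_bytes)


ASSUMED_OVERHEAD = 1024  # hypothetical headers + signature size, in bytes

calls = list_calls_needed(1_000_000_000)                      # 1,000,000 list calls
small = overhead_fraction(4 * 1024, ASSUMED_OVERHEAD)         # 4 KiB object: 0.2
large = overhead_fraction(64 * 1024 * 1024, ASSUMED_OVERHEAD)  # 64 MiB object: negligible
```

Under these assumptions, a billion objects take a million list requests to enumerate, and a fifth of the traffic for 4 KiB objects is overhead, while the same overhead disappears into the noise for large objects.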

The result is a gap between what S3 promises and what many systems can actually deliver when scaled up.

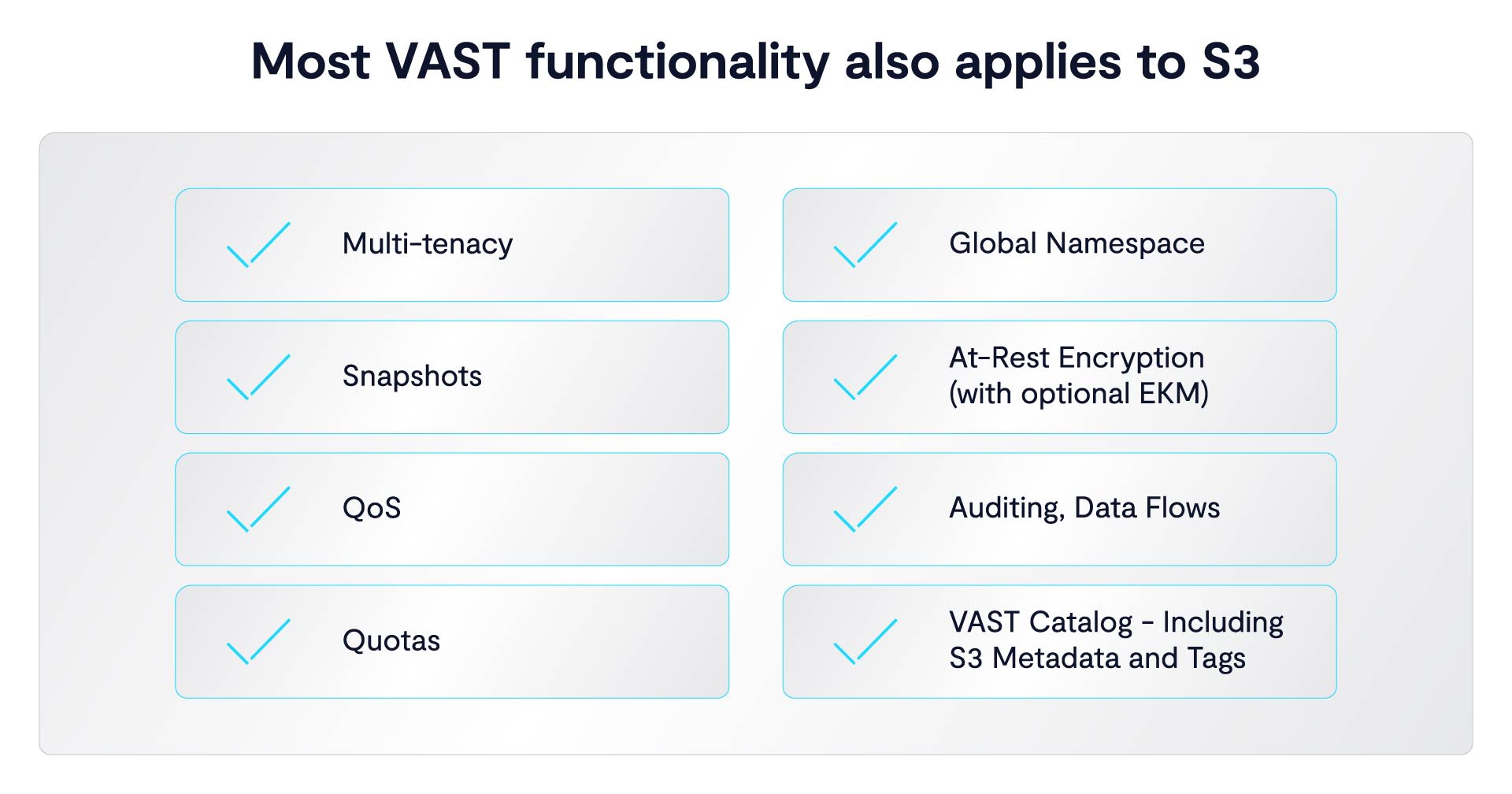

Once you see the gap between the S3 API and the systems underneath it, the next step is how VAST approaches it differently. Instead of treating protocols as separate storage paths, it removes that distinction.

“Internally within VAST we don't store things as S3 objects. We don't store things as NFS files. We store them as what we call an element, and then that element is really a superset of all of the protocols,” Scott said, driving home that the protocols aren’t competing storage choices but different ways to access the same data.

That changes how systems are used because it means protocol becomes an access decision, not a storage decision. Data can be written one way and read another without copying or moving it. A legacy system can write via SMB, compute can run over NFS, and applications can use S3, all against the same data. The point is, you’re no longer locked into one model.
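The idea of one stored item with several access views can be sketched with a toy model. This is purely illustrative and says nothing about how VAST implements elements. The class, method names, and path-to-bucket mapping are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Element:
    """One stored item; attributes could hold tags, mode bits, and so on."""
    data: bytes
    attributes: dict = field(default_factory=dict)


class ToyMultiProtocolStore:
    """Hypothetical store with a single namespace and two access views."""

    def __init__(self) -> None:
        self._elements = {}

    def write_file(self, path: str, data: bytes) -> None:
        """File-style view: a path such as '/media/raw/clip.mp4'."""
        self._elements[path.strip("/")] = Element(data)

    def get_object(self, bucket: str, key: str) -> bytes:
        """Object-style view: bucket and key resolve into the same namespace."""
        return self._elements[f"{bucket}/{key}"].data


store = ToyMultiProtocolStore()
store.write_file("/media/raw/clip.mp4", b"frame-bytes")
same_bytes = store.get_object("media", "raw/clip.mp4")  # no copy, same element
```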

If apps expect S3, the system has to support it without breaking other workloads. A multi-protocol model does that by separating how data is stored from how it’s accessed, so S3 can be the common interface while other access methods still work where needed.

Architecture Decides Whether S3 Works

Once protocols are no longer the issue, performance comes down to system design.

“The reality is that we get customers to help us understand a bit about the workloads and what they're trying to solve for but in general, there are very high performance workloads running on VAST S3 and some famous models are being trained on S3 because some of the model builders that we work with were born in the cloud,” Alon said.

The point is simple. S3 is not the limitation; the architecture is. And there are some pretty obvious places where systems break.

“I heard from a bunch of customers that some object implementations are very bad at listing metadata, they’re basically listing buckets. Why is that? Maybe there is a single metadata server that can become a bottleneck for the entire cluster. VAST shards or partitions metadata, distributes it across all the SSDs,” Alon said.

At scale, metadata access matters. If it’s centralized, it becomes a bottleneck; if it’s distributed, the system scales more cleanly, even though the API looks the same.
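The difference can be illustrated with a small sketch that assigns object keys to metadata shards by hash. This is a generic hash-partitioning example, not VAST's actual scheme. The key names and shard count are made up.

```python
import hashlib
from collections import Counter


def shard_for_key(key: str, shard_count: int) -> int:
    """Map an object key to a metadata shard using a stable hash."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count


keys = [f"dataset/part-{i:06d}.parquet" for i in range(10_000)]

# Centralized design: every metadata lookup lands on the same server.
centralized = Counter(0 for _ in keys)

# Sharded design: lookups spread across 16 partitions.
sharded = Counter(shard_for_key(k, 16) for k in keys)
```

Clients issue the same requests in both cases. Only the load per metadata server changes, which is why the bottleneck is invisible at the API level.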

There are still real costs in the protocol, he adds. Small objects carry overhead because of HTTP and authentication. But most performance issues people blame on S3 come from system design. Centralized metadata, inefficient layouts, and lack of parallelism are architectural choices, and whether S3 works at scale depends on how the system underneath is built.

From Storage to Coordination

Once the system underneath can support S3 at scale, S3 starts to do more than store and retrieve data. It becomes a way to describe what the data is and what should happen to it. Objects can include metadata and tags, which are simple key-value pairs.

That information can drive access and behavior because now permissions are not fixed rules but can depend on tags, where a request comes from, or how the object is used. For example, a user can be blocked from accessing an object until it’s been processed, with that rule enforced automatically through tags. It looks like access control from the outside, but it is also a way to manage workflows.
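The "blocked until processed" rule might look something like the sketch below, written in the AWS bucket-policy grammar with the `s3:ExistingObjectTag/<key>` condition key. The bucket name, tag key, and tag value are hypothetical, and whether a given S3-compatible system honors this condition is an assumption to check against its documentation. The helper function only mimics the condition locally and is not a real policy engine.

```python
import json

# Hypothetical policy: deny reads unless the object is tagged status=processed.
POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "DenyReadUntilProcessed",
            "Effect": "Deny",
            "Principal": "*",
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::example-bucket/*",
            "Condition": {
                "StringNotEquals": {"s3:ExistingObjectTag/status": "processed"}
            },
        }
    ],
}


def read_denied(object_tags: dict) -> bool:
    """Local stand-in for the condition above: deny when the tag is missing or different."""
    required = POLICY["Statement"][0]["Condition"]["StringNotEquals"]
    return object_tags.get("status") != required["s3:ExistingObjectTag/status"]


policy_document = json.dumps(POLICY, indent=2)  # the form a policy API would accept
```

A processing job that finishes its work and sets the tag effectively "releases" the object, which is how an access rule doubles as a workflow gate.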

When it comes to events, the system can generate a notification when an object is uploaded or changed and trigger processing immediately. “We can generate an event when you upload or modify an object,” Scott explains. At that point, S3 is doing way more than just storing data because it’s taking a central role in helping coordinate how data moves and gets processed across the system.
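On the receiving side, an event consumer can be quite small. The sketch below assumes the notification follows the AWS S3 event message layout (a `Records` list with `eventName` and nested `s3.bucket.name` / `s3.object.key` fields, with keys URL-encoded). Other S3-compatible systems may use a different shape, and the sample event is invented.

```python
from urllib.parse import unquote_plus


def objects_to_process(event: dict) -> list:
    """Return (bucket, key) pairs for newly created objects in a notification."""
    targets = []
    for record in event.get("Records", []):
        # Only react to uploads, not deletions or other event types.
        if not record.get("eventName", "").startswith("ObjectCreated:"):
            continue
        bucket = record["s3"]["bucket"]["name"]
        key = unquote_plus(record["s3"]["object"]["key"])  # keys arrive URL-encoded
        targets.append((bucket, key))
    return targets


# Invented sample event for illustration.
sample_event = {
    "Records": [
        {
            "eventName": "ObjectCreated:Put",
            "s3": {"bucket": {"name": "ingest"}, "object": {"key": "videos/clip+01.mp4"}},
        },
        {
            "eventName": "ObjectRemoved:Delete",
            "s3": {"bucket": {"name": "ingest"}, "object": {"key": "videos/old.mp4"}},
        },
    ]
}

pending = objects_to_process(sample_event)  # [("ingest", "videos/clip 01.mp4")]
```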

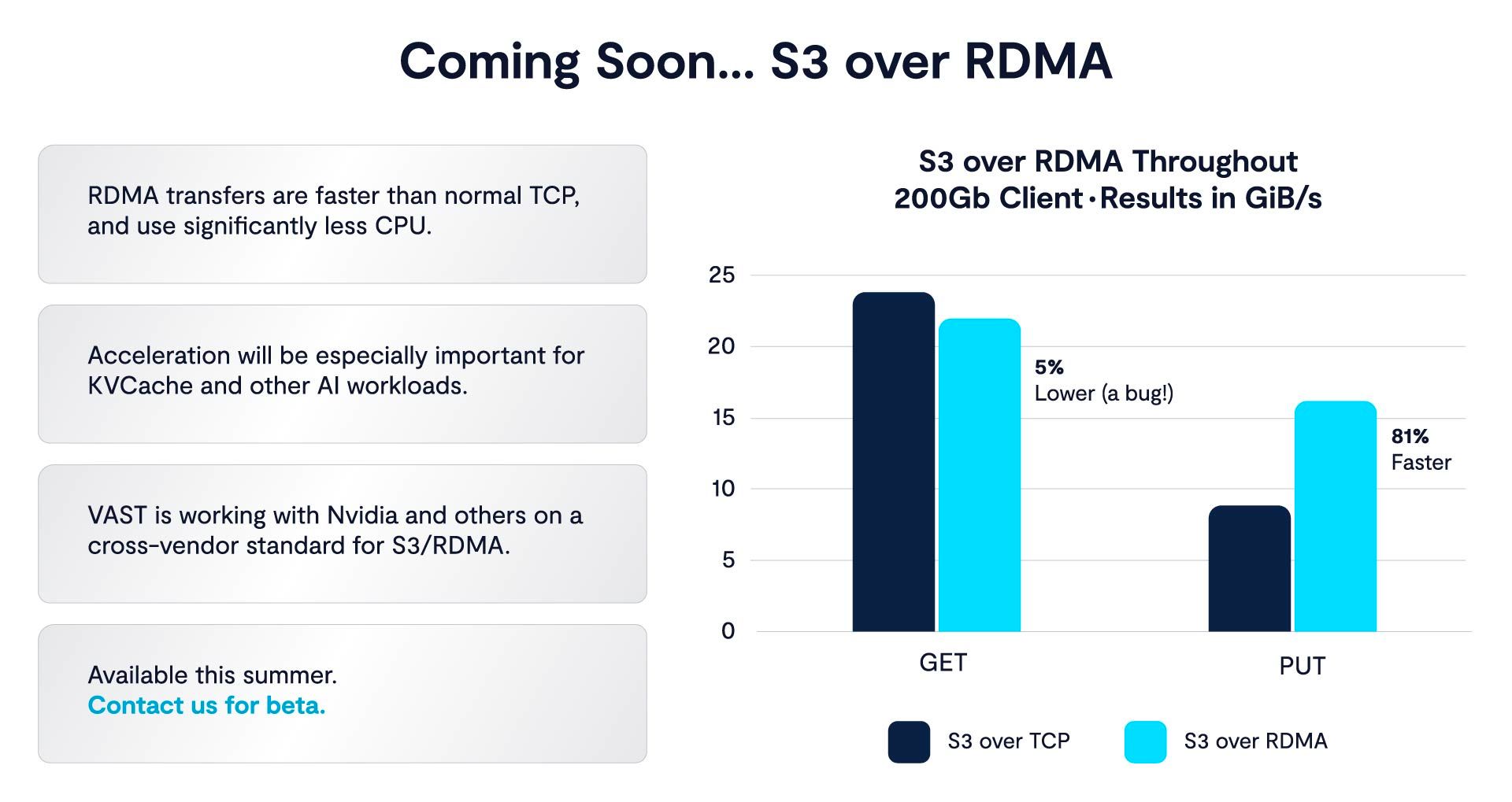

They also touch briefly on S3 over RDMA, which is about making S3 more efficient for high-performance workloads. Instead of running purely over standard TCP and HTTP, RDMA reduces CPU overhead and speeds up data movement. The point is not just the feature itself, but what it signals. S3 is being pushed into workloads that used to require file or block storage, especially in AI and HPC, and the system underneath is evolving to keep up.

AI Workloads Force the System to Hold Together

By this point, the pattern is clear. S3 is a simple, consistent way to access data, and with the right architecture it can scale. The real pressure comes from the workloads, especially AI. Scott points to where S3 is being used. “We also see a lot of customers using S3 for training, and then a lot of them's favorite one is KV cache.” These are active workloads that depend on constant access to shared data.

The fit comes from how the data is handled. In the video example, data is ingested as a stream, split into chunks, and processed step by step. “With video you're taking in a video stream, you're splicing it up into chunks, and S3 is just a perfect protocol for doing that.” This same pattern shows up across AI. Data is continuously created, read, processed, and reused, and S3 works well because it handles discrete objects cleanly and makes them available right away.
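The stream-to-objects pattern can be sketched in a few lines. This is a simplification that splits on a fixed byte count, whereas real video pipelines usually cut on time or keyframe boundaries. The key naming scheme is made up, and each yielded pair stands in for one object upload.

```python
import io
from typing import BinaryIO, Iterator, Tuple


def chunk_stream(stream: BinaryIO, prefix: str, chunk_size: int) -> Iterator[Tuple[str, bytes]]:
    """Yield (object_key, chunk_bytes) pairs until the stream is exhausted."""
    index = 0
    while True:
        chunk = stream.read(chunk_size)
        if not chunk:
            break
        # Zero-padded index keeps keys in order when listed lexicographically.
        yield f"{prefix}/chunk-{index:08d}", chunk
        index += 1


# Ten bytes of stand-in data split into 4-byte chunks gives three objects.
chunks = list(chunk_stream(io.BytesIO(b"a" * 10), "camera-1/2024-01-01", 4))
```

Each chunk is complete and immutable once written, so a downstream step can start on the first chunk while later ones are still arriving.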

S3 gives every part of the system a common way to access data, from ingestion to training to serving results. But these workloads also expose the limits of systems that cannot keep up. High request rates, large object counts, and constant reuse all put pressure on performance. At that point, the interface is not the issue. The system underneath it is, and that is the layer VAST is focused on.