AI inference is running into a new constraint, and it is not always compute. As LLMs move into production, workloads extend far beyond simple prompt-and-response interactions. Conversations unfold across multiple turns between humans and agents, continuously accumulating context along the way.

All that context lives in the model’s key-value (KV) cache. As context windows grow, recomputing that cache becomes expensive, because GPUs spend valuable cycles regenerating what they’ve already seen.

NVIDIA Dynamo addresses the orchestration challenges of serving these workloads at scale. By separating prefill and decode, dynamically allocating GPU resources, routing requests based on KV cache locality, and reusing stored KV cache context, Dynamo maximizes performance across distributed inference clusters. Combined with VAST Data’s efficient KV cache storage and retrieval, this means systems can reuse context instead of recomputing it, improving latency and GPU utilization. VAST has been collaborating with the NVIDIA Dynamo team for some time and is excited about the general availability of Dynamo 1.0.

NVIDIA announced the following KV Block Manager (KVBM) features in its tech blog:

Object storage support: S3- and Azure-style blob APIs

Global KV event emission: Emits events when KV blocks move across storage tiers, enabling cluster-wide tracking

Pip-installable module: KVBM can be installed directly into inference engines without the full Dynamo stack

Orchestrating Inference at Scale

As inference scales to many thousands of GPUs (and millions of requests), the challenge shifts from compute to coordination. Each request hits the system with different sequence lengths, context sizes, and latency requirements, and the system has to constantly determine how those requests should be handled across the cluster.

At scale, this becomes an endless series of real-time decisions. GPU memory capacity has to be allocated dynamically to keep resources fully fed without creating bottlenecks. Requests also have to be routed to the nodes that can serve them most efficiently, ideally those where relevant KV cache state already exists.

The two phases of inference, prefill and decode, need to be scheduled across the cluster based on their very different resource demands. Prefill is compute-intensive and benefits from high-throughput GPU execution, while decode is typically memory- and bandwidth-bound. And all the while, KV cache blocks need to be managed and placed so shared context can be reused any time requests overlap.

By disaggregating prefill and decode, Dynamo lets each phase scale independently on the GPU resources best suited to it. KV-aware routing directs requests to GPUs that already hold the needed context, avoiding redundant recomputation. At the same time, Dynamo dynamically adjusts GPU allocation across the cluster as traffic patterns change.
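KV-aware routing can be illustrated with a small Python sketch. This is not Dynamo’s router implementation. It assumes that prompts are split into fixed-size token blocks identified by chained hashes, so that a block hash identifies its entire prefix. It also assumes the router knows which block hashes each worker holds, and that a simple load penalty keeps one worker from absorbing all traffic. `block_hashes`, `prefix_overlap`, `pick_worker`, `BLOCK_SIZE`, and `load_weight` are hypothetical names.

```python
import hashlib
from typing import Dict, List, Set

BLOCK_SIZE = 4  # tokens per KV block (hypothetical; real deployments use larger blocks)


def block_hashes(tokens: List[int], block_size: int = BLOCK_SIZE) -> List[str]:
    """Chain-hash each full block so that a hash identifies the entire prefix up to it."""
    hashes: List[str] = []
    parent = ""
    full = len(tokens) - len(tokens) % block_size  # ignore a trailing partial block
    for start in range(0, full, block_size):
        block = tokens[start:start + block_size]
        parent = hashlib.sha256((parent + repr(block)).encode()).hexdigest()
        hashes.append(parent)
    return hashes


def prefix_overlap(hashes: List[str], cached: Set[str]) -> int:
    """Count leading blocks a worker already holds; reuse stops at the first miss."""
    count = 0
    for h in hashes:
        if h not in cached:
            break
        count += 1
    return count


def pick_worker(
    tokens: List[int],
    worker_cache: Dict[str, Set[str]],
    worker_load: Dict[str, int],
    load_weight: float = 0.5,
) -> str:
    """Pick the worker with the best (cached prefix blocks - load_weight * load) score."""
    hashes = block_hashes(tokens)

    def score(worker: str) -> float:
        return prefix_overlap(hashes, worker_cache[worker]) - load_weight * worker_load[worker]

    return max(worker_cache, key=score)


# Usage: worker "a" already holds the first two of three prompt blocks.
prompt = list(range(12))
cached_on_a = set(block_hashes(prompt)[:2])
print(pick_worker(prompt, {"a": cached_on_a, "b": set()}, {"a": 1, "b": 0}))  # prints a
```

The sketch weighs cached overlap against load so that a worker holding a popular prefix does not attract every request. The 0.5 weight is arbitrary here.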

Dynamo also tracks KV block lifecycle events as blocks move across memory and storage tiers. This lets the cluster maintain a consistent view of where context lives without polling every node. By tracking when blocks are created, migrated, or evicted, Dynamo enables more efficient routing and fetching of shared context.
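The following is a minimal sketch of how lifecycle events can keep a cluster-wide block index current without polling. The event schema (`stored` / `removed`, with node and tier) and the names `KVEvent` and `BlockIndex` are hypothetical and do not reflect Dynamo’s actual event format. A migration is modeled as a `stored` event at the destination followed by a `removed` event at the source.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Set, Tuple

Location = Tuple[str, str]  # (node, tier), e.g. ("node-1", "G3")


@dataclass(frozen=True)
class KVEvent:
    kind: str        # "stored" or "removed" (hypothetical schema)
    block_hash: str
    node: str
    tier: str


class BlockIndex:
    """Cluster-wide map of block hash -> locations, updated only by applying events."""

    def __init__(self) -> None:
        self._where: Dict[str, Set[Location]] = defaultdict(set)

    def apply(self, event: KVEvent) -> None:
        loc = (event.node, event.tier)
        if event.kind == "stored":
            self._where[event.block_hash].add(loc)
        elif event.kind == "removed":
            self._where[event.block_hash].discard(loc)
            if not self._where[event.block_hash]:
                del self._where[event.block_hash]  # no copies left anywhere
        else:
            raise ValueError(f"unknown event kind: {event.kind!r}")

    def locations(self, block_hash: str) -> Set[Location]:
        """Return a copy so callers cannot mutate the index."""
        return set(self._where.get(block_hash, ()))


# Usage: a block is offloaded from HBM (G1) to storage (G3), then evicted from HBM.
index = BlockIndex()
index.apply(KVEvent("stored", "h1", "node-1", "G1"))
index.apply(KVEvent("stored", "h1", "node-1", "G3"))
index.apply(KVEvent("removed", "h1", "node-1", "G1"))
print(index.locations("h1"))  # prints {('node-1', 'G3')}
```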

The result of all this is an inference architecture where GPU clusters behave as coordinated systems rather than isolated nodes. Dynamo manages the flow of requests, resources, and context across the infrastructure, enabling large-scale AI services to maintain high utilization while adapting to unpredictable workloads.

In conversations with AI infrastructure teams deploying large inference clusters, a consistent pattern has emerged. Operators are concerned not only with raw model performance but also with how efficiently context can be reused across requests. Managing KV cache across GPUs and storage tiers has quickly become one of the central challenges in production inference environments.

As operators move to large-scale deployments, they are finding that high KV cache reuse does more than save costly compute, memory, and power. It also dramatically reduces latency, which is critical for agentic workflows. We see this pattern emerge as organizations transition from the proof-of-concept (POC) stage to mass-scale deployment.

Dynamo can determine where context should be reused and how requests move through the cluster, but the next challenge is ensuring that the underlying infrastructure can store and retrieve that context efficiently.

Dynamo is an open source framework with a modular design. In Dynamo 1.0, KVBM, its KV cache block manager, is a pip-installable module that can be installed directly into inference engines without the full Dynamo stack. This enables interoperability with a range of inference frameworks.

Context Memory Is the Next Bottleneck

Once orchestration is in place, another constraint rears its head. Dynamo can determine where requests should run and how context should be reused across the cluster. But the effectiveness of that reuse ultimately depends on where the context itself lives.

For modern LLM workloads, that context is stored in the model’s key-value (KV) cache. As models grow and context windows expand into the hundreds of thousands of tokens, KV cache quickly exceeds the capacity of GPU memory, especially when each GPU is serving multiple concurrent users. Context must spill beyond costly high bandwidth memory (HBM) into system memory, local storage, or shared infrastructure across the cluster.
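A back-of-the-envelope formula shows why KV cache outgrows HBM. Each token stores one key vector and one value vector per layer and per KV head. Bytes per token are therefore 2 × layers × KV heads × head dimension × bytes per element. The Python sketch below applies this formula to a Llama 3 405B-class model, the model family used in the tests described later. The model shape is assumed from the publicly released Llama 3.1 405B configuration, and the sketch also assumes a 16-bit KV cache.

```python
def kv_bytes_per_token(num_layers: int, num_kv_heads: int, head_dim: int, bytes_per_elem: int = 2) -> int:
    """KV cache bytes per token: keys and values (factor of 2) for every layer and KV head."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem


def kv_bytes(context_tokens: int, **model: int) -> int:
    """KV cache bytes for one sequence of `context_tokens` tokens."""
    return context_tokens * kv_bytes_per_token(**model)


# Assumed Llama 3.1 405B shape (public model config): 126 layers, 8 KV heads (GQA),
# head_dim 128, 16-bit (BF16/FP16) KV cache. Lower-precision KV caches shrink these numbers.
LLAMA_405B = dict(num_layers=126, num_kv_heads=8, head_dim=128, bytes_per_elem=2)

per_token = kv_bytes_per_token(**LLAMA_405B)
per_context = kv_bytes(128 * 1024, **LLAMA_405B)  # one 128K-token sequence
print(f"{per_token:,} bytes/token, {per_context / 2**30:.1f} GiB per 128K context")
# prints 516,096 bytes/token, 63.0 GiB per 128K context
```

At roughly 63 GiB for a single 128K-token sequence, a handful of concurrent long-context sessions can exhaust the HBM left over after model weights, even when that cache is spread across several GPUs.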

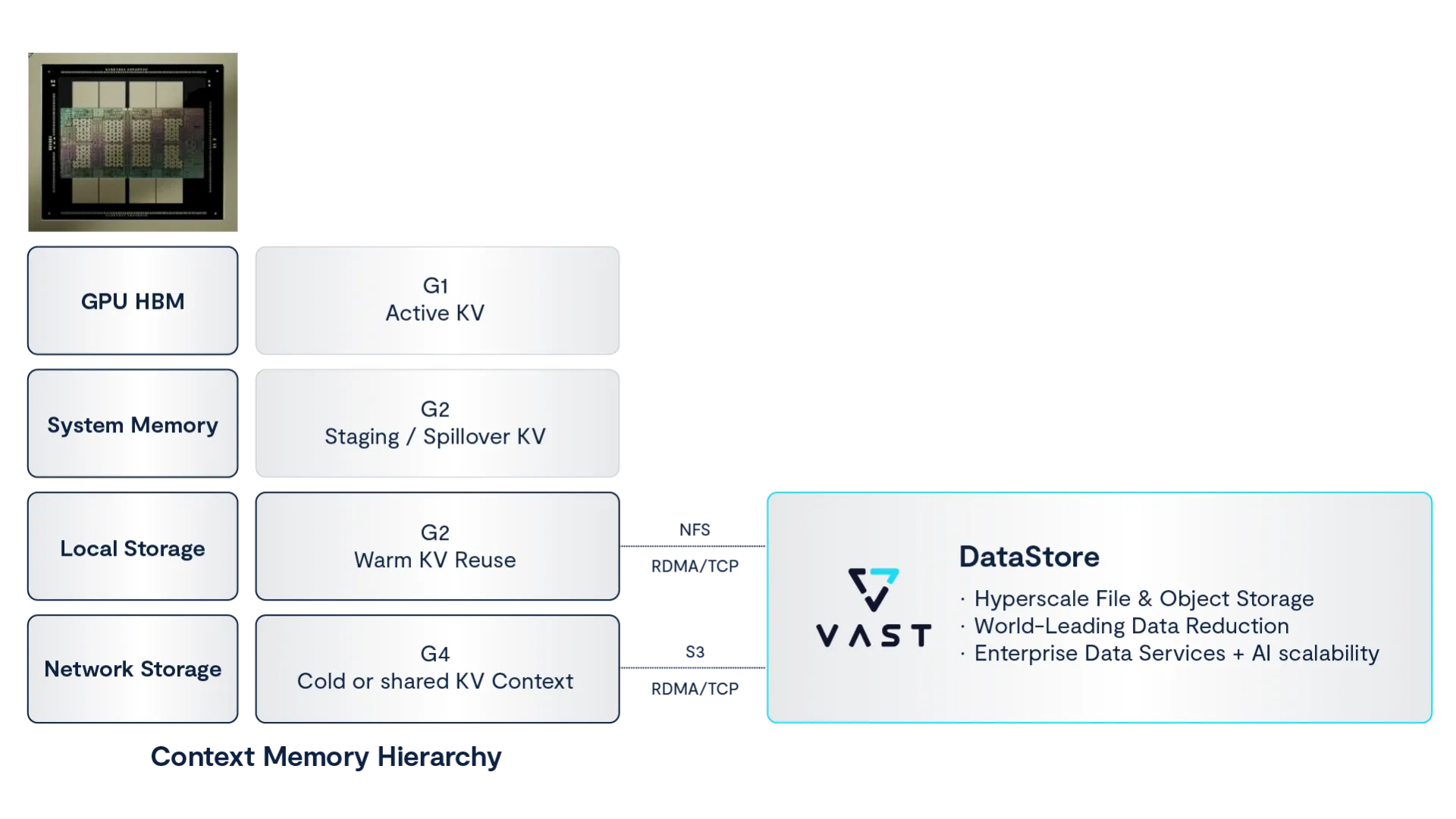

This creates a new architectural requirement: a memory hierarchy for inference context. VAST Data provides a high-performance, efficient shared storage platform for the G3 and G4 tiers of that hierarchy.

As context grows beyond what GPUs can hold locally, inference systems naturally form a memory hierarchy for KV cache. Different tiers store context based on how frequently it is accessed and how quickly it must be retrieved. In large inference clusters, this hierarchy typically includes:

G1: Active KV (GPU HBM). The working set of context currently used by the model. This tier provides the lowest latency but is limited by GPU memory capacity.

G2: Staging / Spillover KV (Host Memory). Context that no longer fits in GPU memory but may soon be reused. Host memory provides more capacity but slightly higher access latency.

G3: Warm KV Reuse (Local or Shared Storage). Previously computed context that may be reused across requests or sessions. Efficient access at this tier allows GPUs to avoid recomputing KV state.

G4: Cold or Shared Context (Cluster-Level Storage). Longer-lived context shared across nodes or sessions. This tier enables persistent reuse of inference state across distributed clusters.
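The lookup order implied by this hierarchy can be sketched as a toy, single-process Python model. The model checks the fastest tier first and promotes a hit back to G1. When a tier is full, it spills the least-recently-used block down one tier. It recomputes a block only when every tier misses. The class name, per-tier block capacities, exclusive placement, and LRU policy are all assumptions for illustration; KVBM’s actual placement and eviction policies may differ.

```python
from collections import OrderedDict
from typing import Callable, Dict, Tuple

TIERS = ["G1", "G2", "G3", "G4"]  # fastest (GPU HBM) to slowest (cluster storage)


class TieredKVCache:
    """Toy exclusive KV block cache: each block lives in exactly one tier at a time."""

    def __init__(self, capacities: Dict[str, int]) -> None:
        self.capacities = capacities  # max blocks per tier (hypothetical sizes)
        self.tiers: Dict[str, "OrderedDict[str, bytes]"] = {t: OrderedDict() for t in TIERS}

    def _put(self, tier: str, key: str, value: bytes) -> None:
        store = self.tiers[tier]
        store[key] = value
        store.move_to_end(key)  # most recently used at the end
        while len(store) > self.capacities[tier]:
            old_key, old_value = store.popitem(last=False)  # evict least recently used
            lower = TIERS.index(tier) + 1
            if lower < len(TIERS):
                self._put(TIERS[lower], old_key, old_value)  # spill one tier down
            # blocks evicted from G4 are simply dropped

    def get(self, key: str, recompute: Callable[[str], bytes]) -> Tuple[bytes, str]:
        """Return (kv_block, source), where source is the tier hit or "recompute"."""
        for tier in TIERS:
            if key in self.tiers[tier]:
                value = self.tiers[tier].pop(key)
                self._put("G1", key, value)  # promote back to HBM for active use
                return value, tier
        value = recompute(key)  # miss in every tier: pay the prefill cost again
        self._put("G1", key, value)
        return value, "recompute"


def compute(key: str) -> bytes:
    """Stand-in for prefill: produce a fake KV block for `key`."""
    return f"kv({key})".encode()


# Usage: tiny capacities force blocks to spill down the hierarchy.
cache = TieredKVCache({"G1": 1, "G2": 1, "G3": 2, "G4": 100})
for k in ["a", "b", "c"]:
    cache.get(k, compute)
print(cache.get("a", compute)[1])  # prints G3: reused from storage, not recomputed
```

Exclusive placement keeps the sketch short. A real hierarchy may keep copies of a block in several tiers at once.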

In Dynamo 1.0, the KV Block Manager (KVBM) can persist KV blocks to object storage tiers compatible with S3- or Azure-style blob APIs, allowing clusters to store and retrieve context across distributed environments. VAST Data’s announced support for S3 over RDMA provides multi-protocol access to all common data types on a unified data platform that is both simple and performant.

Active KV cache stays in GPU memory for immediate use, while warm or shared context must be stored in tiers that allow it to be retrieved quickly when requests overlap. Without an efficient way to persist and retrieve that context, the benefits of KV-aware routing diminish, and GPUs return to recomputing results that already exist elsewhere in the system.

This is where the infrastructure layer becomes critical.

By integrating Dynamo’s KV cache management with the VAST Data platform, inference clusters gain a high-performance context storage tier capable of feeding GPUs at network line speeds. Instead of recomputing context for each request, systems can retrieve previously computed KV cache blocks and reuse them across nodes.

The result is a significant shift in inference efficiency. GPUs spend less time reconstructing context and more time generating new tokens, improving latency, utilization, and overall system throughput.

Dynamo 1.0 also adds global KV event emission: Dynamo emits events whenever KV blocks move across storage tiers, enabling cluster-wide tracking.

From a user’s perspective, global KV event emission is the “invisible hand” that makes a massive, distributed AI cluster feel like a single, extremely fast computer with seemingly unlimited memory. With global KV event emission, a user’s context becomes a portable, global asset.

Measuring the Impact of KV Cache Reuse

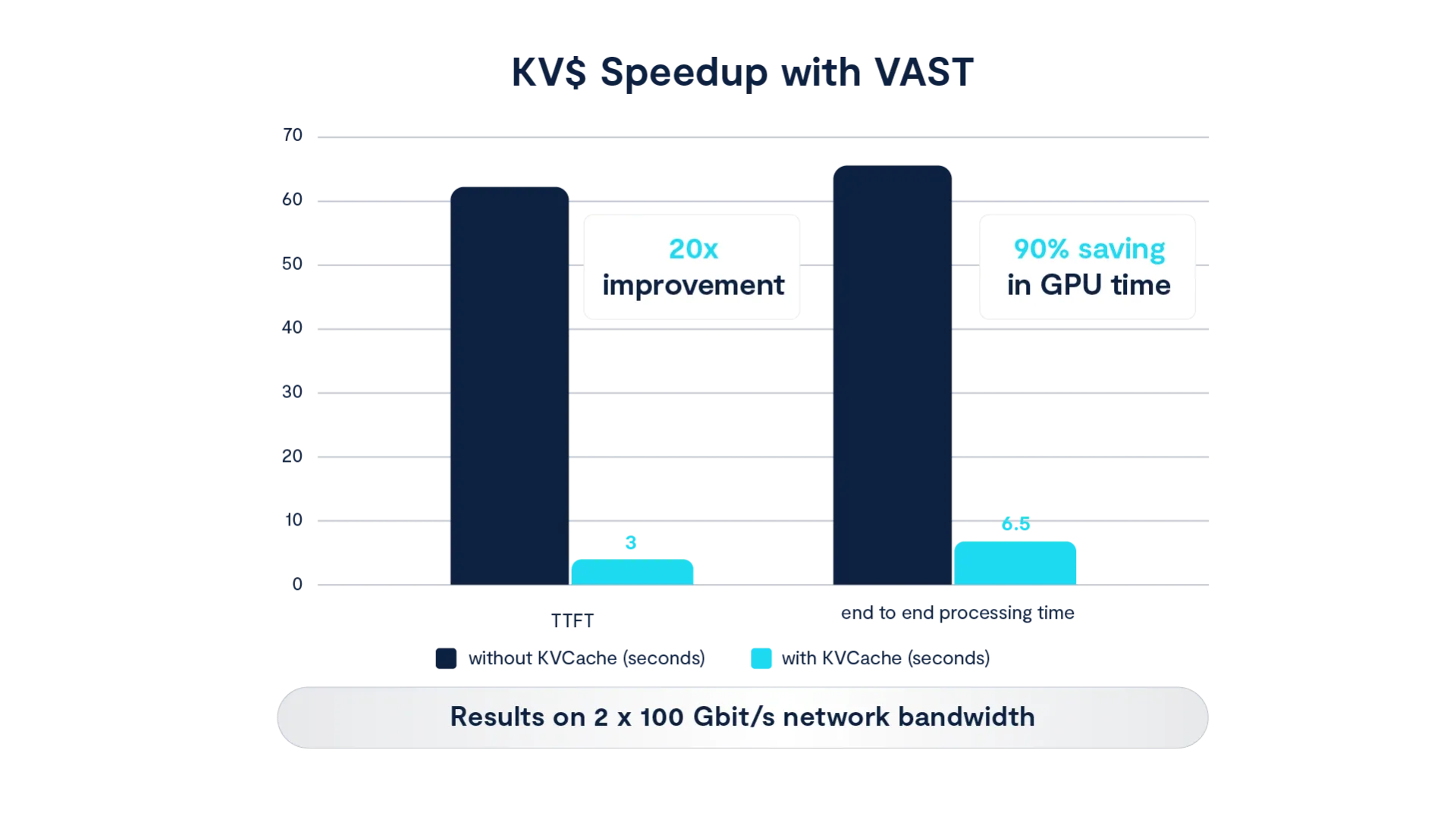

To quantify the impact of KV cache reuse in a distributed inference environment, experiments were conducted using a Llama-3 405B model with a 128K token context window running on a cluster of eight NVIDIA Hopper GPUs connected to storage through 2×100 Gb/s networking. The experiment compared two execution paths: recomputing the KV cache for each request, and retrieving previously computed KV state from shared storage.

In a typical inference pipeline, the prefill stage processes the input prompt and generates the attention keys and values that represent the model’s context. For large models and long context windows, this step can be computationally expensive. When the same context must be recomputed repeatedly, GPUs spend a significant portion of each request rebuilding state before producing the first output token.

By retrieving KV cache blocks from the VAST Data platform instead of recomputing them, the system bypasses this recomputation step entirely. In these tests, fetching the KV cache from shared storage reduced time-to-first-token by up to 20×, while reducing GPU compute time by approximately 90 percent. Because KV blocks were delivered at network line speeds, the bottleneck shifted away from storage I/O and toward the available network bandwidth.
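When the network is the bottleneck, fetch time has a simple lower bound: the KV payload divided by link bandwidth. This Python sketch assumes the 2×100 Gb/s test configuration and ignores protocol overhead, storage-side latency, and GPU copy time. The payload and prefill figures are hypothetical, and the sketch does not reproduce the measured results above.

```python
def fetch_seconds(kv_bytes: float, links: int = 2, gbit_per_link: float = 100.0) -> float:
    """Lower bound on KV fetch time when network bandwidth is the only limit.

    Defaults assume the 2 x 100 Gb/s test configuration; protocol overhead,
    storage latency, and GPU-side copy time are ignored.
    """
    bytes_per_second = links * gbit_per_link * 1e9 / 8  # Gb/s -> bytes/s
    return kv_bytes / bytes_per_second


def ttft_speedup(prefill_seconds: float, kv_bytes: float, links: int = 2, gbit_per_link: float = 100.0) -> float:
    """Ratio of recompute (prefill) time to network-bound fetch time."""
    return prefill_seconds / fetch_seconds(kv_bytes, links, gbit_per_link)


# Hypothetical payload: 10 GB of KV blocks over 2 x 100 Gb/s (25 GB/s aggregate).
print(f"{fetch_seconds(10e9):.2f} s minimum fetch time")  # prints 0.40 s minimum fetch time
# Doubling the link count halves the bound, raising the ceiling on achievable speedup.
print(f"{fetch_seconds(10e9, links=4):.2f} s with 4 links")  # prints 0.20 s with 4 links
```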

Enterprise-Ready Infrastructure: Efficiency at Scale

Beyond raw performance gains, the integration of NVIDIA Dynamo and VAST Data addresses the operational hurdles of mass-scale deployment.

1.4x Data Reduction: VAST delivers a 1.4x data reduction ratio specifically for KV cache data. This is a critical advantage given the current global shortage of enterprise SSDs, allowing organizations to store significantly more context within the same physical footprint.

Driverless GPU Nodes: The architecture does not require proprietary drivers on the GPU machines. It remains lightweight and portable across various frameworks without added kernel complexity.

Secure Multi-Tenancy: VAST provides the logical isolation and Quality of Service (QoS) necessary to serve thousands of concurrent users. This ensures that sensitive conversation history or agentic context remains private and secure between different tenants on the shared cluster.

Enabling More Tokens Per GPU

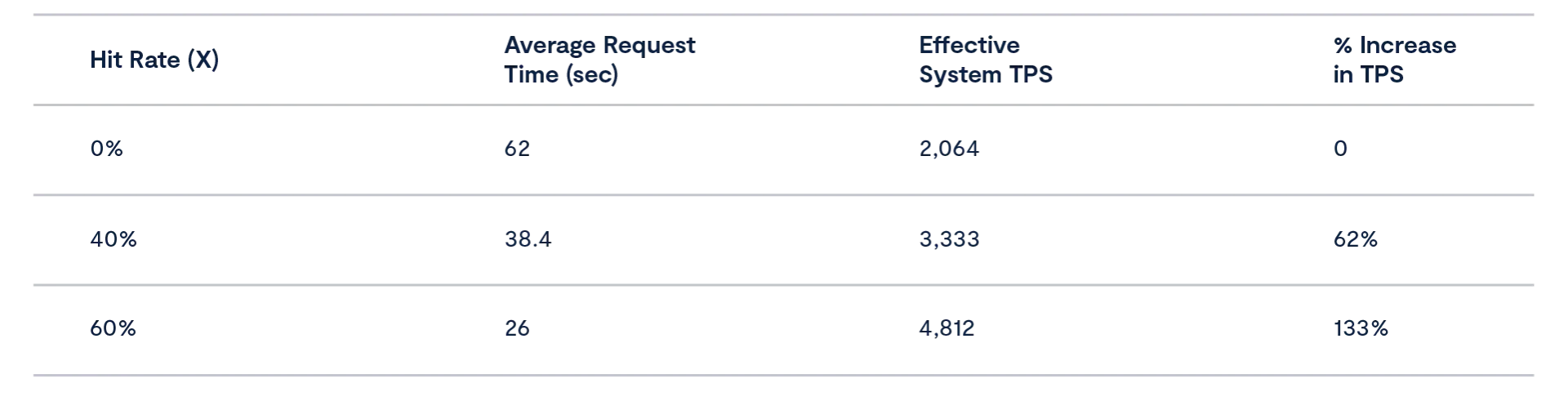

By applying our test results to a real-world deployment scenario, we project 60-130% more tokens per dollar with VAST’s KV cache acceleration. This projection assumes a conservative cache hit rate of 40-60% for enterprise AI workflows. For agentic and coding tasks, the cache hit rate may be even higher.

Our calculation shows how VAST turns wasted GPU time into productive time. It compares the time needed to process a request with and without KV cache reuse across a range of KV cache hit rates that apply to everyday use cases such as coding, chat, and agentic workloads.
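A simplified version of this kind of calculation fits in a few lines of Python. This is not VAST’s actual model. It assumes that the cached share of prefill (the hit rate) is replaced by a KV fetch and that decode time is unchanged. It also assumes GPU cost dominates, so tokens per dollar scales with requests per GPU-second. All timings are hypothetical inputs, so the printed figures reflect those inputs and are not the projection above.

```python
def request_seconds(prefill: float, decode: float, hit_rate: float = 0.0, fetch: float = 0.0) -> float:
    """GPU time per request under a simplified model (assumption, not a measured profile).

    A fraction `hit_rate` of the prompt's KV cache is fetched instead of recomputed:
    that share of `prefill` is replaced by the same share of `fetch`, the time to load
    the full prompt's KV blocks. Decode time is unaffected.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return (1 - hit_rate) * prefill + hit_rate * fetch + decode


def throughput_gain(prefill: float, decode: float, hit_rate: float, fetch: float) -> float:
    """Requests (and tokens) per GPU-second relative to always recomputing.

    If GPU cost dominates, tokens per dollar improves by the same factor (assumption).
    """
    baseline = request_seconds(prefill, decode)
    return baseline / request_seconds(prefill, decode, hit_rate, fetch)


# Hypothetical timings in seconds: long-prompt prefill, decode, and KV fetch.
for hit_rate in (0.4, 0.5, 0.6):
    gain = throughput_gain(prefill=2.0, decode=1.0, hit_rate=hit_rate, fetch=0.2)
    print(f"hit rate {hit_rate:.0%}: {gain - 1:+.0%} tokens per GPU-second")
```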

This performance boost translates to significantly more token generation, and noticeably lower latency to the end user. The principle is clear: we are not making the GPU faster; we are making it available more often, turning storage into a compute force multiplier.

The impact of this shift extends well beyond latency improvements. When GPUs no longer spend cycles rebuilding context, they can devote a much larger portion of their compute budget to generating new tokens.

In practice, KV cache reuse transforms storage into a compute efficiency layer. Rather than accelerating the GPU itself, the system increases effective throughput by ensuring GPUs spend more of their time generating new tokens instead of repeating work that has already been done.

Introducing Context Memory Storage

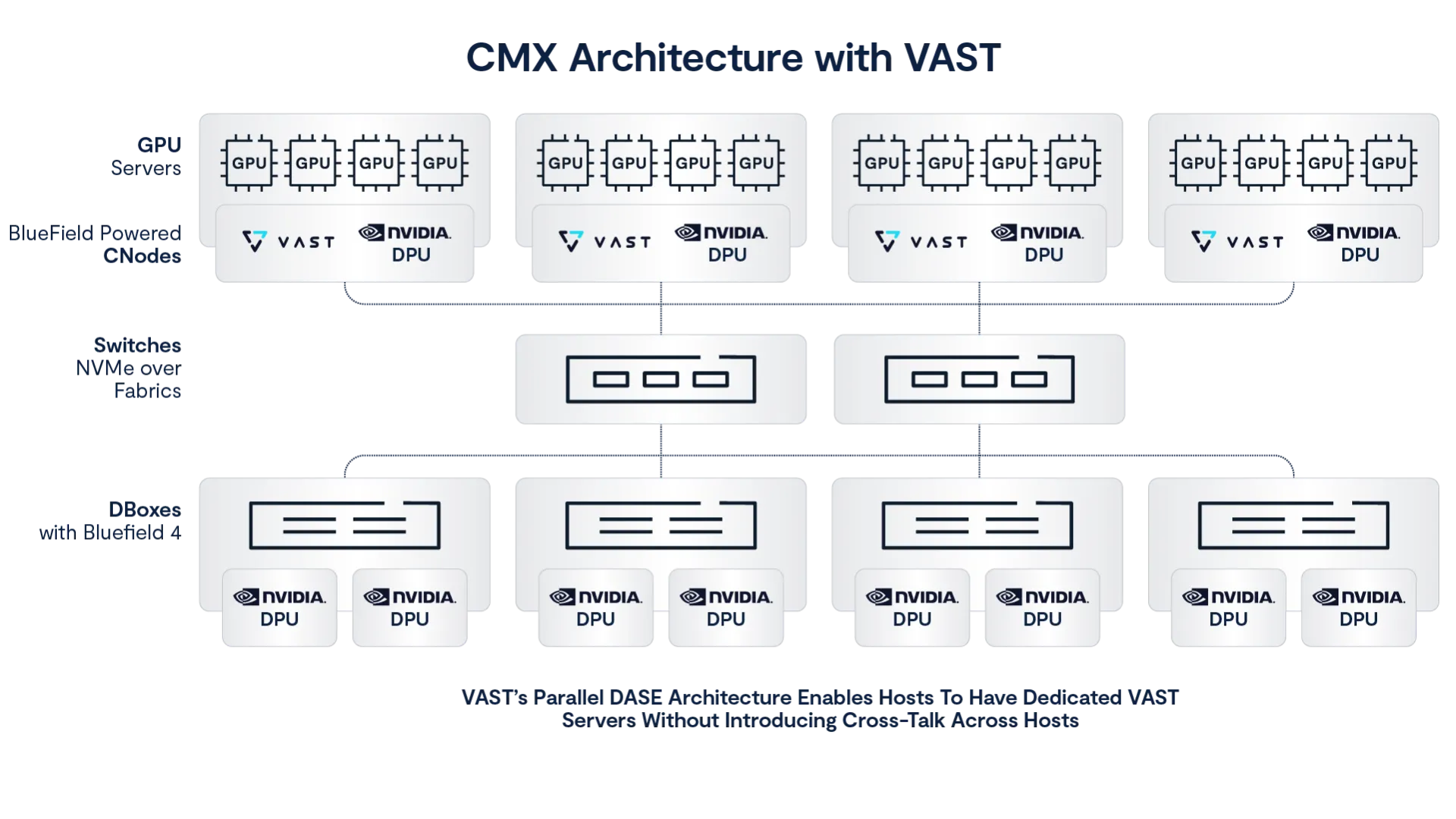

As inference systems scale to larger clusters and longer context windows, even shared storage tiers eventually encounter limits. Context reuse becomes most effective when KV cache can be accessed with low latency and shared efficiently across GPUs serving related requests. This requirement has led to the development of a new architectural layer: NVIDIA Context Memory Storage (CMX), built on the recently announced NVIDIA STX reference architecture.

CMX introduces a dedicated memory tier designed specifically for inference context. Rather than pushing active KV cache into higher-latency storage systems, CMX provides a pod-level context layer shared across GPUs within an inference cluster. This allows previously computed KV state to be reused across nodes while keeping retrieval latency low enough to support real-time workloads.

The CMX architecture combines several NVIDIA technologies to achieve this goal. NVIDIA BlueField-4 data processing units (DPUs), connected to VAST Data D-nodes over a high-speed RDMA link, provide high-performance storage processing and data movement close to the compute nodes. NVIDIA Spectrum-X Ethernet networking delivers predictable, low-latency connectivity across the cluster, ensuring that KV cache can be retrieved quickly when requests share context. Dynamo continues to orchestrate request routing and KV reuse across the system.

Together, these components create an infrastructure where inference context behaves more like shared memory than traditional storage. GPUs can retrieve KV blocks across the cluster as needed, enabling efficient reuse of context across large distributed deployments. Future Dynamo releases will add software support for CMX as a G3.5 tier of the KV cache hierarchy.

VAST plays a key role in this architecture by providing the scalable data services that manage KV cache across these tiers. By integrating VAST’s distributed data platform with CMX, inference clusters can persist and retrieve context efficiently while benefiting from enterprise capabilities such as data reduction, security, and lifecycle management.

The result is a system designed for the next generation of AI inference: large models with long input sequences, serving workloads such as agentic AI, coding, chatbots, research, and reasoning with frequent context reuse. As context windows grow and agent-based workflows extend across longer interactions, CMX allows inference clusters to maintain low latency while scaling context memory far beyond the limits of individual GPU nodes.

Scaling Context for Real-World AI Systems

As organizations move from experimentation to production AI systems, the scale of inference context grows fast. Persistent workflows, multi-turn interactions, and agent-driven tasks all add to the mountain of context that has to be stored and reused across requests.

For example, imagine an organization serving roughly 10,000 concurrent users, each with a conversation history that generates approximately 32 GB of KV cache for a full session. Even modest retention goals can require hundreds of terabytes of context storage.

Retaining only the active session for each user requires roughly 320 TB of KV cache capacity. Expanding that retention to multiple sessions per user pushes requirements into the petabyte range, while longer-term context retention for agent workflows can grow far larger.
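A short Python helper can check the capacity arithmetic above. The 10,000 users and 32 GB per session come from the example. The `sessions_per_user=5` setting is a hypothetical retention policy. Applying the 1.4x KV cache data reduction ratio cited earlier as a simple divisor is an assumption.

```python
def kv_capacity_tb(
    users: int,
    gb_per_session: float,
    sessions_per_user: int = 1,
    reduction_ratio: float = 1.0,
) -> float:
    """Physical KV cache capacity in TB (decimal: 1 TB = 1,000 GB).

    `reduction_ratio` models data reduction as a simple divisor (assumption).
    """
    logical_gb = users * gb_per_session * sessions_per_user
    return logical_gb / reduction_ratio / 1000


print(kv_capacity_tb(10_000, 32))                        # active session only: 320.0 TB
print(kv_capacity_tb(10_000, 32, sessions_per_user=5))   # hypothetical 5 sessions: 1600.0 TB (1.6 PB)
print(round(kv_capacity_tb(10_000, 32, reduction_ratio=1.4), 1))  # with 1.4x reduction: 228.6 TB
```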

While the problem is clear, so is the solution. Architectures that treat KV cache as a first-class infrastructure layer allow systems to reuse previously computed state instead of recomputing it repeatedly. This improves latency and increases the productive use of GPU resources.

The combination of Dynamo orchestration, efficient KV cache reuse, and scalable context memory infrastructure enables inference systems to serve more users and generate more tokens with the same underlying compute.

And as LLMs continue to evolve toward longer context windows and agent-based workflows, the importance of efficient context management will only grow. Dynamo provides the control plane that coordinates distributed inference across GPU clusters. VAST provides the data platform that allows inference context to persist and be reused efficiently across those clusters.

Together, these technologies help break the emerging memory wall of AI inference, enabling organizations to build large-scale AI services that are both performant and economically efficient.

Learn more about how VAST Data helps customers cut infrastructure costs and reduce time to first token with Dynamo, KV cache, and Context Memory Storage.