Inference breaks at scale. As LLMs move from simple model serving to complex, high-concurrency pipelines, the industry is hitting a “Context Wall.” The only way over it is to move toward disaggregated inference, separating prefill and decode into distinct GPU pools.

But this shift creates a new, high-stakes engineering challenge: The KV Cache Data Plane. To make this work, we need to keep the “handle” the world has standardized on (S3) while fixing the “plane” it travels on (RDMA).

Why Prefill and Decode Must Separate

In a combined deployment, the scheduler is in a constant state of conflict.

Prefill is compute-bound. It arrives in bursts, runs long attention passes over massive sequences, and emits large KV writes.

Decode is memory-bandwidth-bound. It requires a steady, rhythmic cadence and tight per-token latency.

When you mix them, prefill bursts create head-of-line blocking, stealing SM (Streaming Multiprocessor) time from the decode kernels. The result is “token jitter,” which shows up as pauses in generation. By disaggregating these workloads, we treat them as two different classes of service. But this separation makes KV state transfer a first-class design point.
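To make the jitter effect concrete, here is a toy timeline, not a real scheduler. It assumes one GPU runs steps back to back, and the step durations (`DECODE_MS`, `PREFILL_MS`) are hypothetical values chosen only for illustration.

```python
def token_gaps(schedule):
    """Return the gaps (ms) between consecutive decode-token completions.

    `schedule` is a list of (kind, duration_ms) steps run back to back on
    one GPU; a "decode" step emits one token, a "prefill" step emits none.
    """
    now = 0.0
    emitted = []
    for kind, duration_ms in schedule:
        now += duration_ms
        if kind == "decode":
            emitted.append(now)
    return [later - earlier for earlier, later in zip(emitted, emitted[1:])]


DECODE_MS = 20.0    # hypothetical per-token decode step
PREFILL_MS = 400.0  # hypothetical long-prompt prefill pass

# Combined pool: a prefill burst lands between decode steps.
combined = (
    [("decode", DECODE_MS)] * 3
    + [("prefill", PREFILL_MS)]
    + [("decode", DECODE_MS)] * 3
)
# Disaggregated decode pool: only decode steps run here.
decode_only = [("decode", DECODE_MS)] * 6

combined_gaps = token_gaps(combined)
decode_only_gaps = token_gaps(decode_only)
print(max(combined_gaps), max(decode_only_gaps))  # 420.0 20.0
```

In the combined timeline, one inter-token gap absorbs the whole prefill pass, which is the pause a user sees. The decode-only timeline keeps an even cadence.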

The Data Plane: Moving the Working Set

As context windows expand beyond 100k tokens, the KV cache for a single request can reach gigabytes. Moving this data between a prefill node and a decode node, or parking it in a cache tier during a multi-turn conversation, requires a dedicated data plane.
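A rough way to size this is the standard per-token KV footprint: two tensors (key and value) per layer, each `kv_heads * head_dim` values wide. The sketch below uses a hypothetical model shape (80 layers, 8 KV heads, head dimension 128, 16-bit values); these numbers are assumptions for illustration, not a specific model's configuration.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Estimate KV cache size for one request, in bytes."""
    # Factor of 2: one key tensor and one value tensor per layer.
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


# Hypothetical model shape with grouped-query attention and 16-bit values.
size = kv_cache_bytes(tokens=100_000, layers=80, kv_heads=8, head_dim=128)
print(f"{size / 1e9:.1f} GB")  # 32.8 GB
```

Under these assumptions each token costs about 328 KB of KV state, so a single 100k-token request is already in the tens of gigabytes.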

Sometimes prefill produces KV before decode capacity is available. In that case, the KV can be evacuated from GPU memory immediately, which frees that memory so new prefill jobs can start without first having to page KV out. This flow creates the need for a buffer in CPU memory, on local SSD, or in centralized storage. Other times, active decodes pause while waiting for a tool response or the next user prompt. Either way, the KV must be paged out while remaining available for the decode or prefill queue to recall at the appropriate time. Both flows are best handled by an external state store that doesn’t occupy GPU memory while requests wait.
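Both flows reduce to the same two operations: park KV off the GPU, then recall it later. The sketch below models that with plain dictionaries. The `KVTiers` class and its method names are hypothetical, and byte strings stand in for real KV blocks.

```python
from dataclasses import dataclass, field


@dataclass
class KVTiers:
    """Toy model of GPU-resident KV plus an external parking tier."""

    gpu_capacity: int                             # bytes reserved for KV on the GPU
    gpu: dict = field(default_factory=dict)       # request_id -> KV held on the GPU
    external: dict = field(default_factory=dict)  # request_id -> KV parked off the GPU

    def gpu_used(self):
        return sum(len(kv) for kv in self.gpu.values())

    def admit(self, request_id, kv):
        """Place KV on the GPU if it fits."""
        if self.gpu_used() + len(kv) > self.gpu_capacity:
            raise MemoryError("no GPU room; park another request first")
        self.gpu[request_id] = kv

    def park(self, request_id):
        """Move KV off the GPU (decode not ready, or session paused)."""
        self.external[request_id] = self.gpu.pop(request_id)

    def recall(self, request_id):
        """Bring parked KV back when the request is ready to resume."""
        kv = self.external[request_id]
        self.admit(request_id, kv)        # raises before the parked copy is dropped
        del self.external[request_id]


tiers = KVTiers(gpu_capacity=8)
tiers.admit("req-a", b"AAAAAA")  # prefill finished, decode not available yet
tiers.park("req-a")              # evacuate so the next prefill can start
tiers.admit("req-b", b"BBBBBB")  # new prefill uses the freed GPU memory
tiers.park("req-b")              # req-b pauses on a tool call
tiers.recall("req-a")            # decode capacity is now available for req-a
```

Without the `park` call, the second `admit` would fail, since two 6-byte entries do not fit in the 8-byte toy budget. That is the capacity pressure the external tier relieves.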

Why S3/HTTP Is Not Fit for Purpose

The industry standard for object storage is S3 over HTTP/TCP. While it’s the most universal interface on earth, it was built for the web, not for the GPU serving loop.

Under the concurrency patterns of a busy inference cluster, TCP-based S3 breaks down. You see it in:

Protocol Overhead: The CPU tax of the TCP stack eats into the performance gains of the GPU.

Tail Latency: Web-style timeouts and retries create “bubbles” in the pipeline. If a KV transfer stalls, the GPU stalls.

Jitter: At high load, transfer times become uneven as the network struggles to absorb the bursty arrival of KV blocks.

At scale, KV movement becomes a high-bandwidth transfer that’s sensitive to latency and jitter. With S3 over HTTP/TCP, the data path is host-mediated: packet handling, protocol work, and buffering consume CPU cycles and host memory bandwidth, and the payload is copied through host memory on its way between the NIC and GPU. Under concurrency or high load, each step can introduce latency or jitter and can impose a bandwidth limit.

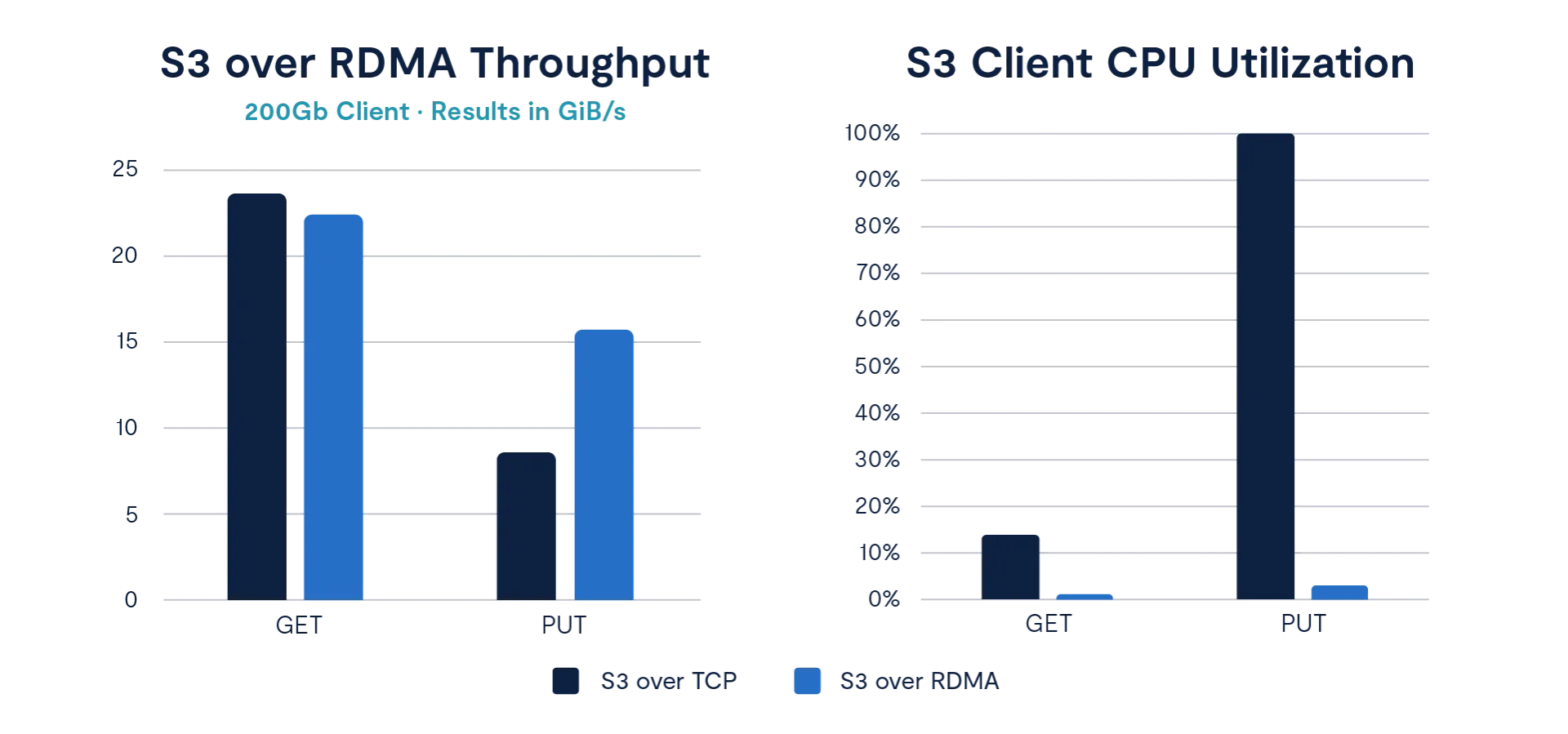

The Solution: Keep the Handle, Fix the Plane

The practical path forward is S3 over RDMA. S3 is the “handle”: the identity integration, tenancy, and operational muscle memory that your team already knows. RDMA is the “plane”: the zero-copy, high-throughput transport that allows the payload to move at the speed of the hardware, bypassing the host CPU. With GPUDirect RDMA, the S3/RDMA fast path can DMA KV blocks directly between the NIC and GPU device memory, avoiding the usual host-memory bounce buffers and keeping the CPU out of the data path.
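The handle/plane split can be pictured as an object interface that stays fixed while the transport underneath is swapped. The sketch below is purely illustrative: `Transport`, `DictTransport`, and `KVObjectStore` are hypothetical names, the transports are in-memory stand-ins rather than real TCP or RDMA code, and the method names only echo common S3 client naming rather than any real SDK.

```python
from typing import Protocol


class Transport(Protocol):
    """The "plane": how bytes physically move. Hypothetical interface."""

    def write(self, path: str, payload: bytes) -> None: ...
    def read(self, path: str) -> bytes: ...


class DictTransport:
    """In-memory stand-in for a real transport such as TCP or RDMA."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._blobs = {}

    def write(self, path: str, payload: bytes) -> None:
        self._blobs[path] = payload

    def read(self, path: str) -> bytes:
        return self._blobs[path]


class KVObjectStore:
    """The "handle": bucket/key naming is the same on any transport."""

    def __init__(self, transport: Transport) -> None:
        self._transport = transport

    def put_object(self, bucket: str, key: str, body: bytes) -> None:
        self._transport.write(f"{bucket}/{key}", body)

    def get_object(self, bucket: str, key: str) -> bytes:
        return self._transport.read(f"{bucket}/{key}")


def park_and_recall(store: KVObjectStore) -> bytes:
    # Caller code is written once, against the handle only.
    store.put_object("kv-cache", "session-42/layer-0", b"kv-block")
    return store.get_object("kv-cache", "session-42/layer-0")


over_tcp = park_and_recall(KVObjectStore(DictTransport("tcp")))
over_rdma = park_and_recall(KVObjectStore(DictTransport("rdma")))
```

`park_and_recall` never mentions the transport, so swapping the plane does not touch the code that names, stores, and fetches KV objects.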

By putting S3 semantics on an RDMA fast path, we treat KV movement as infrastructure rather than a one-off plumbing problem. This is the same transition we saw in AI model training with NFS/RDMA; now, inference is paying the same movement tax, and it requires the same solution.

VAST + NVIDIA: The Transfer Plane

In a disaggregated pipeline, the fastest place to hold KV is the GPU’s local device memory because it keeps KV on the same memory fabric as the attention kernels, but it is also a scarce resource. This scarcity makes it unsuitable for inactive KV.

The next tier is host RAM, but the movement of KV into it also consumes scarce resources. Capacity is finite, and every page-in/page-out consumes PCIe bandwidth and CPU cycles.

Local NVMe works as a spill tier, but a few standalone drives top out well below what modern GPU pools can consume, so it becomes a throughput limiter, while also consuming scarce PCIe bandwidth and CPU cycles.

An external storage pool can enable a real KV pipeline: it is large enough to hold the working set, and parallel enough to keep the bottleneck link saturated while thousands of sessions page KV in and out. And with S3/RDMA, it no longer burns an extra PCIe hop or wastes RAM and CPU cycles.
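A back-of-the-envelope calculation shows what “parallel enough” means. Every number below is a hypothetical placeholder (paging rate, KV size per event, per-device throughput); substitute measured values from your own cluster.

```python
import math


def required_gbytes_per_s(page_events_per_s, kv_gbytes_per_event):
    """Aggregate bandwidth needed to keep up with KV paging."""
    return page_events_per_s * kv_gbytes_per_event


def devices_needed(required, per_device_gbytes_per_s):
    """How many devices must serve in parallel to sustain `required`."""
    return math.ceil(required / per_device_gbytes_per_s)


# Hypothetical load: 50 page-in/page-out events per second, 2 GB of KV each.
demand = required_gbytes_per_s(page_events_per_s=50, kv_gbytes_per_event=2.0)

# Hypothetical device: 7 GB/s of sustained throughput per drive.
drives = devices_needed(demand, per_device_gbytes_per_s=7.0)
print(demand, drives)  # 100.0 15
```

With these placeholder values, the paging load needs 100 GB/s, well beyond what a few standalone drives deliver, so the tier has to spread each burst across many devices at once.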

At VAST, we’ve worked directly with NVIDIA to ensure that when you use Dynamo and NIXL, the storage layer doesn’t become the bottleneck. Our heritage in RDMA at scale allows us to provide a transfer plane that stays consistent even at peak load.

We provide a single platform where the KV cache stays in the serving loop across turns, governed by the same policies as the rest of your enterprise data.

The KV Cache Becomes Infrastructure

Disaggregated inference turns the KV cache into a first-class workload. If you are in charge of AI infrastructure, your goal is to prevent your pipeline from fragmenting into a collection of specialized, fragile silos.

S3 over RDMA is the clean way to move that workload. It gives your developers the performance of a custom data plane with the governance and simplicity of the S3 API.