If you were to ask Nic Lewis, Senior Systems Administrator for the fleet of supercomputers at the Texas Advanced Computing Center (TACC), he would tell you that running storage at scale used to mean expecting things to break.

Maybe not catastrophically every time, but often enough that it shaped how the system was run, he told the audience at this year's VAST Forward. That meant everything from degrading disks to workloads behaving unpredictably to performance issues that spread through the system. Two serious alerts a week was normal, he said, sometimes more, and nights and weekends were never fully off because something could always tip over.

“It's been a lot of late nights prior to Stampede3 with Stampede2 and the Cray storage systems. I would probably bump that up to almost four per week,” he said, adding that as the file system aged and the spinning disks started to degrade, load would mount, especially on the older drives, and his team had a hard time letting go of the disks.

“We’d be trying to push traffic into a disk that wasn’t accepting any more data,” he said, describing a baseline in which systems grew even slower and less stable under pressure and needed manual intervention at all hours.

Then that stopped happening. And Lewis says VAST is the reason.

Failures didn’t necessarily go away. They stopped showing up in ways that mattered or were visible to users, because the system just kept running.

“With VAST I've had maybe three or four drive failures in total, and one of the times I didn't even notice that I missed the alert. I went a couple of days, then checked the dashboard and said, oh there was a drive out, which is awesome. It’s an amazing feeling to have, to be able to go in and be like ah nice, a drive failed and nobody felt it. That’s not an experience that I've had before, but it is one I'm very happy to have now.”

The key, he says, is that failures still happen but no longer turn into full-blown incidents.

The Workload Changed First

The shift at TACC didn’t start with storage. It was driven by the emerging workloads that storage had to serve.

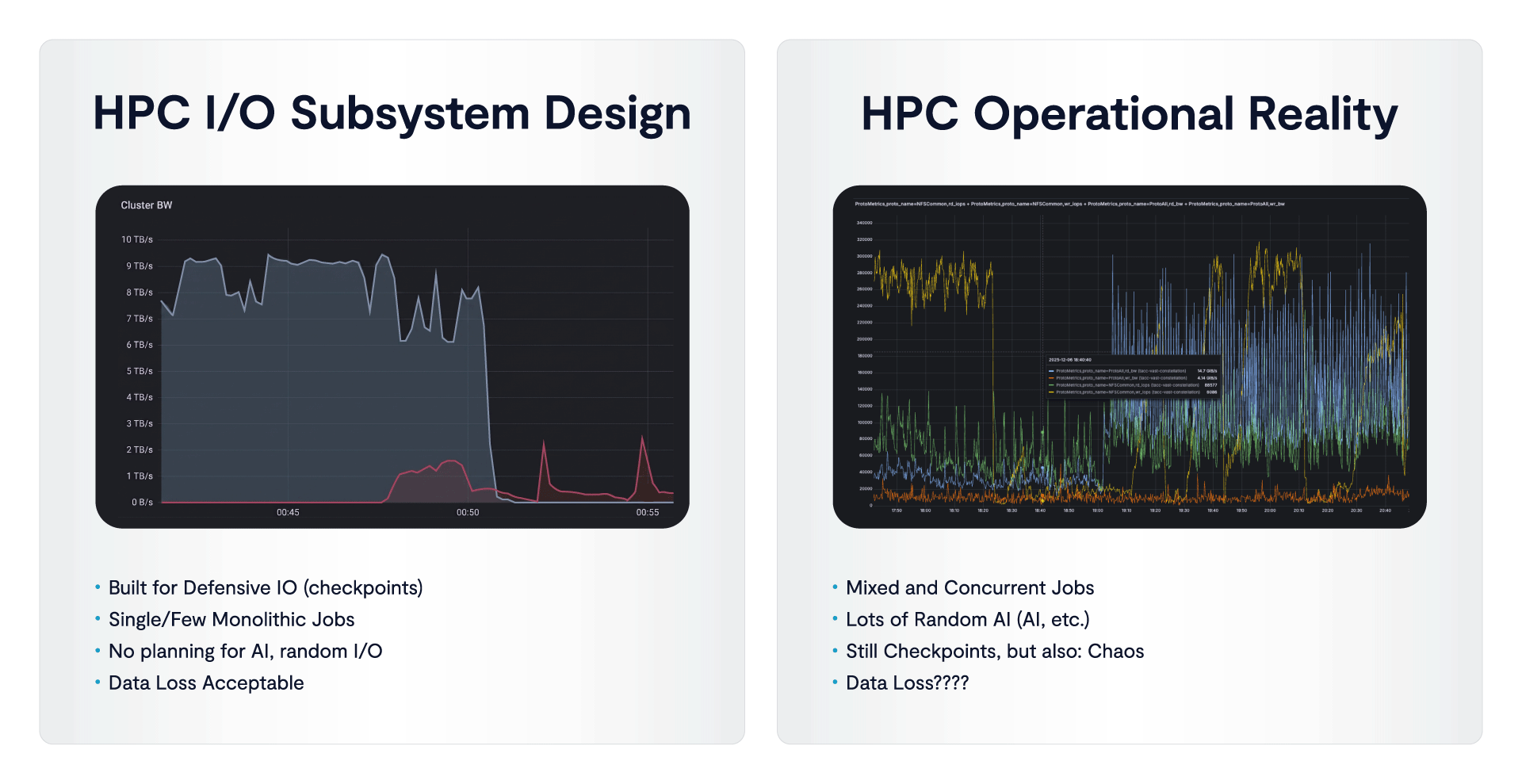

HPC systems used to be almost universally designed around a narrow and well-understood pattern. Large jobs ran across tightly coordinated nodes, wrote checkpoint data at predictable intervals, then moved forward in a controlled cycle of compute and write. Naturally, storage systems were optimized for that model, and “getting good storage performance” basically meant moving large volumes of data in a steady, sequential way, with most of the engineering effort going into sustaining overall throughput.

That model hasn’t gone away, but at the bleeding edge of HPC today it no longer defines the environment. What replaced it is a mix of workloads that behave very differently from one another and run at the same time. Simulation is still there, but it now sits alongside AI training, pre-processing pipelines, and repeated model and environment loading. Instead of a single dominant job, there can now be hundreds competing for access to the same data.

Just as important in TACC’s case, predictable IO has given way to constant variation in request size, frequency, and access pattern.

“What we found is that the Lustre file system doesn’t cope very well with that. When you load in that Anaconda environment across several hundreds of nodes, it screams. It is very, very unhappy about loading in all of those different modules and environmentals, different binaries and such, and loading them into the GPU, and then the GPU offloads that back. And it's all this back and forth, loading and unloading of these really large data sets and such,” Lewis explained.

He added that this isn’t necessarily a bandwidth problem in the traditional sense. The system is being asked to handle a pattern it was not designed for: hundreds of nodes requesting thousands of small files at once, while GPUs pull data in, process it, and push it back.

The same environments get loaded repeatedly across jobs, and the result is pressure on metadata, uneven load across disks, and a level of concurrency that turns predictable behavior into something much harder to manage.
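A rough back-of-envelope sketch shows why this pattern stresses metadata rather than bandwidth. The node count, file count, file size, and operations per file below are illustrative assumptions, not TACC measurements, and `metadata_ops` is a hypothetical helper written for this example.

```python
def metadata_ops(nodes: int, files_per_env: int, ops_per_file: int = 3) -> int:
    """Estimate metadata operations when every node loads the same environment.

    ops_per_file is an assumed figure (for example lookup, open, close).
    """
    return nodes * files_per_env * ops_per_file


# Illustrative values only (assumptions, not figures from the talk).
nodes = 300               # "several hundreds of nodes"
files_per_env = 5_000     # small files in one Python environment
avg_file_bytes = 4_096    # assumed average small-file size

total_ops = metadata_ops(nodes, files_per_env)
total_bytes = nodes * files_per_env * avg_file_bytes

print(f"metadata operations: {total_ops:,}")
print(f"data moved: {total_bytes / 2**30:.2f} GiB")
```

Under these assumptions the job start triggers millions of metadata operations while moving only a few gibibytes of data, which is why raw throughput says little about how the file system copes.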

The systems didn’t fail immediately under this load, but they became less stable as these patterns increased, Lewis says. Performance became inconsistent and load became harder to isolate. Ultimately, the environment moved away from the conditions the storage layer was built to handle, and the gap started to show.

With VAST, those same patterns don’t lead to the same outcome, Lewis says, but getting to VAST has been a gradual process.

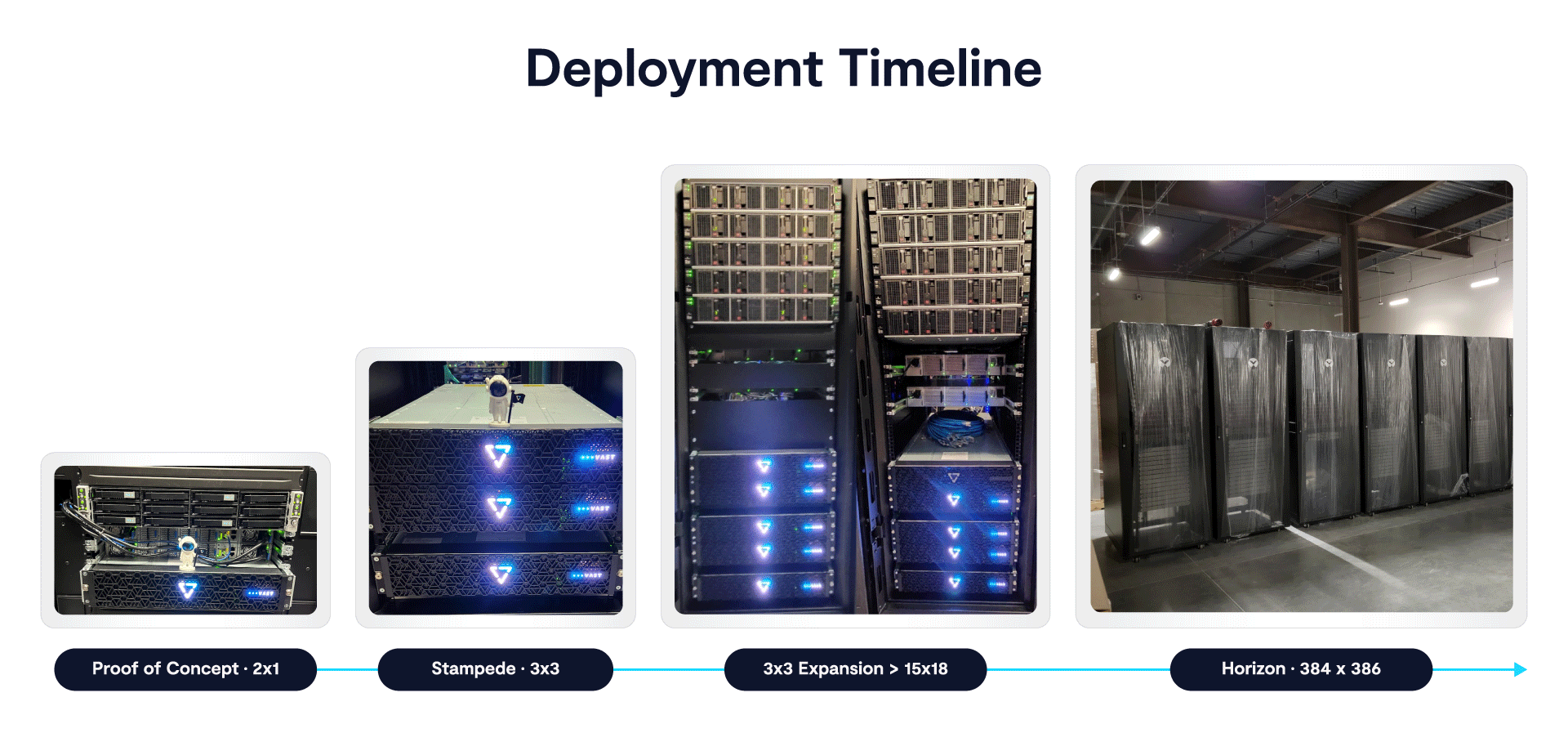

TACC started with a proof of concept where they pushed VAST hard with real workloads and upgrades. After that, they deployed it as the primary file system for Stampede3, supporting both HPC and AI jobs. They then expanded it in stages, adding nodes live in production without downtime. That led to Horizon, their next system, which is much larger and built to run mixed HPC and GPU workloads on the same platform.

Even as workloads grow in complexity and scale in the Horizon era, there’s no need to isolate users, adjust workloads, or tune around bottlenecks. The same level of concurrency no longer turns into instability, which is a big deal for Lewis and team.

“I haven't been burned by VAST and it's always gone exactly as I would expect it to, which is that it doesn't topple over, workloads are able to continue, users don't notice… that's the main thing. If I can expand and if I have a couple CNodes fail out, so long as users don't know that, and so long as nothing else fails over to the point where I'm having to call an emergency maintenance, that's perfect.”

As TACC has discovered, the system is measured less by peak throughput or isolated benchmarks and more by how it behaves under pressure, and whether that behavior remains consistent as more users and more workloads are added.

“One of our biggest pain points is the file systems. If you look through all the interruptions or whenever a system is down, 90% of them are file system issues that have caused the system to be down.”

“We did everything we could with some really bad user code that has caused all kinds of problems on our older file systems, and VAST just stayed up and running. We pounded on it and we pounded on it. We did a rolling upgrade, VAST just went, didn't have any interruption, no downtime.”

What Happens When the System Holds

At TACC the change shows up in everyday operations. Expansions happen live, hardware is added, the system rebalances, and jobs keep running. This is the life of luxury compared to previous systems, at least for Lewis and team.

“We had the VAST installers come out, they put in the new equipment, hooked everything up, and then I kicked off the actual expansion process… Everything kept running, which is exactly what you would want in that sort of scenario.”

Upgrades follow the same pattern, he says, and, importantly, they no longer require downtime.

“With VAST I've been able to do full OS upgrades on the file system without having to take any downtime. I've done numerous upgrades with VAST now and even doing something like SSD firmware upgrades on the system was something that happened while IO was occurring, and there was minimal impact, if any. That was definitely also one of those sweaty hand moments, you're like ‘I'm going to be updating all the firmware for all the SSDs’, but it handled it.”

Data management has changed in important ways too for Lewis and team. Instead of scanning the file system, admins query it directly. “I pretty heavily utilize VAST’s SDK, so say I've written a brand new application that'll reach out to VAST. It'll obtain all of the info from their database in the form of data frames, then I can take those down and manipulate them however I need. So I can do something like find all the files that were created in the last 180 days, and then I want to exclude those, for example. VAST can give me that in like a minute, usually less.”
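The kind of filter Lewis describes can be sketched in a few lines. This is not the VAST SDK: it assumes the catalog rows have already been retrieved as plain records, and the record layout, the paths, the 180-day window applied to creation time, and the `split_by_age` helper are all hypothetical stand-ins for illustration.

```python
from datetime import datetime, timedelta, timezone


def split_by_age(records, now, days=180):
    """Split catalog rows into (recent, older) by creation time.

    `records` is a list of dicts with a timezone-aware "created" datetime.
    """
    cutoff = now - timedelta(days=days)
    recent = [r for r in records if r["created"] >= cutoff]
    older = [r for r in records if r["created"] < cutoff]
    return recent, older


# Hypothetical rows standing in for what a catalog query might return.
now = datetime(2025, 6, 1, tzinfo=timezone.utc)
catalog = [
    {"path": "/scratch/a/model.bin", "created": now - timedelta(days=10)},
    {"path": "/scratch/b/old.h5", "created": now - timedelta(days=400)},
    {"path": "/scratch/c/run.log", "created": now - timedelta(days=181)},
]

# Exclude files created in the last 180 days, keeping only the older ones.
recent, older = split_by_age(catalog, now)
older_paths = [r["path"] for r in older]
print(older_paths)
```

With rows held in a data frame, the same split would typically be a boolean mask on the creation-time column. The point of the sketch is that the work is a query over metadata already in hand rather than a walk of the file system.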

These changes reduce the need to plan around the system. For the team Lewis runs, that means operations get to become routine (sigh of relief) and different workloads can run on the same platform without creating instability.

The Real Reason It Works

The real takeaway from TACC’s use of VAST has less to do with old school benchmarks and more to do with how little attention the system needs.

Failures occur at the component level, but they do not turn into incidents. Expansions, upgrades, and workload changes happen without forcing downtime or intervention. Users run jobs without needing to think about where data lives or how the system will respond under load. “I can forget that it even exists,” Lewis beamed.

Management woes aside, the system handles concurrency, mixed workloads, and constant reuse of data without requiring continuous adjustment. It maintains consistent behavior as load increases and as the environment changes.

And for the first time at TACC, storage doesn’t have to be segmented off to protect it from users or workloads, because all kinds of different use cases can share the same platform. That also means operational focus shifts away from keeping the system stable and toward using it as part of the broader compute environment.

The change is not tied to a single feature, Lewis stresses. It comes from how the system behaves under the conditions that now define HPC and AI. When those conditions are handled without disruption, the system becomes part of the background, and the environment built on top of it can evolve without being constrained by the storage layer.