AI agents speak in incompatible dialects. One emerging agent protocol standard borrows from telecom to create a shared, secure language for intelligent systems.

Before digital networks, communication was an act of translation.

Each machine spoke its own dialect, and every connection required a custom bridge. Contrary to what you might expect, it wasn’t faster hardware that changed that; it was the agreement to share a language.

Best example? Once TCP/IP arrived, the arguments stopped, the systems merged, and the internet began.

AI is standing on that same fault line now. The new wave of large language model agents can reason, plan, and coordinate tasks, but they do so inside sealed worlds. OpenAI’s Function Calling, Anthropic’s Model Context Protocol, LangChain’s Agent Protocol, Google’s Agent2Agent system, IBM’s ACP, and the community-led ANP all seek, at their core, to define how machines can act or exchange information. The problem is that each uses its own assumptions and structures, making collaboration across them fragile or impossible.

Without a shared protocol, every multi-agent system becomes an isolated island of logic, useful on its own, unscalable together.

Duplication aside, there’s a structural risk to consider, just as there was for the telco pioneers. Most current frameworks treat security and reliability as optional. Messages aren’t consistently authenticated, transactions aren’t atomic, and communication is tightly coupled to the agent’s own code. What you get from that mishmash is a brittle ecosystem that might work for chatbots or demos, but definitely not for systems controlling vehicles, processing financial data, or operating in real time across networks.

There are efforts to standardize a way out of this problem, including a noteworthy one out of Nanyang Technological University in Singapore. Their team has built something that, even if it doesn’t become the adopted standard, makes the right arguments and shows what a path forward might look like.

Their proposal, the LLM Agent Communication Protocol, or LACP, starts from the argument that this fragmentation looks an awful lot like the early “protocol wars” of computer networking: the same kind of disorder that once slowed the growth of telecommunications until the field agreed on layered, open standards.

The group pulls heavily from telecom, arguing that there, standardization created both efficiency and trust. Each generation of wireless technology, from 1G to 5G, added speed and a more refined grammar around coordination. They note that when radio systems learned to negotiate, identify, and verify each other, scale followed. Not surprisingly, LACP aims to do the same for intelligent agents.

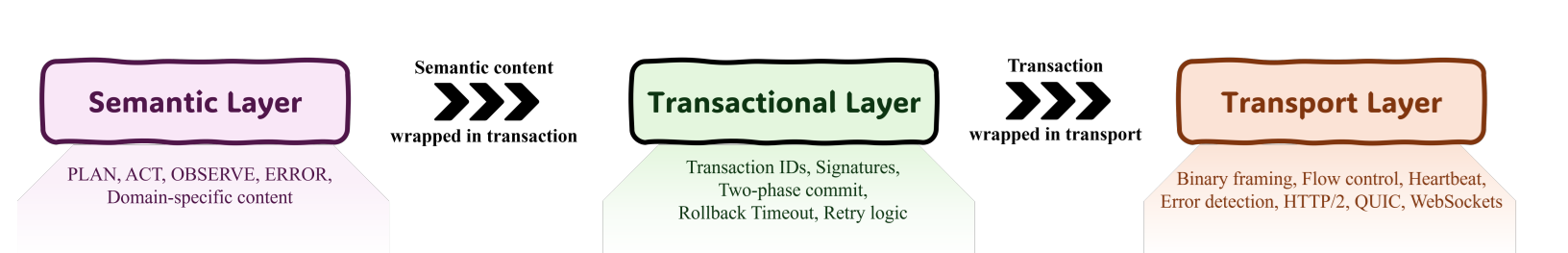

Its design borrows directly from telecom’s layered model.

At the top is a semantic layer that defines the intent of a message through universal categories such as planning, acting, and observing.

Below that sits a transactional layer that enforces reliability, adding identifiers, cryptographic signatures, and atomic commit behavior.

And then beneath everything is a transport layer that can run over common internet protocols like HTTP/2 or QUIC. Together they separate meaning, integrity, and delivery: the same division, the team argues, that once made global voice and data networks possible.
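To make the layering concrete, here is a minimal sketch of how a message might pass through the three layers. It is not the LACP wire format, which this article does not spell out. The names `SemanticMessage`, `wrap_transactional`, `to_wire`, and `SHARED_KEY` are hypothetical, and an HMAC over a shared key stands in for whatever signature scheme the protocol actually uses.

```python
import hashlib
import hmac
import json
import uuid
from dataclasses import dataclass, asdict

# Hypothetical shared secret, for illustration only.
SHARED_KEY = b"demo-key-not-for-production"


@dataclass
class SemanticMessage:
    """Semantic layer: what the agent means."""
    intent: str    # assumed categories such as "PLAN", "ACT", "OBSERVE"
    content: dict


def wrap_transactional(msg: SemanticMessage, key: bytes = SHARED_KEY) -> dict:
    """Transactional layer: attach a unique id and an integrity signature."""
    envelope = {"message_id": str(uuid.uuid4()), "payload": asdict(msg)}
    # Sign a canonical serialization so both sides hash identical bytes.
    canonical = json.dumps(envelope, sort_keys=True, separators=(",", ":")).encode()
    signed = dict(envelope)
    signed["signature"] = hmac.new(key, canonical, hashlib.sha256).hexdigest()
    return signed


def to_wire(envelope: dict) -> bytes:
    """Transport layer: plain bytes that any carrier (HTTP/2, QUIC, ...) could move."""
    return json.dumps(envelope, sort_keys=True).encode("utf-8")


def from_wire(data: bytes) -> dict:
    """Inverse of to_wire: decode the bytes back into an envelope."""
    return json.loads(data.decode("utf-8"))


msg = SemanticMessage(intent="ACT", content={"tool": "search", "query": "weather"})
wire = to_wire(wrap_transactional(msg))
received = from_wire(wire)
print(received["payload"]["intent"], received["message_id"])
```

The point of the split is that each function could be swapped out without touching the others: a different transport would replace only `to_wire` and `from_wire`, and a different signature scheme would change only `wrap_transactional`.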

Instead of just theorizing, the team built a prototype to prove it can work. A LangChain agent was connected to a standalone tool server through LACP, and the two communicated seamlessly without any framework-specific code.

Performance tests showed almost no latency penalty (measured in fractions of a millisecond) even with message signing and verification in place. Security trials, meanwhile, showed that altered or replayed messages were automatically detected and rejected.
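How a receiver might reject altered or replayed messages can be sketched in a few lines. This is an illustration under stated assumptions, not the team’s implementation: the `Receiver` class, the `sign` helper, and the envelope fields are hypothetical, and an HMAC with a shared key again stands in for the protocol’s real signature mechanism.

```python
import hashlib
import hmac
import json

KEY = b"demo-key-not-for-production"  # hypothetical shared secret


def sign(envelope: dict, key: bytes = KEY) -> str:
    """Compute an HMAC over every field except the signature itself."""
    body = {k: v for k, v in envelope.items() if k != "signature"}
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, canonical, hashlib.sha256).hexdigest()


class Receiver:
    """Checks integrity first, then refuses message ids it has already seen."""

    def __init__(self, key: bytes = KEY):
        self.key = key
        self.seen_ids = set()

    def accept(self, envelope: dict) -> str:
        expected = sign(envelope, self.key)
        # Constant-time comparison avoids leaking how much of the signature matched.
        if not hmac.compare_digest(expected, envelope.get("signature", "")):
            return "rejected: bad signature"
        if envelope["message_id"] in self.seen_ids:
            return "rejected: replay"
        self.seen_ids.add(envelope["message_id"])
        return "accepted"


original = {"message_id": "msg-001", "payload": {"intent": "ACT", "amount": 10}}
original["signature"] = sign(original)

receiver = Receiver()
first = receiver.accept(original)        # valid and new
replayed = receiver.accept(original)     # same id sent again
tampered = dict(original, payload={"intent": "ACT", "amount": 10_000})
tampered_result = receiver.accept(tampered)  # payload changed after signing

print(first, "|", replayed, "|", tampered_result)
```

A production system would also need to bound the set of seen ids, for example with timestamps or expiring nonces; that detail is left out here.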

The result was a small, functional demonstration that agents could interoperate securely, reproducibly, and without a whole bunch of manual alignment.

What the agentic building community might appreciate here is that LACP won’t dictate how an agent reasons or what algorithms it uses. It defines how those agents confirm what they mean, verify their identity, and complete transactions safely.

In doing so, it provides a stable center layer that allows flexibility above and below. This in theory lets specialized frameworks evolve freely while still sharing a dependable communication layer.

Ah, but standards, you say with a heavy sigh.

We know the complaints well from the past. Won’t this slow the field down or impose artificial limits on creativity? Let’s be positive for a moment and look to history.

Once networks had a universal layer, innovation accelerated because developers no longer had to solve the same problems at the edges. The same could happen here. If security, reliability, and semantics were handled by the protocol, teams could focus on higher-level reasoning and application design instead of reinventing the basics of communication.

Telecom’s long arc reinforces that point. Each protocol generation (the authors point to GSM, UMTS, LTE, 5G) was born out of the exhaustion of ad-hoc systems. But wasn’t what drove those transitions less about bandwidth demand and more about the realization that fragmented standards were unsustainable?

Back in the earlier telco era, once systems agreed on shared signaling, identity management, and handoff logic, they became scalable infrastructure.

The LACP authors see distributed AI at that same inflection point: hardware and models have advanced faster than the languages that tie them together.

The authors argue that standardization is what will turn intelligent agents from isolated prototypes into components of a broader cognitive network. Without it, every new agent framework deepens the divide; with it, collaboration could become as seamless as packet routing.

What LACP (or even a competitive effort elsewhere) could offer is the principle that meaning and trust should travel together.

Every message an agent sends carries both semantics and proof of integrity, so every exchange is accountable. Autonomous systems, for that matter, can only scale safely if they share a disciplined way of speaking.

LACP may not be the final form of that language, but it points in the only direction that has ever worked: toward clarity, interoperability, and the rules that make communication real.