Most enterprise data centers were built for a different era, one where workloads were predictable, latency was tolerable, and data storage was an afterthought. Today's most demanding AI workloads, including large language models (LLMs), multimodal systems spanning vision, text, and audio, agentic AI pipelines, and real-time inference at scale, require massive, continuous, low-latency data access that legacy infrastructure was never designed to deliver. For both on-premises enterprises and cloud service providers, the primary bottleneck has shifted from raw compute to data velocity. The race to differentiate through AI-enabled applications now demands more than training and fine-tuning the best models. Winning requires building infrastructure capable of reliably feeding agentic AI applications at speed and at scale.

To address these challenges, the VAST Data AI Operating System (AI OS) is certified for the latest NVIDIA DGX SuperPOD reference architecture with DGX B300 and DGX GB300 systems, delivering a turnkey, high-performance data center platform engineered to scale to the exabyte range.

The Solution: VAST Data AI OS & NVIDIA DGX SuperPOD

1. AI Factory Model (Turnkey Infrastructure)

NVIDIA DGX SuperPOD is designed from the ground up as an AI factory, an integrated platform purpose-built for production AI at scale. Capable of scaling to tens of thousands of GPUs, it supports the most challenging training, inference, and multi-tenant workloads. VAST Data provides a fast, unified data platform that eliminates storage silos and gives every workload across the cluster consistent, high-performance access to the same data.

2. Disaggregated Shared-Everything (DASE) Architecture

At the architectural core is DASE, in which compute and storage are fully decoupled yet globally accessible, so each can scale independently based on actual demand. Three components make this work: CNodes act as stateless compute containers, DNodes and DBoxes provide NVMe-based persistent storage, and NVMe-over-Fabrics (NVMe-oF) delivers the high-speed interconnect that ties them together. The result is massive parallel I/O across the entire cluster and the ability to scale compute and storage capacity on separate trajectories, a critical advantage for cloud service providers managing diverse, fluctuating workloads without the cost penalty of overprovisioning.
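
To make the independent-scaling idea concrete, here is a toy model in Python. It is only a sketch: the `DaseCluster` class and its methods are hypothetical names invented for illustration, not part of any VAST API, and it assumes that every CNode can reach every DBox over the fabric.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DaseCluster:
    """Toy model of a disaggregated, shared-everything cluster (illustrative only)."""

    cnodes: int  # stateless compute containers
    dboxes: int  # NVMe storage enclosures

    def io_paths(self) -> int:
        # Assumption: every CNode can reach every DBox over the NVMe-oF
        # fabric, so the number of compute-to-storage paths is the product.
        return self.cnodes * self.dboxes

    def add_compute(self, n: int) -> "DaseCluster":
        # Compute grows; storage is left untouched.
        return DaseCluster(self.cnodes + n, self.dboxes)

    def add_storage(self, n: int) -> "DaseCluster":
        # Storage grows; compute is left untouched.
        return DaseCluster(self.cnodes, self.dboxes + n)


base = DaseCluster(cnodes=4, dboxes=2)
more_compute = base.add_compute(4)
more_storage = base.add_storage(2)
print(base.io_paths(), more_compute.io_paths(), more_storage.io_paths())
```

In this model, adding compute never changes the storage count and vice versa, which is the property that lets operators size each tier to actual demand rather than overprovisioning both.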

3. High-Speed Data Fabric

The architecture supports both InfiniBand (NDR) for ultra-low-latency environments and Ethernet (RoCE/Spectrum-X) for cost-effective scaling, giving operators flexibility without sacrificing performance. RDMA-based data transfer, Multipath NFS over RDMA, and lossless networking with PFC and ECN ensure that data moves at the speed the GPUs demand. Combined with the DASE architecture, this delivers near-memory-speed data access across the entire cluster, effectively eliminating GPU idle time and converting expensive compute cycles into productive throughput.

4. Unified AI Operating System

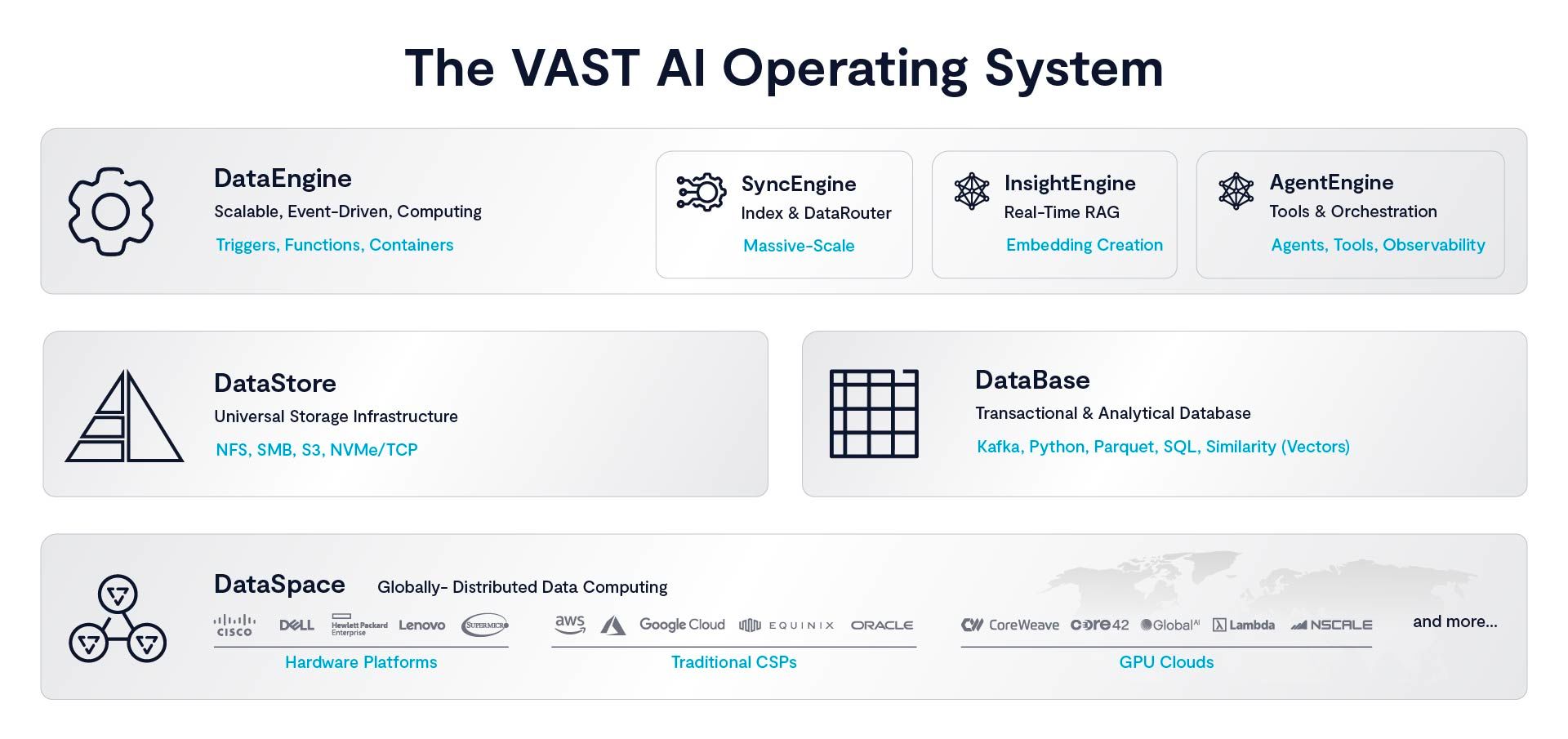

VAST AI OS provides a single platform that spans storage, database, and compute runtime. Seven integrated components replace separate feature stores, external pipelines, and multiple orchestration layers that typically inflate complexity and cost.

DataSpace (single global namespace)

DataStore (supporting NFS, SMB, S3, and NVMe/TCP)

DataBase (analytics, vector, and SQL)

DataEngine (event-driven compute)

InsightEngine (real-time RAG and embeddings)

AgentEngine (AI orchestration)

SyncEngine (index and data router)

Multi-protocol access means the same dataset is available to every workflow simultaneously: NFS for training, S3 for pipelines, SMB for enterprise applications, and NVMe/TCP for the highest-performance workloads. One platform. One dataset. No silos.

5. Non-Disruptive Operations

For enterprise environments, availability isn't a feature; it's a core requirement.

The stateless architecture of DASE enables zero-downtime upgrades, online scaling of both compute and storage, and live workload migration during maintenance windows. The platform sustains 99.9999% availability, and an API-first observability layer exposes thousands of telemetry points in real time, giving operations teams the deep workload visibility needed to manage complex, multi-tenant AI environments with confidence.
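
To put an availability figure in perspective, the arithmetic can be sketched in a few lines of Python. The helper name `allowed_downtime_seconds` is hypothetical, and the calculation assumes a 365-day year; it converts an availability percentage into the downtime budget it implies.

```python
def allowed_downtime_seconds(availability_pct: float, period_days: float = 365.0) -> float:
    """Downtime budget implied by an availability percentage over a period."""
    unavailable_fraction = 1.0 - availability_pct / 100.0
    return unavailable_fraction * period_days * 24 * 60 * 60


# Compare common availability tiers over one 365-day year.
for pct in (99.9, 99.99, 99.9999):
    print(f"{pct}% -> {allowed_downtime_seconds(pct):.1f} s/year")
```

Under that assumption, 99.9999% availability corresponds to roughly 31.5 seconds of downtime per year, compared with about 8.8 hours at 99.9%.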

6. AI Workload Optimization

The platform is validated across the full spectrum of modern AI use cases: LLM training, RAG and agentic pipelines, computer vision, recommendation systems, and HPC workloads. This breadth matters: as AI strategies mature, organizations need infrastructure that doesn't require replacement as workload profiles evolve.

7. Global Data Access and Consistency

The VAST DataSpace provides a single global namespace that lets you unify data from every location, whether on-premises, in the cloud, or at the edge, and enforce strict global consistency, so AI and analytics operate with local performance and scale to meet enterprise demands.

Real-World Impact Across Industries

In healthcare, the platform accelerates large-scale imaging analysis and patient data processing, compressing diagnosis pipelines that previously required overnight batch runs. In financial services, it powers historical market modeling alongside real-time prediction systems within the same unified environment. Media and entertainment organizations use it to drive AI-generated content and high-throughput video and audio processing pipelines. And across enterprise AI broadly, it forms the foundation for agentic and multimodal AI systems that require the kind of persistent, low-latency data access that fragmented architectures cannot reliably deliver.

Business Impact

Faster Time to AI Value. Turnkey deployment and a fully integrated stack dramatically reduce the integration overhead that delays production readiness. Teams spend less time assembling infrastructure and more time building differentiated applications.

Higher GPU Utilization. By eliminating I/O bottlenecks, the platform keeps GPU clusters operating at their intended utilization targets. Every improvement in GPU utilization directly increases the return on what is typically the largest line item in an AI infrastructure budget.
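
The link between utilization and return can be shown with simple arithmetic. The sketch below is illustrative only: the function name is hypothetical and the hourly cost of 3.0 is a made-up input, not a quoted price. It computes the effective cost of each productive GPU-hour when part of the paid time is spent idle waiting on data.

```python
def cost_per_productive_gpu_hour(hourly_cost: float, utilization: float) -> float:
    """Effective cost of one hour of useful GPU work at a given utilization."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_cost / utilization


# Hypothetical hourly cost per GPU; substitute your own figure.
HOURLY_COST = 3.0
for utilization in (0.5, 0.7, 0.9):
    effective = cost_per_productive_gpu_hour(HOURLY_COST, utilization)
    print(f"utilization {utilization:.0%}: {effective:.2f} per productive GPU-hour")
```

With these illustrative inputs, raising utilization from 50% to 90% cuts the effective cost of productive work from 6.00 to about 3.33 per GPU-hour.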

Future-Proof by Design. Mixed hardware generations are supported natively, upgrades are non-disruptive, and the architecture scales alongside the evolution of AI workloads, protecting infrastructure investments over multi-year planning horizons.

Multi-Tenant Enterprise Ready. LDAP and Active Directory integration, shared infrastructure across teams, and resource scheduling make the platform well-suited for large enterprise environments where multiple business units share a common AI foundation.

CSP Differentiation. For cloud service providers, this architecture enables the delivery of AI-native infrastructure services, high-performance AI-as-a-Service offerings that compete on both capability and cost-per-performance.

The Bottom Line

The combination of NVIDIA DGX SuperPOD and the VAST Data AI Operating System represents a shift from infrastructure assembly to a simplified, proven, and scalable AI factory design.

Instead of stitching together compute, storage, and pipelines, enterprises can deploy a unified, scalable AI platform of the class that cloud service providers run.

Download our latest NVIDIA DGX SuperPOD with VAST Data Reference Architecture papers here:

NVIDIA DGX SuperPOD with B200, H200 and VAST

NVIDIA DGX SuperPOD with B300 and VAST

NVIDIA DGX SuperPOD with GB200, GB300 and VAST

Learn how Next-Gen AI Cloud Providers are building AI Factories with NCP designs