At GTC DC, Leidos’ Corey Hendricks described how AI built to reason, explain, and adapt is becoming the foundation of trust across mission-critical systems.

At GTC DC this year, Corey Hendricks, VP and Chief Engineer at Leidos, described a threshold in how AI is conceived for government systems.

“At Leidos, AI isn't just a single side program or a lab experiment,” he said. “It's becoming a part of our organizational DNA.” The phrase framed his argument that the next transformation in federal AI will depend not on speed, scale, or autonomy, but on reasoning.

For Hendricks, reasoning is what distinguishes AI that answers from AI that acts.

“Inference gives us answers, but reasoning gives us options, foresight and outcomes.”

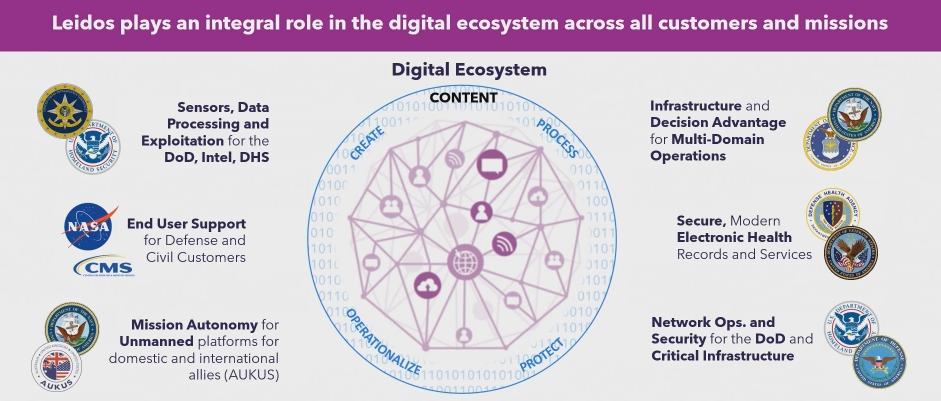

The difference might seem subtle until you place it in the context of Leidos’ work.

The company builds the systems that keep aircraft moving, data classified, veterans connected to care, and power grids stable under strain. These are not environments that can tolerate error or opacity.

In domains like these, trust is the infrastructure, and reasoning is how you engineer it.

Hendricks also argued that agentic AI isn’t about autonomy for its own sake but about developing agents that can adapt to real conditions, simulate futures, and explain their logic under audit. “Agents that reason can show their work,” Hendricks explained. “Every decision [is] linked to a transparent chain of logic that builds confidence, compliance and oversight that government missions demand.”

That principle runs through what Leidos calls the Framework for AI Resilience and Security, or FAIRS. It is both a design philosophy and a quality-control system for mission AI. Each model is tested across what Hendricks called “the seven dimensions” of trusted performance, among them fairness, assurance, resilience, explainability, and accuracy.

“We ensure that the model results are fair by detecting and mitigating biases that ensure equitable outcomes,” he said. “Model security is critical, and we focus on defending both training data as well as the models themselves from adversaries.”

Hendricks explained how FAIRS combines with another layer, the 4A methodology, which controls the degree of autonomy each AI system is allowed to exercise. He described it as “a dial on the wheel” that balances human oversight with machine speed.

The goal is to maintain a flexible architecture that can expand or contract the role of automation depending on the mission risk. Together, FAIRS and the 4A framework define how Leidos builds AI that can operate in unpredictable environments without surrendering control or explainability.
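As a rough illustration of the “dial” idea, the sketch below maps a mission risk score to the highest level of autonomy an agent may exercise. The level names, the numeric thresholds, and the `allowed_autonomy` function are all hypothetical, invented here for illustration; they are not the actual definitions in Leidos’ 4A methodology, which the talk did not spell out.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    # Hypothetical levels for illustration only; not the real 4A definitions.
    HUMAN_ONLY = 0   # a human decides; the AI takes no action
    RECOMMEND = 1    # the AI suggests; a human approves each action
    SUPERVISED = 2   # the AI acts; a human can veto
    AUTONOMOUS = 3   # the AI acts without per-action review


def allowed_autonomy(mission_risk: float,
                     ceiling: Autonomy = Autonomy.AUTONOMOUS) -> Autonomy:
    """Return the highest autonomy level permitted for a risk score in [0, 1]."""
    if not 0.0 <= mission_risk <= 1.0:
        raise ValueError("mission_risk must be between 0 and 1")
    # Assumed thresholds: higher risk contracts the role of automation.
    if mission_risk >= 0.75:
        level = Autonomy.HUMAN_ONLY
    elif mission_risk >= 0.5:
        level = Autonomy.RECOMMEND
    elif mission_risk >= 0.25:
        level = Autonomy.SUPERVISED
    else:
        level = Autonomy.AUTONOMOUS
    # A policy ceiling can turn the dial down further, but never up.
    return min(level, ceiling)


print(allowed_autonomy(0.9).name)
print(allowed_autonomy(0.1, ceiling=Autonomy.RECOMMEND).name)
```

The point of the design is that risk and policy can only narrow what the agent is allowed to do, so the human-oversight setting is never weaker than the mission calls for.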

In transportation security, Leidos AI supports screening systems like ProVision and ProSight, deployed in airports and border crossings worldwide. “Every day, over 2 million travelers around the world trust Leidos AI for their safety and their security,” he said. These systems use deep learning for threat detection, cargo manifest validation, and real-time image analysis.

In healthcare, he described how Leidos is applying the same reasoning architecture to unify patient data for the Department of Veterans Affairs. “Empower every veteran, every clinician, and every mission through intelligent, connected care,” Hendricks said. The system automates routine processes but remains transparent, ensuring that “every provider, every caregiver, and every veteran sees the same picture.” In that context, reasoning means continuity: decisions that can be traced, verified, and understood across institutions.

Hendricks then turned to energy. “The foundation of every mission is energy resilience,” he said, noting that AI workloads and datacenter demand are now straining power systems built for a different century. Leidos’ Transmission AI applies the same reasoning models used in defense logistics to the problem of grid design, exploring thousands of possible routes and configurations to minimize cost and disruption. “We’re building energy to the power of AI,” he said.

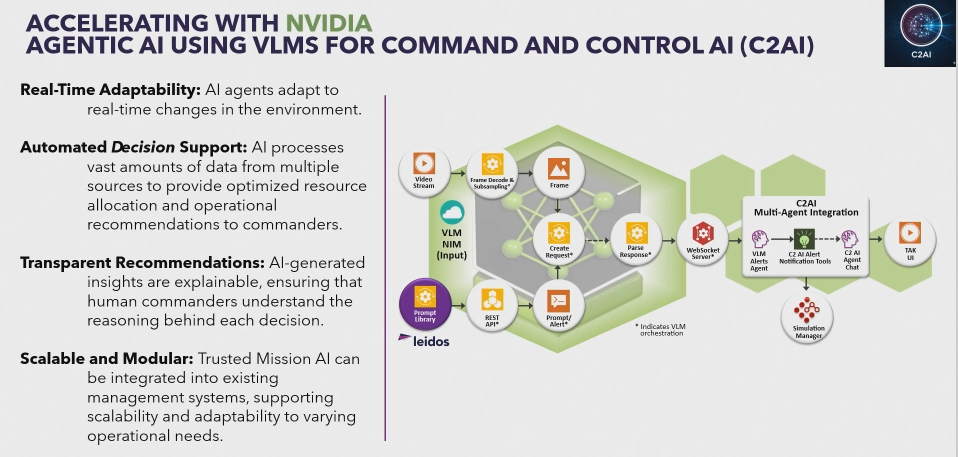

The most advanced expression of all of this is C2AI, a program for command and control. It uses multimodal reasoning models (vision, language, and sensor data) to manage fast-changing crisis environments.

“C2.AI uses an agentic AI architecture that adapts in real time,” Hendricks said. It processes inputs from satellites, field sensors, and human operators to recommend resource allocations and routes. “Every single insight C2.AI provides is transparent. Commanders don't just see recommendations, they see the reasoning behind it.” He added that the architecture is modular, composed of narrowly scoped agents, each auditable and aligned with mission intent.
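One way to picture a recommendation that carries its reasoning with it is a record that stores each agent's step alongside the final action. The sketch below is a minimal illustration under that assumption. The `Step` and `Recommendation` types, the agent names, and the sample data are all invented for this example and do not describe how C2.AI is actually built.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    agent: str       # which narrowly scoped agent produced this step
    evidence: str    # the input the agent relied on
    conclusion: str  # what the agent inferred from it


@dataclass
class Recommendation:
    action: str
    trace: list[Step] = field(default_factory=list)

    def explain(self) -> str:
        """Render the action together with the chain of steps behind it."""
        lines = [f"Recommended action: {self.action}"]
        for i, step in enumerate(self.trace, start=1):
            lines.append(f"{i}. [{step.agent}] {step.evidence} -> {step.conclusion}")
        return "\n".join(lines)


# Invented sample data, for illustration only.
rec = Recommendation(
    action="Reroute convoy via northern road",
    trace=[
        Step("imagery-agent", "satellite pass shows flooding", "southern road impassable"),
        Step("routing-agent", "southern road impassable", "northern road is shortest open route"),
    ],
)
print(rec.explain())
```

Because every step names the agent and the evidence it used, a reviewer can audit any single link in the chain without rerunning the whole system.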

Threaded through all of this was a clear philosophy: that reasoning, not raw performance, will define the next era of mission AI. Reasoning builds traceability into systems where confidence is not optional. It turns AI from a computational aid into a partner in judgment.

“It's time to integrate agentic AI into the DNA of how we operate,” Hendricks said. “To make intelligence not just something we use, but something we are.”

For Hendricks, reasoning is how AI earns its place inside mission-critical systems, where “outcomes shift from survive the complexity to thrive through it.”
