At the VAST FWD user conference, one of the highlights was getting an insider’s view into how enterprises keep scaling, modernizing, and meeting AI head on.

Among the many large companies sharing their path with VAST was CACEIS, which handles the safekeeping, accounting, and reporting of investments for large financial clients like asset managers and banks. The company gave the audience an in-depth view of its journey dealing with constant flows of sensitive data that need to be accurate, accessible, and tightly controlled.

Ultimately, the session unpacked how its mission-critical workloads moved from being spread across separate systems onto a single platform on VAST, where analytics and AI can now run against the same data without copying or moving it around.

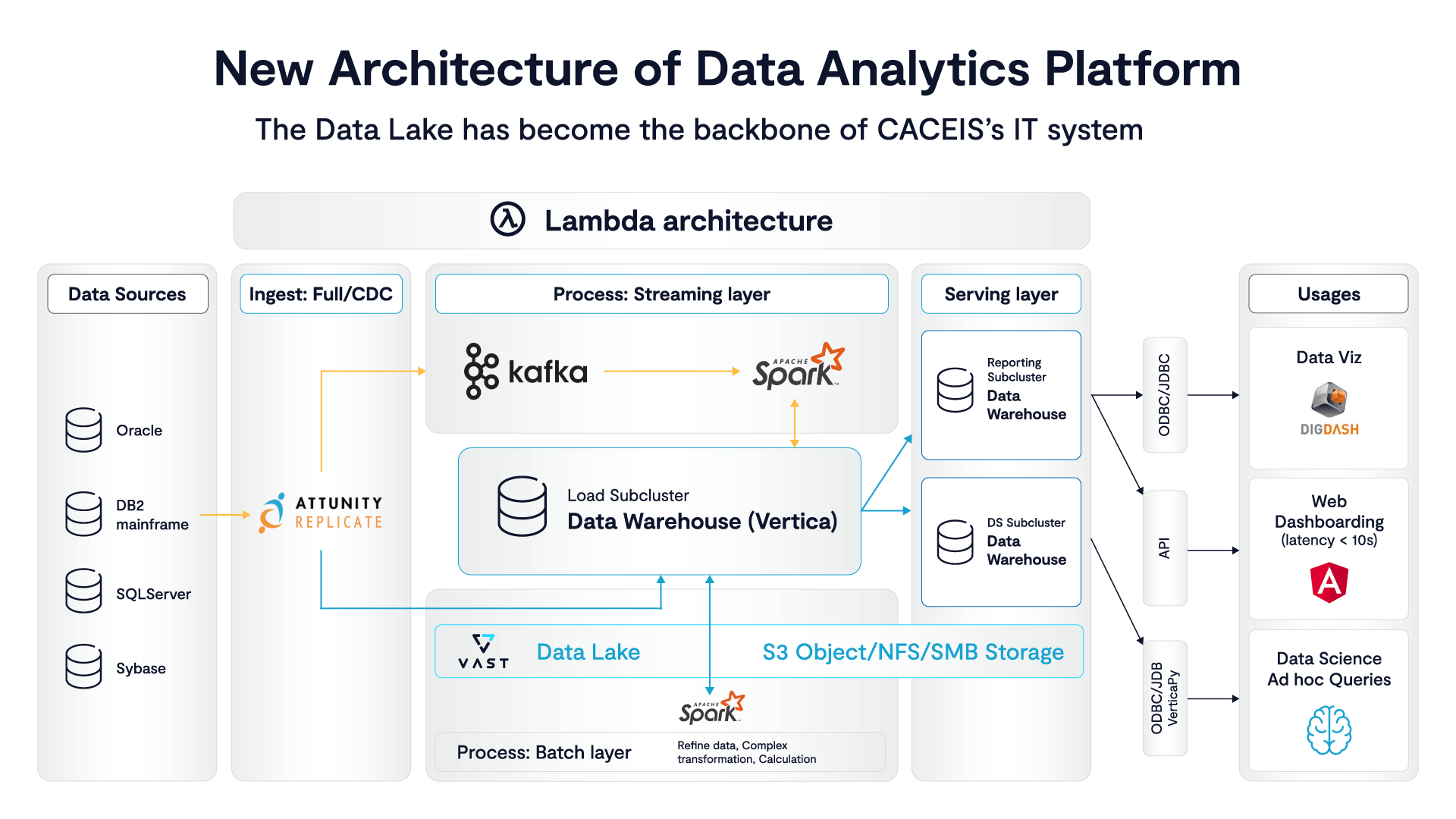

Ahmed Kessay, Head of Data and AI Offerings at DXC Technology, represented CACEIS alongside Fouad Teban, who leads the Data and AI Pipeline Team at VAST. Together they walked through how CACEIS moved away from a setup built around separate systems for warehousing, analytics, and newer AI work, all handling large volumes of financial data for reporting and client services.

As Kessay explained, that approach made it hard to scale and slowed the team's rollout of new capabilities. He also said it was difficult to manage under data sovereignty requirements, which are an important aspect of CACEIS’ work.

One of the most remarkable before/after stories we saw at VAST FWD is how those same workloads now run on one platform using the same data, without copying or moving it between systems. It might sound simple, but it’s a major overhaul with some rather stunning results.

Data Movement Broke the System, Not Compute

In an ultra-regulated financial environment where data can’t just move freely and AI depends on constant access to it, the problem becomes keeping everything coordinated and accessible in one place. With that in mind, the value of separating compute from storage and consolidating analytics and AI workloads onto VAST really can’t be overstated.

According to Kessay, the real pressure to make some changes started with how data is managed across systems, how often it gets copied, and whether it can be reused without slowing things down or creating governance issues.

It’s also important to point out the entry point wasn’t AI, but really more of a structural problem in how the data warehouse was built and how it scaled. CACEIS was running a traditional columnar MPP system with a shared-nothing design, which meant compute and storage were tightly coupled and every workload competed inside the same environment.

As we’ve seen time and again here at VAST (which is why we had so many customers talking at VAST FWD), that made it difficult to isolate ETL from analytical queries and nearly impossible to scale parts of the system independently as demand changed.

What VAST introduced at this stage was something unexpected and brilliant. By separating compute from storage and placing data on a shared object layer, the system could begin to isolate jobs and scale them independently without duplication. ETL pipelines could run without interfering with query performance, and analytics jobs could expand or contract based on demand.

As CACEIS soon saw in practice, when data is no longer bound to a single execution layer, it becomes possible to reuse it across systems without moving it.

Replacing Hadoop and Rebuilding Analytics on a Shared Data Layer

Once compute and storage were separated, the next step was a full replacement of the existing data stack.

This went beyond HDFS alone and included the Hadoop platform as a whole. VAST became both the data lake and what CACEIS calls its internal data hub. As VAST’s Fouad Teban explained, the key change is that data now lives in one place, on a shared object layer, instead of being copied across systems.

Analytics is now built directly on top of that. Data from Oracle, mainframes, SQL Server, and flat files is pulled in continuously using near real-time replication, and it feeds ad hoc queries, visualization, dashboarding, and more.

With that foundation in place, the system extends into AI without introducing a new pipeline or duplicating data. The same data that feeds analytics is now used directly for AI workloads, with VAST providing the persistence layer through DataStore, the query and indexing layer through VAST Database for both vector and tabular data, and the execution layer through DataEngine for processing and orchestration.

In this setup, AI runs against the same shared data as analytics, using the same platform, which removes the need to move data into separate systems and keeps both workloads aligned on a single source. It’s hard to overstate how much that tightens up everything for CACEIS.

RAG and Query Go Native

Once analytics and AI are using the same data, the next shift is how that data gets accessed.

What would normally require separate pipelines (not to mention a standalone vector database) is all handled by VAST. As Kessay demonstrated, documents come in through SharePoint via VAST Sync Engine, changes are picked up by triggers, and DataEngine runs the chunking and embedding before storing everything in the VAST Database as vectors and metadata. The cool thing here is that there’s no handoff to another system; the whole pipeline runs in one place.
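To make the chunk, embed, and store steps concrete, here is a minimal sketch in plain Python. It is not VAST's API: `chunk_text`, `toy_embed`, `ingest`, and the in-memory `table` list are hypothetical stand-ins, and the hashed bag-of-words "embedding" only illustrates the shape of the data, where a real pipeline would call an embedding model.

```python
import hashlib
import math
from dataclasses import dataclass


@dataclass
class VectorRow:
    """One stored chunk: the vector plus the metadata needed to trace it back."""
    doc_id: str
    chunk_no: int
    text: str
    vector: list[float]


def chunk_text(text: str, size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into overlapping windows of `size` words."""
    words = text.split()
    if not words:
        return []
    step = size - overlap
    # Stop early enough that the last window is not fully contained in the previous one.
    starts = range(0, max(len(words) - overlap, 1), step)
    return [" ".join(words[i:i + size]) for i in starts]


def toy_embed(text: str, dim: int = 64) -> list[float]:
    """Hypothetical embedding: hash each token into a bucket, then L2-normalize."""
    vec = [0.0] * dim
    for token in text.lower().split():
        bucket = int(hashlib.md5(token.encode()).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def ingest(doc_id: str, text: str, table: list[VectorRow]) -> int:
    """Chunk a document, embed each chunk, and append the rows to one table."""
    chunks = chunk_text(text)
    for n, chunk in enumerate(chunks):
        table.append(VectorRow(doc_id, n, chunk, toy_embed(chunk)))
    return len(chunks)
```

The point of keeping `doc_id` and `chunk_no` next to each vector is that every later answer can point back to the exact source passage, which is what the traceability requirement in a financial setting calls for.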

Querying follows the same pattern. The LLM layer (using NVIDIA NIMs) retrieves results directly from VAST, and the system returns answers along with the source material behind them. That matters in a financial setting where traceability is required. For this use case, the value is in seeing how the full RAG loop stays inside the platform without duplicating data or introducing another service.
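The retrieval half of that loop can be sketched the same way. This is an illustrative assumption rather than how VAST or NVIDIA NIMs implement it: `embed`, `cosine`, `retrieve`, and `answer_with_sources` are hypothetical names, word counts stand in for real embeddings, and the LLM call is left out so only the retrieved context and its sources are returned.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Hypothetical embedding: a simple bag-of-words count."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0


def retrieve(question: str, chunks: list[dict], k: int = 2) -> list[dict]:
    """Return the k stored chunks most similar to the question."""
    q = embed(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c["text"])), reverse=True)
    return ranked[:k]


def answer_with_sources(question: str, chunks: list[dict], k: int = 2) -> dict:
    """Build the context for an LLM and keep the source references alongside it."""
    hits = retrieve(question, chunks, k)
    return {
        # A real system would pass this context plus the question to the LLM.
        "context": "\n".join(h["text"] for h in hits),
        "sources": [f'{h["source"]}#chunk{h["chunk_no"]}' for h in hits],
    }
```

Returning the `sources` list together with the context is the design choice that matters here: the answer and its evidence travel as one result instead of being reconstructed afterward.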

The same idea applies to structured data. Users can ask questions in plain language, which are translated into SQL, executed, and then rewritten into a response. Both document retrieval and database queries run on the same data, which means VAST isn’t just storing data, it’s the layer everything runs through.

Collapsing the Remaining Pipelines: Streaming and ELT Move Into VAST

So we’ve arrived at a point where AI and analytics are running on the same data, and the natural next step is pulling in the remaining pipelines that still sit outside the platform. Today, streaming and transformation are handled separately, with Kafka for eventing and tools like Talend for ETL, which means data still has to move between systems. That movement adds cost, latency, and operational overhead, especially when the same data is already sitting inside VAST.

The direction is to remove that separation. Kafka is being replaced with VAST Event Broker using Kafka-compatible APIs, and ETL workflows are shifting into ELT executed directly inside the platform through DataEngine. Kessay says that instead of pushing data out to external systems for processing, transformations run where the data already lives, which means big gains in performance and efficiency.
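The difference between ETL and ELT is easiest to see in code. In the sketch below, raw records are loaded untouched and the cleanup then runs as SQL inside the same store, so nothing is exported for processing. It uses Python's built-in `sqlite3` purely as a stand-in; the table names and the `load_raw` and `transform_in_place` helpers are hypothetical and say nothing about how DataEngine is actually invoked.

```python
import sqlite3


def load_raw(conn: sqlite3.Connection, rows: list[tuple[str, str, str]]) -> None:
    """Load step: land the records exactly as they arrived, as untyped text."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS raw_trades (trade_id TEXT, amount TEXT, currency TEXT)"
    )
    conn.executemany("INSERT INTO raw_trades VALUES (?, ?, ?)", rows)


def transform_in_place(conn: sqlite3.Connection) -> None:
    """Transform step: clean and type the data where it already lives."""
    conn.execute("DROP TABLE IF EXISTS trades_clean")
    conn.execute(
        """
        CREATE TABLE trades_clean AS
        SELECT trade_id,
               CAST(amount AS REAL)  AS amount,
               UPPER(TRIM(currency)) AS currency
        FROM raw_trades
        WHERE TRIM(amount) <> ''   -- drop records with no amount
        """
    )
```

Because the raw table is kept, the transformation can be rerun or changed later without pulling the data from the source systems again.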

He adds that what this changes is how the system behaves as it grows. Streaming, transformation, analytics, and AI all operate on the same shared data layer, which reduces duplication and simplifies operations. Pipelines don’t disappear per se, but they do stop being separate systems and become functions running inside VAST, using the same data that everything else depends on.

From Platform to System: AI Factory, Sovereignty, and Full-Stack Convergence

As more moves onto VAST, the remaining gaps come down to where AI runs and where the data is allowed to live. The RAG pipelines that started in Azure are being brought back on-prem because of data sovereignty. Some of this data can’t leave controlled environments, and once that becomes clear, the architecture has to change.

At the same time, GPU horsepower is being added so AI workloads can run directly against that same data. Instead of moving data to where compute lives, compute is brought to the data. That’s what turns this into an AI factory, where data, processing, and models are all coordinated in one place.

DataStore holds the data, VAST Database handles both vector and structured queries, DataEngine runs processing, Insight Engine drives pipelines like RAG, and Sync Engine handles ingestion. Workloads stay separated where needed, but they all use the same data without copying it, so everything runs together instead of being split across layers.

What Actually Changes: Performance, Teams, and Why This Model Spreads

The biggest change shows up in speed. Projects that used to take months now take weeks because there’s no need to connect multiple systems or move data between them.

“Without VAST, it will take a month, maybe years. But with VAST, it take few weeks to do things. VAST brings performance facility, simplicity and speed, and that's we are looking for,” Kessay said.

He adds that the same shift changes how teams operate. Data engineers, analysts, and AI teams are working on the same platform using the same data instead of passing work between systems.

“This is the first time I see data engineers, business analysts, data scientists and AI practitioners collaborating around the same use cases,” Kessay told the audience. The platform lines up the work so teams aren’t separated by tooling, he added.