When you get to the heart of why many companies leave the cloud, it’s often because of expense. But for security behemoth CrowdStrike, the reasons were far more complex, prompting a brilliant rethink of how to (re)build for scale from the ground up.

Appearing in front of a packed crowd at VAST FWD 2026, Guy Lawless, Senior Director of Production Systems at CrowdStrike, went way beyond trimming cloud spend and shifting cold data to cheaper tiers. The tale he told is of a security platform that grew fast enough, ingested enough data, and bound itself tightly enough to S3 semantics that its own success forced a far deeper architectural break.

As Lawless explained, the problem wasn’t storage cost alone but that the system supporting the business could no longer behave as, well, a system.

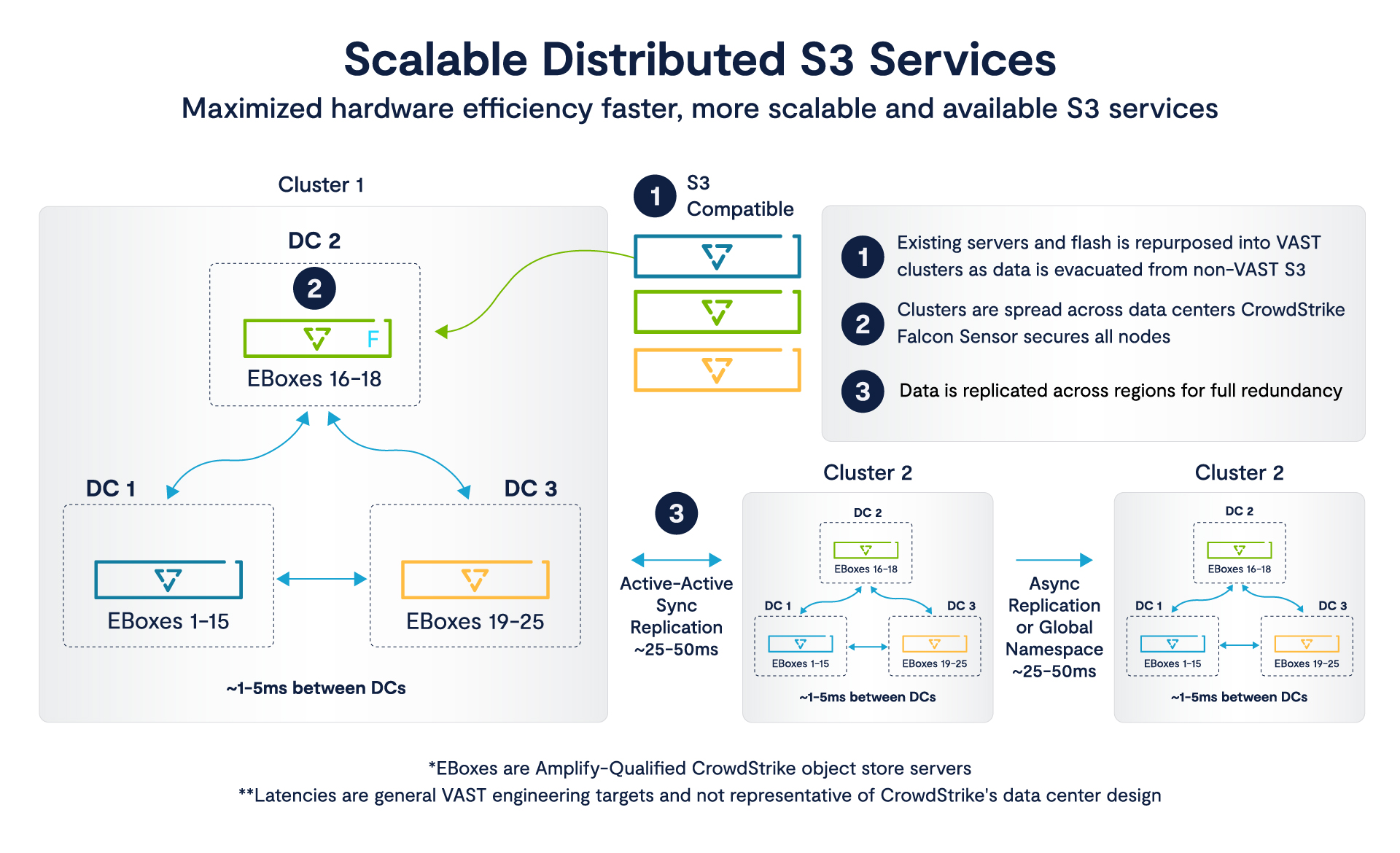

By the time CrowdStrike crossed into tens of thousands of nodes, it was suddenly in new territory where it was competing with hyperscalers for power, space, and supply. It was at this point that the real issue surfaced. Their broad range of applications was built around S3, their growth depended on ever-expanding data retention and analytics, and the economics of continued growth depended on escaping that very platform without rewriting everything above it.

As Lawless outlined, that is the kind of constraint that does not get solved with a storage swap, meaning what he presented at FWD was far less of a migration story and more a case study of what happens when cloud-native architecture hits the limits of its own abstraction and has to be rebuilt, underneath itself, without breaking.

What became abundantly clear was that while cost was an ongoing concern, the breaking point wasn’t cost. It was coordination.

This is a familiar problem at scale because, if you think about it, CrowdStrike did what every successful SaaS company of the last decade was supposed to do. They built cloud-first, scaled fast, and let AWS absorb the early complexity of infrastructure. And for a long time, that model worked exactly as advertised, Lawless says. New customers meant more data, more endpoints, more telemetry, and the system expanded cleanly alongside them. As time and growth continued, the very properties that made that growth possible began to turn against the systems team as the volume of data, the retention windows, and the analytical demands of the platform all expanded at once.

While the coordination and infrastructure solutions are the story here, cost was always a concern. CrowdStrike needed to reach a margin profile that would sustain it as a public entity, which meant pulling high-cost workloads out of the cloud. The most important candidates for this shift were applications where data shuttled around the most and where analysis was most intensive, the exact places where cloud abstractions tend to hide their true cost. As those workloads grew, cost scaled with them in a way that was no longer linear or predictable, Lawless explained.

What made this harder (and what makes this story more than a cost optimization exercise) is that S3 (which again, everything was built on) wasn’t a backend service that could just be swapped out. It was the literal connective tissue across microservices and, as Lawless details, the underlying assumption embedded in everything from how data was written to how it was retrieved and processed across the entire platform. In short, cutting cost meant preserving the semantics while relocating the infrastructure underneath.

For Lawless and team, it was no longer about moving data to a cheaper place, it was much more about maintaining a coherent system while removing one of its foundational dependencies. He reminded the crowd that the cloud hadn’t failed them, it was just too central to extract cleanly.

The shift from a few thousand nodes to well over a hundred thousand is where things got really interesting.

By the end of 2019, Lawless said CrowdStrike was operating roughly 5,500 nodes. That is already a meaningful distributed system, but still one where architecture can absorb inefficiencies without huge consequence. What followed over the next several years was not incremental growth but expansion at a rate that changes the physics of the system itself.

Today, that footprint has crossed 150,000 nodes spread across a dozen datacenters in the United States and Europe.

At that scale, the constraints are physical and immediate. Power becomes a gating factor, rack space becomes a negotiation, and even “smaller” worries like component supply go from procurement item to strategic risk. CrowdStrike is explicit about this transition. And to reiterate, they are competing for the same resources as hyperscalers (capacity, allocations of drives, timelines for hardware refresh, etc.).

At internet scale, storage is doing the heavy lifting that the entire application architecture depends on. That means it needs to behave like a global, fault-tolerant, API-compatible data plane.

Lawless explains that generational transitions can’t lag demand, nor can they outpace supply. The system has to be built with enough flexibility to absorb volatility in NAND availability, in DRAM pricing, in network equipment lead times, all while continuing to ingest and analyze data at an accelerating rate.

Moving workloads out of the public cloud gets progressively harder as those workloads become more critical to the business. While those early migrations targeted the obvious candidates (the data-heavy, the cost-intensive, etc.), every remaining service was more entangled and therefore more sensitive to disruption.

And S3 had become a barrier to entry for moving applications out of AWS.

As Lawless explained, there’s no single, strict S3 standard. What exists is a set of APIs and behaviors that applications learn over time (how objects are written, how consistency is handled, how failures surface, how retries behave, how edge conditions resolve, and so on). When an application ecosystem grows around those semantics, it is not trivial to reproduce them somewhere else and even less trivial to do it at scale without introducing divergence.

Rewriting the application layer was not an option, Lawless said. The number of services involved, and the degree to which they were interdependent, would have turned that effort into a multi-year architectural reset with unacceptable risk. So the constraint became clear.

Whatever replaced S3 had to behave like S3 and had to accept existing workloads without modification and produce identical outcomes under load, under failure, and under concurrency.

Lawless says this goes way beyond building object storage in a datacenter. He and his team had to think about recreating a core interface of the cloud inside a private infrastructure, with full compatibility and without breaking the system that depends on it.

That is a much harder problem than cost. It is a problem of preserving semantics while relocating the system underneath them.
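One way to picture "identical outcomes" is a differential test: replay the same operations against a reference store and a candidate store, then flag any step where the results differ. The sketch below is only an illustration of that idea. The `ObjectStore` interface, both store classes, and the operation log are hypothetical in-memory stand-ins, not the S3 API and not anything CrowdStrike described using.

```python
from typing import Optional, Protocol


class ObjectStore(Protocol):
    """Hypothetical minimal object interface (an assumption, not the S3 API)."""
    def put(self, key: str, data: bytes) -> None: ...
    def get(self, key: str) -> Optional[bytes]: ...
    def delete(self, key: str) -> None: ...


class InMemoryStore:
    """Reference behavior: last write wins, deleting a missing key is a no-op."""
    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> Optional[bytes]:
        return self._objects.get(key)

    def delete(self, key: str) -> None:
        self._objects.pop(key, None)


class StaleOverwriteStore(InMemoryStore):
    """Deliberately divergent candidate: an overwrite keeps the old value."""
    def put(self, key: str, data: bytes) -> None:
        self._objects.setdefault(key, data)


def replay(store: ObjectStore, ops):
    """Apply (op, key, data) tuples in order and record what each one returned."""
    results = []
    for op, key, data in ops:
        if op == "put":
            store.put(key, data)
            results.append(None)
        elif op == "get":
            results.append(store.get(key))
        elif op == "delete":
            store.delete(key)
            results.append(None)
        else:
            raise ValueError(f"unknown operation: {op}")
    return results


def diverging_steps(reference: ObjectStore, candidate: ObjectStore, ops):
    """Return the indices of operations whose results differ between the stores."""
    ref_results = replay(reference, ops)
    cand_results = replay(candidate, ops)
    return [i for i, (a, b) in enumerate(zip(ref_results, cand_results)) if a != b]


# Hypothetical operation log exercising an overwrite and a delete.
ops = [
    ("put", "a", b"v1"),
    ("put", "a", b"v2"),
    ("get", "a", None),
    ("delete", "a", None),
    ("get", "a", None),
]

same = diverging_steps(InMemoryStore(), InMemoryStore(), ops)
different = diverging_steps(InMemoryStore(), StaleOverwriteStore(), ops)
print(same, different)
```

A real compatibility effort would also need to cover concurrency, failure injection, and error responses, which this toy harness does not attempt.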

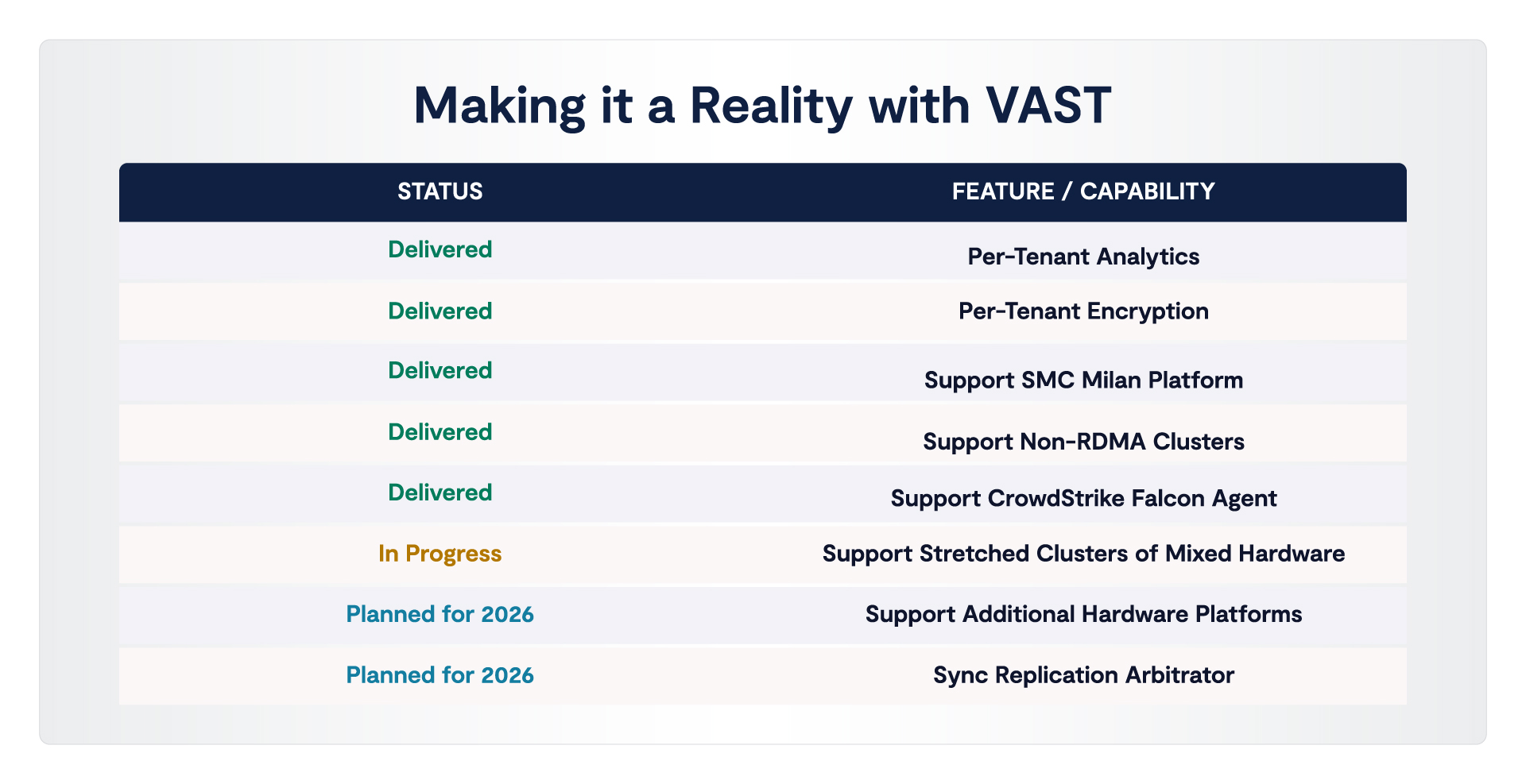

The first attempt looked reasonable in theory. They introduced an object storage layer into CrowdStrike’s datacenters, gave them a path off S3 for some workloads, and lowered the immediate cost of storage. But at scale, Lawless admits, the limitations of that approach quickly became clear, particularly in terms of efficiency.

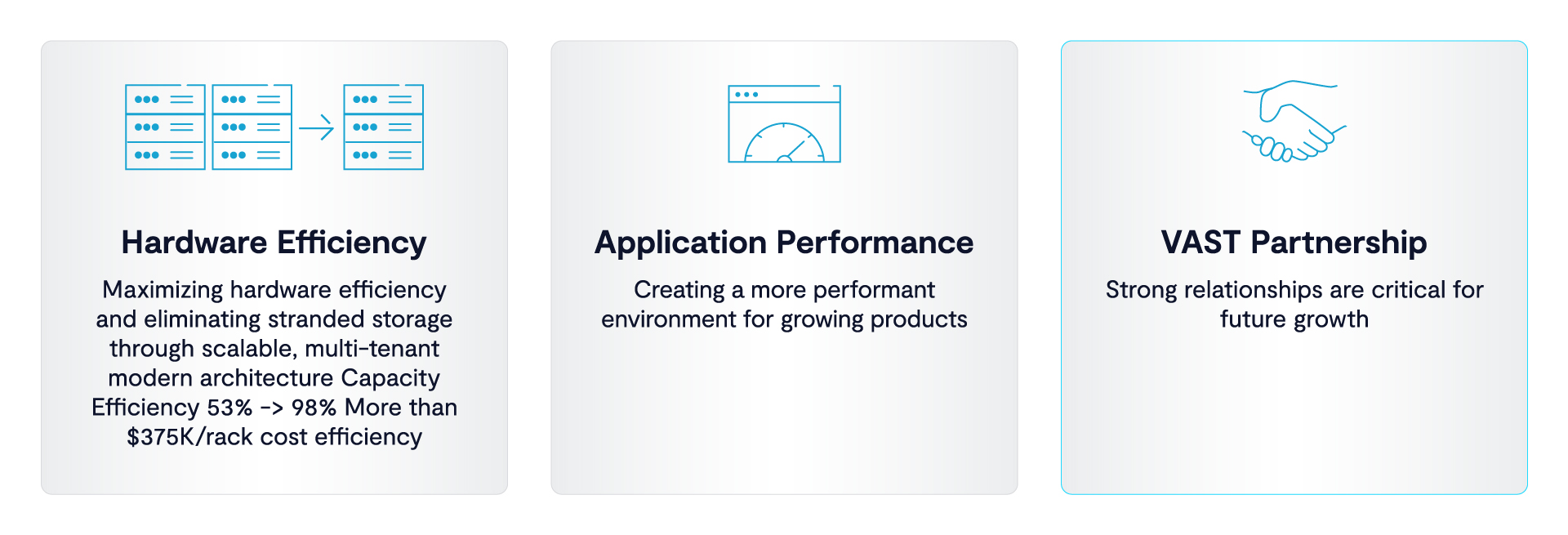

He says with their previous vendor, they were operating at roughly 53% usable capacity. Nearly half the hardware footprint was effectively overhead, consumed by the way data was protected and distributed across the system. At smaller scales, that kind of inefficiency can be tolerated or masked. At tens of thousands of nodes, it becomes a direct multiplier on capital expense, power consumption, and physical space. When they compared that to what they would later achieve, the delta was the difference between leaving large amounts of capacity stranded and reclaiming almost the entire footprint for usable data.

The even deeper problem wasn’t just whether that capacity was actually usable but how that capacity was organized.

Lawless walked the crowd through how that version forced them into isolated clusters, each tied to a specific workload or application domain. This created a fragmentation pattern familiar to anyone who’s operated storage at scale.

And as is too often the case in distributed systems, the little things were the big things. Capacity had to be provisioned in advance based on forecasts that were almost always wrong in one direction or the other. Some clusters would run hot and require expansion. Others would sit underutilized with large amounts of reserved space that could not be reassigned. On the ground this meant a team would request 5 petabytes, consume one, and the remaining 4 would be locked in place, unavailable to other parts of the system that might actually need it.

That fragmentation went way beyond their storage cluster, propagating upwards into operations. More clusters meant more systems to manage, more patching domains, more failure surfaces, and worse, more opportunities for performance degradation during maintenance. He adds that it also propagated downward into hardware and facilities because, of course, more clusters meant more servers, more disks, more racks, more network ports, more power draw.

With hundreds of thousands of drives in the environment, failures were just continuous, Lawless said, explaining they would just assume disks were failing somewhere at any given time and that operators were constantly replacing components.

In a fragmented architecture, that creates a steady operational burden that scales with the size of the deployment. Lawless and team saw this firsthand.

But this difficult lesson sparked a radical idea…

What changed with VAST is easiest to misunderstand if you look at it as a better implementation of the same thing. It is not. The differences show up first in metrics, but the real shift is in how the system allocates, recovers, and behaves under pressure.

The most obvious number is capacity efficiency. On the same hardware, with the same disks and servers already deployed in CrowdStrike’s datacenters, usable capacity moved from roughly 53% to about 98%.
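To put those two percentages in hardware terms, here is a small back-of-the-envelope calculation. The 53% and 98% figures come from the talk; the 100 PB usable target is a made-up number chosen only to make the arithmetic concrete.

```python
def raw_needed(usable_pb: float, efficiency: float) -> float:
    """Raw capacity (PB) that must be deployed to expose `usable_pb` of usable space."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return usable_pb / efficiency


OLD_EFFICIENCY = 0.53  # from the talk: usable fraction with the previous vendor
NEW_EFFICIENCY = 0.98  # from the talk: usable fraction on the same hardware

target_usable_pb = 100.0  # hypothetical target, for illustration only

old_raw = raw_needed(target_usable_pb, OLD_EFFICIENCY)
new_raw = raw_needed(target_usable_pb, NEW_EFFICIENCY)
gain = NEW_EFFICIENCY / OLD_EFFICIENCY  # usable capacity multiplier on fixed hardware

print(f"raw PB needed at 53%: {old_raw:.1f}")  # 188.7
print(f"raw PB needed at 98%: {new_raw:.1f}")  # 102.0
print(f"usable capacity gain on the same hardware: {gain:.2f}x")  # 1.85x
```

In other words, the same disks expose roughly 1.85 times as much usable space, or equivalently a fixed usable target needs a little over half the raw footprint it did before.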

Lawless emphasized that was certainly not a tuning boost but the actual removal of real overhead. The implication is that capacity is no longer carved into isolated protection domains with fixed boundaries. It’s pooled and protected in a way that allows nearly the entire footprint to be available for data without sacrificing resilience.

Just as the FWD keynotes from VAST CEO and founder Renen Hallak and VAST co-founder Jeff Denworth argued, that change immediately collapses the fragmentation problem. Instead of provisioning storage per cluster and hoping forecasts hold, capacity becomes globally addressable.

Multi-tenant allocation is the absolute key to eliminating that stranded space. The same scenario that previously left petabytes locked inside an underutilized cluster now resolves differently. That capacity can be reassigned in place to workloads that need it, without migration, without reprovisioning, and without introducing new operational domains.
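The difference between siloed and pooled allocation can be shown with a toy model. The sketch below is an illustration under simple assumptions: the tenant names, reservations, and demand figures are hypothetical, and real systems also account for protection overhead and placement constraints that are ignored here.

```python
from dataclasses import dataclass


@dataclass
class Tenant:
    name: str
    reserved_pb: float  # capacity provisioned from a forecast
    demand_pb: float    # capacity the workload actually needs


def siloed(tenants):
    """Each tenant can only use its own reservation."""
    stranded = sum(max(t.reserved_pb - t.demand_pb, 0.0) for t in tenants)
    unmet = sum(max(t.demand_pb - t.reserved_pb, 0.0) for t in tenants)
    return stranded, unmet


def pooled(tenants):
    """All reservations form one pool that any tenant can draw from."""
    total = sum(t.reserved_pb for t in tenants)
    demand = sum(t.demand_pb for t in tenants)
    free = max(total - demand, 0.0)   # still reassignable, so not stranded
    unmet = max(demand - total, 0.0)
    return free, unmet


# Hypothetical tenants: one over-forecast, one under-forecast.
tenants = [
    Tenant("analytics", reserved_pb=5.0, demand_pb=1.0),
    Tenant("ingest", reserved_pb=3.0, demand_pb=6.0),
]

siloed_stranded, siloed_unmet = siloed(tenants)
pooled_free, pooled_unmet = pooled(tenants)

print(f"siloed: {siloed_stranded} PB stranded, {siloed_unmet} PB unmet")
print(f"pooled: {pooled_free} PB free, {pooled_unmet} PB unmet")
```

With the same 8 PB of hardware, the siloed layout strands 4 PB while leaving 3 PB of demand unserved, whereas the pooled layout serves everything and keeps 1 PB free.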

Performance gains follow from that same shift in structure. CrowdStrike reports roughly a 30% improvement in write commit performance and a doubling of read performance over their previous system.

Those gains matter in context when you consider ingestion is growing on the order of 30-40% quarter over quarter, and retention windows are expanding from weeks to months. Performance is not just about faster access. It is about sustaining growth without forcing architectural changes higher up the stack.
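Quarter-over-quarter growth compounds quickly, which is easy to underestimate. The short calculation below uses the 30-40% range quoted above and assumes, purely for illustration, that the rate holds steady for four consecutive quarters.

```python
import math


def compound(rate: float, quarters: int) -> float:
    """Growth multiple after `quarters` periods at a fixed per-quarter rate."""
    return (1.0 + rate) ** quarters


def quarters_to_double(rate: float) -> float:
    """Number of quarters needed for volume to double at a fixed rate."""
    return math.log(2.0) / math.log(1.0 + rate)


for rate in (0.30, 0.40):
    yearly = compound(rate, 4)
    doubling = quarters_to_double(rate)
    print(f"{rate:.0%} per quarter -> {yearly:.2f}x per year, "
          f"doubling every {doubling:.1f} quarters")
```

At a sustained 30% per quarter, ingestion grows about 2.9x in a year; at 40%, about 3.8x, doubling roughly every two quarters.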

The even more telling differences show up when the system is stressed. Lawless and team induced failure conditions mid-operation. During upgrades, they even physically disrupted connectivity, pulling cables and forcing interruptions at arbitrary points in the process. What they observed was that all-important continuity. He said that when an upgrade failed, it resumed from the exact point of interruption, without restarting the process and without disrupting client work.

Under conditions where replication bandwidth was constrained, the system adapted, shifting to asynchronous behavior to absorb the load rather than failing or throttling unpredictably.

These behaviors point to a system that maintains explicit state about its operations and can continue forward progress in the presence of failure. That is a different model than one that treats failure as an exception to recover from after the fact. It is closer to a continuously running process that expects interruption and is designed to absorb it.
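The general pattern of keeping explicit state so work can resume where it stopped can be sketched with a checkpointed step runner. This is a generic illustration of that pattern, not a description of how VAST implements upgrades. The step names, the state file, and the `fail_before` hook are all hypothetical.

```python
import json
import os
import tempfile


class Interrupted(Exception):
    """Simulates a cable pull or crash in the middle of an upgrade."""


def load_next_step(state_path: str) -> int:
    """Return the index of the next step to run (0 if no checkpoint exists)."""
    if not os.path.exists(state_path):
        return 0
    with open(state_path) as f:
        return json.load(f)["next_step"]


def save_next_step(state_path: str, next_step: int) -> None:
    """Persist the checkpoint atomically: write a temp file, then rename it."""
    tmp_path = state_path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump({"next_step": next_step}, f)
    os.replace(tmp_path, state_path)


def run_upgrade(steps, state_path: str, fail_before=None) -> None:
    """Run steps in order, starting from the last recorded checkpoint."""
    for index in range(load_next_step(state_path), len(steps)):
        if index == fail_before:
            raise Interrupted(f"interrupted before step {index}")
        steps[index]()
        save_next_step(state_path, index + 1)


# Hypothetical upgrade steps that simply record their own execution.
log = []
step_names = ["drain", "install", "verify", "rejoin"]
steps = [lambda name=name: log.append(name) for name in step_names]

with tempfile.TemporaryDirectory() as workdir:
    state_path = os.path.join(workdir, "upgrade_state.json")
    try:
        run_upgrade(steps, state_path, fail_before=2)  # first attempt is interrupted
    except Interrupted:
        pass
    log_after_failure = list(log)
    run_upgrade(steps, state_path)  # second attempt resumes at "verify"

print(log_after_failure)
print(log)
```

The second run picks up at the recorded step instead of starting over, so each step executes once. In this sketch a crash in the middle of a step would cause that step to be re-run, so real steps would need to be idempotent.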

The final requirement, the biggie that he says determines whether any of this would be usable in practice, is compatibility. All of this had to happen without rewriting the applications that depended on S3. CrowdStrike tested their existing services against the new environment and found that they could be moved without modification.

The interactions, the edge cases, the operational semantics all held. As Lawless confirmed, the integration was seamless.

Taken together, these changes go way beyond incremental improvements to storage. It’s the same shift in how the system is structured that was the backbone of everything at VAST FWD. Capacity is no longer partitioned, failure is no longer exceptional, and compatibility is preserved at the boundary that matters most, the application interface.

Capacity doesn’t have to be carved up ahead of time and protected within fixed boundaries. Failure doesn’t force the system into a stop-and-recover cycle. And the application layer does not need to be rewritten to accommodate any of it. The system continues forward, reallocating, resuming, and absorbing disruption as part of normal operation. And it’s a thing of beauty.