Managing data for AI workloads can be complex, but combining the VAST CSI driver and NVIDIA Run:AI’s data source abstraction makes the process dramatically simpler.

Together, VAST and NVIDIA Run:AI streamline provisioning, unify storage access, and remove low-level Kubernetes friction by pairing VAST’s multi-protocol architecture with NVIDIA Run:AI’s PVC-based data management.

Simplified Provisioning and Abstraction

To see how this integration improves real-world operations, let’s start with how VAST and NVIDIA Run:AI streamline the basic task of provisioning and accessing data.

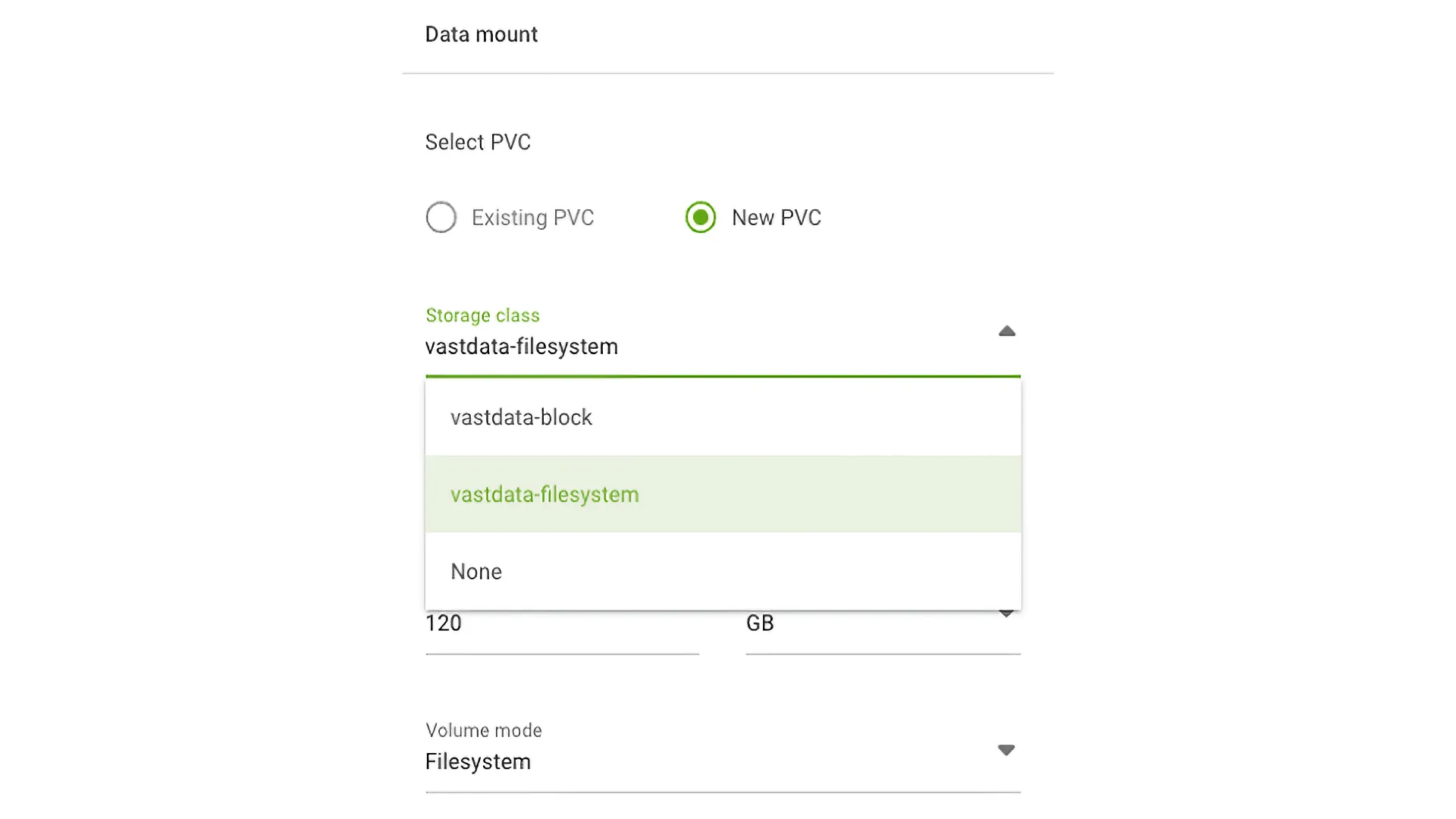

VAST Multi-Protocol Efficiency: The VAST CSI driver can provision both block volumes and file system (NFS) volumes (for shared access and model serving) from the same underlying data platform. This simplifies the administrator’s job, as there is only one data platform to manage for all AI needs. Using VAST CSI, the administrator simply selects the appropriate storage class from the list and then creates a new PVC, or attaches an existing one, based on that selection.

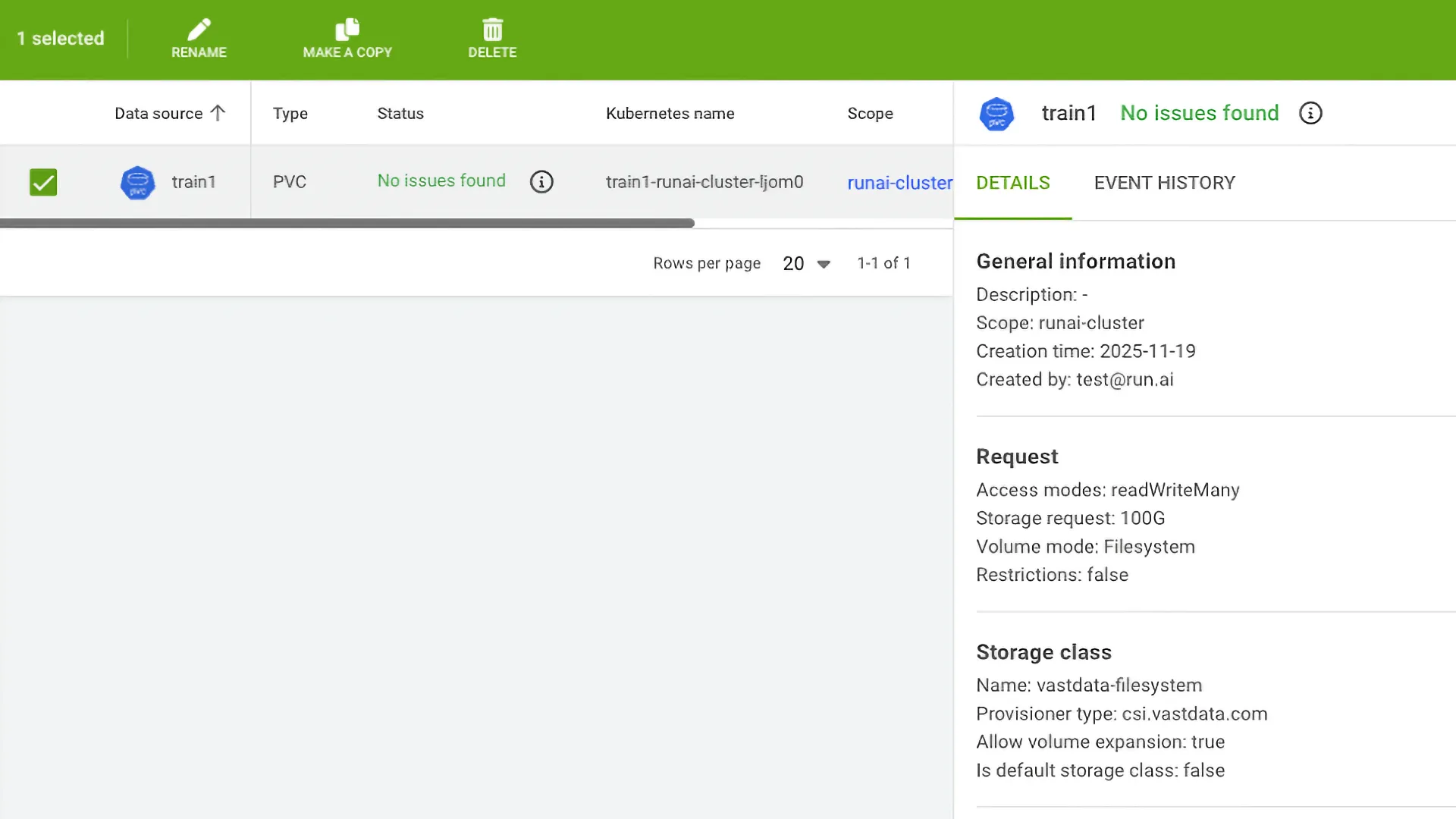

In this example, the storage classes for both block and file system volumes are installed using the VAST CSI driver.

NVIDIA Run:AI PVC Abstraction: NVIDIA Run:AI abstracts the complexity of Kubernetes PVCs via its Data Sources feature. An administrator pre-defines PVC-type Data Sources (backed by VAST StorageClasses) and exposes them to researchers.

Researcher Self-Service: Researchers request data access simply by name (e.g., “project-a-pvc”) rather than needing to know the complex details of the VAST provisioner, StorageClass mount parameters, or volume binding.
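As a rough illustration of what a Data Source hides from researchers, the sketch below builds the kind of PersistentVolumeClaim an administrator might define once. The claim name reuses the “project-a-pvc” example; the StorageClass name `vastdata-filesystem`, the namespace, and the size are hypothetical placeholders, not values taken from this setup.

```python
import json


def build_pvc_manifest(name, storage_class, size="100Gi", namespace="default"):
    """Return a Kubernetes PersistentVolumeClaim manifest as a plain dict."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # ReadWriteMany suits shared, NFS-style volumes mounted by many pods.
            "accessModes": ["ReadWriteMany"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


# Hypothetical names: replace with the StorageClass and namespace in your cluster.
pvc = build_pvc_manifest(
    "project-a-pvc", "vastdata-filesystem", namespace="runai-project-a"
)
print(json.dumps(pvc, indent=2))
```

The printed JSON is a valid manifest format for `kubectl apply -f -`. The researcher never sees any of it and only refers to the claim by name.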

Consistency and Advanced Feature Utilization

Beyond simplifying provisioning, the integration also ensures that advanced VAST features are consistently available through Kubernetes without adding operational overhead.

Snapshots and Clones: VAST’s native storage efficiency and performance features, such as high-performance snapshots and clones, are exposed directly through the CSI interface. The administrator manages these capabilities via standard Kubernetes VolumeSnapshot objects, ensuring fast data iteration and recovery without needing to learn separate VAST management tools for basic operations.

Simplified Model Lifecycle: The ability to instantly clone a trained model PVC or restore from a snapshot makes managing the lifecycle of development, training, and deployment seamless and efficient for the administrator.
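For readers less familiar with the Kubernetes snapshot API, here is a minimal sketch of the two standard objects involved: a VolumeSnapshot taken from a PVC, and a new PVC restored from that snapshot. The object names, the snapshot class `vastdata-snapshot`, and the StorageClass `vastdata-filesystem` are hypothetical; only the manifest structure comes from the Kubernetes API.

```python
import json


def build_snapshot(name, source_pvc, snapshot_class):
    """VolumeSnapshot manifest that captures the current state of a PVC."""
    return {
        "apiVersion": "snapshot.storage.k8s.io/v1",
        "kind": "VolumeSnapshot",
        "metadata": {"name": name},
        "spec": {
            "volumeSnapshotClassName": snapshot_class,
            "source": {"persistentVolumeClaimName": source_pvc},
        },
    }


def build_pvc_from_snapshot(name, snapshot_name, storage_class, size):
    """PVC manifest whose contents are populated from an existing VolumeSnapshot."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteMany"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
            "dataSource": {
                "apiGroup": "snapshot.storage.k8s.io",
                "kind": "VolumeSnapshot",
                "name": snapshot_name,
            },
        },
    }


# Hypothetical names for illustration only.
snapshot = build_snapshot("trained-model-snap", "project-a-pvc", "vastdata-snapshot")
restored = build_pvc_from_snapshot(
    "trained-model-restore", "trained-model-snap", "vastdata-filesystem", "100Gi"
)
print(json.dumps([snapshot, restored], indent=2))
```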

Multi-Tenancy on VAST

With provisioning and advanced features handled, the next challenge is ensuring each project or team has secure, isolated access to storage. VAST’s native multi-tenancy makes this simple.

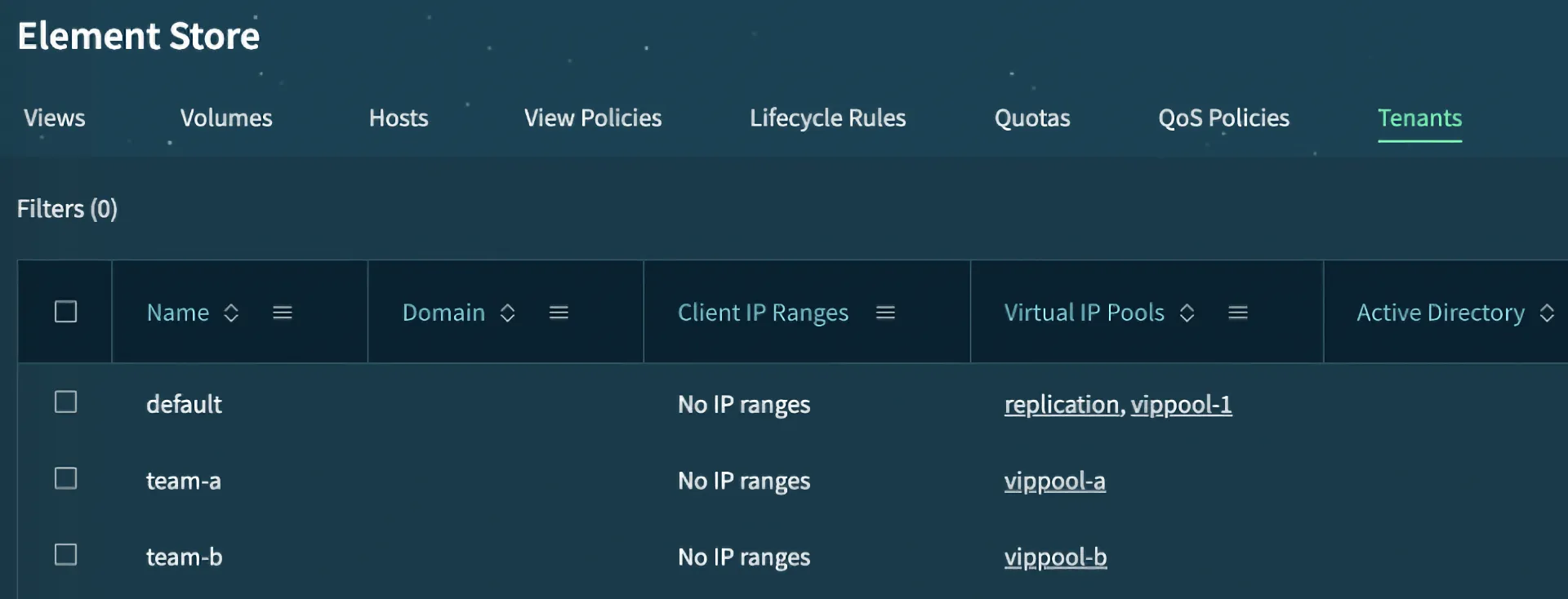

The VAST Cluster’s multi-tenancy directly supports the needs of a job orchestrator like NVIDIA Run:AI by providing complete administrative and data isolation. Tenants are defined within VAST as isolated domains, each having its own file system hierarchy, unique security policies and performance controls (QoS).

This structure makes it possible to dedicate a VAST tenant to each NVIDIA Run:AI project or department by mapping their respective network segments (client IP ranges) to distinct VIP pools. Every NVIDIA Run:AI project then gets segregated, high-performance storage access: cross-project interference is prevented, consumption is managed through per-tenant quotas and QoS limits on bandwidth and IOPS, and data stays securely isolated across the NFS and S3 protocols.

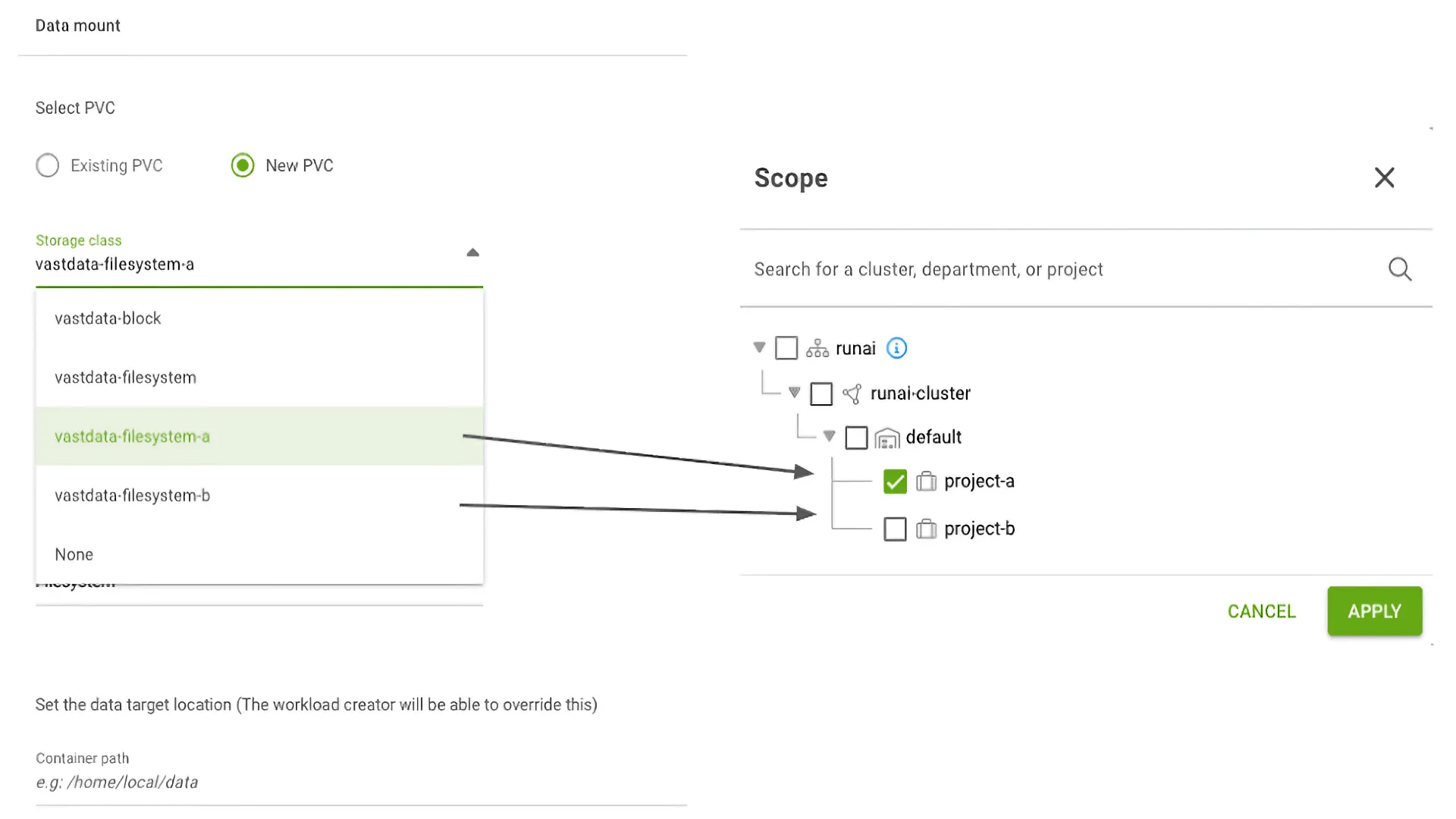

First, we configure two tenants, differentiated by VIP pool, and create two corresponding storage classes, each assigned to one of the tenants.

Next, we create two projects in NVIDIA Run:AI, one for each storage class, which keeps the two projects’ data strictly isolated from each other.

In the following example, two projects are assigned, each with its own dedicated storage class. This configuration ensures that every PVC, NFS mount, or S3 bucket is exclusively owned by and isolated within its respective project.
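The sketch below shows one way the per-tenant storage classes could be described and sanity-checked. The provisioner string `csi.vastdata.com` and the parameter keys (`vip_pool_name`, `storage_path`, `view_policy`) are assumptions that should be verified against the VAST CSI documentation for your driver version. All tenant, pool, and path names are hypothetical.

```python
def build_storage_class(name, vip_pool, storage_path, view_policy="default"):
    """StorageClass manifest bound to one tenant's VIP pool (keys are assumed)."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": "csi.vastdata.com",  # assumed provisioner name
        "parameters": {
            "vip_pool_name": vip_pool,      # assumed parameter key
            "storage_path": storage_path,   # assumed parameter key
            "view_policy": view_policy,     # assumed parameter key
        },
    }


def shared_vip_pools(classes_by_project):
    """Return VIP pools referenced by more than one project (ideally none)."""
    projects_by_pool = {}
    for project, storage_class in classes_by_project.items():
        pool = storage_class["parameters"]["vip_pool_name"]
        projects_by_pool.setdefault(pool, []).append(project)
    return {p: names for p, names in projects_by_pool.items() if len(names) > 1}


# Hypothetical two-project layout: one storage class and VIP pool per project.
project_storage = {
    "project-a": build_storage_class("vast-tenant-a", "vippool-a", "/tenant-a/k8s"),
    "project-b": build_storage_class("vast-tenant-b", "vippool-b", "/tenant-b/k8s"),
}
print(shared_vip_pools(project_storage))
```

An empty result means no two projects share a VIP pool, which is the property the tenant-per-project layout relies on.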

Controlling Tenants’ QoS

Once tenants are defined, the next step is ensuring each project gets the right performance.

VAST’s QoS policies give administrators precise control over bandwidth and IOPS for every tenant or user. The policies provide granular control over storage performance by defining minimum guaranteed and maximum allowed limits for bandwidth and IOPS on read/write operations.

Crucially, QoS can be applied granularly either to a specific view or to an individual user within a tenant. This capability is essential for an NVIDIA Run:AI administrator, who can map the isolated VAST tenant dedicated to each Run:AI project to a specific QoS policy.

By assigning these QoS policies, the administrator ensures that every project receives its provisioned performance limits (or guarantees), preventing resource contention and ensuring that no single project monopolizes the high-performance storage infrastructure.

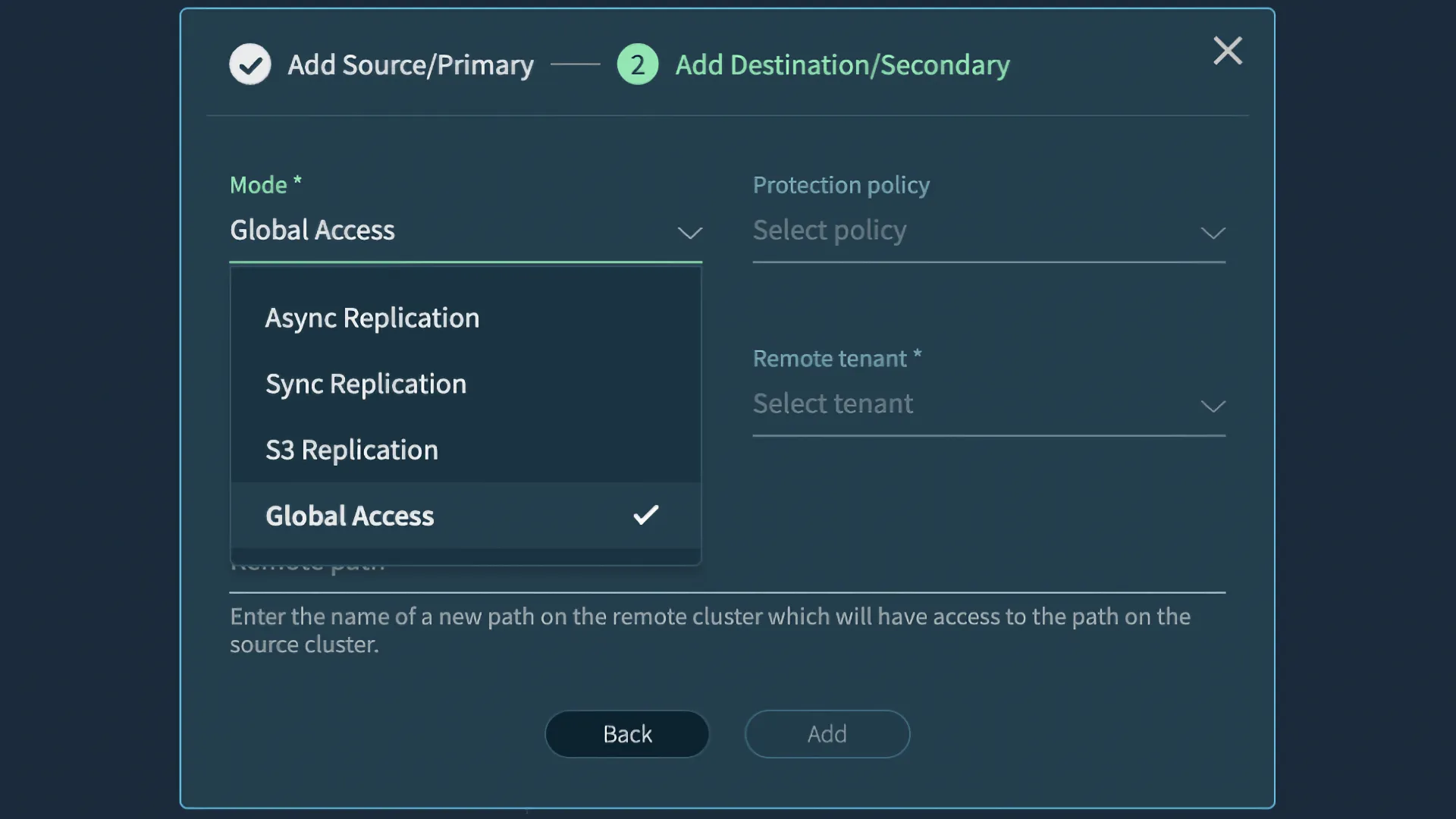

Multiple Sites / Global Namespace Integration

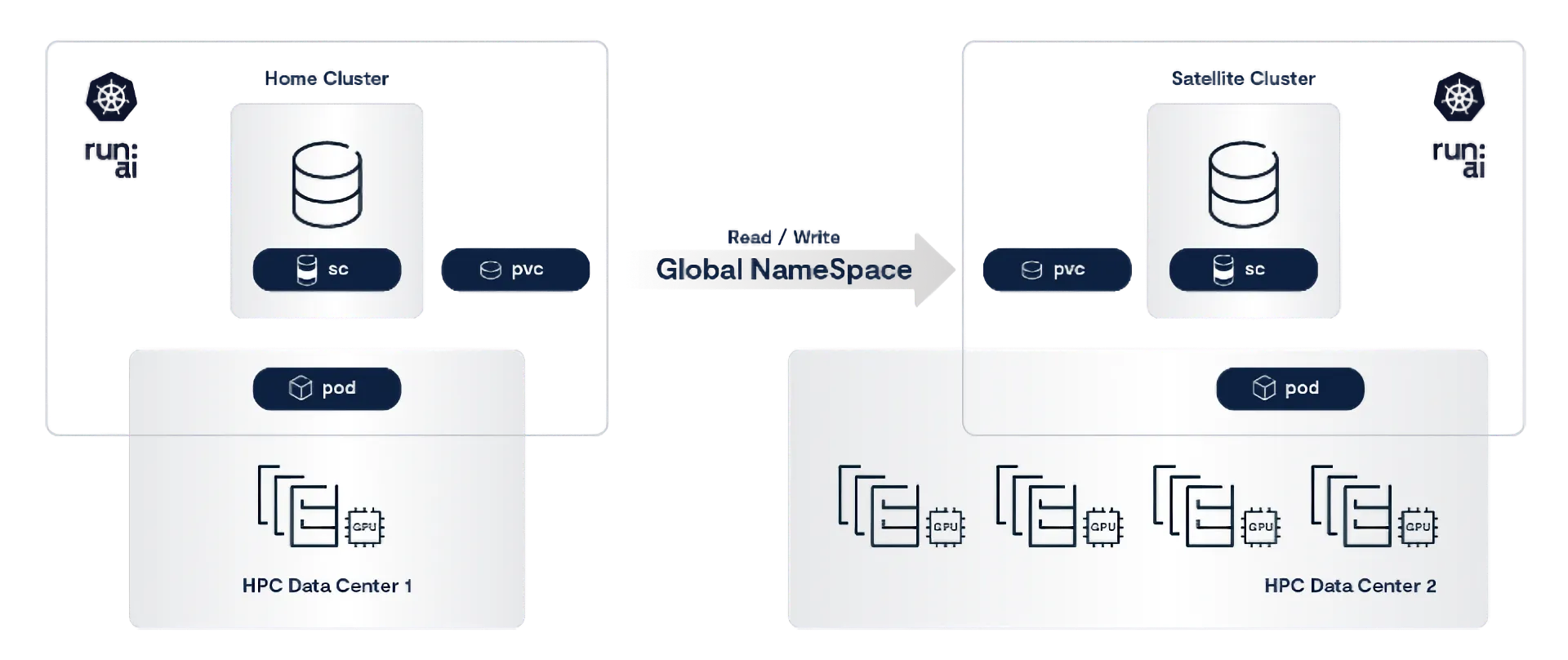

While we’ve concentrated on local PVC workloads with NVIDIA Run:AI, VAST’s global namespace (VAST DataSpace) is vital for extending resource utilization to remote sites and enabling advanced Run:AI scenarios. Let’s discuss two scenarios:

Seamless multi-site burst compute for both training and inference

Resilient LLM Training

Run:AI can dynamically burst the job queue to a secondary site (Site B) or a cloud environment where more compute resources are available.

Burst Compute for Training and Inference

This is a classic use case enabled by the global, persistent nature of the VAST DataSpace.

Scenario: You start a large training job on Site A (e.g., on older GPUs or fewer resources), and then acquire newer, faster, and far more plentiful compute resources at Site B.

Solution:

A job on Site A writes its training checkpoints to the VAST file system mounted via NFS.

The PVC for Site B is linked to the Site B VAST system, making the data instantly accessible at the location with superior compute resources.

NVIDIA Run:AI orchestrates the shutdown of the training job on Site A and launches a new job on Site B, instructing it to resume training from the latest replicated checkpoint path, immediately utilizing the significantly greater GPU compute resources available there.

Benefit: The job transitions seamlessly, leveraging the global VAST namespace to access the same data instantly on a site with much higher GPU compute power. This utilization of optimal, high-powered resources eliminates data transfer bottlenecks, leading to dramatically faster time-to-model completion.

Resilient LLM Training: Checkpoint and Recover in Seconds

The training of cutting-edge AI models, often spanning thousands of GPUs over weeks or even months, carries an unavoidable risk: hardware or network failures. At scale, a single GPU crash can halt an entire synchronous training job, wasting GPU‑hours and delaying training.

What if you could build resilience directly into your pipeline so that, when disaster strikes, your training picks up from the last checkpoint in just seconds? In this section, we’ll show how VAST’s high-throughput storage and NVIDIA Run:AI’s orchestration layer can be combined to deliver checkpointing, automatic recovery, and near-zero disruption to LLM training with no manual intervention. We’ll walk through a real example - complete with code you can run - and share a GitHub repository to reproduce it.

The High Cost of Unplanned Interruptions

Failures during training aren’t reserved for hyperscale clusters - they can strike any environment, from a handful of GPUs to multi-region deployments. Figures published by Meta give a glimpse into just how common these interruptions are:

Over a 54-day window, Meta’s 16,384-GPU cluster recorded 466 job interruptions, 90% of which were unplanned. GPU hardware alone caused 59% of those failures.

Even if your environment is 1 percent the size of Meta’s, failures remain a real risk: the likelihood of encountering one grows roughly in proportion to the number of GPUs. Without checkpointing, a single crash can wipe out hours or days of training progress - a huge waste of compute time and a serious bottleneck for any ML team.
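To put rough numbers on that proportionality, the sketch below scales the figures quoted above linearly by GPU-days. The linear scaling and the 164-GPU example cluster (about 1 percent of Meta’s) are simplifying assumptions for illustration, not measurements.

```python
# Figures quoted above for Meta's cluster.
META_GPUS = 16_384
META_DAYS = 54
META_INTERRUPTIONS = 466
UNPLANNED_FRACTION = 0.90


def expected_unplanned_interruptions(gpus, days):
    """Scale the observed unplanned-interruption rate linearly by GPU-days.

    Assumes failures are proportional to GPU count and run length,
    which is a simplification of real failure behavior.
    """
    unplanned = META_INTERRUPTIONS * UNPLANNED_FRACTION
    rate_per_gpu_day = unplanned / (META_GPUS * META_DAYS)
    return rate_per_gpu_day * gpus * days


# Hypothetical cluster roughly 1 percent the size of Meta's, same 54-day window.
small_cluster = expected_unplanned_interruptions(gpus=164, days=54)
print(f"{small_cluster:.1f} expected unplanned interruptions")
```

Under these assumptions, even the small cluster would expect roughly four unplanned interruptions over the same 54 days.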

Why Checkpointing Matters

Checkpointing - periodically saving model weights and optimizer state - is more than a safety net. It enables you to recover from interruptions without restarting from zero, select the best‑performing model version based on validation metrics, and reproduce experiments reliably. The bigger the model that you are training, the longer a single epoch can take; losing even a few hours due to a crash costs a lot.

Checkpoint strategies vary depending on use case: you might save every few minutes (periodic), only when validation accuracy improves (best‑model), at the end of each epoch or after a fixed number of steps (last‑epoch/steps), or under custom conditions such as hitting a specific loss threshold. Whichever approach you choose, where you store these checkpoints matters just as much.
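These strategies can be combined in a single decision function. The class below is a minimal, framework-independent sketch. Its name, parameters, and reason labels are our own illustrative choices and are not taken from any training library.

```python
class CheckpointPolicy:
    """Decide when to save, combining several checkpoint strategies."""

    def __init__(self, every_n_steps=None, every_n_seconds=None,
                 save_on_improvement=False, loss_threshold=None):
        self.every_n_steps = every_n_steps
        self.every_n_seconds = every_n_seconds
        self.save_on_improvement = save_on_improvement
        self.loss_threshold = loss_threshold
        self.last_save_time = None
        self.best_accuracy = float("-inf")

    def should_save(self, step, now, val_accuracy=None, loss=None):
        """Return the list of strategies that trigger a save (empty = no save)."""
        reasons = []
        if self.every_n_steps and step > 0 and step % self.every_n_steps == 0:
            reasons.append("steps")
        if self.every_n_seconds is not None:
            if self.last_save_time is None:
                self.last_save_time = now  # start the clock on the first call
            elif now - self.last_save_time >= self.every_n_seconds:
                reasons.append("periodic")
        if (self.save_on_improvement and val_accuracy is not None
                and val_accuracy > self.best_accuracy):
            self.best_accuracy = val_accuracy
            reasons.append("best-model")
        if (self.loss_threshold is not None and loss is not None
                and loss <= self.loss_threshold):
            reasons.append("loss-threshold")
        if reasons:
            self.last_save_time = now
        return reasons


policy = CheckpointPolicy(every_n_steps=5, save_on_improvement=True)
decisions = [
    policy.should_save(step=5, now=0.0),                     # step boundary
    policy.should_save(step=6, now=1.0, val_accuracy=0.71),  # new best accuracy
    policy.should_save(step=7, now=2.0, val_accuracy=0.70),  # no improvement
]
print(decisions)
```

Returning the triggering reasons rather than a bare boolean makes it easy to log why each checkpoint was written.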

The Storage Dilemma

Many teams default to cloud object stores or local disks for checkpoint storage. While cloud storage offers geo‑redundancy, its latency can stretch multi‑GB checkpoint writes and reads into minutes. Local disks may deliver lower latency, but they live and die with the node - taking your checkpoints offline if the machine fails.

VAST bridges these gaps. VAST’s disaggregated architecture aggregates NVMe drives across an entire cluster into a single global namespace, providing parallel I/O at tens of gigabytes per second plus built‑in erasure coding for data durability.

Orchestration with NVIDIA Run:AI

On the compute side, NVIDIA Run:AI layers atop Kubernetes to manage GPU and data resources intelligently. It continuously monitors pod and node health, automatically rescheduling failed jobs to healthy GPU pools - even across regions.

Mounting a VAST volume into your NVIDIA Run:AI‑managed pod is straightforward: no changes to your training code are required. Your script reads and writes checkpoints just as before, but now with NVMe‑equivalent performance and cross‑region accessibility.

Fast, Cross‑Region Recovery in Action

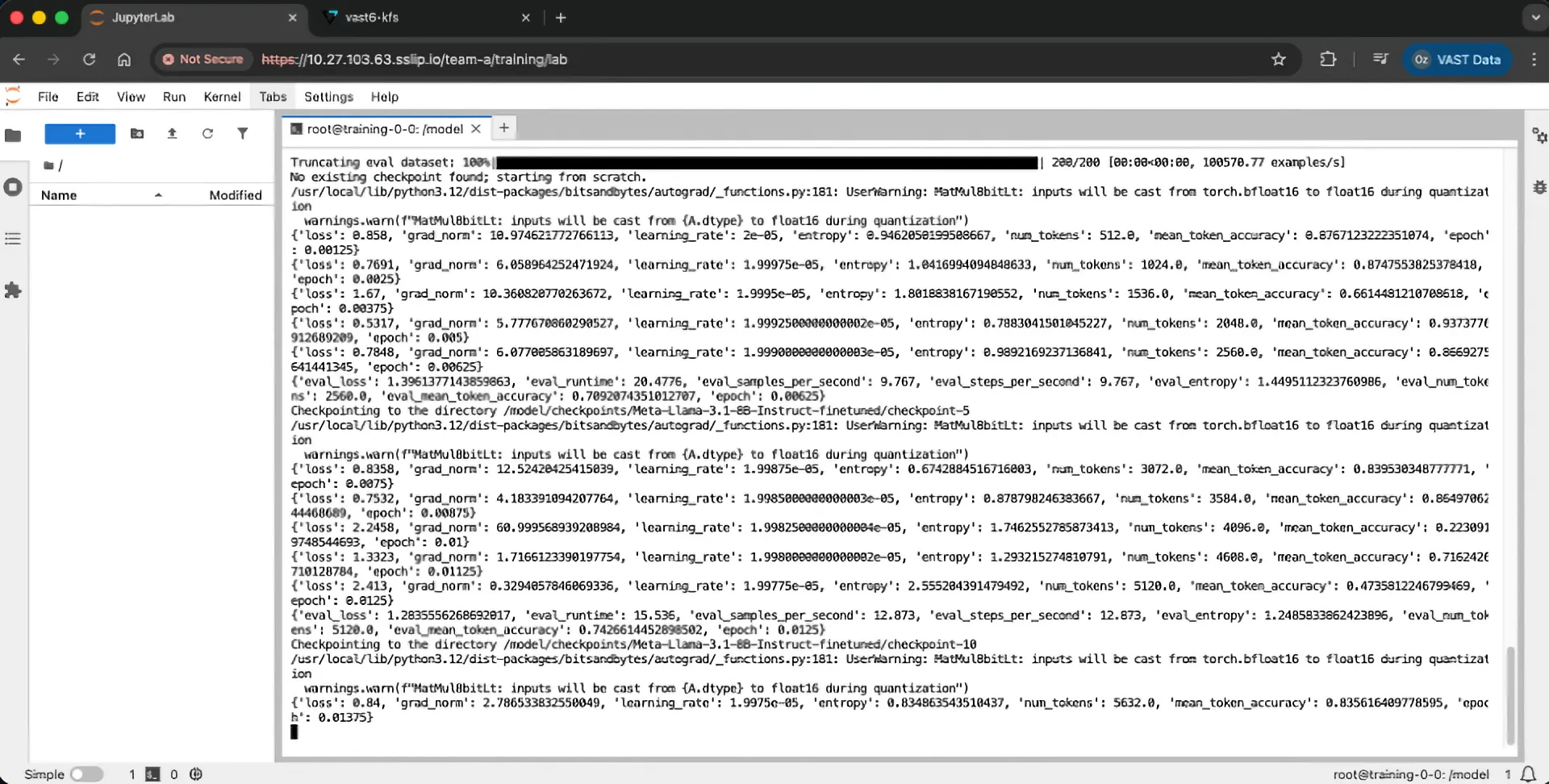

In the step-by-step walkthrough below, we launch a PyTorch training job for a LoRA adapter on Llama 3.1 at Site A. Checkpoints are written every 5 steps to a VAST‑backed volume.

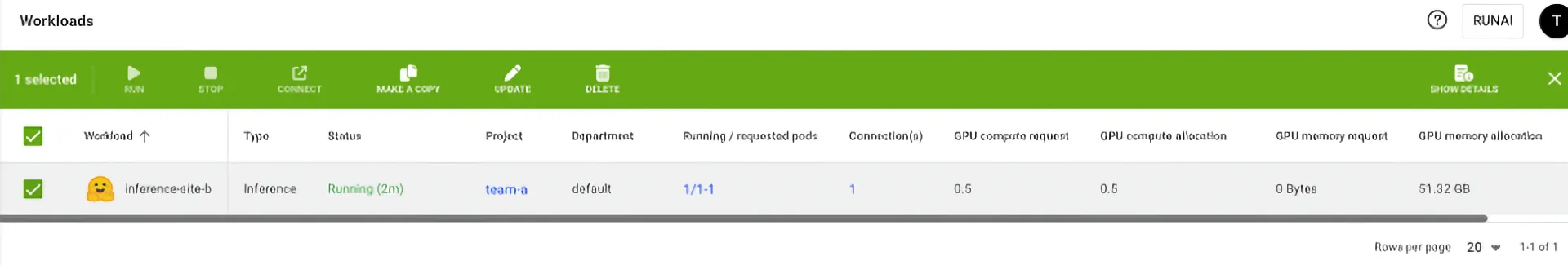

We then simulate a GPU host crash at Site A. Run:AI instantly detects the failure and reschedules the pod to a GPU cluster at Site B.

Since the VAST volume is globally accessible, the job reconnects to the same storage namespace; the latest checkpoint streams at full NVMe speed, and training resumes within seconds. What would normally take minutes of downtime is reduced to under half a minute - without any manual intervention.

In our approach, we start by preloading the base model into VAST using a data mover container. This avoids the cold start of downloading the model every time training begins. Once that’s in place, we submit the training job using the same VAST-mounted volume. Checkpoints are written to /model/checkpoints, and when a failure is triggered, recovery picks up exactly where it left off.

Putting it into Practice: A Step-by-Step Job Submission

To show this resilience in action, we’ve built a complete working example that you can reproduce. The full source code, scripts, and container definitions are available in our GitHub repository.

Step 1: Creating a PVC with VAST CSI filesystem

Let’s create a PVC with VAST CSI filesystem and mount it to /model.

Create a new training workload job with pytorch-one-gpu:

If the model isn’t already downloaded, start by fetching it - in this example, we’ll download Meta-Llama-3.1-8B-Instruct into /model and create the additional checkpoint directories. You can use the model-loading bash script included in this repository and run it after SSH-ing into the pod. This script downloads Meta-Llama-3.1-8B-Instruct from Hugging Face Hub and writes it to /model/Meta-Llama-3.1-8B-Instruct. It also creates the /model/checkpoints directory with the correct permissions where training checkpoints will be written.

After this step completes, your VAST volume contains:

/model/Meta-Llama-3.1-8B-Instruct - The base model (one-time download)

/model/checkpoints/ - Empty directory ready for checkpoints

Step 2: Creating Training Script

Now let’s look at what makes this training resilient: checkpointing. The heart of this example is training/distributed.py, which implements checkpointing and recovery:

Checkpoint frequency: Saves every 5 training steps to /model/checkpoints/Meta-Llama-3.1-8B-Instruct-finetuned/

Automatic resume logic: On startup, the script searches for existing checkpoints and automatically resumes from the latest one

LoRA fine-tuning: Uses parameter-efficient LoRA adapters to fine-tune Llama 3.1 with minimal memory overhead

Distributed training ready: Leverages torchrun for multi-GPU training via the launch.sh entrypoint
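The automatic resume logic described above can be sketched in a few lines of standard-library Python. This is an illustrative version, not the code from training/distributed.py. It assumes checkpoints are directories named `checkpoint-<step>`.

```python
import os
import re
import tempfile

CHECKPOINT_PATTERN = re.compile(r"^checkpoint-(\d+)$")


def find_latest_checkpoint(output_dir):
    """Return the path of the highest-numbered checkpoint-N directory, or None."""
    if not os.path.isdir(output_dir):
        return None
    candidates = []
    for entry in os.listdir(output_dir):
        match = CHECKPOINT_PATTERN.match(entry)
        if match and os.path.isdir(os.path.join(output_dir, entry)):
            candidates.append((int(match.group(1)), entry))
    if not candidates:
        return None
    # Compare step numbers numerically so checkpoint-80 beats checkpoint-9.
    _, latest = max(candidates)
    return os.path.join(output_dir, latest)


# Demo on a throwaway directory that mimics a checkpoint layout.
with tempfile.TemporaryDirectory() as root:
    for step in (5, 40, 80):
        os.makedirs(os.path.join(root, f"checkpoint-{step}"))
    open(os.path.join(root, "README.md"), "w").close()
    latest_name = os.path.basename(find_latest_checkpoint(root))
    print(latest_name)  # checkpoint-80
```

On startup, a training script would call this on its output directory and resume from the returned path if it is not `None`. With a Hugging Face `Trainer`, for instance, that path could be passed as `trainer.train(resume_from_checkpoint=...)`; whether the repository does exactly this is not stated here.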

Step 3: Build the Training Image (or Use Ours)

The training/ directory includes a Dockerfile that packages everything needed.

If you want to build your own image:

cd training

docker build --platform linux/amd64 -t <YOUR_REGISTRY>/runai-vast-training .

docker push <YOUR_REGISTRY>/runai-vast-training

Alternatively, you can use our pre-built Docker image that’s ready to go.

Step 4: Launch the Training Job

With the model loaded on VAST and the training image ready, submit your training job via Run:AI:

runai training pytorch submit lora-fine-tune-model --existing-pvc "claimname=$DATA,path=/model" -i docker.io/<REGISTRY>/runai-vast-training -g 1

Once the job starts, you’ll see training logs showing the LoRA fine-tuning in progress. Every 5 steps, you’ll see checkpoint confirmations:

Checkpoints are now being saved under /model. We can simulate a failure at Site A and start, for example, an inference job at Site B using checkpoint-40…

root@training-0-0:/model# ls -ltr checkpoints/

total 0

drwxr-xr-x 2 nobody nogroup 4096 Nov 19 17:21 Meta-Llama-3.1-8B-Instruct-finetuned

root@training-0-0:/model# ls -ltr checkpoints/Meta-Llama-3.1-8B-Instruct-finetuned/

total 2

drwxr-xr-x 2 nobody nogroup 4096 Nov 19 17:18 checkpoint-40

-rw-r--r-- 1 nobody nogroup 1429 Nov 19 17:21 README.md

drwxr-xr-x 2 nobody nogroup 4096 Nov 19 17:21 checkpoint-80

… by creating an inference workload with the following arguments:

cmd: vllm

args: serve /model/Meta-Llama-3.1-8B-Instruct --enable-lora --lora-modules adapter=/model/checkpoints/Meta-Llama-3.1-8B-Instruct-finetuned/checkpoint-40 --max-model-len 1000 --enforce-eager --max-lora-rank 32
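Once the inference workload is running, the fine-tuned adapter can be queried through vLLM’s OpenAI-compatible HTTP API, where the name given in --lora-modules (here `adapter`) is used as the model name. The sketch below builds such a request with the standard library only. The base URL, prompt, and token limit are assumptions, and the network call is left commented out because it needs a live server.

```python
import json
import urllib.request


def build_completion_request(prompt, model="adapter", max_tokens=64):
    """Build the JSON body for an OpenAI-style /v1/completions call."""
    return {"model": model, "prompt": prompt, "max_tokens": max_tokens}


def send_completion(base_url, body, timeout=30):
    """POST the request to a running vLLM server and return the parsed reply."""
    request = urllib.request.Request(
        f"{base_url}/v1/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


body = build_completion_request("Explain what a training checkpoint is.")
print(json.dumps(body))

# Against a live server (the URL is an assumption; adjust to your deployment):
# reply = send_completion("http://localhost:8000", body)
# print(reply["choices"][0]["text"])
```

Because the server was started with --max-model-len 1000, the prompt and `max_tokens` together need to stay within that limit.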

Final Thoughts

Resilience isn’t optional when training large models. Between hardware faults, preemptions, and scaling challenges, your pipeline needs to recover gracefully without human intervention. By combining a fast shared data store from VAST with automated orchestration from NVIDIA Run:AI, this illustrated example shows that checkpointing can be fast, global, and fully integrated into your training workflow.

Disaster becomes a speed bump, not a showstopper.
