In the era of “physical AI,” smart cities are no longer just about collecting petabytes of video footage; they are about understanding that footage and reacting to it in real time. The challenge has shifted from storage to cognition. That’s why we are excited to detail the integration of the NVIDIA Cosmos Reason 2 vision language model (VLM) directly into the VAST AI OS.

This architecture transforms a city’s video infrastructure from a passive recording system into an agentic “thinking machine,” capable of detecting anomalies, managing traffic, and ensuring public safety with human-like reasoning and autonomous capabilities.

VAST and NVIDIA: A Real-time Video Intelligence Engine for Agents

The core limitation of traditional video intelligence systems is not model quality, but architecture. Most deployments are designed around batch analytics, with only narrow, hard-coded real-time capabilities. Video is primarily treated as data to be stored and analyzed later, not as a live signal that can drive immediate action.

This design fundamentally limits response in scenarios where seconds matter—traffic incidents, safety hazards, or emerging public-security events. Even when “real-time” analytics exist, they are typically constrained to isolated detectors or fixed rules, unable to reason across streams, correlate context, or adapt dynamically to unfolding situations.

These limitations stem from architectural silos. Video is written to object storage, metadata is extracted into separate systems, and analytics pipelines are bolted on downstream. Each component operates independently, making low-latency, context-aware reasoning across the entire system impractical.

The VAST AI OS, built with the NVIDIA Metropolis Blueprint for video search and summarization (VSS), removes these constraints by collapsing storage, metadata, and reasoning into a single, unified video intelligence engine. Among the key components are:

VAST DataStore: A multiprotocol, exabyte-scale storage layer optimized for continuous high-definition video ingest, delivering sustained throughput with zero-copy efficiency.

VAST DataBase: A built-in, high-performance transactional database that captures semantic context—timestamps, GPS coordinates, sensor readings, and vector embeddings—directly alongside the video.

VAST DataEngine: An event-driven compute layer that reacts to this metadata in real time, triggering AI reasoning functions the moment meaningful patterns emerge.

This architecture is purpose-built for time-critical intelligence, enabling cities to move beyond retrospective analysis toward continuous, real-time understanding and response.

NVIDIA Cosmos Reason: The core of the video agent

At the heart of this system is NVIDIA Cosmos Reason 2, a state-of-the-art reasoning VLM purpose-built for physical AI and video-based agents. Traditional video analytics models focus on detection and classification—identifying objects, counting entities, or triggering alerts based on predefined rules. While useful, these approaches struggle in real-world environments where ambiguity, occlusion, and evolving context are the norm.

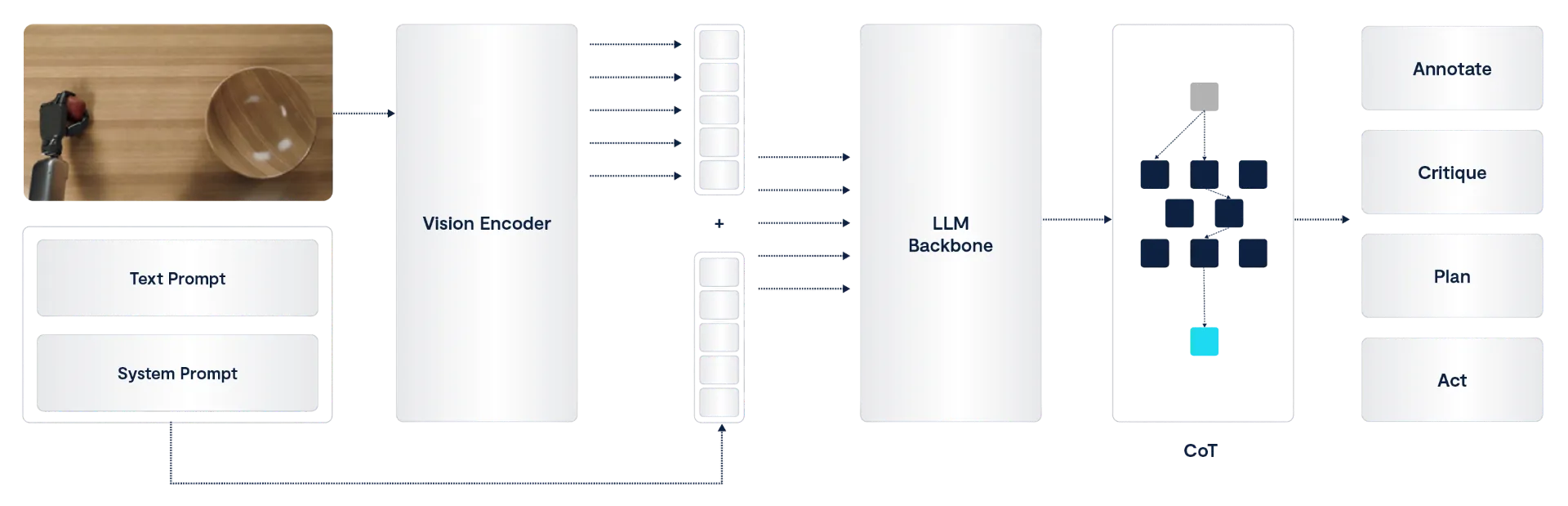

Cosmos Reason goes beyond recognition to reasoning. By applying Chain-of-Thought (CoT) inference, the model interprets visual scenes in terms of intent, causality, and physical context. This enables it to answer higher-level questions, such as: Why is something happening? What is likely to happen next? Does this situation require intervention?

For smart cities, this distinction is critical. A reasoning VLM can:

Reduce false positives by understanding context rather than reacting to isolated signals.

Differentiate normal behavior from true anomalies, even when both look visually similar.

Support safer automation, where responses are triggered only when the model has high confidence in its interpretation.

Adapt to novel situations that were never explicitly programmed or labeled.

Deployed as an NVIDIA NIM microservice, Cosmos Reason introduces true “System 2” thinking into video analytics pipelines. It deliberately pauses to <think> before responding, allowing it to solve complex visual scenarios—such as distinguishing between a vehicle stopping for a pedestrian and one disabled in traffic.

This shift from reactive detection to deliberate reasoning enables cities to move faster without acting prematurely, improving both operational efficiency and public safety.

Cosmos Reason 2 offers a few key improvements over the model’s original iteration:

Larger context window: Cosmos Reason 2 has a 256,000-token context window, compared with 16,000 tokens for Cosmos Reason 1. This 16x increase allows Cosmos Reason 2 to handle longer videos and richer scenes.

Flexible deployment options: Cosmos Reason 2 is available in 2B and 8B parameter counts, so users can deploy the model size that suits their accuracy requirements, latency needs, and compute budgets. The new model can also run reliably across environments ranging from edge devices to large-scale cloud deployments.

Improved spatial reasoning: Cosmos Reason 2 features Advanced Spatial Perception, which includes stronger 2D grounding (for locating objects in a flat image). This new capability is essential for more advanced spatial reasoning and embodied AI applications, where understanding an object’s position in space is critical.

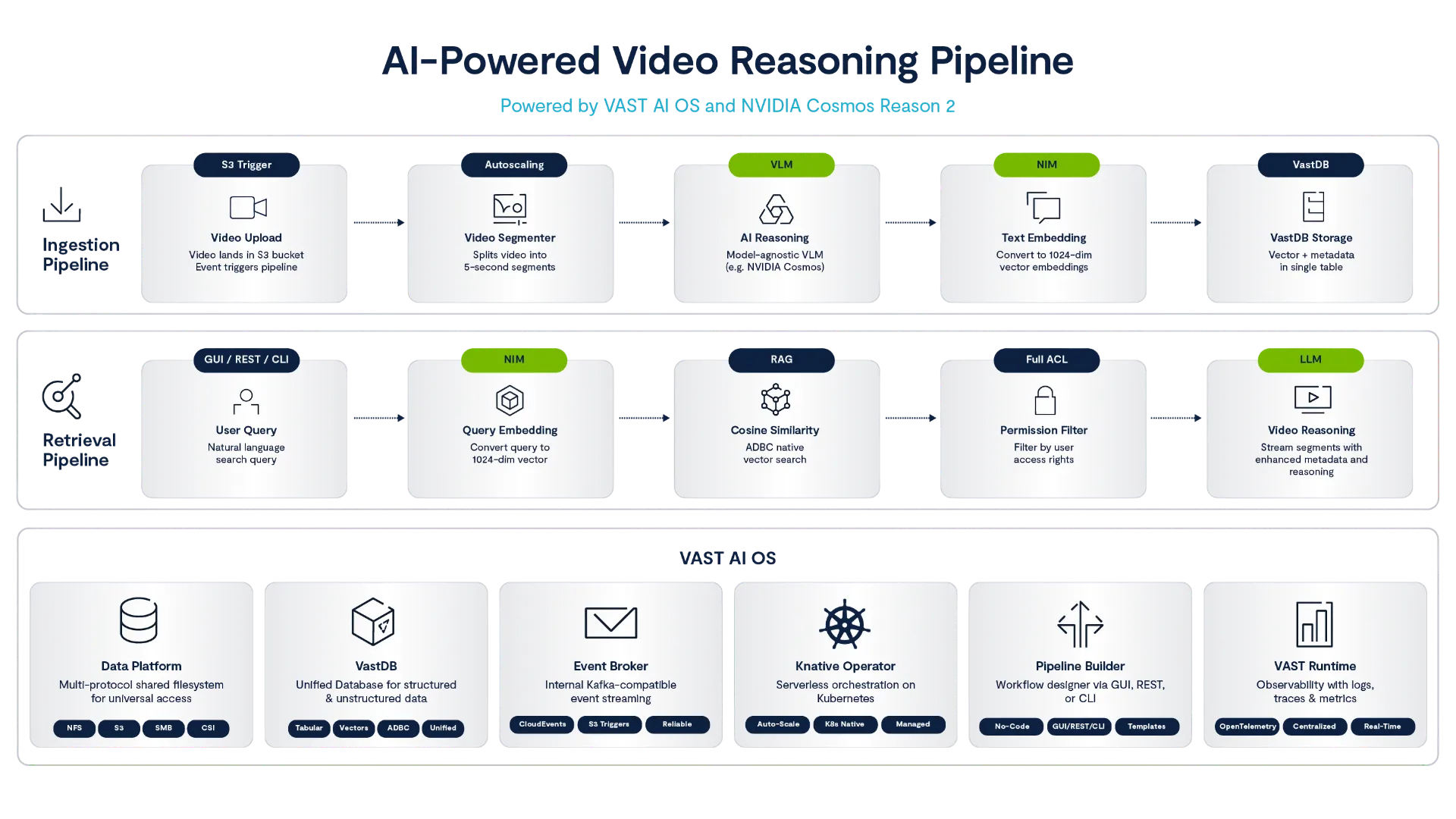

Technical Workflow: The “City Watch” Pipeline

A modern smart city may operate tens of thousands of cameras, continuously capturing streets, buildings, transit hubs, and public spaces. When integrated with VAST AI OS and NVIDIA Cosmos Reason 2, this video fabric becomes an always-on city watch pipeline—capable of detecting events, reasoning about risk, and autonomously triggering real-world response workflows within seconds.

Figure 2: video intelligence workflow diagram

Here’s how it works at each step of the pipeline.

Ingest, store, and catalog

Live video streams are ingested into VAST DataStore and written as time-sliced objects (for example, 5–10 second clips). These objects land in a scalable object namespace optimized for sustained, high-throughput ingest. As each clip is written:

Object metadata (timestamps, camera ID, GPS coordinates) is automatically extracted.

Index entries are created.

References are recorded in the VAST DataBase, forming a searchable, transactional catalog of live video.

This ensures that video and its semantic context are immediately queryable—without waiting for offline processing.
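To make the cataloging step concrete, here is a minimal sketch that uses Python's built-in sqlite3 module as a stand-in for the VAST DataBase. The table layout, the column names, the `processed` flag, and the `ClipRecord` type are all assumptions made for illustration; they are not the actual VAST schema or API.

```python
import sqlite3
from dataclasses import dataclass


@dataclass(frozen=True)
class ClipRecord:
    """Hypothetical catalog row for one time-sliced video object."""
    object_key: str    # path of the clip in the object namespace (illustrative)
    camera_id: str
    start_ts: float    # clip start time, seconds since epoch
    duration_s: float
    lat: float
    lon: float


def open_catalog() -> sqlite3.Connection:
    """Create an in-memory catalog; sqlite3 stands in for the real database."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """CREATE TABLE clips (
               object_key TEXT PRIMARY KEY,
               camera_id  TEXT NOT NULL,
               start_ts   REAL NOT NULL,
               duration_s REAL NOT NULL,
               lat        REAL NOT NULL,
               lon        REAL NOT NULL,
               processed  INTEGER NOT NULL DEFAULT 0
           )"""
    )
    # Index entry so per-camera time-range lookups do not scan the whole table.
    conn.execute("CREATE INDEX idx_clips_camera_ts ON clips (camera_id, start_ts)")
    return conn


def catalog_clip(conn: sqlite3.Connection, clip: ClipRecord) -> None:
    """Record a newly written clip; the row starts out unprocessed."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO clips (object_key, camera_id, start_ts, duration_s, lat, lon) "
            "VALUES (?, ?, ?, ?, ?, ?)",
            (clip.object_key, clip.camera_id, clip.start_ts,
             clip.duration_s, clip.lat, clip.lon),
        )


def clips_for_camera(conn: sqlite3.Connection, camera_id: str, since_ts: float) -> list[str]:
    """Return object keys for one camera at or after since_ts, oldest first."""
    rows = conn.execute(
        "SELECT object_key FROM clips WHERE camera_id = ? AND start_ts >= ? "
        "ORDER BY start_ts",
        (camera_id, since_ts),
    )
    return [row[0] for row in rows]
```

The point of the sketch is the ordering: the catalog row is written in the same step as the clip, so a query such as `clips_for_camera(conn, "cam-17", since_ts)` can see the clip immediately.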

Trigger pipelines

The VAST DataEngine continuously monitors the DataBase for newly ingested, unprocessed video objects. The appearance of a new catalog entry acts as a native trigger, eliminating the need for external schedulers or polling systems.

Once triggered, the pipeline:

Performs lightweight preprocessing (frame sampling, resolution normalization)

Constructs a structured prompt

Invokes Cosmos Reason 2 via its NVIDIA NIM microservice and specifies an array of possible downstream agents that may be triggered based on the events in the video.
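The three steps above can be sketched as follows. This is an illustration under stated assumptions, not the actual DataEngine function: the agent names other than `emergency_dispatch`, the placeholder model ID, and the OpenAI-style message layout with a `video_url` content part are all hypothetical. Check the NIM documentation for the request format your deployment expects. The sketch only builds the request body; it does not make a network call.

```python
import json

# Hypothetical agent list; only "emergency_dispatch" appears in this article.
AVAILABLE_AGENTS = ["emergency_dispatch", "traffic_control", "no_action"]


def sample_frame_indices(total_frames: int, max_frames: int) -> list[int]:
    """Pick up to max_frames evenly spaced frame indices from a clip."""
    if total_frames <= 0 or max_frames <= 0:
        return []
    if total_frames <= max_frames:
        return list(range(total_frames))
    step = total_frames / max_frames
    return [int(i * step) for i in range(max_frames)]


def build_request(video_url: str, question: str, agents: list[str],
                  model: str = "cosmos-reason2-placeholder") -> dict:
    """Assemble a chat-style request body (assumed layout, not a confirmed API)."""
    system = (
        "You are a city video analyst. Reason step by step inside <think> tags, "
        "then answer with a JSON object containing the keys event, confidence, "
        "severity, and recommended_agent. "
        "recommended_agent must be one of: " + ", ".join(agents) + "."
    )
    return {
        "model": model,  # placeholder ID, not a real model name
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": [
                {"type": "text", "text": question},
                # Assumed content-part shape for passing a clip by URL.
                {"type": "video_url", "video_url": {"url": video_url}},
            ]},
        ],
        "temperature": 0.0,
    }


if __name__ == "__main__":
    request = build_request(
        "https://example.invalid/cam-17/0002.mp4",
        "Analyze visual input. Is there an active fire? Estimate size and threat level.",
        AVAILABLE_AGENTS,
    )
    print(json.dumps(request, indent=2))
```

Listing the agents in the system prompt is one simple way to give the model "an array of possible downstream agents"; a production pipeline might instead describe each agent's capabilities in more detail.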

Inference (the “thinking” step)

Cosmos Reason 2 analyzes the video clip using Chain-of-Thought reasoning, explicitly ruling out benign explanations before escalating an event.

Prompt: “Analyze visual input. Is there an active fire? Estimate size and threat level.”

Cosmos Thought: <think>Thick black smoke is rising. Flashing orange flames are visible at the base of the tree line. This is not steam. Wind is blowing smoke toward the pedestrian path.</think>

Output: {"event": "fire", "confidence": "high", "severity": "critical", "recommended_agent": "emergency_dispatch"}

Rather than serving only as metadata, this structured output becomes a control signal for the pipeline. The reasoning model is provided, as part of its context, with knowledge of the available downstream agents and their capabilities. Based on its interpretation of the situation, Cosmos Reason 2 explicitly determines which agent should be invoked next.

This allows the system to move beyond static, prewired flows. The reasoning step dynamically selects the appropriate response path—effectively turning the VLM into an orchestration brain rather than a passive classifier.
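For the output to act as a control signal, the pipeline first has to separate the reasoning trace from the decision. The sketch below shows one way to do that, assuming the reply arrives as a single string with a `<think>` block followed by a JSON object, as in the example above. The function name `parse_model_reply` and the choice to reject replies with missing keys are our own assumptions.

```python
import json
import re

THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)

# Keys taken from the example output in this article.
REQUIRED_KEYS = {"event", "confidence", "severity", "recommended_agent"}


def parse_model_reply(raw: str) -> tuple[str, dict]:
    """Split a reply into (reasoning text, decision dict).

    Raises ValueError if no JSON object is present or required keys are missing.
    """
    match = THINK_RE.search(raw)
    reasoning = match.group(1).strip() if match else ""

    # Remove the reasoning trace first so braces inside it cannot confuse parsing.
    remainder = THINK_RE.sub("", raw)
    start, end = remainder.find("{"), remainder.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found in model reply")

    try:
        decision = json.loads(remainder[start:end + 1])
    except json.JSONDecodeError as exc:
        raise ValueError(f"model reply is not valid JSON: {exc}") from exc

    missing = REQUIRED_KEYS - set(decision)
    if missing:
        raise ValueError(f"decision is missing keys: {sorted(missing)}")
    return reasoning, decision


if __name__ == "__main__":
    reply = (
        "<think>Thick black smoke is rising. This is not steam.</think>\n"
        '{"event": "fire", "confidence": "high", "severity": "critical", '
        '"recommended_agent": "emergency_dispatch"}'
    )
    reasoning, decision = parse_model_reply(reply)
    print(decision["recommended_agent"])
```

Failing loudly on a malformed reply is a deliberate choice here: a decision that drives real-world actions should never be guessed from partial output.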

Autonomous agent action

The VAST Autonomous Agent framework, operating as part of the DataEngine’s agentic execution layer, consumes the structured output and dispatches the selected agent.

The chosen agent(s):

Validate the reasoning output

Apply policy and safety constraints

Execute the corresponding real-world API action

For example, an emergency dispatch agent might connect to the city’s emergency dispatch system API, automatically dispatching fire services and attaching:

The relevant video clip

GPS coordinates and camera metadata

Severity and contextual reasoning
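A minimal sketch of the validate, apply-policy, and execute sequence might look like the following. Everything here is hypothetical: the agent registry, the `notify_operator` fallback, and the rule that autonomous dispatch requires high confidence are assumptions chosen for illustration, and the agent functions return a payload rather than calling a real dispatch API.

```python
from typing import Callable

REQUIRED_KEYS = {"event", "confidence", "severity", "recommended_agent"}


def emergency_dispatch(decision: dict, clip: dict) -> dict:
    """Build the payload a real agent would send to a dispatch API."""
    return {
        "action": "dispatch_fire_services",
        "clip": clip["object_key"],
        "camera_id": clip["camera_id"],
        "location": (clip["lat"], clip["lon"]),
        "severity": decision["severity"],
        "reasoning": decision.get("reasoning", ""),
    }


def notify_operator(decision: dict, clip: dict) -> dict:
    """Fallback agent (assumed): hand the event to a human operator."""
    return {
        "action": "notify_operator",
        "clip": clip["object_key"],
        "severity": decision["severity"],
    }


# Hypothetical registry mapping agent names to callables.
AGENTS: dict[str, Callable[[dict, dict], dict]] = {
    "emergency_dispatch": emergency_dispatch,
    "notify_operator": notify_operator,
}


def validate(decision: dict) -> None:
    """Reject decisions with missing fields or unknown agents."""
    missing = REQUIRED_KEYS - set(decision)
    if missing:
        raise ValueError(f"decision is missing keys: {sorted(missing)}")
    if decision["recommended_agent"] not in AGENTS:
        raise ValueError(f"unknown agent: {decision['recommended_agent']!r}")


def apply_policy(decision: dict) -> str:
    """Assumed policy: act autonomously only when confidence is high."""
    if (decision["recommended_agent"] == "emergency_dispatch"
            and decision["confidence"] != "high"):
        return "notify_operator"
    return decision["recommended_agent"]


def dispatch(decision: dict, clip: dict) -> dict:
    """Validate, apply policy, then run the selected agent."""
    validate(decision)
    agent_name = apply_policy(decision)
    return AGENTS[agent_name](decision, clip)
```

Keeping validation and policy outside the agents means a model reply that names an unregistered agent is rejected before anything runs, and a lower-confidence decision is routed to a human instead of triggering an automatic dispatch.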

This design enables reasoning-driven orchestration, where understanding the situation determines not just what is detected, but what happens next. Crucially, it also determines what does not happen.

By allowing Cosmos Reason 2 to select downstream agents and functions dynamically, the system avoids indiscriminately running expensive AI workloads across every video stream. In an environment where GPU resources are scarce and valuable, only video segments that exhibit meaningful risk or ambiguity are escalated to deeper analysis or autonomous action. Benign or low-confidence scenarios are filtered early, preventing unnecessary model invocations.

The result is a pipeline that scales economically: compute is focused where it matters most, GPU cycles are preserved for high-impact events, and city-wide video intelligence remains both responsive and sustainable—even at tens of thousands of concurrent streams.
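The early-filtering idea can be illustrated with a small triage function. The labels used here (`"none"` for no event, and the `"medium"` and `"low"` confidence levels) and the three outcomes are assumptions; the article's example only shows a `"high"` confidence fire event. The sample decisions are invented solely to exercise the function.

```python
from collections import Counter


def triage(decision: dict) -> str:
    """Decide how much further compute a clip deserves.

    Returns "skip", "analyze" (deeper analysis), or "act" (autonomous action).
    """
    event = decision.get("event", "none")
    confidence = decision.get("confidence", "low")
    if event == "none":
        return "skip"      # benign clip: no further model invocations
    if confidence == "high":
        return "act"       # clear risk: hand off to an agent
    if confidence == "medium":
        return "analyze"   # ambiguous: escalate to deeper analysis
    return "skip"          # low confidence: filtered early


def summarize(decisions: list[dict]) -> Counter:
    """Count how many clips fall into each triage outcome."""
    return Counter(triage(d) for d in decisions)


if __name__ == "__main__":
    # Invented sample decisions, for illustration only.
    batch = [
        {"event": "none", "confidence": "high"},
        {"event": "none", "confidence": "high"},
        {"event": "fire", "confidence": "high"},
        {"event": "stalled_vehicle", "confidence": "medium"},
        {"event": "smoke", "confidence": "low"},
    ]
    print(summarize(batch))
```

In this toy batch only two of the five clips trigger any follow-up work, which is the behavior the paragraphs above describe: most footage is benign, so most footage should cost nothing beyond the first reasoning pass.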

Beyond real-time analysis and action, adding an embedding model to the pipeline also enables real-time retrieval-augmented generation (RAG) on the same videos. This would allow investigators, for example, to search footage for factors that might have contributed to an incident (such as the fire in the example above).
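The retrieval half of that workflow comes down to nearest-neighbor search over clip embeddings. The sketch below uses plain Python and cosine similarity to show the idea; the three-dimensional vectors and clip names are toy values invented for illustration, and a real deployment would use embeddings from an actual model and the database's own vector search rather than a linear scan.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the k clip keys whose embeddings are most similar to the query."""
    ranked = sorted(index.items(), key=lambda kv: cosine(query, kv[1]), reverse=True)
    return [key for key, _ in ranked[:k]]


if __name__ == "__main__":
    # Toy embeddings, invented for illustration only.
    index = {
        "clip-a": [1.0, 0.0, 0.0],
        "clip-b": [0.9, 0.1, 0.0],
        "clip-c": [0.0, 0.0, 1.0],
    }
    print(top_k([1.0, 0.0, 0.0], index, k=2))
```

The retrieved clips, together with their stored metadata and reasoning output, would then be passed to a language model as context for answering the investigator's question.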

Why an AI-Native Architecture Matters

For developers, the value of combining the VAST DataEngine with Cosmos Reason 2 is that it turns “smart city AI” into a set of composable, testable building blocks instead of a fragile custom pipeline:

Events, not cron jobs: Video ingest, metadata updates, and Cosmos inferences are all expressed as event-driven functions. A fire-detection flow becomes: object written → metadata row created → trigger fires → Cosmos NIM call → result persisted → follow-up trigger for high-confidence events. That means you can version and deploy each function independently, monitor it, and roll back without touching the storage or database layers.

One data substrate, many agents: The same infrastructure used for fire detection can also be used by other agents (e.g., traffic optimization, intrusion detection, or crowd analytics) without copying data into separate “AI silos.” As a developer, you work against a consistent API and schema; you add new agents by wiring new triggers and functions to existing data, not by standing up new infrastructure.

Intelligent compute utilization: An environment with many diverse agents (potentially thousands) can quickly overwhelm compute resources. Each agent may invoke a VLM, a reasoning model, or an embedding model, or run an object-tracking pipeline. Cosmos Reason 2 acts as the model router, invoking only the relevant agents based on the content of a video clip (and possibly prior clips). This makes it possible to build agentic systems with a very rich set of capabilities while maximizing compute efficiency.

First-class reasoning in the loop: Cosmos Reason 2’s structured outputs (timestamps, bounding boxes, severity, confidence scores) fit naturally into VAST’s DataStore model. That gives you a clean contract: VAST handles when and what to process; Cosmos handles why it matters; and your agents implement what to do next (e.g., call dispatch APIs, update a digital twin, or notify operators).
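The event chain described under “Events, not cron jobs” can be sketched with a tiny in-process event bus. This is only an analogy for how independently deployed functions could be wired together: the `EventBus` class, the topic names, and the stubbed `infer` callable are all hypothetical and do not represent the DataEngine API.

```python
from collections import defaultdict
from typing import Any, Callable

Event = dict[str, Any]
Handler = Callable[[Event], None]


class EventBus:
    """Minimal synchronous publish/subscribe bus (illustrative only)."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)
        self.log: list[str] = []  # topics in the order they were emitted

    def on(self, topic: str, handler: Handler) -> None:
        self._handlers[topic].append(handler)

    def emit(self, topic: str, event: Event) -> None:
        self.log.append(topic)
        for handler in list(self._handlers[topic]):
            handler(event)


def wire_fire_flow(bus: EventBus, infer: Callable[[str], dict]) -> list[Event]:
    """Register one small function per step; returns the list of persisted results."""
    persisted: list[Event] = []

    def on_object_written(event: Event) -> None:
        # Step 1: a new clip produces a catalog row.
        bus.emit("metadata_row_created", {**event, "processed": False})

    def on_metadata_row(event: Event) -> None:
        # Step 2: the new row triggers a call to the reasoning model (stubbed here).
        decision = infer(event["object_key"])
        bus.emit("result_persisted", {**event, "processed": True, "decision": decision})

    def on_result(event: Event) -> None:
        # Step 3: persist the result; high-confidence events trigger a follow-up.
        persisted.append(event)
        if event["decision"].get("confidence") == "high":
            bus.emit("high_confidence_event", event)

    bus.on("object_written", on_object_written)
    bus.on("metadata_row_created", on_metadata_row)
    bus.on("result_persisted", on_result)
    return persisted
```

Because each step is its own handler, any one of them can be replaced or rolled back without touching the others, which is the property the list above emphasizes. Adding a new agent amounts to one more `bus.on(...)` subscription against events that already exist.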

For cities, this approach lets teams ship production-grade solutions while maintaining flexibility for the next set of agents and models they want to deploy. And because new use cases don’t require new infrastructure or systems, it’s cost-effective: the VAST Data foundation remains constant, so the main work in rolling out a new application should be choosing the right models and agentic workflows.

Video has already proven immensely valuable for solving and deterring crime, but the combination of next-generation AI models and data infrastructure helps take it to the next level. With the ability to process, analyze, and act on data in real time, cities and other government entities can now help improve public safety by taking action as soon as incidents begin to unfold.

You can access the Cosmos Reason 2 model and learn more about it here. To learn more about deploying data pipelines, such as video-reasoning workflows, using VAST DataEngine, read this blog post. And register for our inaugural VAST FWD user conference to hear experts from VAST, NVIDIA, and other leading organizations discuss the cutting edge in AI use cases.