TLDR:

Long-context and multi-turn AI conversations generate large amounts of intermediate key-value data that inference applications reuse. Because GPU memory is a precious resource, this data cannot stay in GPU memory indefinitely, which creates a memory wall.

The NVIDIA Dynamo inference framework is bringing novel capabilities to market, such as key-value cache (KV$ for short) offload.

KV$ offload moves KV state from GPU memory to lower tiers of memory or storage, enabling GPUs to pre-load past conversations or cache frequent prompts. This avoids the expensive recompute operation (prefill) normally required to load large context into GPU memory.

The KV$ offload upside is measured in terms of accelerated time-to-first-token (TTFT) and the savings that come from not needing GPU cycles for prefill, thanks to the data already being cached.

Both NVIDIA Dynamo and the VAST AI Operating System are designed to move data at the speed of light (or the speed of the network in this case…)

This blog post covers sizing and architecture considerations when building for large-scale inference infrastructure that is enabled for KV$ offload

VAST collaborated with CoreWeave to evaluate NVIDIA’s Dynamo KV$ offload with the VAST AI OS and saw GPU efficiency improve by 90% and TTFT improve by 20x compared to when GPUs must recompute the prefill for every past conversation.

Dynamo data persistence now relies on data batching for large asynchronous writes, and bulk synchronous reads - resulting in an IO profile focused on storage throughput, not IOPS and low latency

Ultimately, VAST’s testing identified that most KV$ offload operations are not storage-limited but network-limited. For additional performance improvements, the NVIDIA Spectrum-X Ethernet fabric accelerates AI storage fabrics by removing performance bottlenecks common to off-the-shelf (OTS) Ethernet solutions. VAST has integrated with NVIDIA Spectrum-X - more details here.

For the past few months, the VAST and NVIDIA engineering teams have been collaborating on improving NVIDIA NIXL and NVIDIA Dynamo. The following analysis reflects the software enhancements we've worked on that focus on aggregating and accessing KV data in large sequentially-accessed blocks - using new approaches to data storage and access such as KVBM block queuing, transfer list management and support for multi-file layouts.

Previously, we wrote about accelerating inference and the AI data pipeline, where we introduced the critical challenge of KV cache (KV$) management for LLMs. We surveyed the landscape of offloading solutions, including LMCache, vLLM, and NVIDIA Dynamo KVBM, and shared compelling results from our own experiments. Those tests, using NIXL, NVIDIA GPUDirect Storage (GDS), and the vLLM/LMCache integration, demonstrated the massive potential of offloading KV$ to VAST, showing a 10× improvement in prefill time for large-context scenarios.

Today, we're excited to share the next major step in this journey: the collaboration and integration between the VAST AI Operating System and NVIDIA Dynamo. This article will detail Dynamo's sophisticated architecture and show how the VAST AI OS is now integrated as a foundational, high-performance capacity solution to solve the GPU memory wall.

What is NVIDIA Dynamo?

Before we dive into the problem, let's briefly introduce NVIDIA Dynamo. NVIDIA Dynamo is an open-source, high-performance AI inference framework designed to manage the complexities of serving AI models at data center scale. Dynamo is not just a single tool, but is rather a modular system with components such as routing, scheduling, and (most importantly for this discussion) memory management.

One of the key components for solving the memory challenge is the KV Block Manager (KVBM). This is the "brain" within Dynamo specifically designed to intelligently manage the LLM's KV$. It's the KVBM that enables the sophisticated caching system that allows us to break through the GPU memory wall.

The Challenge: The GPU Memory Wall

The KV$ stores the key and value states for every token in a sequence. As a conversation or document gets longer, the cache size grows, consuming precious high-bandwidth memory (HBM) on the GPU. This creates a hard limit, forcing a difficult choice between supporting long context for a few users or short context for many. Expensive HBM becomes the bottleneck for scaling inference.

The Solution: A New Caching Architecture

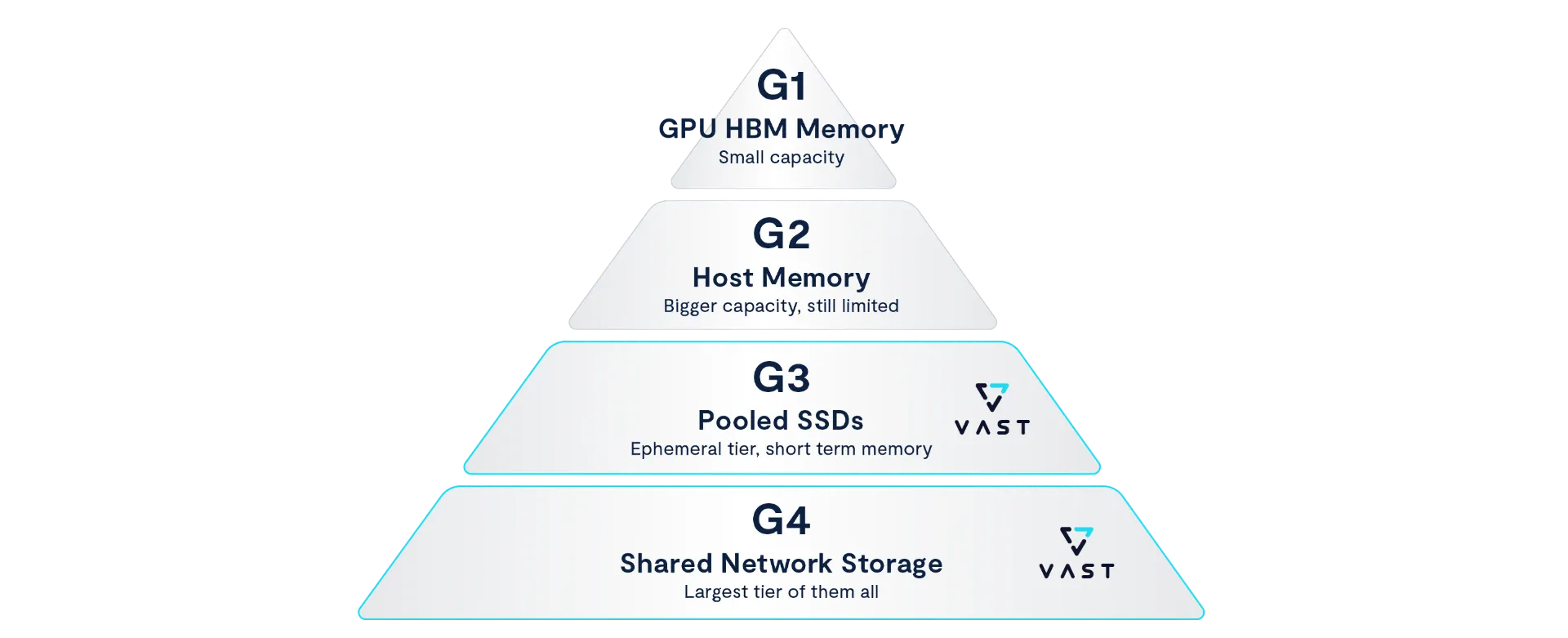

NVIDIA Dynamo's KV Block Manager (KVBM) provides an elegant solution to this problem by creating a sophisticated, multi-tiered system for intelligent memory management. This architecture is designed to place data in the right tier at the right time, balancing speed, capacity, and cost. Understanding the complete vision is key to seeing how VAST fits in.

Here is the four-tiered architecture as defined by NVIDIA:

G1: Device Memory (GPU HBM): The “hot” tier. This is the fastest and most expensive memory, residing directly on the GPU. It holds the cache for tokens being actively computed.

G2: CPU Memory: The “warm” staging tier. This is a larger, but slower, tier using the server's main CPU memory. When HBM is needed for new requests, less-active KV$ blocks are moved here.

G3: Local/Pooled SSDs (High-Capacity Cache): The “cold” tier. This is where the VAST AI OS currently integrates. It is designed for high-performance, massive-scale offloading when the upper tiers are full. VAST provides a shared, scale-out pool of capacity that many GPU nodes can access. In its current implementation, this G3 tier is ephemeral; it serves as a powerful temporary storage layer to support extremely long context windows for the duration of the service's uptime.

G4: Shared Network Storage: This final tier is long-term persistence. This tier allows sessions to be saved permanently and then reloaded hours or days later, even after a full GPU system restart. Persistence is a key difference from the G3 tier, which focuses on extending capacity for live inference sessions, especially multi-turn agentic conversations. In the future, the VAST AI OS will also be integrated at the G4 tier to provide "long-term memory" capability.

This tiered design allows Dynamo to handle memory pressure gracefully. By integrating into the G3 high-capacity cache, the VAST AI OS provides the critical, scalable capacity needed to make massive context windows a reality in production inference environments today.
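To make the demotion flow between tiers concrete, here is a toy sketch of a G1 → G2 → G3 cache with least-recently-used eviction. It is an illustration only and not Dynamo's KVBM implementation: the class name `TieredKVCache`, the block-count capacities, the LRU policy, and the promote-on-read behavior are all assumptions made for this example.

```python
from collections import OrderedDict
from typing import Optional


class TieredKVCache:
    """Toy model of G1 -> G2 -> G3 demotion (hypothetical, not the KVBM API)."""

    def __init__(self, g1_blocks: int, g2_blocks: int):
        # Capacities are counted in blocks; G3 is treated as unbounded here.
        self.capacity = {"G1": g1_blocks, "G2": g2_blocks}
        self.tiers = {"G1": OrderedDict(), "G2": OrderedDict(), "G3": OrderedDict()}

    def _insert(self, tier: str, block_id: str, data: bytes) -> None:
        # Newest entries sit at the end of the OrderedDict.
        self.tiers[tier][block_id] = data
        self.tiers[tier].move_to_end(block_id)
        next_tier = {"G1": "G2", "G2": "G3"}.get(tier)
        if next_tier and len(self.tiers[tier]) > self.capacity[tier]:
            # Tier is over capacity: demote its least-recently-used block.
            old_id, old_data = self.tiers[tier].popitem(last=False)
            self._insert(next_tier, old_id, old_data)

    def put(self, block_id: str, data: bytes) -> None:
        # Drop any stale copy, then place the block in the hot tier.
        for tier in self.tiers.values():
            tier.pop(block_id, None)
        self._insert("G1", block_id, data)

    def get(self, block_id: str) -> Optional[bytes]:
        for name in ("G1", "G2", "G3"):
            if block_id in self.tiers[name]:
                data = self.tiers[name].pop(block_id)
                self._insert("G1", block_id, data)  # promote on access
                return data
        return None  # miss: the caller would have to recompute via prefill

    def location(self, block_id: str) -> Optional[str]:
        for name in ("G1", "G2", "G3"):
            if block_id in self.tiers[name]:
                return name
        return None


cache = TieredKVCache(g1_blocks=2, g2_blocks=1)
for block_id in ("a", "b", "c", "d"):
    cache.put(block_id, b"kv")
print({b: cache.location(b) for b in ("a", "b", "c", "d")})
```

With two G1 slots and one G2 slot, the oldest block ends up in G3 after four inserts, and reading it back promotes it to G1 while pushing colder blocks down.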

Dynamo architecture https://docs.nvidia.com/dynamo/archive/0.2.0/architecture/kv_cache_manager.html

Architecting For KV$ Offload

General Architecture Considerations

KV$ is stored in large blocks, making KV$ offload a workload that demands high throughput

The network and supporting storage subsystems must scale with/to the count of GPUs

Each host’s network access to storage will determine its TTFT performance

KV$ carries conversation information and should be encrypted using an encryption key per-customer, following the best practices of securing user information
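One possible way to obtain a distinct key per customer is to derive it from a master secret with HKDF (RFC 5869). The sketch below uses only the Python standard library. The function names, the `kv-cache/` info prefix, and the all-zero placeholder master key are assumptions for illustration; a production system would source the master key from a key-management service and pair the derived key with an authenticated cipher.

```python
import hashlib
import hmac


def hkdf_sha256(master_key: bytes, info: bytes, length: int = 32, salt: bytes = b"") -> bytes:
    """HKDF extract-then-expand with SHA-256 (RFC 5869)."""
    if not salt:
        salt = b"\x00" * hashlib.sha256().digest_size
    # Extract: concentrate the input key material into a pseudorandom key.
    prk = hmac.new(salt, master_key, hashlib.sha256).digest()
    # Expand: chain HMAC blocks until enough output bytes are produced.
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def derive_customer_key(master_key: bytes, customer_id: str) -> bytes:
    # Hypothetical helper: binds the derived key to one customer identifier.
    return hkdf_sha256(master_key, info=b"kv-cache/" + customer_id.encode("utf-8"))


master = bytes(32)  # placeholder only; never use a constant key in practice
key_a = derive_customer_key(master, "customer-a")
key_b = derive_customer_key(master, "customer-b")
print(len(key_a), key_a != key_b)
```

Deriving keys this way keeps one customer's KV$ blocks unreadable with another customer's key, without having to store a separate secret per customer.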

VAST Data Architecture Considerations

Any VAST cluster supports KV$ offload today via Dynamo’s transfer library (NIXL)

Use the VAST NFS driver to enable multipathing and saturate the I/O needs of any GPU machine

VAST systems can saturate any client’s I/O needs when using NFS-over-TCP, even on a lossy network. Even so, VAST recommends mounting VAST clusters over RoCE (RDMA over Converged Ethernet) to optimize latency, reduce host CPU utilization, and unlock GPUDirect Storage for direct KV$ retrieval from storage into GPU memory.

Calculating KV$ Footprint

KV$ creates gigabytes of data per prompt and is strongly dependent on model parameters:

Let’s take Llama 3.1-405B as an example for determining the size of the KV$ for a single token using standard parameters (126 layers, 8 KV heads, 128 head dimension, and FP16 storage):

Size per Token = 126 * 2 ( for Keys and Values) * 8 * 128 * 2 (bytes) = 516,096 bytes

This is exactly 504 KiB per token. For a single user utilizing a full 127,188-token context window, the cache becomes massive:

504 KiB/token * 127,188 tokens ≈ 61 GiB (about 65.6 GB in decimal units)
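The arithmetic above can be reproduced for other models with a short helper. This is a sketch under the same assumptions as the text (separate key and value tensors per layer, FP16 storage); the function name `kv_bytes_per_token` is hypothetical.

```python
def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int, bytes_per_value: int = 2) -> int:
    """KV$ bytes for one token: layers * (K and V) * KV heads * head dim * bytes per value."""
    return layers * 2 * kv_heads * head_dim * bytes_per_value


# Llama 3.1-405B parameters as quoted in the text.
per_token = kv_bytes_per_token(layers=126, kv_heads=8, head_dim=128)
context_bytes = per_token * 127_188

print(f"per token: {per_token:,} bytes = {per_token / 1024:.0f} KiB")
print(f"127,188 tokens: {context_bytes / 2**30:.1f} GiB = {context_bytes / 1e9:.1f} GB")
```

Swapping in a different layer count, KV head count, or `bytes_per_value` (for example 1 for an 8-bit KV$) shows how strongly the footprint depends on model parameters.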

Modeling the "Data Dump" Scenarios

While it helps to have CPU memory and local SSDs to store data in, the large amount of KV$ data created per user session calls for scalable, low-cost storage infrastructure. The purpose of the G4 tier is to support massive contexts and serve them back with low latency to multi-turn inference sessions running on multiple GPU nodes. KV$ retrievals arrive as high-throughput read events that must complete quickly to keep TTFT low, rather than as a gentle trickle of data. Consider these scenarios:

The 'Power User' Returns: A user accumulates 128,000 tokens worth of context (~61 GB) and pauses. Their session is evicted to VAST to free up memory space on a host to service other users. Upon the return of the original user, the system must rehydrate 61GB of KV$ back into a machine’s GPU memory. This is a 100% cache hit event.

The RAG Workflow: An analyst uploads 64,000 tokens worth of documents (~30 GB). Every subsequent question triggers a reload of that 30 GB base cache, resulting in a >90% cache hit rate per prompt. Queries that reference the same document set but come from a different analyst will also enjoy a >90% cache hit rate per prompt.

How Much Capacity Do You Need?

The following is an example formula that can guide customers in sizing for KV$:

Total Capacity = Number of Users * Average KV$ per User * Retention Multiplier

The retention multiplier is the number of past conversations that are stored for a user. Let's use our Llama 3.1 405B example, and plan for 100,000 concurrent users or agents:

Average KV$ per User: As large context windows (e.g., 128,000 tokens) are becoming standard for tasks like document analysis or long-running conversations, the cache size per user balloons. A 128K-token context is roughly 61 GB in size. Let's assume a more typical average prompt is 64K tokens, roughly 30 GB

Retention Multiplier: We'll use 15 to provide a good user experience

Calculation: 100,000 users * 30 GB * 15 = 45,000,000 GB = 45 PB

To support 100,000 users/agents on a large-context model with a robust conversation history, you need 45 petabytes of storage.
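The sizing formula is easy to turn into a small calculator for trying other user counts or retention policies. This is a minimal sketch; the function name is hypothetical and the inputs are the example values from the text.

```python
def total_capacity_gb(users: int, avg_kv_gb_per_user: float, retention_multiplier: int) -> float:
    """Total Capacity = Number of Users * Average KV$ per User * Retention Multiplier."""
    return users * avg_kv_gb_per_user * retention_multiplier


capacity_gb = total_capacity_gb(users=100_000, avg_kv_gb_per_user=30, retention_multiplier=15)
capacity_pb = capacity_gb / 1_000_000  # decimal units: 1 PB = 1,000,000 GB

print(f"{capacity_gb:,.0f} GB = {capacity_pb:.0f} PB")
```

Because the formula is a plain product, halving the retention multiplier or the average context length halves the required capacity.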

Understanding the KV$ I/O Profile

Asynchronous Writes (Eviction): When the GPU HBM (G1) and CPU Memory (G2) tiers fill up, the KVBM evicts less-active cache blocks to the storage tier. This operation is asynchronous; it does not happen in the immediate, real-time path of generating the next token. This means the system can intelligently batch these writes into larger, more efficient operations. There is no strict, real-time latency or high-IOPS requirement for this eviction process.
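Because eviction is off the critical path, small blocks can be coalesced before they are written. The sketch below shows one simple way to group evicted blocks into larger write batches. It is not Dynamo or NIXL code: `batch_blocks` and the target batch size are hypothetical names and values chosen for illustration.

```python
from typing import Iterable, Iterator, List


def batch_blocks(blocks: Iterable[bytes], target_bytes: int) -> Iterator[List[bytes]]:
    """Group evicted blocks into batches of at least `target_bytes` each."""
    batch: List[bytes] = []
    size = 0
    for block in blocks:
        batch.append(block)
        size += len(block)
        if size >= target_bytes:
            yield batch  # large enough for one efficient sequential write
            batch, size = [], 0
    if batch:
        yield batch  # flush the remainder


# Ten small blocks (1 KiB each here, to keep the demo light), 4 KiB target.
evicted = [bytes(1024) for _ in range(10)]
batches = list(batch_blocks(evicted, target_bytes=4 * 1024))
print([len(b) for b in batches])
```

In a real system each batch would be handed to an asynchronous writer; here the batches are only collected so the grouping is visible.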

The offload block size granularity is controlled at the Dynamo framework level. Currently, it mirrors the inference engine block size, usually 16 or 32 tokens, but it could be increased to produce larger I/O sizes. Upon eviction, the KVBM writes all of the model layers for each block, which amounts to:

Total Cache per Token (all 126 layers): 504 KiB

Total Cache per Block (16 tokens): 504 KiB/token * 16 tokens = 8,064 KiB ≈ 8 MiB

Data Written per GPU per Evicted Block (8-way tensor parallelism): 8,064 KiB / 8 GPUs = 1,008 KiB ≈ 1 MiB
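The same per-block arithmetic can be checked for both common block sizes. This is a small sketch; the helper name is hypothetical, and the 504 KiB per-token figure and the 8-GPU split are taken from the text.

```python
from typing import Tuple

KIB_PER_TOKEN = 504  # all 126 layers, from the per-token calculation


def eviction_write_kib(tokens_per_block: int, gpus: int = 8) -> Tuple[int, float]:
    """Return (KiB per evicted block, KiB written per GPU) for a given block size."""
    block_kib = KIB_PER_TOKEN * tokens_per_block
    return block_kib, block_kib / gpus


for tokens in (16, 32):
    block_kib, per_gpu_kib = eviction_write_kib(tokens)
    print(f"{tokens} tokens: {block_kib:,} KiB per block, {per_gpu_kib:,.0f} KiB per GPU")
```

At 16 tokens each GPU writes about 1 MiB per evicted block; doubling the block size to 32 tokens doubles that to about 2 MiB.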

Latency-Critical Reads (Retrieval): When a user session/conversation is reactivated, the system must fetch the offloaded KV$. While modern inference engines utilize pipeline parallelism and batching to keep the GPU compute units active with other concurrent requests, the TTFT for the specific re-activated user/conversation is entirely bound by how fast this data can be retrieved.

Therefore, the goal is not to maximize IOPS (processing many small requests) or to minimize per-request latency, but to maximize throughput (delivering a large stream of data as quickly as possible). AI inference systems need to stream GBs of data linearly into HBM to unblock that specific sequence. This is a bandwidth-intensive workload where the storage tier must deliver the entire KV$ at line rate to minimize the response time perceived by the user.

NOTE: At larger batch sizes (inference on multiple prompts at once for improved token serving efficiency - less power per token), KV$ load becomes an asynchronous operation, as the next batch is prepared in memory while the existing batch is running.

Real-World Performance: VAST and Dynamo in Action

Hardware:

Client system: NVIDIA HGX™ H100

GPUs: 8x NVIDIA H100 80GB HBM3

Network link: 2x 100 Gbit/s Ethernet, BlueField-3 integrated ConnectX

Software: NVIDIA Dynamo pre-release 0.7.0 (Nov 21, 2025, modified) + vLLM v0.11.0 (modified) + NIXL 0.7.1

Model: clowman/Llama-3.1-405B-Instruct-AWQ-Int4

Run mode: Tensor parallelism across 8 GPUs

Storage: NFS (TCP, VAST NFS multipath, VAST server)

Prompt: 127,188 tokens

Results

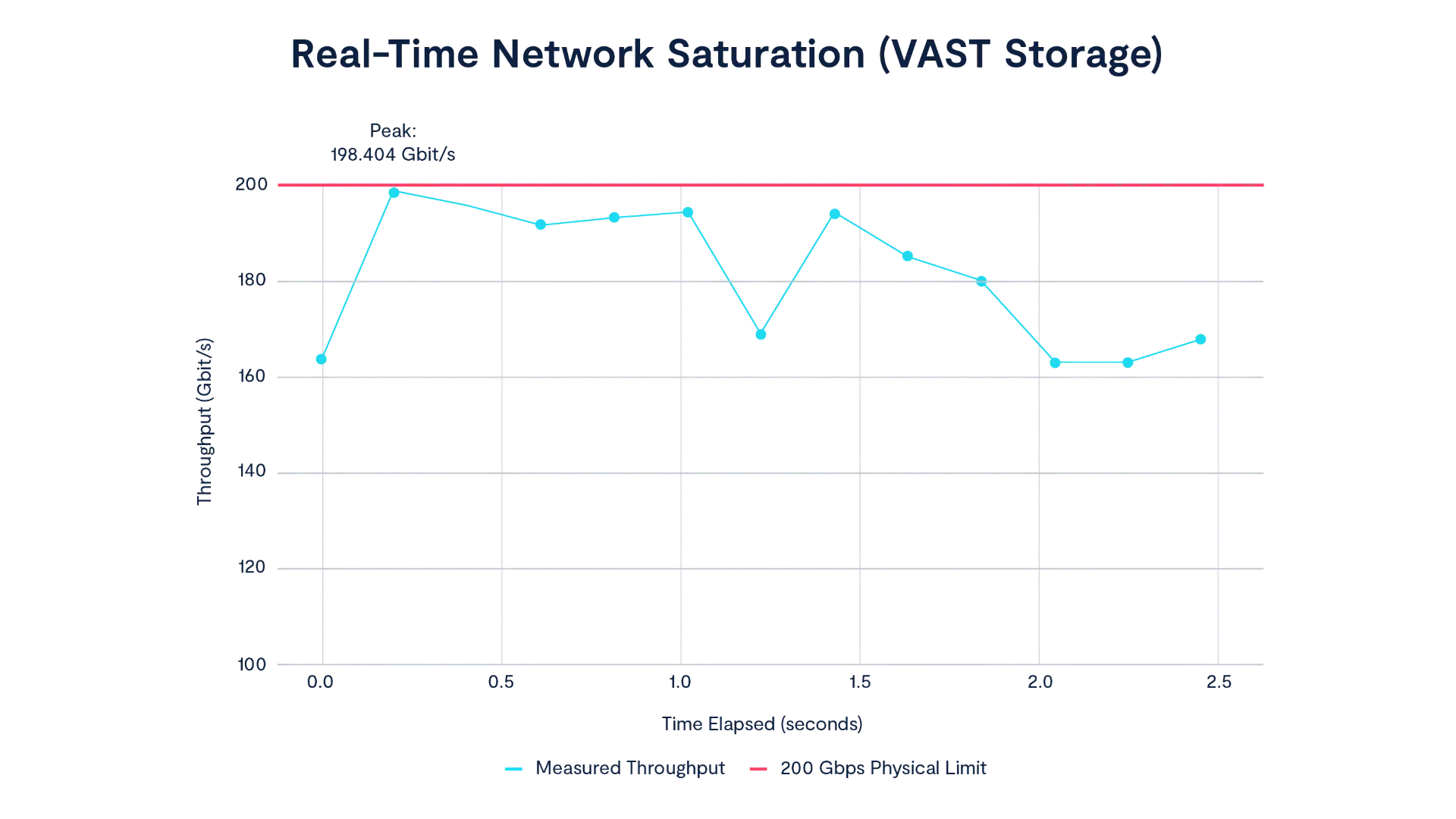

In this configuration, tested in collaboration with our partner CoreWeave, VAST consistently saturates the network during KV$ retrieval, sustaining over 90% of line rate (181 Gbps) and peaking at ~99% (198 Gbps) on a 200 Gbps link.

Note: VAST’s NFS multipath driver can bind an arbitrary number of client interfaces into a single mount point, enabling extremely high aggregate throughput across enterprise environments.

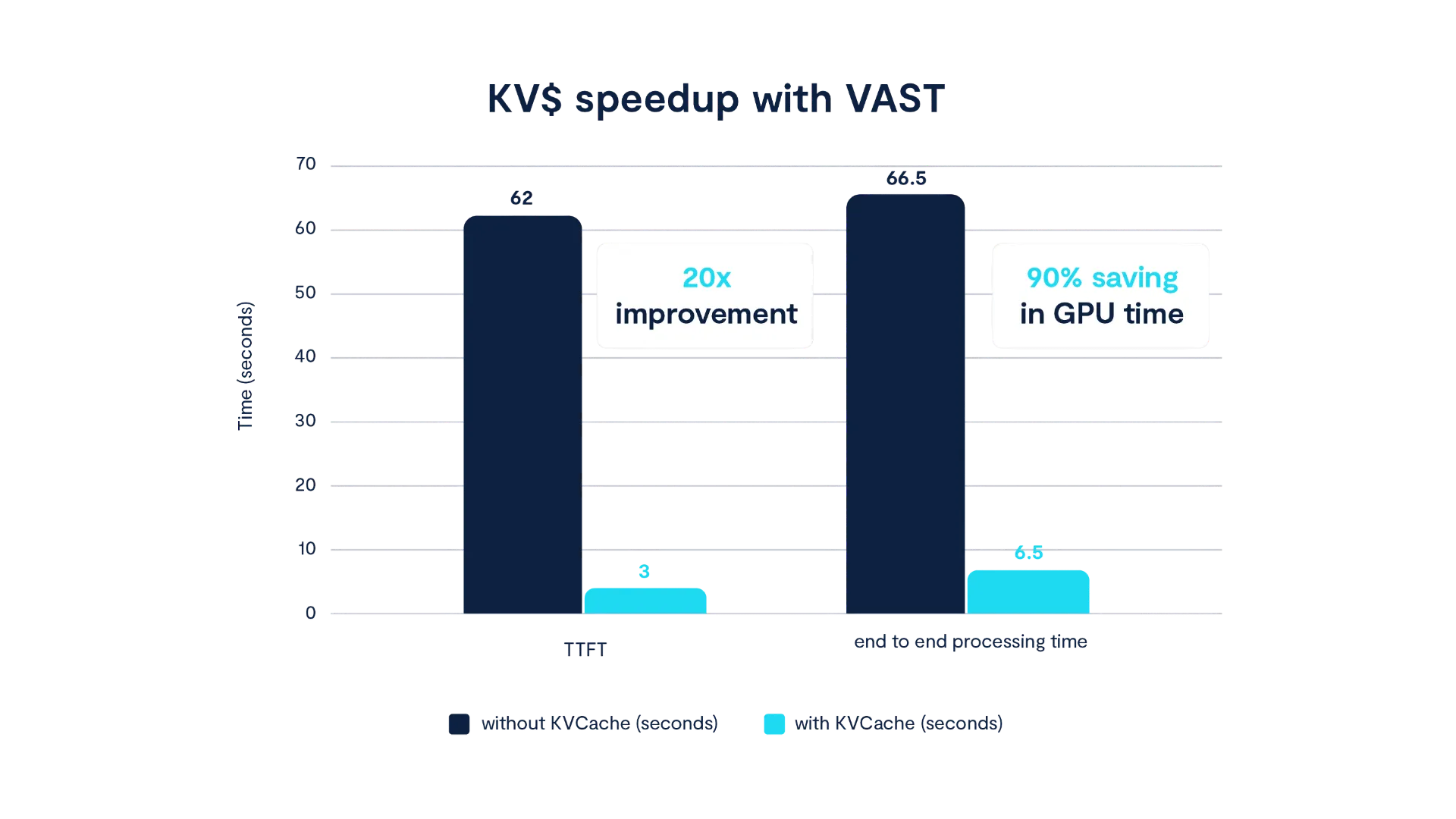

In terms of the inference user experience, we observed the following performance:

TTFT with prefill compute: 62 seconds

TTFT with KV$ load from VAST: 3 seconds

Effective speedup: 20×

Overall GPU time reduction: ~90% end-to-end
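As a rough sanity check, the measured load time can be compared with a pure bandwidth estimate. The sketch below assumes the KV$ for the 127,188-token prompt is stored in FP16 at 504 KiB per token (as in the footprint calculation), that the whole cache crosses the 200 Gbps link, and that protocol and software overheads are ignored. These are simplifying assumptions, and the function name is hypothetical.

```python
BYTES_PER_TOKEN = 126 * 2 * 8 * 128 * 2  # 516,096 bytes per token, FP16 KV$ assumed


def transfer_seconds(tokens: int, link_gbps: float) -> float:
    """Time to move the KV$ for `tokens` tokens at a given network rate."""
    bits = tokens * BYTES_PER_TOKEN * 8
    return bits / (link_gbps * 1e9)


sustained = transfer_seconds(127_188, link_gbps=181)  # sustained rate from the results
peak = transfer_seconds(127_188, link_gbps=198)       # peak rate from the results

print(f"sustained: {sustained:.2f} s, peak: {peak:.2f} s")
```

Under these assumptions the estimate lands a little under 3 seconds at the sustained rate, which is in line with the measured TTFT and with the observation that retrieval is network-limited.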

Next Steps

We invite developers to explore, reuse, and build on the work behind our benchmarks and integrations. The full walkthrough is available here.

In a future blog post, we’ll discuss how inference requirements evolve for enterprises that prioritize governance, security, and reliability. In the meantime, across clouds or on-prem - wherever you have VAST - you can accelerate inference today!

Dynamo and VAST

The combination of NVIDIA Dynamo with the VAST AI Operating System offers a practical and powerful solution to one of the biggest challenges in AI inference today. By embracing an intelligent memory architecture, we can build more scalable, resilient, and cost-effective infrastructure for the next generation of AI applications.

To learn more about NVIDIA Dynamo, visit the official GitHub repository and documentation.

Experience VAST’s industry-leading approach to AI and data infrastructure at VAST Forward, our inaugural user conference, February 24–26, 2026 in Salt Lake City, Utah. Engage with VAST leadership, customers, and partners through deep technical sessions, hands-on labs, and certification programs. Join us.