White Paper

VAST AI Operating System

Introduction

AI has emerged as the most transformative technology of our time. It is redefining how knowledge is produced, how decisions are made, and how entire scientific and industrial domains push the boundaries of what is possible. AI is accelerating discovery, amplifying human capability, and turning formerly intractable problems into solvable ones across every discipline. It is reshaping how we teach and learn, engineer complex systems, build and ship software, personalize services at scale, and diagnose and document care. Yet the magnitude of this transformation is not merely in what AI can do today, but in the rate at which its capabilities are improving. Every breakthrough becomes a stepping stone to the next, and the pace is outrunning all assumptions of earlier eras of technology.

Bringing these breakthroughs from the lab into the world demands more than clever algorithms; it requires an equally unprecedented rethinking of the physical, computational, and organizational infrastructure needed to make intelligence possible at scale. The world is already feeling the strain: hyperscale datacenter construction is outpacing every other category of industrial development, regional power grids are being re-architected to supply multi-gigawatt AI campuses, and policymakers are confronting the uncomfortable fact that AI demand is beginning to shape national energy strategy. These pressures dominate headlines because they reveal a structural constraint: AI has outgrown the scale of any single building, any single campus, and even the capacity of regional transmission infrastructure. The bottleneck in AI’s progress is no longer the ingenuity of model designers, but our ability to build, connect, and govern the massive, distributed machinery required to run these systems reliably, economically, and responsibly.

As we narrow the scope from national infrastructure down to the operational realities within and across AI facilities, the complexity deepens. Modern AI workloads require storage, networking, and compute to operate not as independent subsystems, but as a tightly coupled, data-centric fabric. Clusters must move and process petabytes of data with global consistency; they must elastically federate across datacenters, regions, or even continents; and they must do so with automation, auditability, and fine-grained data governance that satisfies regulatory, operational, and societal demands.

At this scale, the true constraint is not raw hardware capacity, but the absence of an integrated operating system that coordinates these distributed resources as a coherent whole. This need for a unified, data-aware, federated control plane is precisely what led us to build the VAST AI Operating System.

What is the VAST AI Operating System?

The VAST AI Operating System is a software platform that simplifies and accelerates the entire AI pipeline—from raw data ingest, through context analysis, to intelligent action—as a continuous, real-time flow. It is designed to be the foundation upon which AI-driven applications and autonomous agents are built, deployed, and managed at scale. Think of it as an operating system for the datacenter itself: one that manages the underlying resources across hundreds to thousands of systems, provides essential shared services, and offers a consistent environment for AI applications to run—much as a traditional OS does for a single machine, but built for the massive distributed scale and unique demands of modern AI.

The platform’s origins trace back to a fundamental rethinking of how distributed systems should be built. In 2016, VAST introduced the Disaggregated and Shared Everything (DASE) architecture, which separates compute logic from physical storage media while allowing all compute nodes to access all storage devices in parallel via high-performance NVMe-over-Fabrics. This “shared everything” approach, combined with novel transactional data structures, eliminates the data partitioning and complex inter-server coordination that plague traditional “shared-nothing” designs. The result is a system that scales linearly from terabytes to exabytes, breaks the traditional tradeoffs between performance and capacity, and simplifies data management across all-flash infrastructure. DASE provided the foundation for VAST’s Universal Storage platform in 2019, its evolution into an AI Data Platform by 2023, and ultimately the VAST AI Operating System in 2025. As a software-defined system, it runs on your choice of vendor server or cloud platform, ensuring total flexibility with no hardware lock-in.

Built upon this DASE foundation, the AI Operating System is organized into two broad tiers: foundational data platform services and specialized execution engines. The data platform services—DataStore, DataBase, and DataSpace—address the storage, organization, and global accessibility of data.

DataStore provides the high-performance, resilient file, object, and block storage where data lives, building on VAST’s Universal Storage heritage to ingest, store, and deliver rapid access to the massive datasets AI demands.

DataBase extends this with a purpose-built database for AI, capable of handling structured data such as enterprise data warehouse tables alongside contextual metadata, vectors for similarity search, real-time streams, catalogs, and logs—making all of this data queryable and usable for model training and inference.

DataSpace provides the overarching globally distributed data computing layer, enabling organizations to manage and access data across on-premises, cloud, and edge environments through a unified namespace and access framework.

Together, these services ensure that data of any type, at any location, are continuously available to the AI pipeline without the silos and fragmentation that traditionally impede large-scale workloads.

The execution engines transform this data foundation into intelligent, autonomous action.

DataEngine serves as the orchestration supervisor for the AI OS, transforming distributed computing resources into an integrated execution environment by managing serverless function pipelines driven by event triggers and Python-based microservices. By decoupling applications from data gravity—intelligently moving compute to where the data resides—it optimizes for cost, execution time, and hardware requirements including GPUs, and includes a native Kafka-compatible Event Broker that stores message topics as VAST Tables for global ordering and real-time SQL querying of live streams.

SyncEngine operates within the DataEngine as a highly scalable data discovery and migration service, indexing and synchronizing data from external sources—S3 buckets, file systems, SaaS platforms—into the VAST DataSpace, maintaining a durable searchable index and triggering enrichment pipelines automatically as new data is onboarded.

InsightEngine focuses on enriching data, providing services that compute, index, maintain, and serve vector embeddings for data as it evolves to enable read-time semantic search across all data for RAG-enabled applications and agents.

AgentEngine provides the production-grade deployment and orchestration layer for AI agents, bridging the gap between notebook prototyping and enterprise-scale execution with a robust runtime for long-running containerized services, lifecycle management, persistent state, and secure service discovery—all fully auditable and traceable for compliance across complex multi-agent deployments.
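The DataEngine’s idea of storing message topics as tables, so that a stream has a global order and can be queried with SQL while it is live, can be illustrated without any VAST software. The minimal sketch below uses Python’s built-in `sqlite3` module purely as a stand-in for a VAST Table; the table name `topic_events` and the `open_topic`, `produce`, and `consume` helpers are hypothetical and are not the Event Broker’s API. An auto-incrementing key gives each message one position in a single order, and because the topic is just a table, an ordinary `GROUP BY` query runs against it with no export step.

```python
import sqlite3
from typing import List, Tuple


def open_topic(conn: sqlite3.Connection) -> None:
    """Create a table that plays the role of one message topic (hypothetical schema)."""
    # INTEGER PRIMARY KEY AUTOINCREMENT assigns each message a unique, increasing
    # offset, which gives every message one position in a global order.
    conn.execute(
        "CREATE TABLE topic_events ("
        " msg_offset INTEGER PRIMARY KEY AUTOINCREMENT,"
        " msg_key TEXT NOT NULL,"
        " msg_value TEXT NOT NULL)"
    )


def produce(conn: sqlite3.Connection, key: str, value: str) -> int:
    """Append a message and return the offset it was assigned."""
    cur = conn.execute(
        "INSERT INTO topic_events (msg_key, msg_value) VALUES (?, ?)", (key, value)
    )
    conn.commit()
    return cur.lastrowid


def consume(conn: sqlite3.Connection, from_offset: int,
            limit: int = 100) -> List[Tuple[int, str, str]]:
    """Read messages in offset order starting at from_offset, like a consumer position."""
    return conn.execute(
        "SELECT msg_offset, msg_key, msg_value FROM topic_events"
        " WHERE msg_offset >= ? ORDER BY msg_offset LIMIT ?",
        (from_offset, limit),
    ).fetchall()


conn = sqlite3.connect(":memory:")
open_topic(conn)
for key, value in [("sensor-a", "21.5"), ("sensor-b", "19.0"), ("sensor-a", "22.1")]:
    produce(conn, key, value)

print(consume(conn, from_offset=2))  # [(2, 'sensor-b', '19.0'), (3, 'sensor-a', '22.1')]
# The same topic answers analytical SQL directly:
print(conn.execute(
    "SELECT msg_key, COUNT(*) FROM topic_events GROUP BY msg_key ORDER BY msg_key"
).fetchall())  # [('sensor-a', 2), ('sensor-b', 1)]
```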

The power of this architecture lies in how these layers compose into a seamless, end-to-end workflow. The SyncEngine discovers and onboards data from across the enterprise into the DataStore, preserving identity and continuity. The DataEngine processes and streams that data through event-driven serverless functions and the Event Broker, ensuring it is AI-ready. The InsightEngine leverages content-specific chunking and embedding models to contextualize the processed data, generating vector embeddings within the DataBase that make information immediately queryable and readable for large language models. And the AgentEngine provides the stable, secure environment at the top of the stack where AI agents execute complex, multi-step tasks by leveraging tools and data across the entire ecosystem.
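The read side of that workflow, finding the stored content most similar to a query, reduces to a nearest-neighbor search over embeddings. The minimal sketch below uses brute-force cosine similarity over a plain dictionary. The three-dimensional vectors are hand-made stand-ins for real model embeddings, and `upsert` and `top_k` are hypothetical helpers, not InsightEngine calls. Replacing a document’s vector as soon as the document changes is what keeps search results current as data evolves.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def upsert(index: Dict[str, Vector], doc_id: str, vector: Vector) -> None:
    """Replace a document's embedding so later reads never see the stale version."""
    index[doc_id] = vector


def top_k(query: Vector, index: Dict[str, Vector], k: int = 2) -> List[Tuple[str, float]]:
    """Brute-force nearest neighbors by cosine similarity, best match first."""
    scored = [(doc_id, cosine(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


index: Dict[str, Vector] = {
    "gpu-guide": [0.9, 0.1, 0.0],
    "hr-policy": [0.0, 0.2, 0.9],
    "nvme-notes": [0.7, 0.6, 0.1],
}
query = [1.0, 0.2, 0.0]
print([doc for doc, _ in top_k(query, index)])  # ['gpu-guide', 'nvme-notes']

# The HR document is rewritten and re-embedded; the next search reflects it.
upsert(index, "hr-policy", [0.95, 0.2, 0.05])
print(top_k(query, index, k=1)[0][0])  # hr-policy
```

A production system would replace the linear scan with an approximate nearest-neighbor index over much larger vectors; the brute-force version is used here only because its behavior is easy to verify.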

By consolidating infrastructure that traditionally required separate silos—object stores, analytics clusters, Kubernetes orchestration, and message brokers—into a single pool of resources governed by a unified control plane, the VAST AI Operating System eliminates the performance bottlenecks and operational fragmentation that have historically constrained AI at scale. It remains entirely model-agnostic, runs on any preferred hardware or cloud, and imposes no vendor lock-in—acting as the orchestrator that bridges infrastructure and intelligence with the unified data layer and management framework necessary for seamless performance at any scale.

What is Possible with the VAST AI OS?

With the foundational challenges of scale, coordination, and governance now addressed, organizations can shift their focus from managing infrastructure to advancing AI itself.

For model trainers, this means training runs are no longer constrained by single-datacenter capacity. Multi-site training becomes operationally viable, because runs can access common data from a globally consistent namespace, maintain predictable performance through failures, and checkpoint at scale without destabilizing the entire data platform.

For inference operators, this means models deploy closer to data sources across on-premises, cloud, and edge environments, while requests are intelligently routed and governance policies are applied uniformly and automatically. Vector embeddings remain current as data evolves, allowing inference to be consistent regardless of scale or geography.

For agentic systems, this means agents discover, access, and reason over diverse data types—structured tables, vectors, streams, logs—through a single, scalable, and observable interface. Long-running workflows maintain persistent state across failures, compose multiple models and tools, and generate complete audit trails, all within a globally unified namespace.

By unifying storage, networking, compute, orchestration, observability, and governance in a single, federated control plane, the VAST AI OS allows every aspect of operational AI to scale within and across datacenters without fighting the infrastructure beneath them. The system abstracts complexity, scales linearly, and enforces governance so that engineering efforts shift from integrating disparate systems to solving the problems AI was meant to address. With the VAST AI OS, you can deploy new models, onboard new data sources, and scale workloads at the speed of your ambitions rather than the pace of infrastructure expansions.

The following sections detail how the VAST AI OS makes this possible: the architecture, coordination mechanisms, and operational semantics designed specifically to transform distributed resources into a coherent operating system for AI.

The VAST Data Platform

How It Works

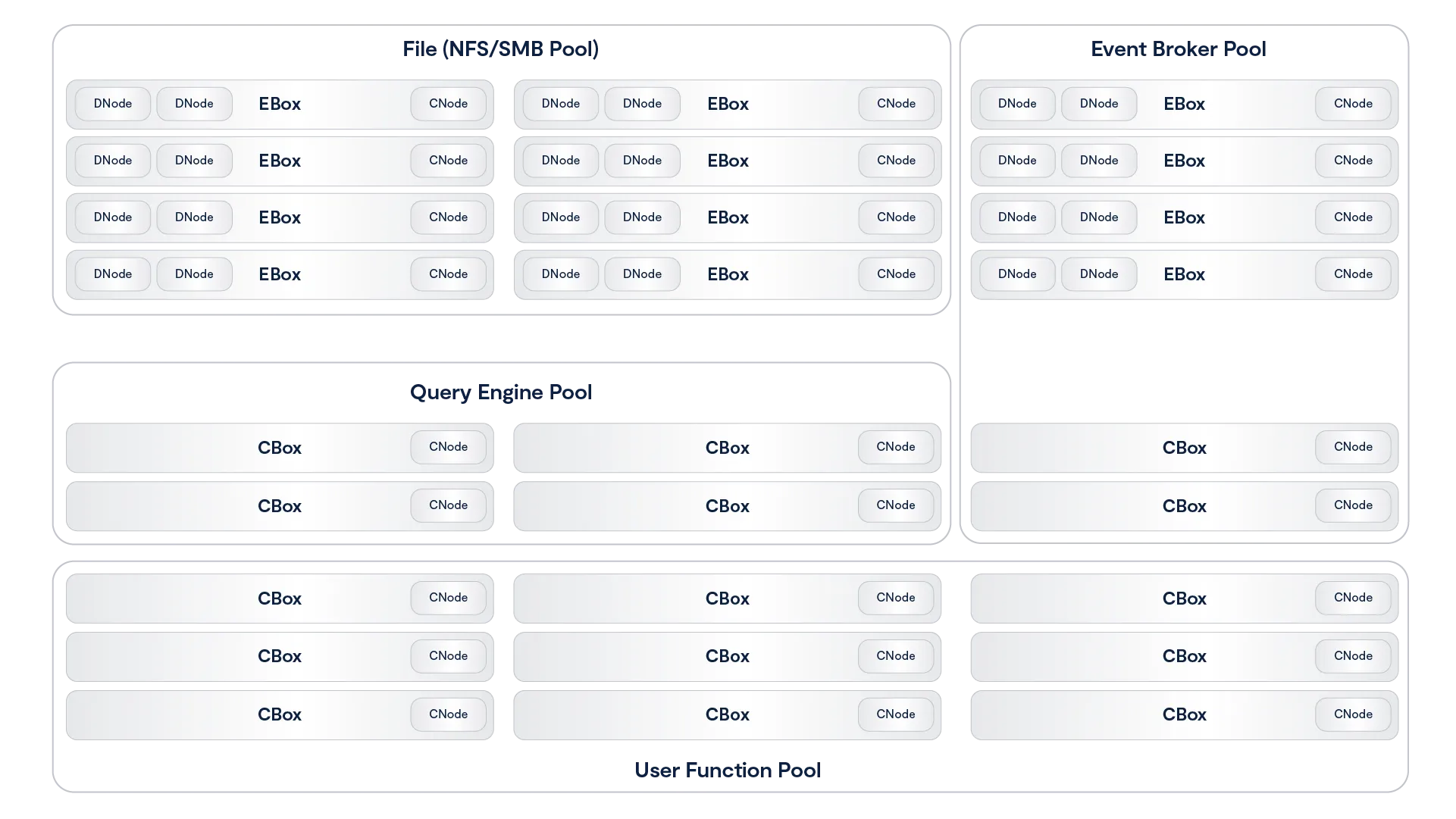

The VAST Data Platform is a unified and containerized software environment that brings different aspects of functionality to different application consumers.

The system is the first data platform to bring together native support for tabular data (either written natively or converted from open formats such as Parquet), data streams and notifications that work with Kafka endpoints, and unstructured data accessed through high-performance enterprise file and object protocols such as NFS, SMB, and S3. By adding a serverless computing engine (supporting functions written in Python), the VAST Data Platform brings life to data by creating an environment for recursive AI computing. Data events become the application triggers that prompt the system to catalog data, and each cataloging event may in turn trigger additional functions such as AI inference, metadata enrichment, and subsequent AI model retraining. By marrying data with code, the system can recursively compute on both new and long-term data, becoming ever more intelligent by combining new interactions with past realizations in real time.
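The recursive, event-driven pattern just described can be sketched as a small in-process event loop in which each function may emit new events that trigger further functions. Everything here is hypothetical: the event names (`object.created`, `catalog.updated`, `inference.done`), the `TriggerBus` class, and the stub handlers stand in for DataEngine triggers and real inference. The `max_steps` cap is a safeguard added by this sketch so that a cycle of triggers cannot run forever.

```python
from collections import deque
from typing import Callable, Dict, List, Tuple

Event = Tuple[str, dict]                 # (event type, payload)
Handler = Callable[[dict], List[Event]]  # a function that may emit follow-on events


class TriggerBus:
    """Toy event router: handlers run when their event type fires and may emit more events."""

    def __init__(self, max_steps: int = 100) -> None:
        self.handlers: Dict[str, List[Handler]] = {}
        self.history: List[str] = []
        self.max_steps = max_steps

    def on(self, event_type: str, handler: Handler) -> None:
        self.handlers.setdefault(event_type, []).append(handler)

    def emit(self, event: Event) -> None:
        queue = deque([event])
        steps = 0
        while queue and steps < self.max_steps:
            event_type, payload = queue.popleft()
            self.history.append(event_type)
            steps += 1
            for handler in self.handlers.get(event_type, []):
                queue.extend(handler(payload))


# Hypothetical stage functions standing in for cataloging, inference, and enrichment.
def catalog(obj: dict) -> List[Event]:
    return [("catalog.updated", {**obj, "format": obj["name"].rsplit(".", 1)[-1]})]


def infer(entry: dict) -> List[Event]:
    if entry["format"] != "jpg":  # only images go to the (stubbed) vision model
        return []
    return [("inference.done", {**entry, "labels": ["person"]})]


enriched: List[dict] = []


def enrich(result: dict) -> List[Event]:
    enriched.append(result)  # e.g. write labels back as searchable metadata
    return []


bus = TriggerBus()
bus.on("object.created", catalog)
bus.on("catalog.updated", infer)
bus.on("inference.done", enrich)
bus.emit(("object.created", {"name": "cam1/frame42.jpg"}))
print(bus.history)  # ['object.created', 'catalog.updated', 'inference.done']
```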

Unlike batch-based computing architectures, the VAST architecture leverages a real-time write buffer to capture and manipulate data as it flows into the system. This buffer absorbs small, random writes (such as an event stream or a database entry) as well as massively parallel writes (such as application checkpoint file creation) into a persistent memory space. Data in the buffer is immediately available for retrieval and for correlative analysis against the rest of the system’s corpus, most of which is stored in low-cost, hyperscale-grade flash archive storage. With a focus on deep learning, the platform works to derive and catalog structure from unstructured data, serving as the foundation for automation and discovery that harnesses data captured from the natural world.

The functional areas of the system break down into a few components, which all combine into one Platform:

The VAST DataStore is the storage foundation of the Platform. Previously known as VAST’s Universal Storage offering, the DataStore is responsible for persisting data and making it available via all of the different protocols that applications may write to or read from. The DataStore can be scaled to exabytes within a single data center and is renowned for breaking the fundamental tradeoff between performance and capacity, so that customers can manage their files, objects, and tables on a single tier of affordable flash, making it ready for data computing at any scale and any depth. The DataStore’s ultimate purpose is to capture and serve the immense volume of raw, unstructured, and streaming data that originates in the natural world.

The VAST DataBase is the system’s database management service that writes tables into the system and enables real-time, fine-grained queries into VAST’s reserves of tabular data and cataloged metadata. Unlike conventional database management systems, the VAST DataBase is transactional like a row-based OLTP database, uses a columnar data structure to process analytical queries like a flash-based data warehouse, and has the scale and affordability of a data lake. The DataBase’s ultimate purpose is to organize the corpus of knowledge that lives in the VAST DataStore and to catalog the semantic understanding of unstructured data.

The VAST DataEngine (shipping in 2024) is the system’s declarative function execution environment, which enables serverless functions (much like AWS Lambda) and event notifications to be deployed in standard Linux containers. With a built-in scheduler and cost optimizer, the DataEngine can be deployed on CPU, GPU, and DPU architectures to leverage scalable commodity computing to bring life to data. Unlike classic approaches to computing, the DataEngine bridges the divide between event-based and data-driven architectures, enabling you to stream data into your systems of insight and derive real-time insight by analyzing, inferring on, and training against all of your data. The DataEngine’s ultimate purpose is to transform raw, unstructured data into information by inferring on and analyzing the data’s underlying characteristics.

The VAST DataSpace then takes these concepts and extends them across data centers, building a unified computing fabric and storage namespace that is intended to break the classic tradeoffs of geo-distributed computing. The DataSpace provides global data access by synchronizing metadata and presenting data through remote data caches, while allowing each site to assume temporary responsibility for consistency management at extremely fine levels of namespace granularity (file-level, object-level, table-level). With this decentralized and fine-grained approach to consistency management, the VAST Data Platform is a global data store that ensures strict application consistency while also giving remote functions high performance. The DataSpace makes access easy by eliminating islands of information, while keeping pipes full and remote CPUs and GPUs busy by intelligently prefetching and pipelining data. The DataEngine then layers on top of this namespace to create a flexible computational fabric that can route functions to the data (when data gravity is greater) or route data to the function (when processing resources are scarce near the data). In this way, the DataSpace helps organizations fight against both processor and data gravity as they build global AI computing environments. The DataSpace’s ultimate purpose is to be the Platform’s interface to the natural world, extending access on a global basis and enabling federated AI training and AI inference.
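The choice between routing functions to data and routing data to functions can be framed as a comparison of two completion-time estimates. The minimal sketch below assumes a deliberately simple model that is ours, not the DataEngine’s actual scheduler: moving data costs its size divided by WAN bandwidth, and running where processors are scarce costs the expected queue wait for a slot. The `Site` fields, site names, and all numbers are hypothetical; a real scheduler would also weigh monetary cost and hardware requirements such as GPUs.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Site:
    name: str
    queue_wait_s: float  # expected wait for a free CPU/GPU slot at this site


def transfer_seconds(dataset_gb: float, wan_gbps: float) -> float:
    """Time to ship the dataset: gigabytes * 8 bits/byte / link gigabits per second."""
    return dataset_gb * 8 / wan_gbps


def place(dataset_gb: float, data_site: Site, compute_site: Site,
          wan_gbps: float, runtime_s: float) -> Tuple[str, float]:
    """Pick the placement with the lower estimated completion time."""
    run_at_data = data_site.queue_wait_s + runtime_s
    move_data = transfer_seconds(dataset_gb, wan_gbps) + compute_site.queue_wait_s + runtime_s
    if run_at_data <= move_data:
        return f"function-to-data ({data_site.name})", run_at_data
    return f"data-to-function ({compute_site.name})", move_data


gpu_farm = Site("gpu-farm", queue_wait_s=0.0)

# Large dataset, modest wait near the data: ship the function.
archive = Site("archive-site", queue_wait_s=60.0)
print(place(500, archive, gpu_farm, wan_gbps=10, runtime_s=300.0))
# ('function-to-data (archive-site)', 360.0)

# Small dataset, but processors near the data are scarce: ship the data.
busy_archive = Site("archive-site", queue_wait_s=3600.0)
print(place(50, busy_archive, gpu_farm, wan_gbps=10, runtime_s=300.0))
# ('data-to-function (gpu-farm)', 340.0)
```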

Now that you have a basic idea of what the VAST Data Platform is, let’s see how well it fits what we identified earlier as the requirements for big data and deep learning workloads:

| | Big Data | Deep Learning | VAST Data Platform |
|---|---|---|---|
| Data Types | Structured & semi-structured tables, JSON, Parquet | Unstructured text, video, instruments, etc. | Structured and unstructured |
| Processor Type | CPUs | GPUs, AI processors & DPUs | Orchestrates across and manages CPUs, GPUs, DPUs, etc. |
| Storage Protocols | S3 | S3, RDMA file for GPUs | S3, NFSoRDMA, SMB |
| Dataset Size | TB-scale warehouses | TB- to EB-scale volumes | 100 TB to EBs |
| Namespace | Single-site | Globally federated | Globally federated |
| Processing Paradigm | Data-driven (batch) | Continuous (real-time) | Real-time and batch |

Architecting the VAST Data Platform

The VAST Data Platform is architected as a collection of service processes that communicate with outside clients and each other to provide a wide range of data services. The easiest way to explain these services and how they interact is to view them as providing layered services analogous to the seven layers of the OSI networking model.

However, unlike the OSI model, which defines a strictly layered architecture with clear boundaries between protocols at different layers, the VAST Data Platform layers are a tool of explanation; they do not represent hard boundaries. Some services may provide services across what would logically be a layer boundary, and services communicate across layers using non-public interfaces.

Starting from the bottom, because every architecture has to have a solid foundation, the layers of the VAST Platform are:

The VAST DataStore – The VAST DataStore is responsible for storing and protecting data across the VAST global namespace and making that data available both via traditional storage protocols and through internal protocols to The VAST DataBase and The VAST DataEngine. The VAST DataStore has three significant sub-layers:

The Physical or Chunk Management Layer provides basic data preservation services for the small data chunks the VAST Element Store uses as its atomic units. This layer includes services such as erasure-coding, data distribution, data reduction, encryption at rest, and device management.

The Logical Layer (also known as the VAST Element Store) uses metadata to assemble the physical layer’s data chunks into the Data Elements, such as files, objects, tables, and volumes, that users and applications interact with. It then organizes those Elements into a single global namespace across a VAST cluster and, with the VAST DataSpace, across multiple VAST clusters worldwide.

Like a file system after exposure to gamma rays, the VAST Element Store organizes the physical storage layer’s chunks into a global namespace holding Elements such as files/objects, tables, and block volumes/LUNs. The VAST Element Store provides services at the Element or path level, including access control, encryption, snapshots, clones, and replication.

The Protocol Layer provides multiprotocol access to data Elements. All the protocol modules are peers, providing full multiprotocol access to Elements as appropriate to their data type.

The Execution Layer - The execution layer provides and orchestrates the computing logic to turn data into insight through data-driven processing. The execution layer includes two major services:

The VAST DataBase – This service manages structured data. The VAST DataBase is designed to provide the transactional consistency required for OLTP (Online Transaction Processing), the data organization, and the complex query processing demanded by OLAP (Online Analytical Processing) at the scale required for today’s AI applications. Where the logical layer stores tables and the protocol layer provides basic SQL access to those tables, The VAST DataBase turns those tables into a full-featured database management system providing advanced database functions from sort keys to foreign keys and joins.

The VAST DataEngine – The VAST DataEngine adds the intelligence required to process, transform, and ultimately infer insight from raw data. The VAST DataEngine performs functions such as facial recognition, data loss prevention scanning, or transcoding on Elements based on event triggers like the arrival of an object meeting some filter or a Lambda function. The VAST DataEngine acts as a global task scheduler assigning functions to execute where the combination of compute resources, data accessibility, and cost best meet user requirements across a global network of public and private resources.

There’s nothing revolutionary about layered architecture; IT organizations have been running relational databases on top of SAN storage with orchestration engines like Kubernetes for years. The revolutionary parts of The VAST Data Platform are the tight integration between the services across the typical layer boundaries and the Disaggregated Shared Everything (DASE) cluster architecture those services run on.
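The Physical Layer’s erasure coding lets a stripe survive the loss of a device by storing computed parity alongside the data. The sketch below shows the principle with the simplest possible code, a single XOR parity chunk that can rebuild any one lost chunk. This is a deliberately swapped-in toy: production erasure codes, including whatever scheme the VAST DataStore uses, are wider and tolerate multiple simultaneous failures. The function names are hypothetical.

```python
from functools import reduce
from typing import List, Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def encode(chunks: List[bytes]) -> bytes:
    """Compute a single XOR parity chunk over equally sized data chunks."""
    if len({len(c) for c in chunks}) != 1:
        raise ValueError("all chunks in a stripe must be the same non-empty set of sizes")
    return reduce(xor_bytes, chunks)


def rebuild(stripe: List[Optional[bytes]], parity: bytes) -> List[Optional[bytes]]:
    """Recover at most one missing chunk (marked None) from the survivors plus parity."""
    missing = [i for i, chunk in enumerate(stripe) if chunk is None]
    if len(missing) > 1:
        raise ValueError("single parity can repair only one lost chunk")
    repaired = list(stripe)
    if missing:
        survivors = [chunk for chunk in stripe if chunk is not None]
        # XOR of the parity with every surviving chunk leaves exactly the lost chunk.
        repaired[missing[0]] = reduce(xor_bytes, survivors, parity)
    return repaired


data = [b"AAAA", b"BBBB", b"CCCC"]
parity = encode(data)
print(rebuild([b"AAAA", None, b"CCCC"], parity))  # [b'AAAA', b'BBBB', b'CCCC']
```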

The Disaggregated Shared Everything Architecture

The VAST AIOS is software-defined, meaning it does all its magic in software running in containers on standard x86 and ARM CPUs. But that doesn’t mean we wrote that software to run on a cluster of least-common-denominator x86 servers. By using the latest storage and networking technologies like Storage Class Memory SSDs and NVMe over Fabrics (NVMe-oF), this Disaggregated Shared Everything (DASE) architecture allows VAST clusters to scale to unprecedented size. It breaks the tradeoffs inherent in the shared-nothing and shared-media models used in the past.

The DASE architecture introduces two revolutionary new concepts to data system cluster design.

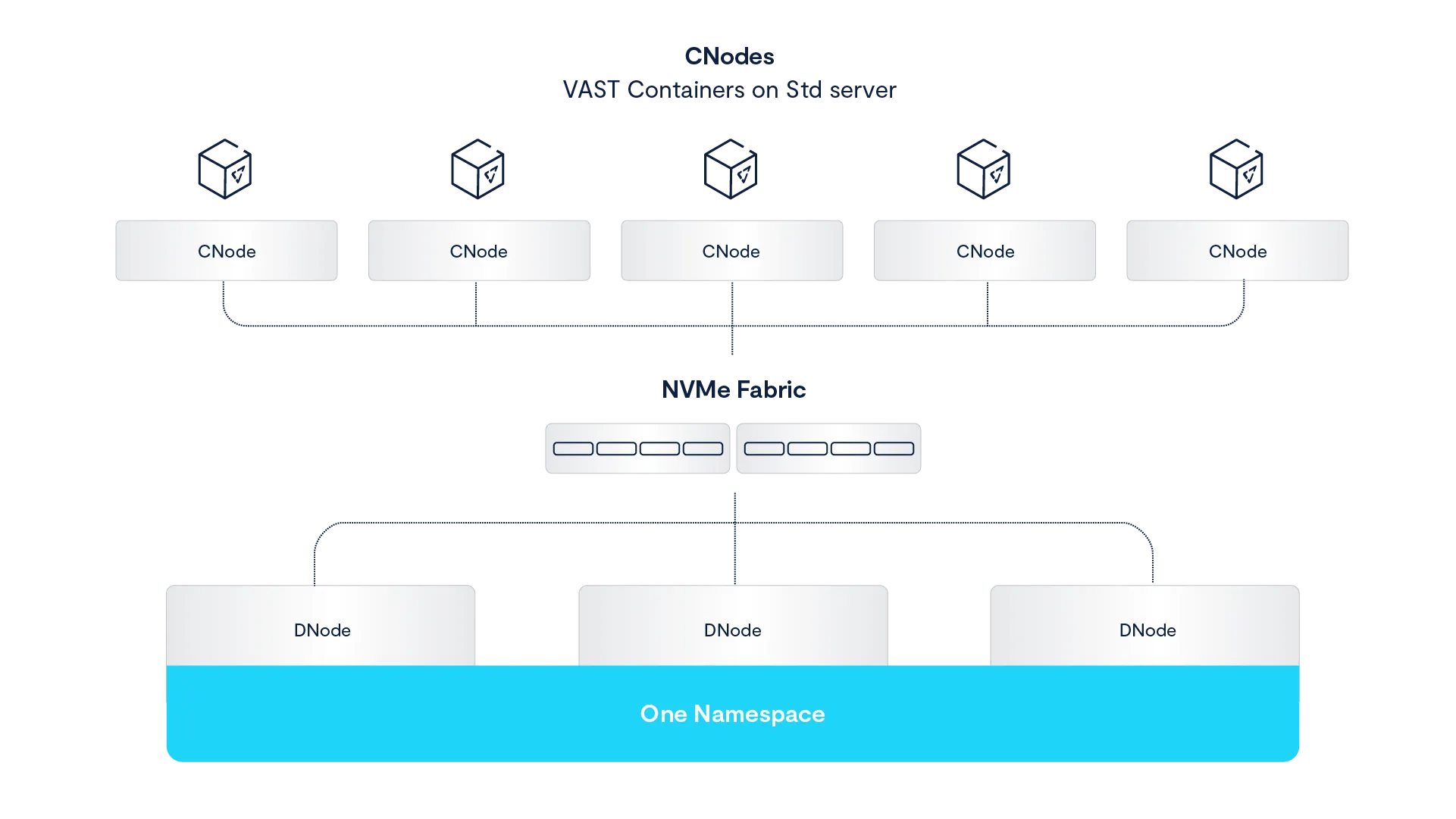

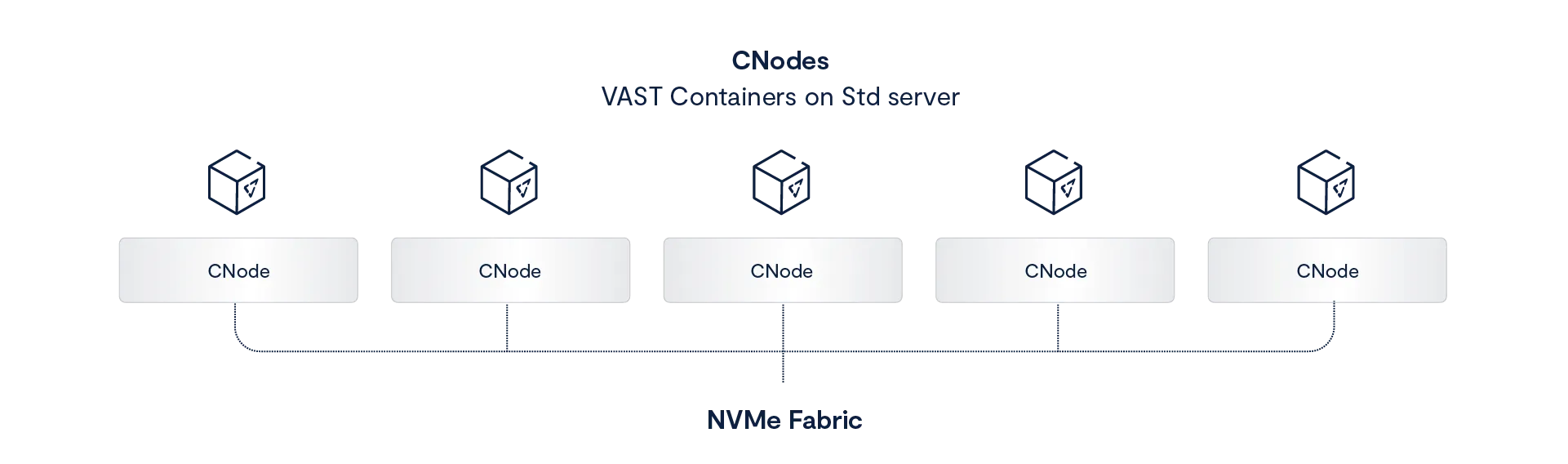

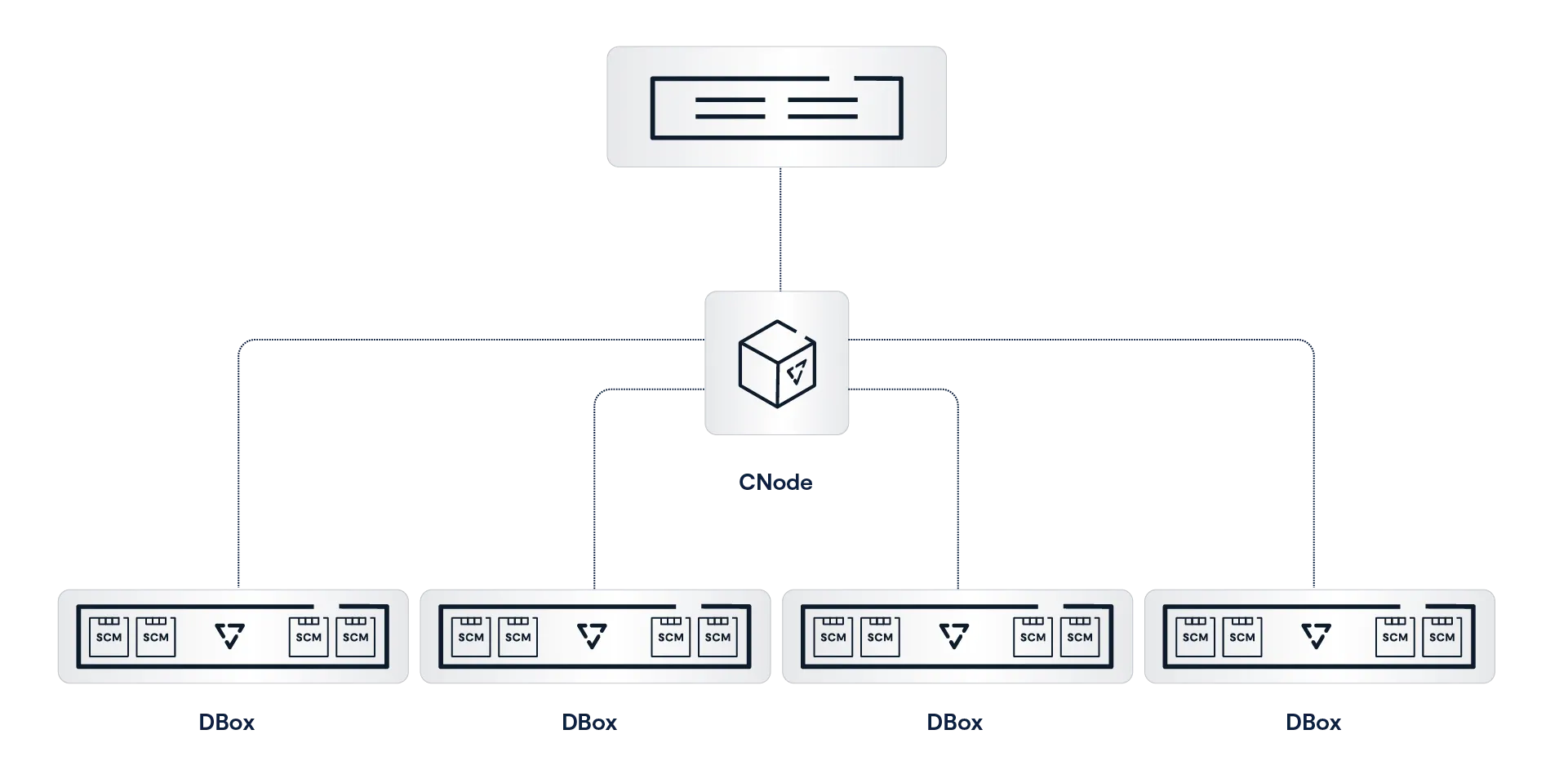

Disaggregating the cluster’s computational resources from its persistent data and system state. In a DASE cluster, all the computation is performed by compute nodes (VAST Servers, sometimes called CNodes) that run in stateless containers. This explicitly includes all the computation needed to maintain the persistent storage, work traditionally performed by storage controller CPUs. This enables the cluster’s compute resources to be scaled independently from storage capacity across a commodity data center network.

A Shared-Everything model that provides every CNode with direct access to all the data, metadata, and other state information in the cluster. In a DASE cluster, all that system state is stored on NVMe SSDs, not just in the node’s DRAM. Since those SSDs are connected to the cluster’s NVMe fabric, all the overhead created by device ownership in other system architectures is eliminated.

Every CNode in a cluster mounts every SSD in the DASE cluster at boot time and has direct access to the shared system state, which provides a single, unpartitioned source of truth for everything, from global data reduction to database transaction state.

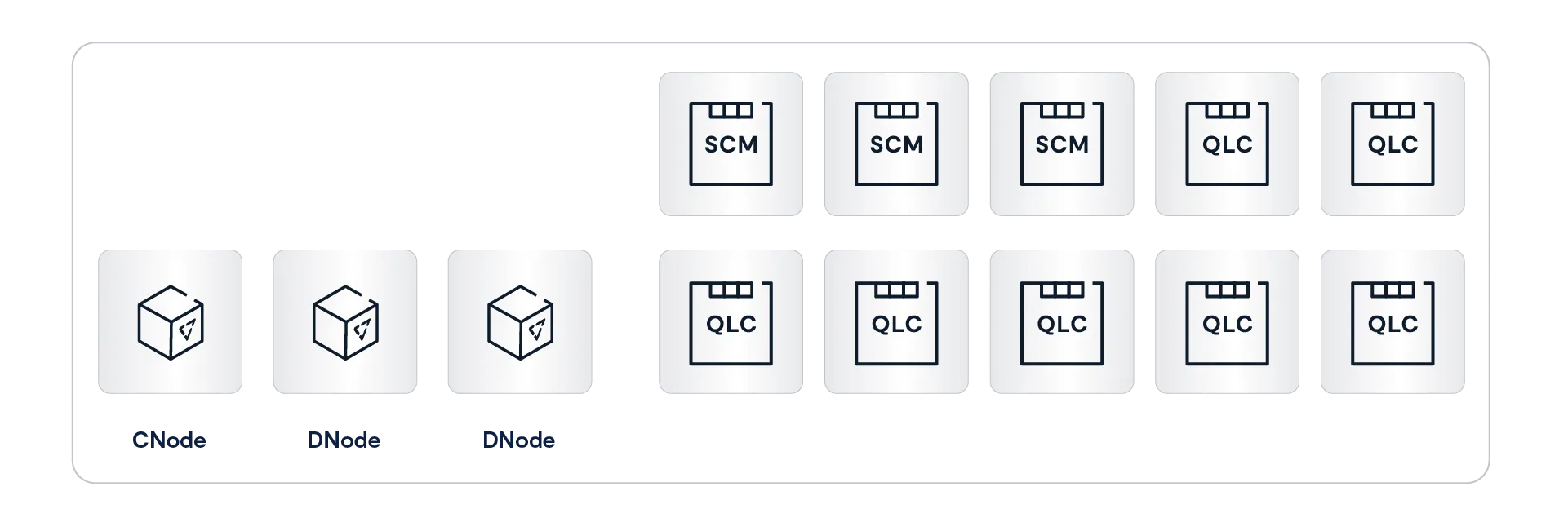

From the key points and diagram above, you’ve probably figured out that the DASE architecture is based on two basic components: CNodes, which run all the software to perform all the logic, and DBoxes, which hold all the storage media and the system state.

VAST Servers (CNodes)

VAST Servers, or CNodes (for compute nodes), provide the intelligence to manage a VAST cluster. This includes responding to client requests, protecting data in the VAST Element Store, processing database queries, determining the best place to transcode a sports highlight so it makes the next highlights show, and then processing that stream.

The VAST AIOS runs as a group of stateless containers across a cluster of servers. The term CNode (for compute node) usually refers to the VAST server container running as a node in a VAST cluster. However, it can occasionally be used to refer to the server on which the CNode container runs. If you ever see a reference like “Each CNode can use the same 100 Gbps Ethernet card for both cluster internal traffic and connections to the client network,” it’s safe to assume CNode in that context means the server hardware the VAST CNode container runs on.

All the CNodes in a cluster mount all the SCM and hyperscale flash SSDs in the cluster via NVMe-oF at boot time. This means that every CNode can directly access all the data and metadata across the cluster. In the DASE architecture, everything — every storage device, every metadata structure, and the state of every transaction within the system — is shared across all the CNode servers in the cluster.

In a DASE cluster, nodes don’t own storage devices or the metadata for a volume. When a CNode needs to read from a file, it accesses that file’s metadata from SCM SSDs to find the location of the data on hyperscale SSDs and then reads the data directly from the hyperscale SSDs. There’s no need for that CNode to ask other nodes for access to the data it needs. Each CNode can process simple storage requests, such as reads or writes, to completion without consulting the other CNodes in the cluster.

More complex requests like database queries and VAST DataEngine functions will be parallelized across multiple CNodes by the VAST DataEngine, but we’ll talk about that when we talk about The VAST DataBase and The VAST DataEngine below.
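For simple reads, the difference between the two architectures can be sketched by counting node-to-node forwards. In the simplified shared-nothing model below, each key is owned by one node chosen by hashing, so a request that lands on any other node must be forwarded to the owner. In the shared-everything model, every node reaches every SSD over the fabric, so the receiving node always completes the request itself. The model, the CRC-32 placement, and the helper names are illustrative assumptions, not how any particular product partitions data.

```python
import zlib
from typing import List, Tuple

Request = Tuple[int, str]  # (node that received the request, key being read)


def owner(key: str, num_nodes: int) -> int:
    """Shared-nothing placement: a stable hash decides which node owns a key."""
    # zlib.crc32 is deterministic across runs, unlike Python's salted hash().
    return zlib.crc32(key.encode()) % num_nodes


def shared_nothing_forwards(requests: List[Request], num_nodes: int) -> int:
    """Count requests that must hop to another node before they can be served."""
    return sum(1 for node, key in requests if owner(key, num_nodes) != node)


def shared_everything_forwards(requests: List[Request]) -> int:
    """Every node reads every device directly, so no request is forwarded."""
    return 0


num_nodes = 8
# Clients spread requests round-robin across nodes, with no knowledge of ownership.
requests = [(i % num_nodes, f"file-{i}") for i in range(10_000)]
print("shared-nothing forwards:", shared_nothing_forwards(requests, num_nodes))
print("shared-everything forwards:", shared_everything_forwards(requests))
```

With eight nodes and ownership spread evenly, roughly seven of every eight requests land on a non-owner and need an extra hop in the shared-nothing model, while the shared-everything count stays at zero regardless of cluster size.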

Stateless Containers

The CNode containers that run the DASE cluster are stateless, meaning that any user request or background task that changes the system's state, from a simple write to internal processes like garbage collection or rebuilding after a device failure, is written to multiple SSDs in the cluster’s DBoxes before it is acknowledged or finally committed. CNodes do NOT cache writes and metadata updates in DRAM or even power-failure-protected NVRAM.
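The rule that nothing is acknowledged until it has been persisted on multiple SSDs can be sketched as follows. `SimulatedSSD`, `stateless_write`, the device names, and the choice of three copies are all hypothetical. The sketch models only the ordering guarantee (persist first, acknowledge second) and the fact that the writing node keeps no copy of its own; it says nothing about how the VAST DataStore actually lays out or protects the data.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SimulatedSSD:
    name: str
    healthy: bool = True
    blocks: Dict[str, bytes] = field(default_factory=dict)

    def persist(self, key: str, data: bytes) -> bool:
        """Return True only once the data is stored on this device."""
        if not self.healthy:
            return False
        self.blocks[key] = data
        return True


def stateless_write(key: str, data: bytes, devices: List[SimulatedSSD], copies: int = 3) -> bool:
    """Persist `copies` replicas before reporting success; the caller caches nothing.

    False means the client must not be acknowledged. (A real system would also
    retry or clean up partial copies; that is omitted here.)
    """
    stored = 0
    for device in devices:
        if stored == copies:
            break
        if device.persist(key, data):
            stored += 1
    return stored == copies


ssds = [SimulatedSSD(f"scm-{i}") for i in range(4)]
ssds[1].healthy = False
print(stateless_write("inode-42", b"update", ssds))  # True: scm-0, scm-2, scm-3 hold it
ssds[2].healthy = False
print(stateless_write("inode-43", b"update", ssds))  # False: only two healthy devices remain
```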

Although NVRAM appears safe, its contents are really only protected against power failures. Even then, data could be lost if the wrong two nodes fail, and the system must run special, and therefore error-prone, recovery routines to restore the data saved in the NVRAM or NVDIMM’s flash back to RAM after a power failure.

The best demonstration of this occurred a few years ago at a data center in the Boston area, which houses HPC and research computing systems for many of the area’s universities. The data center suffered a power failure, and of the many storage systems housed there, only the VAST clusters simply returned to operation when power was restored. The other systems required their administrators and/or vendor support to take some action.

The ultra-low latency of the direct NVMe-oF connection between CNodes and the SSDs in DBoxes also relieves CNodes of the need to maintain read or metadata caches in DRAM. When a CNode needs to know where byte 3,451,098 of an Element is located, the metadata’s single source of truth for that information is just microseconds away. Because CNodes don’t cache, they avoid all the complexity and east-west, node-to-node traffic required to keep a cache coherent across the cluster.
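A lookup such as locating byte 3,451,098 of an Element can be sketched as a binary search over the Element’s extent map, a sorted list recording which device range holds each run of logical bytes. The `Extent` layout, device names, and offsets below are hypothetical. The point is only that once the metadata is read from its single source of truth, answering the question takes a logarithmic search, with no cached copy that has to be kept coherent with other nodes.

```python
import bisect
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Extent:
    start: int          # first logical byte of the Element covered by this extent
    length: int         # number of bytes in the extent
    device: str         # hypothetical SSD identifier
    device_offset: int  # where the extent begins on that SSD


def locate(extents: List[Extent], logical_offset: int) -> Tuple[str, int]:
    """Map a byte offset within an Element to (device, byte offset on that device).

    `extents` must be sorted by `start` and non-overlapping.
    """
    starts = [e.start for e in extents]
    i = bisect.bisect_right(starts, logical_offset) - 1
    if i < 0 or logical_offset >= extents[i].start + extents[i].length:
        raise ValueError(f"offset {logical_offset} is not mapped")
    extent = extents[i]
    return extent.device, extent.device_offset + (logical_offset - extent.start)


element = [
    Extent(start=0, length=1_048_576, device="hyperscale-07", device_offset=40_960),
    Extent(start=1_048_576, length=4_194_304, device="hyperscale-19", device_offset=8_192),
]
print(locate(element, 3_451_098))  # ('hyperscale-19', 2410714)
```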

Containers make it simple to deploy and scale The VAST AIOS as software-defined microservices while laying the foundation for a much more resilient architecture where container failures are non-disruptive to system operation. Legacy systems must reboot nodes to instantiate a new software version, which can take a minute or more as the node’s BIOS performs a power-on self-test (POST) on the node’s DRAM. The upgrade process for VASTOS instantiates a new VASTOS container without restarting the underlying OS, which reduces the time a VAST server is offline to at most a few seconds.

The combination of statelessness and rapid container updates enables VAST systems to perform all system updates, from BIOS and SSD firmware re-flashes to simple patches, non-disruptively, even for stateful protocols like SMB. In most cases, a VAST cluster updates a CNode by spinning up an instance of the new version of the VAST container and then making that container active. Containerization reduces node downtime by running both the old and new versions of the container simultaneously, allowing for a seamless switch in seconds. Traditional solutions must take the node offline to reboot the operating system or monolithic application.
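The switchover described above can be sketched as a tiny state machine: start the new container next to the old one, check its health, and only then swap which container is active. `CNodeSlot`, the health-check callback, and the version strings are hypothetical and are not VAST’s upgrade tooling. The swap is a single assignment only because the containers hold no state, so nothing has to be migrated at the moment of the switch.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Container:
    version: str
    running: bool = False


class CNodeSlot:
    """One server's VAST container slot, modeled as 'which container is active'."""

    def __init__(self, version: str) -> None:
        self.active = Container(version, running=True)
        self.events: List[str] = []

    def upgrade(self, new_version: str, healthy: Callable[[Container], bool]) -> bool:
        # Start the new container while the old one keeps serving requests.
        candidate = Container(new_version, running=True)
        self.events.append(f"started {new_version} alongside {self.active.version}")
        if not healthy(candidate):
            candidate.running = False
            self.events.append(f"aborted {new_version}; {self.active.version} still active")
            return False
        # No state to hand over, so the switch is just a change of active container.
        old, self.active = self.active, candidate
        old.running = False
        self.events.append(f"switched to {new_version}; stopped {old.version}")
        return True


slot = CNodeSlot("5.1")
slot.upgrade("5.2", healthy=lambda c: True)
slot.upgrade("5.3", healthy=lambda c: False)
print(slot.active.version)  # 5.2
print(slot.events)
```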

HA Enclosures (DBoxes)

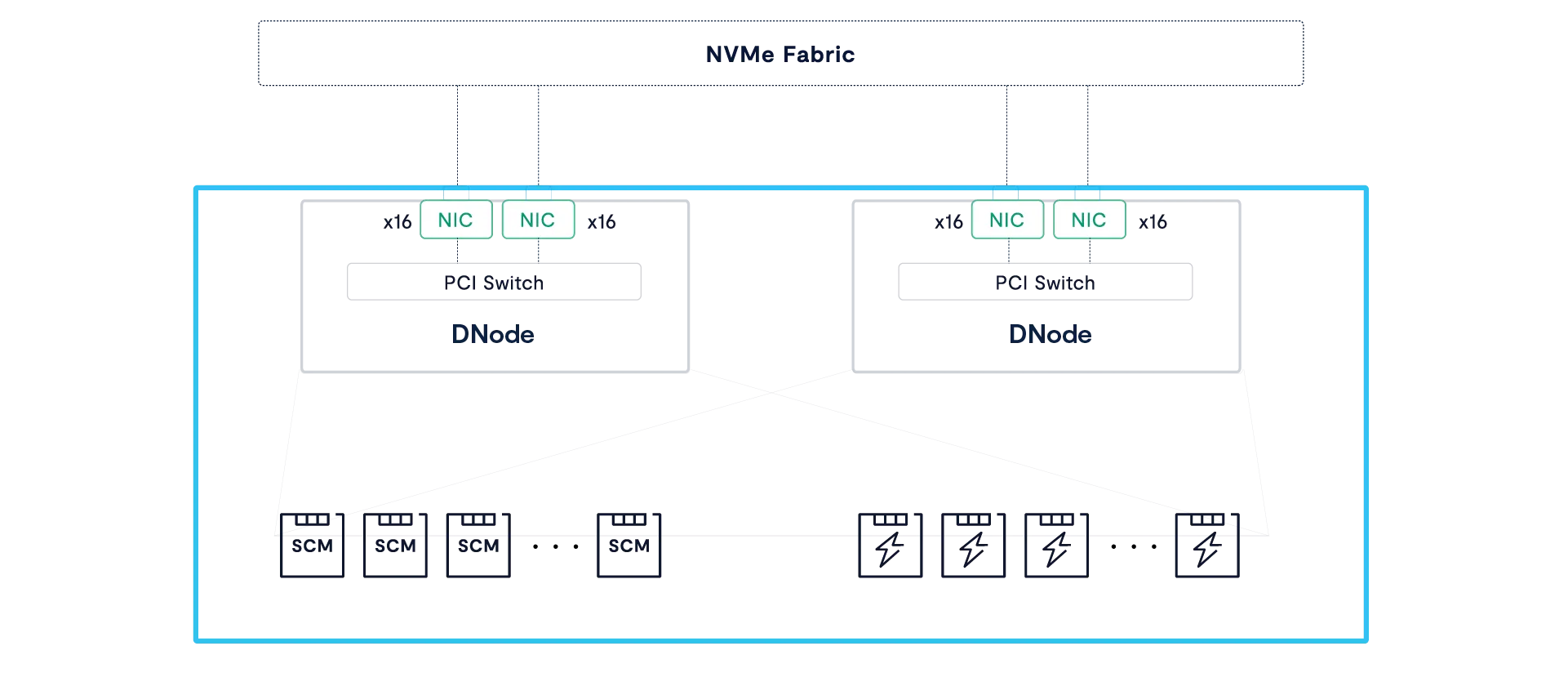

VAST Enclosures, also known as DBoxes (for Data Boxes), are NVMe-oF storage shelves that connect their SCM and hyperscale flash SSDs to an ultra-low latency NVMe fabric using Ethernet or InfiniBand. All DBoxes are highly redundant with no single point of failure. The DNodes that route NVMe over Fabrics requests between the NVMe fabric network and the SSDs, as well as the NICs, fans, and power supplies, are all fully redundant, making the clusters highly available regardless of whether they have one DBox or 1,000 HA DBoxes.

As you can see in the figure above, each DBox houses multiple DNodes that are responsible for routing NVMe-oF requests from their fabric ports to the enclosure’s SSDs through a complex of PCIe switch chips on each DNode.

With no single point of failure from network port to SSD, DBoxes combine enterprise-grade resiliency with high-throughput connectivity. While at face value, the architecture of a DBox appears similar to a dual-controller storage array, there are, in reality, several fundamental differences:

DNodes do not execute any of the storage logic of the cluster; thus, their CPUs can never become a bottleneck as new capability is added to the VAST AIOS.

Unlike legacy controllers, the DNodes don’t aggregate SSDs to LUNs or provide data services. Instead, these servers need little more than the most basic logic to present each SSD to the Fabric and route requests to/from SSDs within microseconds. One Gemini-supported enclosure, Ceres, consumes less power by using ARM DPUs as DNodes.

The DNodes within a DBox run active-active. Under normal conditions, each DNode connects its share of the DBox’s SSDs to the NVMe fabric. When a DNode goes offline, the DBox’s PCIe switches remap the PCIe lanes from the failed DNode to the surviving DNode(s) while retaining atomic write consistency.

Some DBoxes implement four DNodes in two hot-swappable DTrays. Should either of the DNodes in a DTray go offline, the DBox will switch all the SSD connections to the DNodes in the surviving DTray to avoid an additional reconfiguration when the DTray is replaced.
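The failover rules above can be modeled as a reassignment of SSDs to DNodes. In this sketch the failure unit is a whole DTray: when any DNode fails, every SSD moves to the DNodes in the surviving tray(s), matching the behavior described for four-DNode DBoxes, and a two-DNode DBox is modeled as two single-DNode trays. The function and all identifiers are hypothetical, and the round-robin spread is an assumption; in a real DBox the remapping happens in the PCIe switch fabric, not in software like this.

```python
from typing import Dict, List


def fail_over(assignment: Dict[str, List[str]], failed: str,
              trays: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Return a new DNode -> SSDs assignment after DNode `failed` goes offline.

    assignment: which SSDs each DNode currently presents to the NVMe fabric.
    trays: which DNodes live in each hot-swappable DTray.
    """
    failed_tray = next((t for t, nodes in trays.items() if failed in nodes), None)
    if failed_tray is None:
        raise ValueError(f"unknown DNode {failed!r}")
    # Treat the whole tray as lost, so replacing it later needs no second remap.
    survivors = [n for t, nodes in trays.items() if t != failed_tray for n in nodes]
    if not survivors:
        raise RuntimeError("no surviving DTray to take over the SSDs")
    ssds = sorted(ssd for owned in assignment.values() for ssd in owned)
    new_assignment: Dict[str, List[str]] = {n: [] for n in survivors}
    for i, ssd in enumerate(ssds):
        # Spread SSDs round-robin so the surviving DNodes stay active-active.
        new_assignment[survivors[i % len(survivors)]].append(ssd)
    return new_assignment


trays = {"tray-A": ["dn1", "dn2"], "tray-B": ["dn3", "dn4"]}
assignment = {"dn1": ["ssd0", "ssd4"], "dn2": ["ssd1", "ssd5"],
              "dn3": ["ssd2", "ssd6"], "dn4": ["ssd3", "ssd7"]}
print(fail_over(assignment, "dn1", trays))
# {'dn3': ['ssd0', 'ssd2', 'ssd4', 'ssd6'], 'dn4': ['ssd1', 'ssd3', 'ssd5', 'ssd7']}
```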

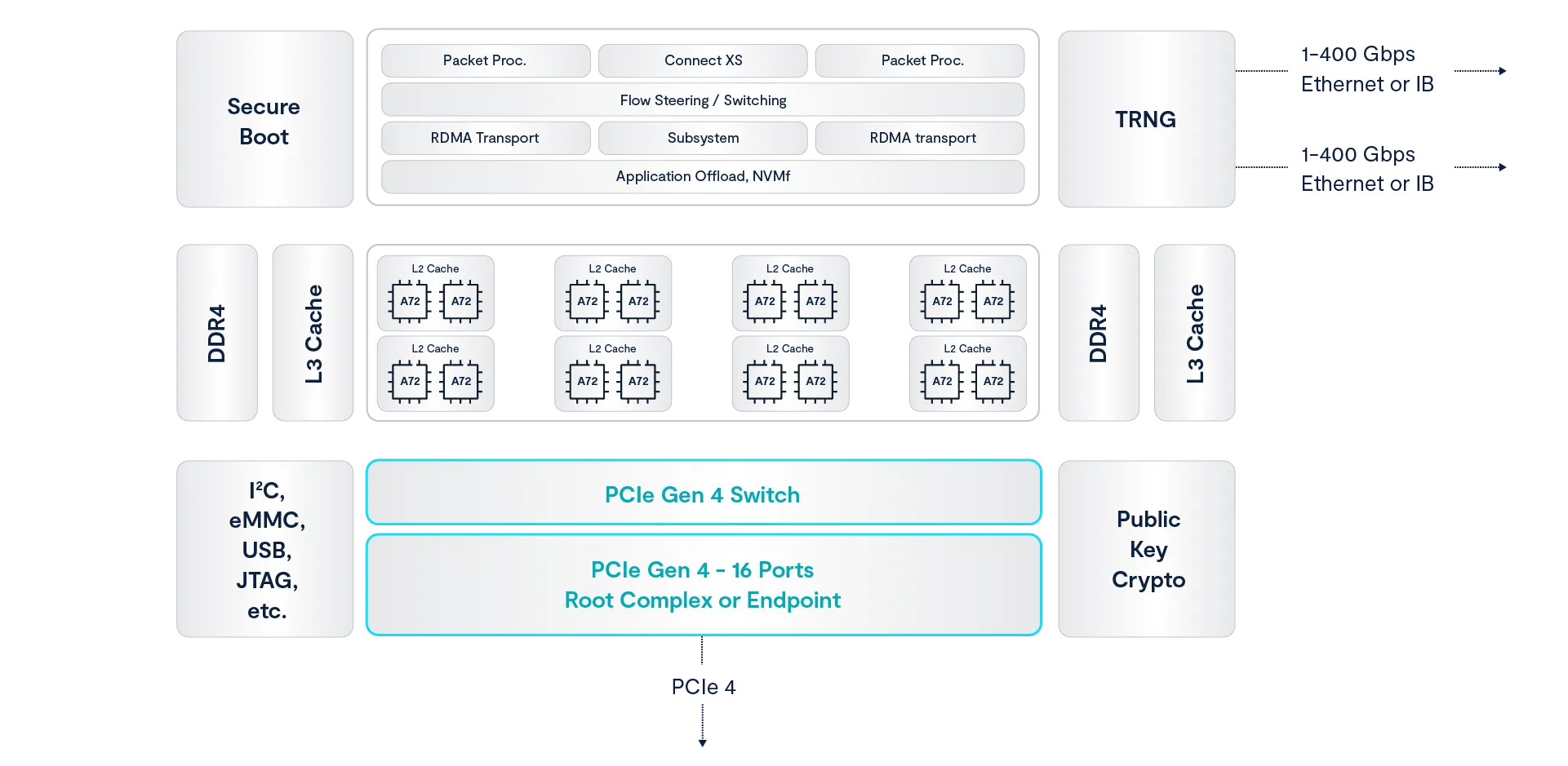

Introducing the Data Processing Unit

Data Processing Units, or DPUs, use an ASIC (Application Specific Integrated Circuit) that combines the usual functions of a network card with intelligence in two forms. DPU ASICs implement functions like RDMA, encryption, and even NVMe-oF in optimized hardware to accelerate those functions and offload those compute-intensive tasks from the server’s CPU.

Individual tasks like TCP processing or encrypting data don’t seem that demanding until you factor in that a server today has to do all that processing on multiple 200 or 400 Gbps ports.
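A rough worked example shows the scale involved. Assume a single 400 Gbps port, 1,500-byte packets (a common Ethernet MTU), and no framing overhead; these are simplifying assumptions for illustration, not measurements.

```latex
\begin{aligned}
\text{throughput} &= \frac{400\ \text{Gb/s}}{8\ \text{b/B}} = 50\ \text{GB/s} \\
\text{packet rate} &= \frac{50 \times 10^{9}\ \text{B/s}}{1{,}500\ \text{B/packet}} \approx 3.3 \times 10^{7}\ \text{packets/s} \\
\text{time per packet} &\approx \frac{1}{3.3 \times 10^{7}\ \text{packets/s}} \approx 30\ \text{ns}
\end{aligned}
```

At roughly 30 ns per packet on each port, a 3 GHz core has only about 90 clock cycles for each packet, which is why moving per-packet work such as TCP processing and encryption into DPU hardware pays off.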

Cloud providers use DPUs like Amazon’s Nitro series to offload networking and security tasks, allowing their hosts to run more user instances and the cloud provider to make more money.

DPU ASICs also include a set of computing cores, almost always based on the ARM architecture, that customers, like VAST, can use to run their own software.

VAST’s manufacturing partner AIC uses NVIDIA BlueField DPUs to run VAST DNode containers in the Ceres line of DBoxes. The BlueField-3 DPU, shown in the functional diagram above, runs the VAST DNode container on its ARM cores while also taking advantage of the ASIC’s encryption and other offloads. The result is a DBox that uses less power and, therefore, produces less heat than JBOFs that use x86 processors as NVMe routers.

Storage Class Memory

The term Storage Class Memory (SCM) refers to a class of technologies that offer lower write latency and greater endurance than commodity NAND flash, bridging the gap between flash and DRAM in terms of performance with a new, persistent layer. While the term was once used to describe novel memory technology, including Optane, the SCM performance and endurance niche is now also filled with SSDs using SLC (Single-Level Cell) flash.

The VAST DataStore leverages SCM SSDs both as a high-performance write buffer and as a global metadata store. SCM SSDs were chosen for their low write latency and long endurance, which allows DASE clusters to extend the endurance of their hyperscale flash and provide sub-millisecond write latencies without the complexity of DRAM caching.

Each VAST cluster includes tens to hundreds of terabytes of SCM capacity, which provides the VAST DASE architecture with several architectural benefits:

Protects Low-Endurance Flash from Transient Writes: Data can live in the SCM write buffer indefinitely, and because the buffer is comparatively large, it relieves the hyperscale SSDs from the wear of many intermediate updates.

Data Protection Efficiency: The SCM write buffer provides the capacity to assemble many wide, deep stripes concurrently and write them in a near-perfect form to hyperscale SSDs to get 20x more longevity from these low-cost SSDs than classic enterprise storage write patterns can achieve.

Protects Low-Endurance Flash from Aggressive Data Reduction: SCM enables the VAST Cluster to perform data reduction calculations after the write has been acknowledged to the application but before the data is migrated to hyperscale flash, thus avoiding the write amplification created by post-process approaches to data reduction.

Data Reduction Dictionary Efficiency: SCM acts as a shared pool of metadata that stores, among other types of metadata, a global compression dictionary that is available to all of the VAST Servers. This allows the system to enrich data with much more data reduction metadata than classic storage can (to further reduce infrastructure costs) while avoiding the need to replicate a data reduction index into the RAM space of every VAST Server (a classic problem with deduplication appliances).

Hyperscale Flash

Hyperscale flash refers to the types of SSDs used by hyperscalers like Facebook, Google, and Baidu to minimize flash costs. Because hyperscalers build their systems very differently from enterprise SAN arrays, hyperscale SSDs are different from both enterprise and consumer SSDs.

Enterprise SSDs are designed to deliver consistently low write latency in enterprise SAN arrays, where dual-port drives have long been connected to dual controllers. That means enterprise SSDs need expensive components like dual-port controllers, DRAM caches, and power protection circuitry, along with flash overprovisioning to manage endurance under the 4 KB random-write-heavy JEDEC testing regime.

Hyperscalers build storage systems from servers that connect to only one port on each SSD and write only large objects, so their SSDs don’t need dual ports, a DRAM cache, or much overprovisioning. Hyperscale SSDs make flash affordable by using the densest available flash, currently four-bit-per-cell QLC, and delivering that capacity directly to storage.

Squeezing another bit into each flash cell boosts capacity, and since it doesn’t significantly increase manufacturing costs, it brings the cost per GB of flash down to unprecedentedly low levels. Unfortunately, squeezing more bits into each cell comes with a trade-off. As each successive generation of flash chips reduced cost by fitting more bits in a cell, each generation also had lower endurance, wearing out after fewer write/erase cycles. The differences in endurance across flash generations are huge: while the first generation of NAND (SLC) could be overwritten 100,000 times, QLC endurance is 100x lower. As flash vendors promote their upcoming PLC (Penta-Level Cell) flash, which holds 5 bits per cell, endurance is projected to be even lower.

Erasing flash memory requires a high voltage that damages the flash cell’s insulating layer at a physical level. After many cycles, enough damage accumulates to allow some electrons to leak through the insulating layer, and this wear is the cause of high-bit-density flash’s lower endurance. For a QLC cell to hold a four-bit value, for example, it must hold one of 16 discrete charge/voltage levels, all between 0 and 3 volts or so. Representing that many values as slightly different voltage levels makes QLC more sensitive to electron leakage. As the number of bits that must be represented by a voltage between 0 and 3 volts increases, the difference between one value and the next shrinks, making each generation of flash more sensitive to the escape of a few electrons from a cell. Conversely, low-bit-density flash, with fewer and more widely spaced levels, can absorb more damaging erase cycles before leakage changes a 1 to a 0 or vice versa.
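The shrinking margin between levels can be made concrete with idealized arithmetic. This sketch assumes, following the text, a window of roughly 3 volts and, as a simplifying assumption of its own, evenly spaced levels. Real NAND uses different windows and non-uniform level placement, so the numbers are illustrative only.

```python
# Idealized model: a cell storing n bits must distinguish 2**n charge levels.
# Assumption: the levels are spread evenly across a ~3 V window, following the
# text's "between 0 and 3 volts or so"; real NAND windows and level placement
# differ, so treat the output as illustrative.
VOLTAGE_WINDOW_V = 3.0

CELL_TYPES = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4, "PLC": 5}


def level_spacing(bits_per_cell: int, window_v: float = VOLTAGE_WINDOW_V) -> float:
    """Volts between adjacent levels when 2**bits levels share the window."""
    levels = 2 ** bits_per_cell
    return window_v / (levels - 1)


for name, bits in CELL_TYPES.items():
    print(f"{name}: {2 ** bits:2d} levels, about {level_spacing(bits) * 1000:.0f} mV apart")
```

Under these assumptions, the margin falls from about 3,000 mV for SLC to 200 mV for QLC and under 100 mV for PLC. A few escaped electrons therefore matter far more to a QLC or PLC cell.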

VAST’s Universal Storage systems were designed to minimize flash wear in two ways: first, by using innovative new data structures that align with the internal geometry of low-cost hyperscale SSDs in ways never before attempted; and second, by using a large SCM write buffer to absorb writes, providing the time and space to minimize flash wear. The combination allows us to support our flash systems for 10 years, which has its own impact on system ownership economics.

Asymmetric Scaling

Legacy scale-out architectures aggregate computing power and capacity into either shared-nothing nodes with a single “controller” per node or shared-media nodes with a pair of controllers and their drives. Either way, users are forced to buy computing power and capacity together across a limited range of node models while balancing the cost, performance, and data center resources needed by a small number of large nodes vs. a larger number of nodes with less capacity per node.

The DASE architecture eliminates these limitations simply by disaggregating a VAST system’s computing power into CNodes that are independent of the DBoxes that provide capacity. VAST customers training AI models (a workload that accesses a large number of small files) or processing complex queries with the VAST DataBase will use a dozen or more CNodes per DBox. At the other extreme, customers using their VAST clusters to store backup repositories, archives, and other less active datasets typically run their clusters with fewer than one CNode per DBox.

When a VAST customer needs more capacity, they can expand their VAST cluster by adding more DBoxes without the cost (and not insignificant power consumption) of adding more compute power. This is a feature of old-school scale-up storage systems that the scale-out vendors abandoned.

Expanding capacity alone is cost-effective and old-school cool, but the only path legacy vendors ever provided to increase the amount of computing power in a cluster for a given capacity was to use a forklift to install new, faster nodes or add more nodes with smaller drives, which also boosts the CPU power per Petabyte.

When VAST customers discover their new AI engine can extract value from what they thought was a cold archive, or the new version of their key application starts performing a lot more small random I/Os than the old one, or simply that their new application is more popular across the company than they thought it would be, they can add more computing power to their cluster by adding more CNodes. The system will automatically rebalance VIPs (Virtual IP Addresses) and processing across the new CNodes when they’re added to a pool.

Asymmetric and Heterogeneous

Shared-nothing users face a difficult challenge when their vendors release a new generation of nodes. Because all the nodes in a storage pool have to be identical, a customer with a 16-node cluster of 3-year-old nodes who needs to expand capacity by 50% faces two choices:

Buy 8 additional nodes and extended support for the current 16 nodes

Extended support is available only for a total of 5 or 6 years, so all 24 nodes will have to be replaced in 2-3 years

Buy 5 new model nodes with twice the density and create a new pool

Performance will depend on which pool the data is in

Multiple pools add complexity

Lower efficiency from small cluster

This gets especially dicey when new features, like inline deduplication and compression, require the faster processor of the new model nodes.

When we say that DASE is an asymmetric architecture, we don’t just mean that customers can vary the computing power per petabyte of their systems by adjusting the number of servers (CNodes) per storage enclosure (DBox), or petabyte. Asymmetric also means that DASE systems support asymmetry across the servers running the VAST CNode containers, DBoxes, and the SSDs inside those enclosures.

VAST systems accommodate CNodes with different numbers or speeds of cores by treating the CNodes in a cluster as a pool of computing power, scheduling tasks across the CNodes the way an operating system schedules threads across CPU cores. When the system assigns background tasks, like reconstructing data after an SSD failure or migrating data from the SCM write buffer to hyperscale flash, tasks are assigned to the servers with the lowest utilization. Faster CNodes will be capable of performing more work and will therefore be assigned more work to do.
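Here is a minimal sketch of utilization-based assignment across mismatched CNodes. It assumes each CNode has a hypothetical relative `capacity` (standing in for core count times clock speed) and defines utilization as queued work divided by capacity. It illustrates why faster CNodes end up doing more work. It is not VAST’s scheduler.

```python
from dataclasses import dataclass


@dataclass
class CNode:
    name: str
    capacity: float           # hypothetical relative compute power
    queued_work: float = 0.0  # work already assigned to this CNode

    @property
    def utilization(self) -> float:
        return self.queued_work / self.capacity


def assign(tasks: list[float], cnodes: list[CNode]) -> dict[str, int]:
    """Give each background task to the CNode with the lowest utilization."""
    counts = {c.name: 0 for c in cnodes}
    for cost in tasks:
        target = min(cnodes, key=lambda c: c.utilization)  # ties: first in list
        target.queued_work += cost
        counts[target.name] += 1
    return counts


pool = [CNode("old-1", 1.0), CNode("old-2", 1.0), CNode("new-1", 2.0)]
print(assign([1.0] * 40, pool))  # {'old-1': 10, 'old-2': 10, 'new-1': 20}
```

With one CNode twice as fast as the other two, the faster one ends up with half of the 40 equal tasks. No node-specific configuration is involved.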

DASE systems similarly manage the SSDs in the cluster as pools of available SCM and hyperscale flash capacity. Every CNode has direct access to every SSD in the cluster, and the DNodes provide redundant paths to those SSDs, so the system can address SSDs as independent resources and failure domains.

When a CNode needs to allocate an SCM write buffer, it selects the SCM SSDs that have the most write buffer available and are as far apart as possible, always connected to the fabric through different DNodes, in different DBoxes if possible. Similarly, when the system allocates an erasure-code stripe on the hyperscale flash SSDs, it selects the SSDs in the cluster with the most free capacity and endurance for each stripe.
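The anti-affinity rule for the SCM write buffer can be sketched as a greedy selection. The inventory fields and the function below are hypothetical. The ordering is one reading of the text, not VAST’s published algorithm: first an unused DNode, then an unused DBox, then the most free space.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScmSsd:
    ssd_id: str
    dbox: str
    dnode: str      # assumed unique cluster-wide, e.g. "dbox1-A"
    free_gb: float  # write buffer space still available


def pick_buffer_ssds(ssds: list[ScmSsd], count: int) -> list[ScmSsd]:
    """Greedily pick `count` SCM SSDs, spreading across DNodes, then DBoxes."""
    chosen: list[ScmSsd] = []
    used_dnodes: set[str] = set()
    used_dboxes: set[str] = set()
    candidates = list(ssds)
    while len(chosen) < count and candidates:
        best = min(
            candidates,
            key=lambda s: (
                s.dnode in used_dnodes,  # False sorts first: prefer an unused DNode path
                s.dbox in used_dboxes,   # then prefer a DBox not yet used
                -s.free_gb,              # then the most free buffer space
            ),
        )
        chosen.append(best)
        candidates.remove(best)
        used_dnodes.add(best.dnode)
        used_dboxes.add(best.dbox)
    return chosen


inventory = [
    ScmSsd("s1", "dbox1", "dbox1-A", 800),
    ScmSsd("s2", "dbox1", "dbox1-B", 900),
    ScmSsd("s3", "dbox2", "dbox2-A", 500),
    ScmSsd("s4", "dbox2", "dbox2-B", 700),
]
print([s.ssd_id for s in pick_buffer_ssds(inventory, 2)])  # ['s2', 's4']
```

The emptiest SSD (s2) is picked first. The second replica then skips s1, which shares s2’s DBox, in favor of the emptier of the two SSDs in the other DBox.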

Since SSDs are chosen by the amount of space and endurance they have remaining, any new or larger SSDs in a cluster will be selected for more erasure-code stripes than older or smaller SSDs until wear and capacity utilization equalize across the SSDs in the cluster. See A Breakthrough Approach To Data Protection below for more details.
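The self-balancing effect falls out of always choosing the emptiest SSDs. The minimal simulation below makes several assumptions:

- six equal-size SSDs, measured in strip-sized units;
- five of them 20% full and one newly added and empty;
- a hypothetical stripe width of four.

It ranks SSDs by free space only, whereas the text says VAST also weighs remaining endurance.

```python
def place_stripes(capacity: dict[str, int], used: dict[str, int],
                  stripes: int, width: int) -> dict[str, int]:
    """Place `stripes` stripes of `width` one-unit strips, each stripe on the
    `width` distinct SSDs with the most free space. Returns strips added per SSD."""
    added = {ssd: 0 for ssd in capacity}
    for _ in range(stripes):
        # Rank by remaining free space; sorted() is stable, so ties keep dict order.
        by_free = sorted(capacity, key=lambda s: capacity[s] - used[s], reverse=True)
        targets = by_free[:width]
        if capacity[targets[-1]] - used[targets[-1]] < 1:
            raise RuntimeError("not enough SSDs with free space for a full stripe")
        for ssd in targets:
            used[ssd] += 1
            added[ssd] += 1
    return added


capacity = {f"old{i}": 100 for i in range(1, 6)}
used = {f"old{i}": 20 for i in range(1, 6)}
capacity["new"], used["new"] = 100, 0     # newly added, empty SSD

print(place_stripes(capacity, used, stripes=50, width=4))
```

After 50 stripes, the new SSD has received a strip in every stripe (50 in all) and each older SSD 30. At that point all six have the same free space, and from then on they share new stripes evenly.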

The result is that VAST customers are never faced with choosing between buying a little more of the old technology they’re already using, knowing these nodes will have a short working life, or replacing the whole cluster, even though their current nodes have a few more years of life. When a VAST customer’s cluster requires more compute power, they don’t have to upgrade all their nodes to the new model with a faster processor; instead, they can just add a few CNodes.

VAST is committed to supporting clusters with as many as three generations of hardware and to supporting any VAST-branded CBox or DBox for up to 10 years under Gemini. This allows VAST customers to run VAST clusters for extended periods by adding new hardware and retiring old hardware from the cluster. In any case, data and work are balanced automatically and transparently, eliminating not just the forklift but all the drama from upgrades.

Variations on DASE

Our original model for DASE, utilizing highly available DBoxes to store persistent data and state, is optimized for efficiency. Since the DBox’s redundant DNodes and power supplies ensure that no single component failure can take the DBox’s SSDs offline, the VAST DataStore can treat each SSD as an independent failure domain relying on the DBox to provide fault tolerance. This, in turn, allows the VAST DataStore to write very wide erasure code stripes that protect against as many as four SSD failures with as little as 2.7% overhead.

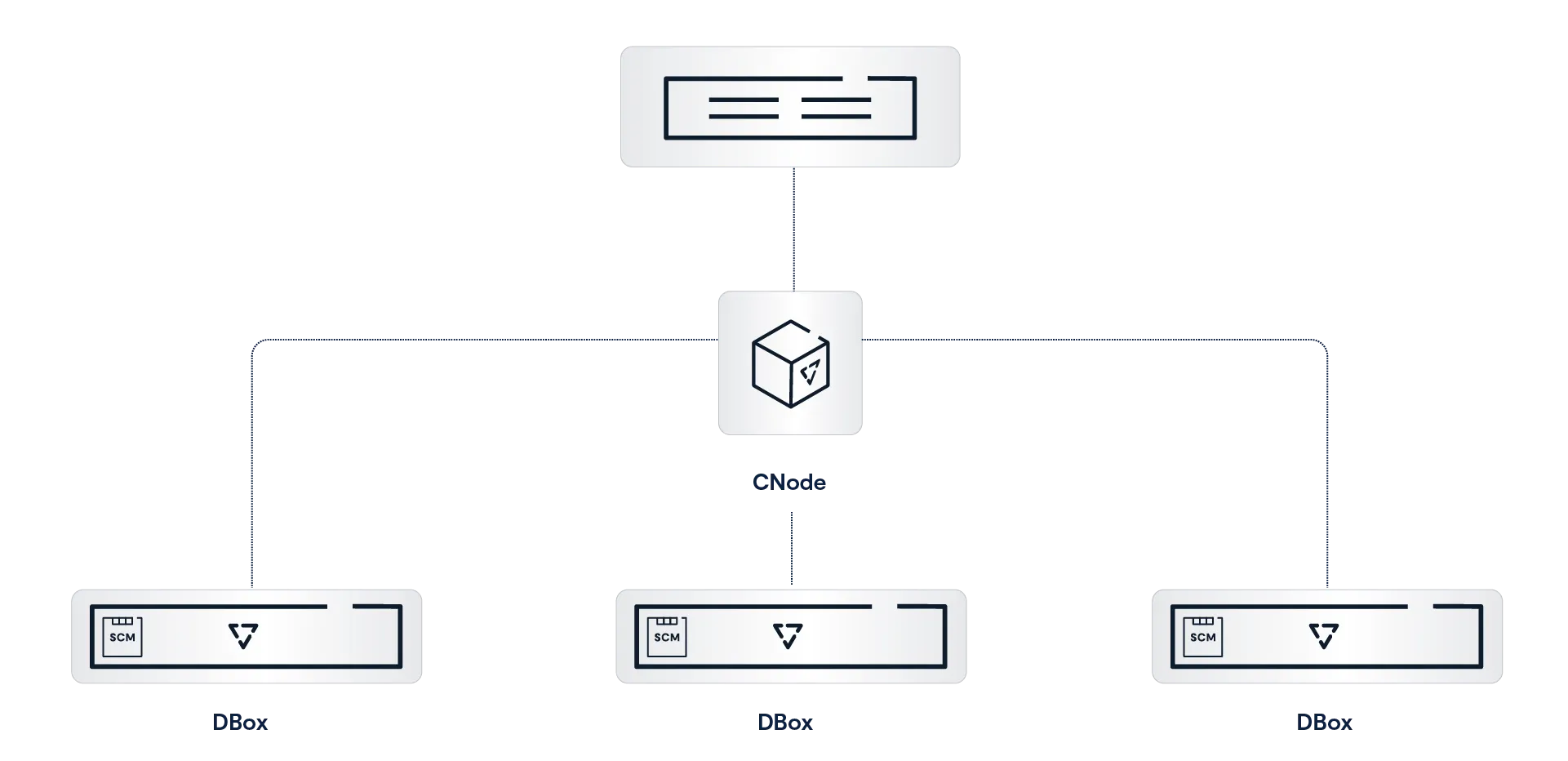

DBoxes provide high efficiency and resilience, but one-size-fits-all solutions rarely fit everyone. Cloud providers, hyperscalers, and enterprises that build their data centers along the hyperscaler model wanted to run the VAST AIOS on the hardware they had very carefully engineered and on virtual machine cloud instances, while server OEMs like Supermicro, Lenovo, and Cisco wanted to sell VAST clusters running on their standard servers. So we’ve expanded the DASE architecture to support configurations that don’t include highly available JBOFs like DBoxes.

Introducing the E for Everything Box

The first step in broadening the VAST hardware universe was adapting DASE to run on industry-standard servers functioning as both the CNodes and DBoxes. The easiest way to do that would have been for us to simply assign servers to be either CNodes or DBoxes. The DBoxes would hold all the SCM and hyperscale flash SSDs, and CNodes would access those SSDs just as if they were the HA DBoxes in a VAST Classic cluster. This solution would work; however, it would require a large number of servers, reducing system density while increasing power and data center costs.

Since DBoxes don’t do much computational work, dedicating servers to the DBox role would waste most of those servers’ compute power. Instead, EBoxes (the E stands for Everything) take advantage of the VAST AIOS’s containerized architecture to combine the CNode and DBox functions in a single server.

Each EBox runs three VAST containers: one CNode container and two DNode containers. The CNode container, just like a CNode container running on a server dedicated to being a CNode, mounts all the SSDs in the cluster, processes requests, such as file requests via NFS or requests for the next entry in a topic via the Kafka API, and does its share of managing the VAST Element Store.

Like the DNodes in a DBox, the DNode containers connect the SCM and hyperscale flash SSDs in the EBox to the cluster’s NVMe fabric, routing NVMe commands and data between the SSDs and the cluster’s CNodes. Under normal conditions, each DNode connects half of the EBox’s SSDs to the cluster’s NVMe fabric. Should one DNode go offline, such as during an update, the other DNode will connect all the EBox’s SSDs to the fabric.

EBoxes have redundant DNode containers just like DBoxes, but they are industry-standard servers (albeit ones that pass VAST’s strict validation testing), which means that, unlike DBoxes, they have multiple single points of failure (motherboard, memory DIMMs, etc.).

As a result, EBoxes will go offline more often than DBoxes, so EBox clusters are designed to remain available even when two EBoxes go offline simultaneously. This is accomplished by erasure coding the data in the cluster’s write buffer with double-parity erasure codes and mirroring the cluster’s metadata three ways.

Together, these features, along with VAST’s locally decodable codes and an optimized data layout, provide n+2 protection for all the system’s data and metadata so that an EBox cluster will remain fully functional through the simultaneous failure of two EBoxes. Customers seeking even higher levels of fault tolerance can implement Rack Level Resilience, which can keep the system running, with all of its contents available, through full rack failures.

EBox clusters, like all VAST clusters, are designed to allow components, individual boxes, or even full racks to fail in place, rebuilding the system to a fully protected state even after multiple consecutive failures. The only limitation on this ability to rebuild is the availability of free space on the system to rebuild onto.

Truly Non-Disruptive Upgrades

Shared-nothing clusters try to provide non-disruptive upgrades by rolling the update process across the cluster’s nodes, updating the software on, and rebooting, one or two nodes at a time. Since the SSDs in each node can only be accessed by that node’s CPU, the cluster has to operate in a degraded state whenever any of its nodes is rebooting or is offline because an update failed.

While we would expect a cluster with a node down for an update to deliver lower performance because it is now running 19 or 39 nodes instead of 20 or 40, the problem is bigger than that. Not only will the cluster be busy rebuilding all the data on the node that is now offline, but, even more significantly, it will have to reconstruct any data on the offline node’s drives whenever that data is read, turning one read I/O from a client into 8 or 16 I/Os to recall the entire erasure code stripe.
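The read amplification can be illustrated with the simplest erasure code, a single XOR parity strip. This is a deliberate simplification, not the code any particular shared-nothing product uses. To serve a read of a strip that lived on the offline node, every surviving strip of the stripe must be read and combined:

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Bytewise XOR of two equal-length strips."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_stripe(data_strips: list[bytes]) -> list[bytes]:
    """Append a single XOR parity strip to equal-length data strips."""
    return data_strips + [reduce(xor_bytes, data_strips)]


def degraded_read(stripe: list[bytes], lost: int) -> tuple[bytes, int]:
    """Rebuild strip `lost` (held by the offline node) from every other strip.

    Returns the recovered bytes and the number of strips that had to be read;
    stripe[lost] itself is never touched."""
    survivors = [strip for i, strip in enumerate(stripe) if i != lost]
    return reduce(xor_bytes, survivors), len(survivors)


data = [bytes([i]) * 4 for i in range(8)]   # eight tiny data strips
stripe = make_stripe(data)                  # 8D+1P
recovered, reads = degraded_read(stripe, lost=3)
print(recovered == data[3], reads)          # True 8
```

With eight data strips, one small client read becomes eight strip reads. Stronger codes need the same kind of stripe-wide read.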

EBoxes avoid this problem by running multiple containers, so the system almost never has to take a whole EBox offline for an update. When the system needs to update an EBox, it:

Updates the CNode:

A container running the new version of the CNode function is spun up on the EBox in parallel with the existing CNode

The virtual IP addresses being serviced by the EBox’s CNode container are transferred to the new CNode container so clients, even SMB clients, can continue accessing their data.

The old CNode container is spun down.

Updates the DNode containers:

One of the two DNode containers in the EBox (DNodeA) is selected for update first

The SSDs being connected via DNodeA are transferred to DNodeB

A new container is spun up for DNodeA

The SSD connections are transferred to the new version of DNodeA

The old DNodeA container is spun down

A new DNodeB container is spun up

The new DNodeB container takes over connections for half the SSDs

The old DNodeB container is spun down

All of the EBox’s SSDs remain online and available through the upgrade, limiting the performance impact of the upgrade.
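The DNode portion of this sequence can be replayed as a small simulation to show why no SSD ever drops off the fabric. It assumes a hypothetical EBox with eight SSDs split evenly between DNodeA and DNodeB. It also reads the step "The SSD connections are transferred to the new version of DNodeA" as DNodeA taking back its original half. After every step, the sketch checks that each SSD is served by a running container.

```python
def rolling_dnode_upgrade(num_ssds: int = 8):
    """Replay the DNode update steps and check SSD availability after each one.

    Assumes SSDs 0..n/2-1 start on DNodeA and the rest on DNodeB."""
    half = num_ssds // 2
    a_half, b_half = range(half), range(half, num_ssds)
    owner = {ssd: "A-old" if ssd < half else "B-old" for ssd in range(num_ssds)}
    running = {"A-old", "B-old"}
    log = []

    def check(description: str) -> None:
        # Every SSD must be connected through a container that is still running.
        assert all(owner[s] in running for s in owner), f"SSD offline after: {description}"
        log.append(description)

    def move(ssds, container: str) -> None:
        for s in ssds:
            owner[s] = container

    move(a_half, "B-old")
    check("DNodeA's SSDs transferred to DNodeB")
    running.add("A-new")
    check("new DNodeA container spun up")
    move(a_half, "A-new")
    check("SSD connections transferred to new DNodeA")
    running.remove("A-old")
    check("old DNodeA container spun down")
    running.add("B-new")
    check("new DNodeB container spun up")
    move(b_half, "B-new")
    check("new DNodeB takes over half the SSDs")
    running.remove("B-old")
    check("old DNodeB container spun down")
    return log, owner


log, owner = rolling_dnode_upgrade()
print(len(log), sorted(set(owner.values())))   # 7 ['A-new', 'B-new']
```

Each container is retired only after its SSDs have been handed to another running container. That ordering is what keeps every SSD online.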

Asymmetric Scaling with EBoxes

Asymmetric scaling has always been one of the key advantages of the DASE architecture, allowing VAST customers to add capacity to their clusters by adding DBoxes and compute power by adding CNodes. This ability to expand the computing power of a cluster is key to the VAST AI OS’s ability to provide not just the persistent storage for your AI pipelines, but also the query engine for the pipeline’s data warehouse, the pipeline’s orchestration and automation engine, and a managed Kubernetes cluster to execute the pipeline’s functions.

Extending Resilience Beyond the DBox

VAST’s original data layout maximizes efficiency by writing very wide erasure code stripes across a cluster’s SSDs without regard to which DBox holds each SSD, relying on the highly available DBox to provide resilience. Since it takes multiple device failures to take the SSDs in a DBox offline, the system can write many more strips of each erasure code stripe to each DBox than the four erasures its locally decodable codes can correct.

A VAST classic cluster with over 150 hyperscale SSDs will use erasure code stripes of 146 data strips plus 4 parity strips (146D+4P), providing protection from up to four SSDs going offline concurrently with just 2.7% overhead. Still, since each enclosure holds more strips of each erasure code stripe than the four losses the system can correct for, the system will go offline when any of the cluster’s DBoxes goes offline.

While locally decodable codes and DBoxes provide a higher level of resilience than classic enterprise systems, even the most resilient appliance remains vulnerable to power or network failures in the rack that houses it. When we started introducing VAST to hyperscalers who designed their applications to accommodate whole racks of servers going offline, and who then saved money by building out their data centers with one power feed or top-of-rack switch per rack, we realized we needed to extend high availability beyond the resilience provided by a DBox.

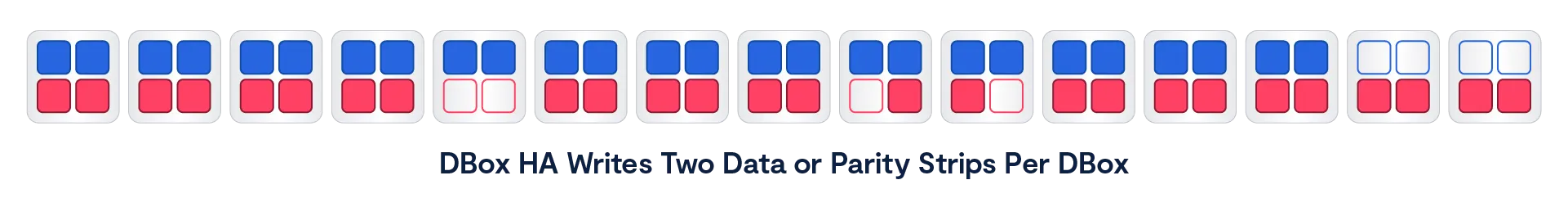

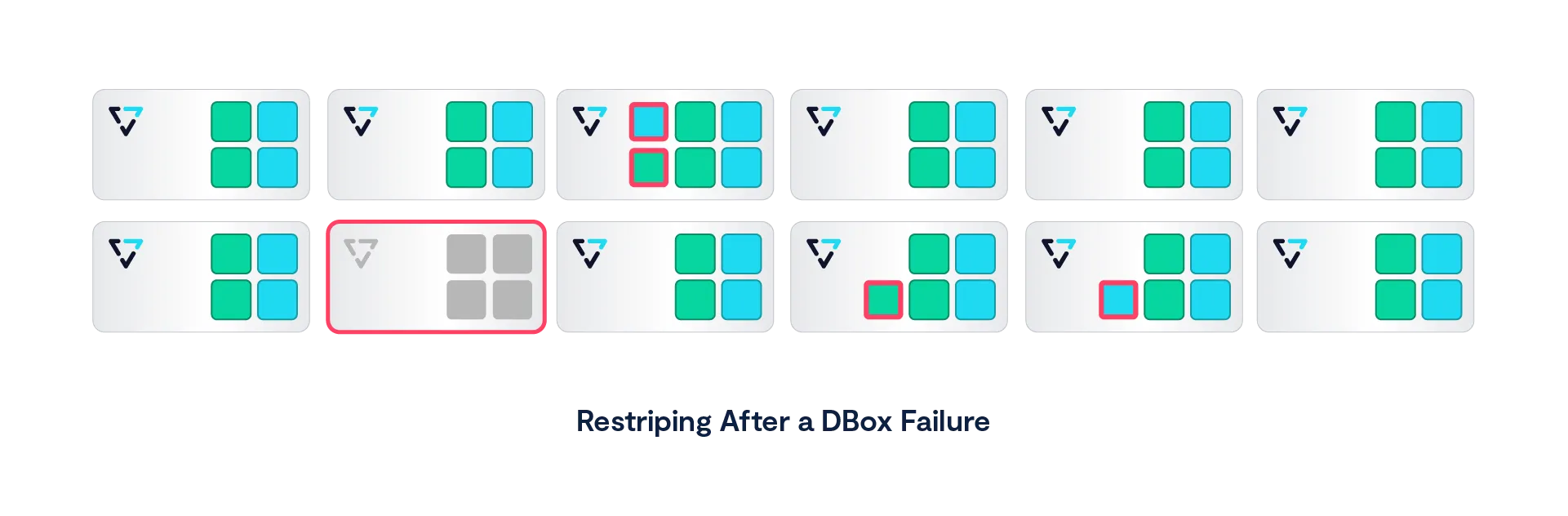

Maintaining Availability through DBox Failures with DBox-HA

Our first step in expanding resilience beyond a DBox, and in addressing the age-old question, “Sure, I know DBoxes are highly available with no single point of failure, but what happens when a DBox blows up?”, was DBox-HA. DBox-HA uses all the same data protection methods as VAST classic, but it raises the failure domain it protects against from individual SSDs to whole DBoxes, so a VAST cluster can continue operating through a DBox failure. The failure of a DBox takes all the SSDs in that DBox offline, so the system has to be able to rebuild any of the data on the missing DBox when users read it.

To ensure that all the data in a cluster is still available, even with a DBox offline, VAST clusters running DBox-HA limit the portion of each erasure code stripe written to each DBox to two data and/or parity strips, as shown below. Because of that limit, a DBox going offline causes two erasures in every erasure code stripe, well below the four erasures that VAST’s locally decodable codes can correct. This allows VAST clusters running DBox-HA to continue to operate, and to access all of their contents, even with a DBox offline.
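The two-strips-per-DBox rule, and why it keeps data readable, can be sketched as follows. The cluster size (12 DBoxes) and stripe geometry (20D+4P) are hypothetical, chosen so the 24 strips fit at two per DBox. The round-robin layout is an assumption for illustration, not VAST’s placement algorithm.

```python
from collections import Counter

CORRECTABLE_ERASURES = 4   # the +4 locally decodable codes described in the text
MAX_PER_DBOX = 2           # DBox-HA limit on strips of one stripe per DBox


def layout_stripe(dboxes: list[str], width: int, start: int = 0) -> list[str]:
    """Assign each strip of one stripe to a DBox, round-robin from `start`."""
    if width > MAX_PER_DBOX * len(dboxes):
        raise ValueError("stripe too wide for the two-strips-per-DBox limit")
    return [dboxes[(start + i) % len(dboxes)] for i in range(width)]


def survives_any_dbox_loss(layout: list[str]) -> bool:
    """True if losing any single DBox erases no more strips than can be corrected."""
    return max(Counter(layout).values()) <= CORRECTABLE_ERASURES


dboxes = [f"dbox{n:02d}" for n in range(1, 13)]   # hypothetical 12-DBox cluster
layout = layout_stripe(dboxes, width=24)           # hypothetical 20D+4P stripe
print(max(Counter(layout).values()), survives_any_dbox_loss(layout))   # 2 True
```

Losing any one DBox costs this stripe two strips. That leaves two more erasures of headroom before data becomes unreadable.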

As the great Robert A. Heinlein wrote, “There Ain’t No Such Thing As A Free Lunch,” and DBox-HA is no exception: the two-strips-per-DBox limit means that DBox-HA clusters write narrower, and therefore somewhat less efficient, erasure code stripes than VAST classic clusters that rely on the inherent high availability of DBoxes. A cluster of ten Ceres DBoxes with 220 hyperscale flash SSDs will write erasure code stripes of 146 data strips and four parity strips (146D+4P), which has 2.7% overhead. That same cluster running DBox-HA will write erasure code stripes with 36 data strips (36D+4P), raising the data protection overhead from 2.7% to 10%, which is still significantly less than the 20% or greater overhead of legacy storage solutions.
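The overhead figures quoted for DBox-HA follow from parity strips divided by total strips. A quick check:

```python
def ec_overhead(data_strips: int, parity_strips: int) -> float:
    """Fraction of raw capacity consumed by parity in a dD+pP stripe."""
    return parity_strips / (data_strips + parity_strips)


for d, p in [(146, 4), (36, 4), (34, 4)]:
    print(f"{d}D+{p}P: {ec_overhead(d, p):.1%}")
```

This prints 2.7%, 10.0%, and 10.5%. The last figure is for 34D+4P, the narrower stripe width the text cites for nine active DBoxes. With the parity count fixed at four, every strip removed from the stripe width raises the overhead.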

Even better, unlike many shared-nothing systems, VAST clusters not only reconstruct the data from the offline DBox to satisfy read requests but also restore the data to full protection. When a DBox fails in a cluster running DBox-HA, the cluster begins rebuilding the data on its SSDs shortly after the failure is detected.

For each erasure-code stripe, the system writes the two reconstructed strips to two different surviving DBoxes, one strip to each. Since each DBox in a DBox-HA cluster already holds 2 strips of every erasure-code stripe, and these additional strips are distributed across all the surviving DBoxes, each DBox will end up holding 3 strips of some number of erasure-code stripes, as shown outlined in red below.

The rebuild process continues until all the cluster’s erasure code stripes are back to full +4 protection. A cluster can rebuild from multiple failures as long as there is free space to rebuild into without writing more than three strips of any stripe to any DBox.
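The rebuild rule can be sketched as follows. For each stripe that lost strips, the sketch picks the surviving DBoxes that currently hold the fewest strips of that stripe, never exceeding three. The cluster size and layout are hypothetical. The `rotation` parameter, used to spread the extra strips across DBoxes, is an illustrative assumption rather than VAST’s algorithm.

```python
from collections import Counter

REBUILD_CAP = 3   # never more than three strips of one stripe on a DBox


def rebuild_targets(layout: list[str], failed: str, dboxes: list[str],
                    rotation: int = 0) -> list[str]:
    """Choose a surviving DBox for each strip of this stripe that lived on `failed`.

    `rotation` (e.g. the stripe number) spreads the extra strips across DBoxes."""
    survivors = [b for b in dboxes if b != failed]
    r = rotation % len(survivors)
    survivors = survivors[r:] + survivors[:r]
    held = Counter(layout)                  # strips of this stripe per DBox
    targets = []
    for _ in range(held[failed]):
        # Least-loaded survivor; ties go to the earliest DBox in rotated order.
        box = min(survivors, key=lambda b: held[b])
        if held[box] >= REBUILD_CAP:
            raise RuntimeError("no DBox can take another strip of this stripe")
        held[box] += 1
        targets.append(box)
    return targets


dboxes = [f"dbox{n:02d}" for n in range(1, 13)]     # hypothetical 12-DBox cluster
layout = [box for box in dboxes for _ in range(2)]  # two strips per DBox
for stripe_no in range(3):
    print(rebuild_targets(layout, "dbox05", dboxes, rotation=stripe_no))
```

Each chosen DBox ends up with three strips of that stripe. Rotating the starting point from stripe to stripe spreads those three-strip stripes across all the survivors, so no single DBox absorbs the rebuild.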

It is, however, much more common for the DBox to come back online when the circuit breaker is reset, or the DBox is installed in its new rack or data center cage. When a DBox returns to a cluster, there’s no need to keep rebuilding data and so the rebuild stops.

Since VAST clusters determine the erasure code stripe width based on the number of active DBoxes when the stripe is written, any new erasure code stripes that are written while a DBox is offline will be written slightly narrower (34D+4P for our example cluster with nine active DBoxes vs 36D+4P when all 10 DBoxes were online). These new stripes are fully protected, and there’s no need to rebuild them when the disconnected DBox comes back online.

When that DBox comes back online, some of the existing erasure code stripes will not yet have been rebuilt. With our wayward DBox back online, those stripes are once again complete and don’t need to be rebuilt. Other stripes will already have been rebuilt by the time the DBox returns, so the system marks the space those stripes’ old strips occupy on the returning DBox as free space.
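The bookkeeping when a DBox returns can be sketched as a simple partition. The sketch assumes a hypothetical per-stripe flag recording whether that stripe’s lost strips were already rebuilt elsewhere. Only stripes that had strips on the returning DBox are considered, because stripes written while it was away never placed strips there.

```python
from dataclasses import dataclass


@dataclass
class StripeState:
    stripe_id: int
    rebuilt: bool   # hypothetical flag: lost strips already rebuilt on other DBoxes?


def reconcile_returning_dbox(stripes: list[StripeState]) -> tuple[list[int], list[int]]:
    """Split the returning DBox's old strips into those to keep and those to free.

    Any rebuild still in progress simply stops; it is no longer needed."""
    keep = [s.stripe_id for s in stripes if not s.rebuilt]   # stripe is whole again
    free = [s.stripe_id for s in stripes if s.rebuilt]       # stale copies: reclaim space
    return keep, free


states = [StripeState(1, True), StripeState(2, False), StripeState(3, True)]
print(reconcile_returning_dbox(states))   # ([2], [1, 3])
```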

The other cost of DBox-HA is a larger minimum cluster size. VAST classic clusters can run on a single DBox, but DBox-HA requires a minimum of eight DBoxes to balance erasure code stripe width (and therefore efficiency) against the impact of a DBox failure.

Write Buffer Erasure Coding

As we’ve already established, when a client, or an AI pipeline function running on the VAST cluster itself, writes new data to a VAST cluster, the CNode that receives that data saves it to multiple SSDs before acknowledging the write to the client. VAST classic clusters rely on the physical redundancy of DBoxes to ensure that CNodes can access the SSDs even if a DNode, power supply, or network card in a DBox fails. They mirror the write buffer across two SCM SSDs with DBox and DNode anti-affinity. The two replicas of the data go to different SSDs that connect to the NVMe fabric through different DNodes, and that sit in different DBoxes when multiple DBoxes are available.

Mirroring ensures that the data in the write buffer remains available through any single SSD or DNode failure and introduces very little latency, but mirroring isn’t perfect. The obvious problem is that two-way mirroring only protects the data from the loss of one of the two replicas of that data. Should both replicas go offline, that data will be unavailable until one of the replicas can be restored.

The other problem with mirroring is that fully 50% of the write buffer space, and even more significantly, half the write bandwidth to the SSDs, is consumed by overhead, reducing a cluster’s ability to accept writes.

More recent releases of the VAST AI OS have transitioned from using 2-way mirroring to protect data in the write buffer to protecting that data with double-parity erasure codes, striping data across as many as 12 SCM SSDs. This reduces the bandwidth consumed for data protection from 50% to 16.7%, significantly boosting cluster write bandwidth.
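The bandwidth figures follow from how many of the bytes written to SCM are redundancy. With 2-way mirroring, one of every two bytes is a copy. With a 12-wide double-parity stripe, two of every twelve are parity; the 10 data + 2 parity geometry is an assumption implied by 12 SSDs and double parity. Assuming SCM write bandwidth is the bottleneck:

```python
def protection_share(data: int, redundancy: int) -> float:
    """Share of SCM write bandwidth spent on redundancy rather than user data."""
    return redundancy / (data + redundancy)


mirror = protection_share(1, 1)          # 2-way mirror: data + one extra copy
ec_10_2 = protection_share(10, 2)        # 10D+2P across 12 SCM SSDs
speedup = (1 - ec_10_2) / (1 - mirror)   # user-data bandwidth relative to mirroring
print(f"{mirror:.1%} {ec_10_2:.1%} {speedup:.2f}x")   # 50.0% 16.7% 1.67x
```

User data gets about five-sixths of the SCM write bandwidth instead of half, or roughly 1.67 times as much, all else being equal.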

As a CNode receives write requests, it accumulates newly written data in memory until it has enough data for a full erasure code stripe, calculates parity, and writes the data to the buffer before acknowledging the write(s) to the client(s). To minimize write latency during periods of low to moderate write traffic, should a CNode not receive sufficient data to build a full erasure code stripe in 300 µsec, it will pad the data it holds in memory with zeros and write the stripe.
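The accumulate, encode, and flush cycle can be sketched as follows. Everything here is an assumption for illustration:

- a 4 KB strip;
- a 10D+2P geometry matching 12-SSD stripes;
- RAID-6 style P/Q parity standing in for VAST’s double-parity code;
- the 300 µs timer measured from the oldest unflushed write.

Time is passed in explicitly so the behavior is deterministic.

```python
# Hypothetical parameters for illustration only.
STRIP_SIZE = 4096                        # bytes per strip
DATA_STRIPS = 10                         # 10 data + 2 parity = 12 SCM SSDs
STRIPE_BYTES = STRIP_SIZE * DATA_STRIPS
FLUSH_TIMEOUT_S = 300e-6                 # 300 microseconds, per the text


def gf_mul2(x: int) -> int:
    """Multiply a byte by 2 in GF(2^8) using the RAID-6 polynomial 0x11d."""
    x <<= 1
    return x ^ 0x11D if x & 0x100 else x


def pq_parity(strips: list[bytes]) -> tuple[bytes, bytes]:
    """RAID-6 style parity: P is XOR, Q is sum(2**i * D_i) via Horner's rule."""
    p = bytearray(len(strips[0]))
    q = bytearray(len(strips[0]))
    for strip in reversed(strips):
        for i, byte in enumerate(strip):
            p[i] ^= byte
            q[i] = gf_mul2(q[i]) ^ byte
    return bytes(p), bytes(q)


class StripeAssembler:
    """Accumulates client writes into full stripes, zero-padding after a timeout."""

    def __init__(self) -> None:
        self.pending = bytearray()
        self.oldest = None   # arrival time of the oldest unflushed bytes
        self.stripes = []    # stands in for writes to 12 SCM SSDs
        self.padded = 0      # how many stripes had to be zero-padded

    def add_write(self, data: bytes, now: float) -> None:
        if self.oldest is None:
            self.oldest = now
        self.pending += data
        emitted = False
        while len(self.pending) >= STRIPE_BYTES:
            self._emit(bytes(self.pending[:STRIPE_BYTES]))
            del self.pending[:STRIPE_BYTES]
            emitted = True
        if not self.pending:
            self.oldest = None
        elif emitted:
            self.oldest = now   # leftover bytes all came from this write

    def tick(self, now: float) -> None:
        """Pad a partial stripe with zeros once it has waited FLUSH_TIMEOUT_S."""
        if self.oldest is not None and now - self.oldest >= FLUSH_TIMEOUT_S:
            self._emit(bytes(self.pending).ljust(STRIPE_BYTES, b"\0"))
            self.pending.clear()
            self.oldest = None
            self.padded += 1

    def _emit(self, stripe: bytes) -> None:
        strips = [stripe[i:i + STRIP_SIZE] for i in range(0, STRIPE_BYTES, STRIP_SIZE)]
        p, q = pq_parity(strips)
        # A real CNode would write these 12 strips to 12 SCM SSDs and only then
        # acknowledge the client writes whose data they contain.
        self.stripes.append(strips + [p, q])


asm = StripeAssembler()
asm.add_write(b"x" * 50_000, now=0.0)   # fills one stripe; 9,040 bytes wait
asm.tick(now=0.0001)                    # 100 µs later: still waiting
asm.tick(now=0.0004)                    # 400 µs later: pad and flush
print(len(asm.stripes), asm.padded)     # 2 1
```

The 50,000-byte write fills one 40,960-byte stripe immediately. The remaining 9,040 bytes wait until the timer expires and are then zero-padded into a second stripe, trading some SCM space for bounded latency.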

How the cluster distributes erasure code strips depends on the cluster’s availability level:

VAST Classic clusters stripe their write buffers across the cluster’s SCM SSDs, protecting the write buffer against up to two SCM SSDs going offline

Clusters running DBox-HA stripe their write buffer across the DBoxes in the cluster, placing two strips per DBox, protecting the write buffer against one DBox or two SCM SSDs going offline

EBox clusters, and clusters running with Rack Level Resilience, stripe data across their EBoxes or racks with no more than 1 strip per EBox or rack, protecting the write buffer from up to two EBoxes or racks going offline
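The three layouts share one rule. With double parity, a stripe survives as long as no more than two strips are lost. The number of whole failure domains it can lose is therefore two divided by the strips placed in each domain. Here is a sketch of that arithmetic, with the policy table paraphrasing the list above:

```python
PARITY_STRIPS = 2   # the write buffer uses double-parity erasure codes

# policy -> (failure domain, maximum strips of one write-buffer stripe per domain)
POLICIES = {
    "VAST Classic": ("SCM SSD", 1),
    "DBox-HA": ("DBox", 2),
    "EBox / Rack Level Resilience": ("EBox or rack", 1),
}


def domains_tolerated(strips_per_domain: int, parity: int = PARITY_STRIPS) -> int:
    """Whole failure domains that can go offline without losing buffered data."""
    return parity // strips_per_domain


for name, (domain, per_domain) in POLICIES.items():
    print(f"{name}: survives {domains_tolerated(per_domain)} x {domain} offline")
```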

Write Buffer Bursting

We’ve discussed in this paper how VAST’s DASE uses high-endurance storage-class memory to absorb writes to the system and thereby extend the more limited endurance of the low-cost hyperscale flash SSDs. Having all writes land on SCM before being migrated onto the hyperscale SSDs extends the hyperscale SSDs’ endurance, but it also limits the write bandwidth of a VAST cluster to the bandwidth of the relatively small number of SCM SSDs in the cluster.

This limitation on write bandwidth is problematic for some leading-edge applications, including some HPC simulations and AI model training. These applications run on leading-edge computing environments with the latest CPU, GPU, and networking technologies. Unfortunately, these leading-edge environments are frequently not the most reliable, with servers crashing because of overheating or just from running software developed by that somewhat disheveled post-doc who slept under his desk last night.

Training an AI model or running a detailed simulation of a nuclear weapon aging in its silo, or a car crashing, requires running a single compute job across hundreds or thousands of processors for days or weeks. To prevent the job from having to restart from the beginning when any of the many servers it’s running across crashes, these applications write periodic checkpoints, saving the state of the application to persistent storage. Now, when a server crashes or a top-of-rack switch takes a rack offline, the application can restart its work from the last checkpoint, instead of from the beginning of the job.

While AI model training has predominantly shifted to asynchronous checkpointing, older libraries and many HPC applications still checkpoint synchronously, holding up their computation while the checkpoint is being written. That makes the speed at which a system can save a checkpoint a significant consideration for many users.

The VAST AI OS accommodates this need for periodic high-performance writes by directing the large writes created by servers dumping all or part of their memory to the cluster’s hyperscale flash SSDs, as well as the SCM SSDs. Since a typical VAST cluster has roughly three times as many hyperscale SSDs as SCM SSDs, this roughly doubles the write bandwidth of the cluster for checkpoints and similar applications.

A training cluster would typically be configured to take hourly checkpoints with the system’s burst write bandwidth and the size of the extended write buffer designed to save a checkpoint in roughly three minutes, so that even the most basic synchronous checkpoints would take less than 5% of the system’s time to save.
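Sizing for this goal reduces to two divisions. The 18 TB checkpoint is a hypothetical figure chosen only to show the arithmetic. The one-hour interval and three-minute target come from the text, and decimal units are assumed.

```python
def checkpoint_plan(checkpoint_tb: float, interval_min: float, target_min: float):
    """Return (burst write bandwidth in GB/s, share of time spent checkpointing)."""
    bandwidth_gb_s = checkpoint_tb * 1000 / (target_min * 60)   # decimal TB -> GB
    time_share = target_min / interval_min
    return bandwidth_gb_s, time_share


bw, share = checkpoint_plan(checkpoint_tb=18, interval_min=60, target_min=3)
print(f"{bw:.0f} GB/s burst, {share:.0%} of wall-clock time")   # 100 GB/s burst, 5% of wall-clock time
```

A hypothetical 18 TB checkpoint saved in three minutes needs about 100 GB/s of burst write bandwidth, and an hourly cadence then spends 5% of wall-clock time checkpointing. Finishing slightly faster keeps the share below 5%.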

During a checkpoint or other write burst, CNodes receiving write I/Os smaller than ~256 KB save that data to the SCM write buffer as usual. Larger writes are also written to a limited amount of space on the cluster’s hyperscale flash SSDs, using the same double-parity erasure codes used to write to the SCM. VAST users can configure the size of this burst area, trading the amount of hyperscale flash dedicated to the extended write buffer against the amount of burst data it can absorb.
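The routing decision during a burst can be sketched as a size check plus a capacity check on the configurable burst area. The ~256 KB threshold comes from the text; the class and field names are hypothetical. In the real system, large writes use both tiers. This sketch models only whether a given large write may use the burst area, and it falls back to SCM when the area is full, a behavior the paper does not specify.

```python
from dataclasses import dataclass

LARGE_WRITE_BYTES = 256 * 1024   # "~256 KB" threshold from the text


@dataclass
class BurstRouter:
    burst_area_bytes: int        # hypothetical: configured size of the flash burst area
    burst_used_bytes: int = 0

    def route(self, size: int) -> str:
        """Return which tier receives a write of `size` bytes."""
        if size < LARGE_WRITE_BYTES:
            return "scm"                      # small writes always land on SCM
        if self.burst_used_bytes + size <= self.burst_area_bytes:
            self.burst_used_bytes += size
            return "hyperscale-burst"         # large write absorbed by the burst area
        return "scm"                          # assumption: fall back once the area is full


router = BurstRouter(burst_area_bytes=2 * 1024 * 1024)
print([router.route(s) for s in (4096, 1 << 20, 1 << 20, 1 << 20)])
# ['scm', 'hyperscale-burst', 'hyperscale-burst', 'scm']
```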

This segregation of writes, with only large writes going to the hyperscale flash SSDs, minimizes flash wear. To minimize costs, hyperscale SSDs use flash chips with a large page size. The page size is the minimum amount of data the flash chip can store at a time. When a hyperscale SSD receives writes smaller than the 128 KB size of its internal pages, each of those writes is stored individually on a page, consuming 128 KB of endurance, even for a 4 KB write. The hyperscale SSDs used in VAST systems have twenty times the endurance when data is written to them in 128 KB or larger sequential writes than for 4 KB random writes. Add that to the small amount of hyperscale flash designated for the extended write buffer, and VAST’s cluster-wide wear-leveling, and the endurance impact of write buffer bursting is minimal.

Once a checkpoint, or other write burst, ends, the system will, of course, have more than the usual amount of data in the write buffer to migrate to hyperscale flash. As a result, the system dedicates more resources to emptying the extended write buffer after a burst ends, resulting in a temporary performance dip.

Metadata Triplication

The double-parity erasure codes VAST clusters use for the write buffer strike a balance between the greater efficiency of wider stripes and the impact those stripes can have on latency for typical storage write I/O patterns. Under heavy write demand, CNodes can assemble full erasure-code stripes and maximize bandwidth. When demand is lower, they pad partial stripes with zeros, trading otherwise unused bandwidth for reduced latency. Any SCM space wasted on padding is quickly and inexpensively recovered when the write buffer is migrated to QLC.
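Zero padding works because zero strips contribute nothing to parity, so a partial stripe can be flushed immediately without waiting for more data. The toy sketch below pads a partial stripe to full width and computes double parity. It uses the common RAID-6 P+Q construction over GF(2^8) as a stand-in, since the text does not specify VAST’s actual code; the stripe width and strip size are hypothetical and kept tiny.

```python
STRIPE_DATA_STRIPS = 4  # hypothetical number of data strips per stripe
STRIP_SIZE = 8          # hypothetical strip size in bytes (tiny, for illustration)


def gf_mul(a: int, b: int) -> int:
    """Multiply two bytes in GF(2^8) with the RAID-6 polynomial x^8+x^4+x^3+x^2+1."""
    result = 0
    while b:
        if b & 1:
            result ^= a
        a <<= 1
        if a & 0x100:
            a ^= 0x11D  # reduce modulo the field polynomial
        b >>= 1
    return result


def assemble_stripe(strips: list[bytes]) -> tuple[list[bytes], bytes, bytes]:
    """Zero-pad a partial stripe to full width, then compute P and Q parity."""
    if len(strips) > STRIPE_DATA_STRIPS:
        raise ValueError("too many strips for one stripe")
    if any(len(s) != STRIP_SIZE for s in strips):
        raise ValueError("every strip must be STRIP_SIZE bytes")
    # Zero strips contribute nothing to either parity, so padding is always safe.
    padded = list(strips) + [bytes(STRIP_SIZE)] * (STRIPE_DATA_STRIPS - len(strips))
    p = bytearray(STRIP_SIZE)
    q = bytearray(STRIP_SIZE)
    coeff = 1  # g**i for generator g = 2
    for strip in padded:
        for j, byte in enumerate(strip):
            p[j] ^= byte                 # P: plain XOR parity
            q[j] ^= gf_mul(coeff, byte)  # Q: Reed-Solomon style weighted parity
        coeff = gf_mul(coeff, 2)
    return padded, bytes(p), bytes(q)


# A low-demand flush: one strip of real data, three strips of zero padding.
padded, p, q = assemble_stripe([bytes([1] * STRIP_SIZE)])
```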

As efficient as those erasure codes are for newly written data, they don’t fit the I/O patterns of metadata updates. Every write or S3 PUT creates multiple new pointers, which means new 4 KB metadata blocks and new versions of existing blocks. All those small I/Os landing in the middle of erasure-code stripes would trigger significant read-modify-write I/O amplification, the last thing you want for your metadata.
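A rough count of device I/Os shows the difference. This sketch assumes the textbook read-modify-write sequence for updating one data strip in place in a double-parity stripe, and compares it with writing three full replicas; it ignores any caching or batching a real system might apply.

```python
def ec_small_update_ios(parity_strips: int = 2) -> int:
    """Device I/Os to update one data strip in place in an erasure-coded stripe.

    Read-modify-write: read the old data strip and every old parity strip,
    then write the new data strip and every recomputed parity strip.
    """
    reads = 1 + parity_strips
    writes = 1 + parity_strips
    return reads + writes


def replicated_update_ios(copies: int = 3) -> int:
    """Device I/Os for the same update when the block is simply replicated."""
    return copies  # one write per copy, no reads needed


print(ec_small_update_ios())    # 6 I/Os, and the reads must finish before the writes
print(replicated_update_ios())  # 3 I/Os, all issued in parallel
```

Beyond the raw count, the erasure-coded path needs two dependent round trips (read, then write), while replication needs one, which matters for metadata latency.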

VAST clusters protect the system’s metadata against two EBoxes (or, with Rack Level Resilience, two DBoxes) going offline simultaneously by triplicating each metadata update to three SCM SSDs in different DBoxes or EBoxes. The CNode processing a client’s write I/O writes the new metadata block(s) simultaneously to three SCM SSDs in different boxes or racks. It acknowledges the write to the client only after NVMe over Fabrics (NVMe-oF) acknowledgments come back from all three.
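The triplication rule has two parts: place the three copies in distinct failure domains (boxes or racks), and acknowledge only when all three writes complete. The sketch below is hypothetical: `choose_targets`, `triplicate_metadata`, and the `write_fn` callback standing in for an NVMe-oF write are illustrative names, and the deterministic placement is a simplification.

```python
from collections.abc import Callable
from concurrent.futures import ThreadPoolExecutor


def choose_targets(ssds_by_domain: dict[str, list[str]], copies: int = 3) -> list[str]:
    """Pick one SCM SSD from each of `copies` distinct failure domains."""
    if len(ssds_by_domain) < copies:
        raise ValueError("not enough failure domains for the requested copies")
    domains = sorted(ssds_by_domain)[:copies]  # deterministic choice for the sketch
    return [ssds_by_domain[d][0] for d in domains]


def triplicate_metadata(block: bytes, targets: list[str],
                        write_fn: Callable[[str, bytes], bool]) -> bool:
    """Write `block` to every target in parallel; ack only if every write succeeds."""
    with ThreadPoolExecutor(max_workers=len(targets)) as pool:
        results = list(pool.map(lambda t: write_fn(t, block), targets))
    return all(results)  # True means the client may be acknowledged
```

Issuing the three writes in parallel keeps metadata latency close to that of a single SSD write, while waiting for all three guarantees that two failures can’t lose an acknowledged update.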

Networking in DASE

A DASE cluster includes four primary logical networks.

The NVMe fabric, or back-end network, connects CNodes to DNodes. VAST clusters use NVMe over RDMA for CNode<->DNode communications over 100 Gbps Ethernet or InfiniBand, with Ethernet as the default.

The host network, or front-end network, carries file, object, or database requests from client hosts to the cluster’s CNodes.

The management network that carries management traffic to the cluster, including DNS and authentication traffic.

The IPMI network used for managing and monitoring the hardware in the cluster.

Depending on their requirements, VAST customers have several options for implementing these logical networks using dedicated ports, VLANs, or both, as their network designs and security concerns dictate. The biggest decision is how the DASE cluster connects to the customer’s data center network to provide host access.

Connect via Switch

The Connect via Switch option runs the NVMe back-end fabric and the front-end host network connections as two VLANs on the NVMe fabric switches that are included in each DASE cluster. The customer’s host network is connected to the cluster through an MLAG connection from the fabric switches to the customer’s core switches as shown in green above.

Each CNode has a single 100 Gbps network card. A splitter cable turns that into a pair of 50 Gbps connections, one for each of the two fabric switches. Each 50 Gbps connection carries NVMe fabric traffic on one VLAN and host data traffic on another.

The Connect via Switch method has several advantages:

Only one RNIC is needed in each CNode

Network traffic is aggregated onto a small number of 100 Gbps links MLAGed together, minimizing the number of host network switch ports needed

If Connect via Switch were perfect, we wouldn’t need any other options, but it has disadvantages:

Host network connections must use the same technology as the fabric

InfiniBand fabrics only support InfiniBand hosts

40, 25, and 10 Gbps Ethernet hosts consume expensive 100 Gbps fabric ports

Only one physical host network

Connect via CNode

Connecting DASE clusters to the customer’s network through the NVMe fabric switches is simple and minimizes the number of network ports. But as we discussed in the server pooling section above, customers may need more flexibility or control over how their various clients and tenants connect to their DASE cluster.

When VAST customers need to connect clients from multiple disparate networks with different technologies or security considerations, they can install a second network card in the CNodes that will serve each network and connect that card directly to that network.

Advantages of Connect via CNode

Connect hosts to the DASE cluster via different technologies

InfiniBand clients served by CNodes with InfiniBand NICs

Ethernet clients served by CNodes with Ethernet NICs

Or Ethernet clients connected via fabric switches

Support for new technologies like 200 Gbps Ethernet for client connections

Connect multiple security zones without routes

Disadvantages

Requires more network cards, switch ports, IP Addresses, etc.

As noted in the advantages section above, VAST customers are free to mix the “connect via CNode” and “connect via switch” models. A customer with a few InfiniBand hosts could install InfiniBand cards in one pool of CNodes and still connect their Ethernet clients to the DASE cluster through the Ethernet fabric switches to minimize the number of switch ports needed.

Leaf-Spine for Large Clusters

As DASE clusters grow to need more NVMe fabric connections than a pair of 64-port switches can provide, the pair of fabric switches usually shown at the core of a DASE cluster grows into a leaf-spine network. The CBoxes (multi-server chassis running multiple CNodes in a single appliance) and DBoxes are still connected to a pair of switches, but instead of serving as the core of the cluster, that top-of-rack switch pair becomes a pair of leaves, redundantly connected to a pair of spine switches, as are the leaf switches at the top of every other rack in the cluster.

Leaf-spine networking allows DASE clusters to grow to well over 100 appliances, especially when large port count director class switches are used in the spine. Planning is underway for very large clusters of 1,000 appliances or more, so the groundwork will be laid before customers reach that size.

Scale-Out Beyond Shared-Nothing

For the past decade or more, the storage industry has convinced itself that a shared-nothing storage architecture is the best approach to achieving storage scale and cost savings. Following the release of the Google File System architecture whitepaper in 2003, it became table stakes for storage architectures of almost any variety to be built from a shared-nothing model, ranging from hyper-converged storage to scale-out file storage to object storage to data warehouse systems and beyond. Ten years later, the basic principles that shared-nothing systems were founded on are much less valid for the following reasons:

Shared-nothing systems were designed to co-locate disks with CPUs in an era when networks were slower than local storage. With the advent of NVMe-oF, however, it’s now possible to disaggregate CPUs from storage devices and access SSDs and SCM devices remotely without compromising performance.

Shared-nothing systems force customers to scale compute power and capacity together, an inflexible infrastructure model compared with being able to add CPUs whenever a dataset needs faster access.

Shared-nothing systems limit storage efficiency. Since each node in a shared-nothing cluster owns some set of media, the cluster must tolerate node failures by erasure-coding across nodes, which limits stripe width, and by sharding or replicating data reduction metadata, which limits data reduction efficiency. Because no single machine exclusively owns any SSD, shared-everything systems can build wider, more efficient RAID stripes and more efficient global data reduction metadata structures.
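The efficiency gain from wider stripes follows from the fraction of each stripe that holds data: k data strips out of k + m total. The stripe geometries in this sketch are hypothetical examples chosen to contrast a narrow stripe with a wide one, not figures from either architecture.

```python
from fractions import Fraction


def stripe_efficiency(data_strips: int, parity_strips: int) -> Fraction:
    """Usable fraction of raw capacity for a data+parity erasure-code stripe."""
    return Fraction(data_strips, data_strips + parity_strips)


def overhead_percent(data_strips: int, parity_strips: int) -> float:
    """Capacity spent on parity, as a percentage of raw capacity."""
    return float((1 - stripe_efficiency(data_strips, parity_strips)) * 100)


print(overhead_percent(6, 2))   # 25.0: narrow stripe, a quarter of raw capacity is parity
print(overhead_percent(96, 4))  # 4.0: wide stripe, more parity strips yet far less overhead
```

Note that the wide stripe in this example carries more parity strips per stripe, and so tolerates more simultaneous failures, while still spending far less capacity on protection.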

Containers have become an increasingly popular way to deploy applications, and this microservices approach benefits from the statelessness containers bring to the table, making it easy to provision and scale data services on composable infrastructure once data locality is no longer a concern.

The Advantages of a Stateless Design

When a VAST Server (CNode) receives a read request, that CNode accesses the VAST DataStore’s persistent metadata from shared Storage Class Memory to find where the data being requested actually resides. It then reads that data directly from hyperscale flash (or SCM if the data has not yet been migrated from the write buffer) and forwards the data to the requesting client. For write requests, the VAST Server writes both data and metadata directly to multiple SSDs and then acknowledges the write.
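The read and write paths above can be sketched with in-memory dictionaries standing in for the shared SCM and hyperscale flash. All names here are hypothetical, and the sketch ignores concurrency, erasure coding, and transactions. What it shows is the stateless property: a `CNode` holds nothing but a reference to shared media, so any CNode, including a freshly started one, can serve any request.

```python
from dataclasses import dataclass, field


@dataclass
class SharedMedia:
    """Hypothetical stand-in for the SCM and flash every CNode can reach."""
    metadata: dict[str, tuple[str, int]] = field(default_factory=dict)  # key -> (tier, slot)
    scm: dict[int, bytes] = field(default_factory=dict)    # write buffer
    flash: dict[int, bytes] = field(default_factory=dict)  # hyperscale flash
    next_slot: int = 0


class CNode:
    """Stateless server: keeps no cache, only a handle to shared media."""

    def __init__(self, media: SharedMedia):
        self.media = media

    def write(self, key: str, data: bytes) -> str:
        slot = self.media.next_slot
        self.media.next_slot += 1
        self.media.scm[slot] = data               # data lands in the SCM write buffer
        self.media.metadata[key] = ("scm", slot)  # metadata persisted in shared SCM
        return "ack"                              # acknowledged after both writes

    def read(self, key: str) -> bytes:
        tier, slot = self.media.metadata[key]     # find the data via shared metadata
        source = self.media.scm if tier == "scm" else self.media.flash
        return source[slot]


def migrate_to_flash(media: SharedMedia) -> None:
    """Move buffered data to flash and repoint its metadata (simplified)."""
    for key, (tier, slot) in list(media.metadata.items()):
        if tier == "scm":
            media.flash[slot] = media.scm.pop(slot)
            media.metadata[key] = ("flash", slot)


media = SharedMedia()
CNode(media).write("obj", b"hello")   # written by one CNode...
print(CNode(media).read("obj"))       # ...read by another: b'hello'
```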

This direct access to shared devices over an ultra-low-latency fabric eliminates the need for VAST servers to talk with each other to service an I/O request: no machine talks to any other machine in the synchronous write or read path. Shared-everything makes it easy to scale performance linearly just by adding CPUs, avoiding the diminishing returns often seen when shared-nothing architectures are scaled up. Clusters can be built from thousands of VAST servers to provide extreme levels of aggregate performance. The primary limit on VAST cluster scale is the size of the network fabric that customers configure.

Storing all the system’s metadata on shared, persistent SSDs across an ultra-low-latency fabric eliminates the need for CNodes to cache metadata and, therefore, any need to maintain cache coherency between CNodes. Because all data is written to persistent SCM SSDs, rather than cached in DRAM, before the write is acknowledged, there’s no need for the power-failure protection hardware usually required by volatile and expensive DRAM write-back caches. VAST’s DASE architecture pairs 100% nonvolatile media with transactional storage semantics to ensure that updates to the Element Store are always consistent and persistent.

The DASE architecture eliminates the need for storage devices to be owned by a single node controller or an HA pair of controllers. Since all the SCM and hyperscale flash SSDs are shared by all the CNodes, each CNode can access all the system’s data and metadata to process requests from beginning to end. That means a VAST cluster will continue to operate and provide all data services even with just one VAST server running. For example, a cluster of 100 servers could lose as many as 99 of them and still be 100% online.

The VAST DataStore

As its very name implies, data has always been the critical core of data processing, but for many years, organizations really only processed a small fraction of the data they had access to, or even the data they generated internally. Corporate IT departments treated the relatively small databases that ran their ERP, logistics, and other line of business applications as their crown jewels, but since the OLTP platforms that held that data could only process a limited amount of data at a reasonable cost, historical data was treated as a luxury, and unstructured data was treated as second-class.

Over the past two decades or so, that’s changed. First, so-called big data analytics platforms, from Hadoop to Databricks and Snowflake, made it practical to glean business insight across data from multiple applications and database management systems, including datasets too big for classical databases. More recently, data has become central to AI model training and RAG (Retrieval Augmented Generation). Since AI model accuracy is directly related to the amount of data used to train and optimize the model, many organizations are facing a data storage crisis or, more broadly, a data management crisis.

The VAST DataStore provides the persistence and basic data services layers of the VAST AI Operating System. In English, that means the VAST DataStore is responsible for storing, protecting, securing, and presenting the VAST AI OS’s data. While it isn’t limited to the conventional operating system functions described in the VAST AI Operating System section above, the VAST DataStore is analogous to the logical volume manager, file system, and protocol services/daemons in traditional operating systems.

In addition to making the data it holds available through the standard storage protocols for file (NFS and SMB), object (S3 API), and block (NVMe over TCP) access, the VAST DataStore also provides direct access to, and manages the contents of, tables for the VAST DataBase, and provides data services such as snapshots and clones across all of its structured and unstructured data.

Designing the VAST DataStore

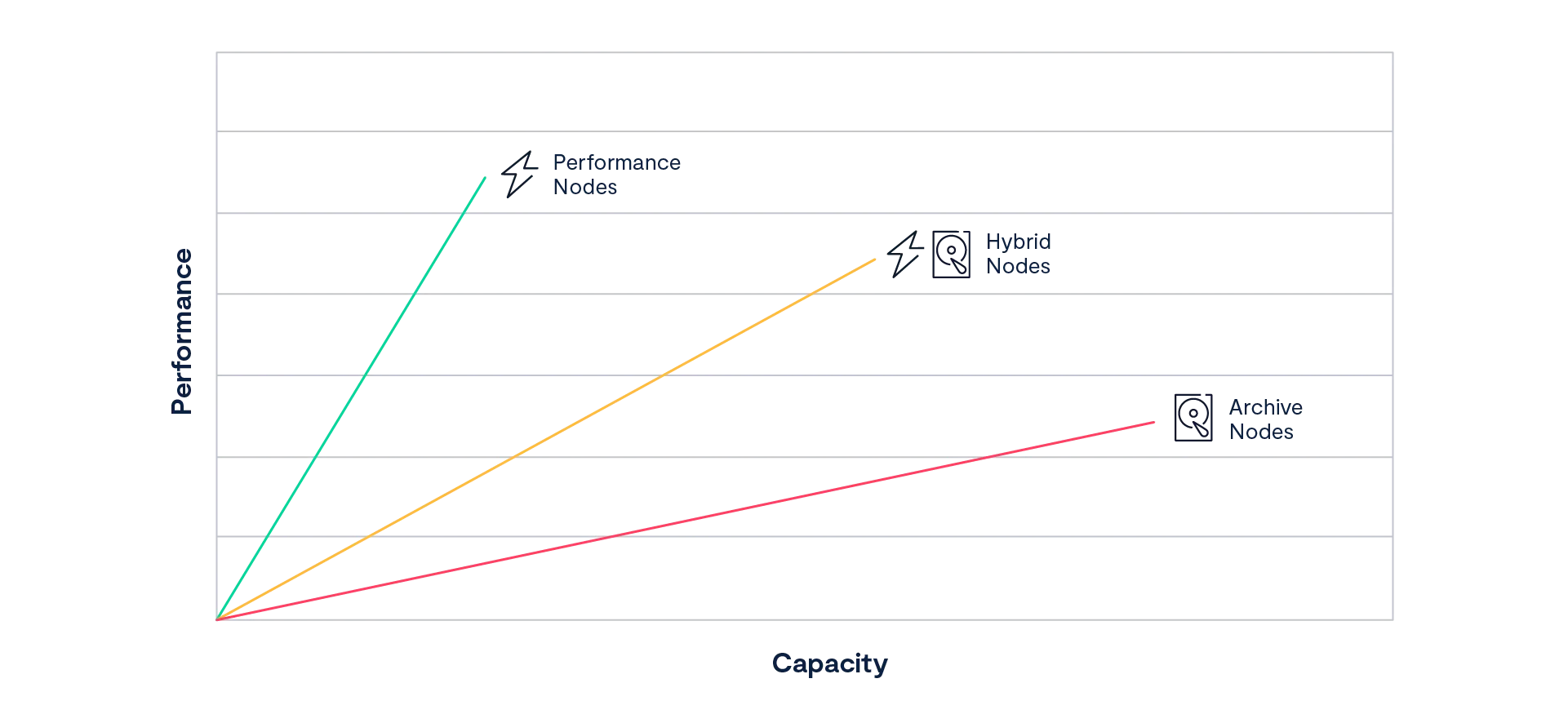

Before VAST, the computing world assumed that the only way to efficiently store data was with multiple tiers of storage, each providing a unique price/performance proposition. Storage vendors built, and storage users bought, storage systems designed to fit one or two tiers in the storage pyramid. All-flash systems were fast, but small and expensive; shared-nothing systems scaled, but couldn’t handle small files or deliver 1 ms latency.

For the VAST DataStore to truly deliver universal storage, we had to build a system that broke all the tradeoffs underlying previous solutions. It had to scale to the exabytes of data needed to train the most advanced AI models while still delivering the sub-millisecond to single-digit-millisecond latency and five-nines reliability users expect from an all-flash array. It had to support parallel I/O from thousands of clients and deliver the strict atomic consistency that transactional applications require. Perhaps most importantly, it had to do it all at a price customers could afford when buying petabytes at a time.

The VAST DataStore consists of the software and metadata structures that turn the CNodes and SSDs of a DASE cluster into a coherent system that provides universal storage alongside all the AI OS’s other services. It supports a wide range of applications and data types, from volumes to files and tables. The VAST DataStore takes full advantage of the low-latency direct connection from every CNode to every SSD in the cluster by optimizing its metadata structures for the shared, persistent SCM SSDs.

Unlike first-generation all-flash arrays that offered low latency at limited capacity, the VAST DataStore was designed to efficiently manage petabytes to exabytes of data. This doesn’t just mean efficient use of capacity through erasure codes and data reduction, though those are an important part of the VAST DataStory (Sorry, I couldn’t resist the pun); it also means the efficient management of flash endurance.

The VAST DataStore manages data through three sublayers:

The Physical or Chunk Management layer provides the basic data preservation services for the small (32 KB average) data chunks the VAST DataStore uses as its atomic units. This layer includes services such as erasure-coding, data distribution, data reduction, flash management, and encryption at rest.