Jason Vallery has spent most of his career building systems that don’t get to fail. At Microsoft, he helped shape Azure Blob Storage into something that could handle massive, unpredictable workloads across enterprises and model builders alike.

Now at VAST, he’s working on the same problem from a different angle: how to make AI infrastructure behave like a system instead of a collection of parts. That perspective shows up quickly when he talks about AI, because he does not start with models or GPUs. He starts with what happens when those systems have to run continuously.

Contrary to popular opinion, Vallery told the crowd gathered for his talk at the VAST FWD event, most AI systems are less likely to fail under peak load than to struggle when they simply have to keep running. He says GPUs often sit idle not because of compute limits but because data doesn’t arrive on time (or arrives in the wrong place, or gets stuck between systems). What looks from the outside like a scaling issue is actually a coordination problem.

He adds that the problem sits in the data layer between hardware and models. That layer has to keep data available, isolate workloads across tenants, and handle very different usage patterns at the same time. If that slips, utilization drops, performance degrades, and the system fragments. This is the layer that determines whether an AI system actually works in production, even though it’s not the one that has gotten the attention.

In VAST’s architecture, that includes built-in multi-tenancy controls, QoS policies, and a single system handling object, file, and streaming data through the same interfaces.

The Pipeline Everyone Recognizes Ends Too Early

The next part of the system is the one most people think they already understand, because it looks familiar, Vallery says. The first half of the pipeline (ingestion, transformation, curation, and distribution) has been around for more than a decade and is recognizable in cloud analytics stacks.

In this traditional model, data comes in, gets cleaned up, organized, and made queryable, and is then moved to wherever it needs to be processed.

That idea defined the last era of cloud. Systems like Spark, Databricks, and Snowflake made it possible to take messy data and extract something useful from it. But that pipeline, as complete as it looked, stopped short of actually turning that data into something that could act, adapt, or improve on its own.

What’s different now is not the existence of those steps, but what they feed into. Ingestion still starts with raw data, whether that is web-scale text, vehicle telemetry, or manufacturing signals.

Transformation still means feature extraction, normalization, filtering, and so on. Curation still builds catalogs and embeddings so the data can be searched and understood. Distribution still moves that data closer to where compute lives. But instead of ending in dashboards or reports, this pipeline now feeds directly into systems that continue working on that data in real time.

In VAST’s case, those stages run against a single platform that combines its DataStore, database layer, event broker, and DataEngine so the data does not have to move between separate systems at each step.

When Did the Pipeline Stop Being a Pipeline?

What follows those first four steps is where the system changes character, Vallery explains.

Training, inference, agents, and governance are often described as separate stages, but in practice, he says, they collapse into a loop that never really ends.

Data is ingested, transformed, and curated so it can be used to build a model. That model is deployed and begins serving requests, and then something new happens: the system starts generating its own inputs. “The outcome of that process,” he told the crowd, “is that new knowledge, those new queries that have been done, and certainly, all of those tool chains and problems that have been solved turn into new data to train on.”

“The frontier model builders got this whole pipeline working. I’m going to call it like 12 months ago,” Vallery said, and the effect is visible in how quickly those systems improve once deployed. Agents are the forcing function here. They operate continuously, issuing queries, retrieving data, generating intermediate outputs, and feeding those results back into the system. Every interaction becomes input for the next cycle.

VAST is the layer that spans that loop, with storage, vector database, streaming, and execution all operating on the same underlying dataset.

Where the System Starts to Strain

Once that loop is running, the problem shows up fast because of how much data is being moved around. The usual cloud setup assumes data sits in one place and gets pulled into different systems to be processed, analyzed, and served. Each step ends up making its own copy, moving it somewhere else, and working on it there. That was fine when workloads were occasional and delays didn’t matter much. It breaks down when the system is running all the time and every step depends on the last one finishing quickly.

Vallery describes a different approach that keeps things much simpler. Instead of moving data into another system to process it, “you can send the query from the analytics system into VAST, and then VAST does that filtering before it ever leaves the system.”

That means only the results move, not the full dataset. In practice, this is enabled by VAST’s DataStore and DataEngine, which allow queries and transformations to run directly where the data lives instead of exporting it into separate systems.
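The difference between exporting data and pushing the filter down to it can be sketched in a few lines of Python. This is a toy, in-memory illustration and not VAST’s API: the `Row` type, the `make_store` helper, and both function names are hypothetical, and the count of rows moved stands in for bytes crossing the network.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Row:
    # Hypothetical telemetry record, used only for illustration.
    vehicle_id: int
    speed_kph: float


def make_store(n):
    """Build a toy dataset; speeds cycle through 0..199 kph."""
    return [Row(vehicle_id=i, speed_kph=float(i % 200)) for i in range(n)]


def export_then_filter(store, predicate):
    """Copy every row out of the store, then filter on the client side."""
    moved = list(store)  # the full dataset crosses the boundary
    result = [row for row in moved if predicate(row)]
    return result, len(moved)


def filter_in_place(store, predicate):
    """Evaluate the predicate where the data lives; only matches leave."""
    result = [row for row in store if predicate(row)]
    return result, len(result)


def is_fast(row):
    return row.speed_kph >= 190.0


STORE = make_store(10_000)
exported, rows_moved_export = export_then_filter(STORE, is_fast)
pushed, rows_moved_pushdown = filter_in_place(STORE, is_fast)

print(rows_moved_export, rows_moved_pushdown)  # prints "10000 500"
```

Both paths return the same answer. What changes is how much data has to travel to produce it, and that cost is paid again on every pass through the loop.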

Over time, that makes a big difference. Less data moving around means less delay, fewer copies, and less strain on the system. In a loop that never stops, that is what keeps things from slowing down or falling apart.

When Data Stops Living in One Place

Once you stop moving data around inside a single environment, the next issue shows up at a much larger scale. The system itself is no longer in one place. Compute is being deployed where power is available, wherever capacity can be brought online, wherever regulations allow it. The idea that everything sits neatly inside one region starts to fall apart pretty quickly. You end up with GPUs in different locations, often across countries, sometimes across entirely separate environments, all needing access to the same data at the same time.

“The cloud has been fundamentally built on a premise of data has gravity, and you bring your data to the cloud region,” Vallery says. That model made sense when infrastructure was centralized. It becomes a problem when compute is scattered. What replaces it is a system where the data can be presented wherever the compute happens to be, with consistency across all those locations. That includes handling regulations about where data can live and who can access it, which are changing quickly and differently across regions.

This is where VAST’s DataSpace provides a global namespace across environments, with Polaris managing placement and access policies across regions.

The system has to keep everything aligned without forcing everything into one place, and that becomes just as important as performance or scale.

Training Stops Being the Center of the System

“By the end of this year, over 90% of the GPU capacity deployed globally will not be used for training. It’ll actually be used for AI inferencing and agentic inferencing, primarily,” Vallery said. The center of gravity has moved from building models to running them.

That shift changes what the system has to do. Training is heavy, but it is contained. It runs, it finishes, it writes checkpoints. Inference does not stop. Requests come in constantly, data is pulled in alongside them, and the system has to keep up without interruption.

When agents are involved, that pressure increases further because they are not issuing single requests. They are running longer processes, pulling in more context, and interacting with multiple systems as they go. The workload becomes continuous, and the infrastructure has to be built for that pattern rather than the bursty, contained behavior of training.

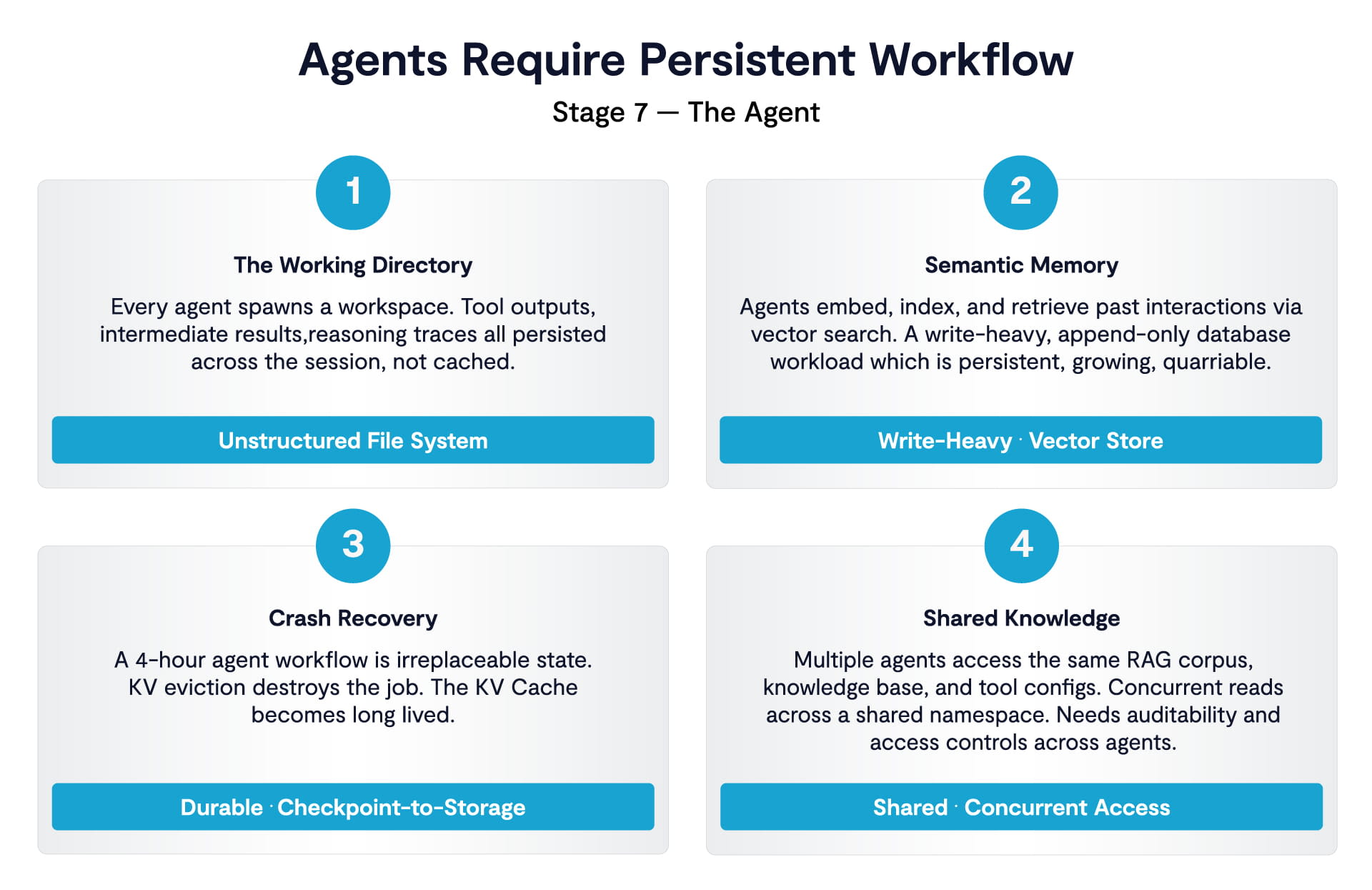

Once inference runs all the time, the problem becomes keeping everything working together. Agents run long jobs over shared data, using files, logs, and embeddings over and over. As Jason Vallery says, “agents are persistent workflow. They need a working directory. Typically, this is a file system.” These jobs can run for hours, and if something breaks, you lose that progress. At scale, you’re dealing with thousands of agents working on the same data at once, which turns into a coordination problem.
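The working-directory point can be made concrete with a small sketch. It assumes a hypothetical agent whose only state is a list of results and a step counter, saved as `checkpoint.json` in its working directory; the file name, the `run_agent` function, and the simulated crash are illustrative and not taken from the talk. Progress is written atomically after each step, so a restart resumes instead of starting over.

```python
import json
import os
import tempfile
from pathlib import Path
from typing import Optional

CHECKPOINT_NAME = "checkpoint.json"  # hypothetical file name


def save_checkpoint(workdir: Path, state: dict) -> None:
    """Write state to a temp file in the same directory, then rename over the old one."""
    fd, tmp_name = tempfile.mkstemp(dir=workdir, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
        f.flush()
        os.fsync(f.fileno())
    os.replace(tmp_name, workdir / CHECKPOINT_NAME)  # atomic swap


def load_checkpoint(workdir: Path) -> dict:
    """Return the saved state, or a fresh one if no checkpoint exists."""
    path = workdir / CHECKPOINT_NAME
    if path.exists():
        return json.loads(path.read_text())
    return {"next_step": 0, "results": []}


def run_agent(workdir: Path, total_steps: int, fail_at: Optional[int] = None) -> dict:
    """Run steps in order, checkpointing after each; fail_at simulates a crash."""
    state = load_checkpoint(workdir)
    for step in range(state["next_step"], total_steps):
        if fail_at is not None and step == fail_at:
            raise RuntimeError(f"simulated crash at step {step}")
        state["results"].append(step * step)  # stand-in for real work
        state["next_step"] = step + 1
        save_checkpoint(workdir, state)
    return state


with tempfile.TemporaryDirectory() as d:
    workdir = Path(d)
    try:
        run_agent(workdir, total_steps=5, fail_at=3)  # dies partway through
    except RuntimeError:
        pass
    final = run_agent(workdir, total_steps=5)  # resumes at step 3

print(final["results"])  # prints "[0, 1, 4, 9, 16]"
```

The pattern is simple for one agent on one disk. With thousands of agents sharing data, it depends on the file system underneath being fast, consistent, and available everywhere the agents run.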

Memory at the Center

The next limit is memory. Each request brings along prompts, retrieved data, and intermediate results, and GPU memory isn’t enough to hold it all. “You want at least 16 terabytes of KV store per GPU,” Vallery says, which means a lot of that data has to live outside the GPU and be shared efficiently. The challenge shifts from compute to managing and reusing all that data.
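A back-of-the-envelope estimate shows why this cached context outgrows GPU memory. The per-token formula below (a key and a value vector per layer per KV head) is the standard one for transformer attention. The model dimensions and the 80 GB of GPU memory are illustrative assumptions, not figures from the talk, and 16 terabytes is read as decimal terabytes.

```python
def kv_bytes_per_token(num_layers, num_kv_heads, head_dim, bytes_per_value):
    """Bytes of KV cache needed to hold one token of context."""
    # Factor of 2: one key vector and one value vector per layer per KV head.
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value


def tokens_that_fit(capacity_bytes, per_token_bytes):
    """Whole tokens of context that fit in a given capacity."""
    return capacity_bytes // per_token_bytes


# Hypothetical model: 80 layers, 8 KV heads, head dimension 128, 16-bit values.
PER_TOKEN = kv_bytes_per_token(
    num_layers=80, num_kv_heads=8, head_dim=128, bytes_per_value=2
)

# Hypothetical 80 GB GPU, ignoring the space taken by model weights.
TOKENS_IN_GPU_MEMORY = tokens_that_fit(80 * 10**9, PER_TOKEN)

# The 16 TB per GPU quoted in the talk.
TOKENS_IN_KV_STORE = tokens_that_fit(16 * 10**12, PER_TOKEN)

print(PER_TOKEN)             # prints "327680"
print(TOKENS_IN_GPU_MEMORY)  # prints "244140"
print(TOKENS_IN_KV_STORE)    # prints "48828125"
```

Under these assumptions each token of context costs 320 KiB. The GPU could hold roughly 244,000 tokens even if it held nothing else, while 16 TB holds about 49 million, a gap of 200x that has to be covered by something outside the GPU.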

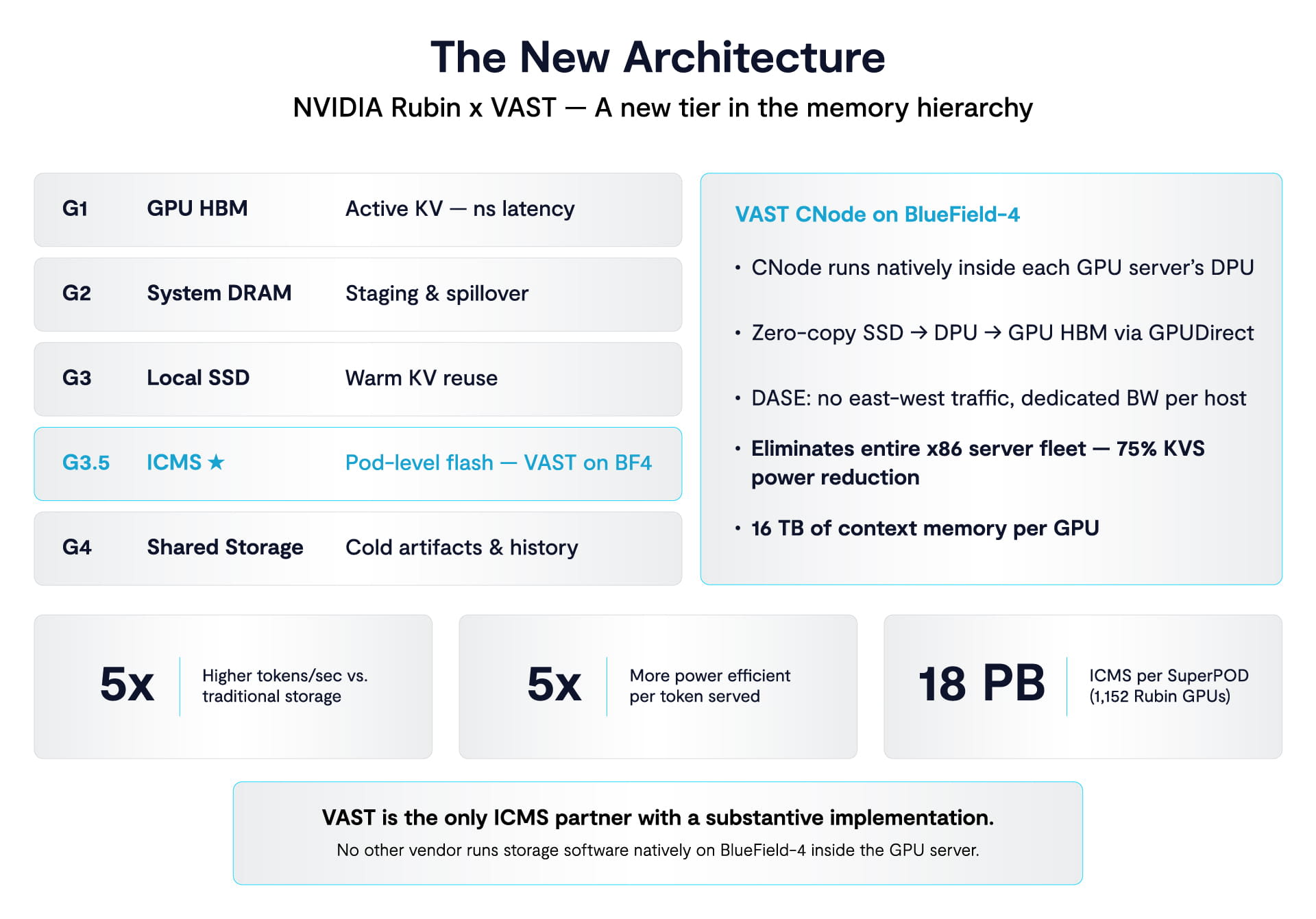

VAST addresses this by persisting that context outside the GPU layer and serving it directly over RDMA, with control running on BlueField DPUs inside the GPU cluster to remove intermediate layers.

That memory pressure forces changes in how systems are built. Older designs move data through multiple layers before it reaches storage, which adds delay and extra infrastructure. A more direct connection between GPUs and storage removes those steps, cuts latency, and reduces the number of servers needed. At this scale, that’s what keeps things fast enough. That architecture eliminates the traditional x86 translation layer and allows capacity and performance to scale more independently.

Planning also changes, because storage is now about far more than throughput. Data builds up across every step, from ingestion to embeddings to agent activity. “One petabyte just turned into 20 petabytes of storage demand.” The only way to manage that is to avoid making copies across different systems and keep everything in one place.

Because VAST operates on a single underlying data platform, the same dataset is reused across all stages instead of being duplicated at each step.

As agents do more, control becomes important. These systems read, write, and act across multiple resources, which makes it hard to track what’s happening if data is spread out. Vallery is blunt about it: “I’m terrified of agents, honestly.” A single system makes it possible to control access, track activity, and understand what’s happening.

With all data and operations running through one platform, access control, auditability, and policy enforcement can be applied consistently across files, embeddings, and workflows.

At that point, it’s not about individual parts anymore. The system has to keep running without breaking, with data, compute, and workloads all working together. That’s what separates systems that work from ones that don’t, Vallery says.