Beyond Better Codes

In our last installment, we saw how locally decodable codes allow the VAST Data Platform to use very wide stripes, typically 150D+4P, without having to read all 149 remaining data strips to rebuild from a device failure.

Another vendor might have taken locally decodable codes and applied them to a traditional RAID system, where users have to specify the number of data strips and protection strips for multiple RAID sets and the system reconstructs the whole contents of each failed SSD. But at VAST Data, we aren’t so easily satisfied.

No Nerd Knobs

Other storage systems make administrators choose between the efficiency of wide stripes and the lower write latency of replication or narrow stripes. The large Storage Class Memory write buffer in a VAST system decouples write latency from the backend data layout: because the system acknowledges writes from Storage Class Memory and accumulates data there, backend stripe width doesn’t affect write latency.

VAST systems determine stripe width automatically based on the number of SSDs in the cluster, the relative wear of those SSDs, and other more esoteric inputs, freeing storage administrators from choosing the right striping and protection level for each workload. Our coding is also dynamic, so when one of 608 SSDs in a system that’s using 600D+8P encoding fails, we can rebuild onto 599D+8P stripes.
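The 608-SSD example can be reproduced with a small sketch. It assumes one strip per SSD and a fixed parity count, and it ignores SSD wear and the other inputs mentioned above. The function names are hypothetical and are not part of any VAST interface.

```python
def stripe_geometry(healthy_ssds: int, parity_strips: int) -> tuple[int, int]:
    """Return (data_strips, parity_strips) for the widest stripe that fits.

    Assumption: one strip per SSD, with the parity count held constant.
    """
    if healthy_ssds <= parity_strips:
        raise ValueError("not enough SSDs to hold any data strips")
    return healthy_ssds - parity_strips, parity_strips


def parity_overhead(data_strips: int, parity_strips: int) -> float:
    """Fraction of each stripe's raw capacity spent on parity."""
    return parity_strips / (data_strips + parity_strips)


before = stripe_geometry(608, 8)  # all SSDs healthy
after = stripe_geometry(607, 8)   # one SSD has failed

print(before, after)
print(f"{parity_overhead(*before):.4f} -> {parity_overhead(*after):.4f}")
```

Under these assumptions, losing one SSD narrows the stripe by one data strip and barely changes the parity overhead.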

Intelligent, Data Only Rebuilds

Since the VAST Element Store (our hybrid file system and object store) manages files and objects, not just arbitrary blocks of data, we know which blocks on each SSD are in use, which are empty, and even which are used but hold deleted data. When an SSD fails, we only have to rebuild the live data and can ignore the deleted data and empty space.
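As a rough illustration of a data-only rebuild, the sketch below filters a failed SSD's block map down to the blocks that still hold live data. The `BlockState` and `Block` types are hypothetical stand-ins. The real Element Store metadata is not described in this article.

```python
from dataclasses import dataclass
from enum import Enum


class BlockState(Enum):
    LIVE = "live"        # referenced by a file or object
    DELETED = "deleted"  # still on flash, but no longer referenced
    EMPTY = "empty"      # never written


@dataclass(frozen=True)
class Block:
    block_id: int
    state: BlockState


def blocks_to_rebuild(blocks: list[Block]) -> list[Block]:
    """Keep only blocks holding live data; skip deleted and empty ones."""
    return [b for b in blocks if b.state is BlockState.LIVE]


failed_ssd = [
    Block(0, BlockState.LIVE),
    Block(1, BlockState.DELETED),
    Block(2, BlockState.EMPTY),
    Block(3, BlockState.LIVE),
]

work = blocks_to_rebuild(failed_ssd)
print([b.block_id for b in work])  # [0, 3]
```

A block-level RAID controller has no equivalent of `BlockState`, so it would have to reconstruct all four blocks.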

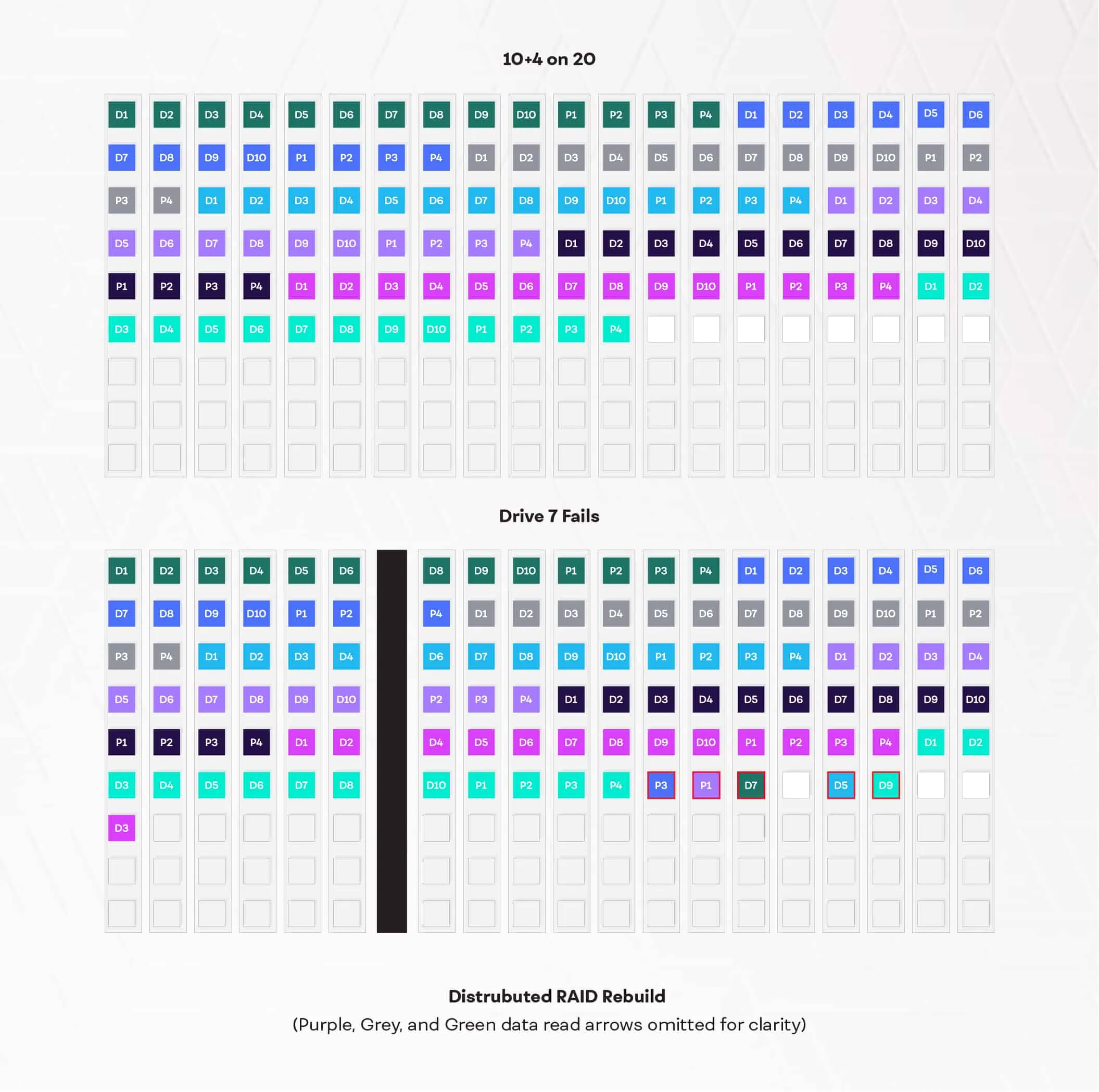

Declustered Parity

Rather than clustering SSDs to hold data strips, parity strips, or act as spares, the VAST Element Store distributes erasure-coded stripes across all the SSDs in the system. The system selects which SSDs to write each stripe of data to based on SSD wear, not the locations being written.

In a conventional RAID system, the rebuild after a device failure has to read from the remaining drives and write the reconstructed data to a replacement, or spare, device. This many-to-one rebuild can only proceed as fast as the spare drive can save data. It also creates an I/O hotspot, stressing the small number of drives involved in the rebuild and impacting application performance.

As illustrated above, when a drive in a declustered parity system fails, the system reads data from all the remaining drives and then writes the reconstructed data not to a dedicated spare drive, but to free space across the whole system (the blocks outlined in red). This many-to-many rebuild completes much faster, and since the load is spread across the whole system, there’s less impact on performance.
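A back-of-the-envelope comparison shows why spreading the writes matters. The sketch below models only the write side of the rebuild. The capacities and bandwidths are illustrative placeholders rather than measurements, and the model ignores read, controller, and network limits.

```python
def many_to_one_seconds(rebuild_bytes: int, spare_write_bps: int) -> float:
    """Conventional RAID: every rebuilt byte lands on a single spare drive."""
    return rebuild_bytes / spare_write_bps


def many_to_many_seconds(
    rebuild_bytes: int, surviving_drives: int, per_drive_write_bps: int
) -> float:
    """Declustered layout: rebuilt data is spread over all surviving drives."""
    return rebuild_bytes / (surviving_drives * per_drive_write_bps)


REBUILD_BYTES = 10 * 10**12  # 10 TB to reconstruct (placeholder)

# The spare drive absorbs writes at its full 1 GB/s.
one = many_to_one_seconds(REBUILD_BYTES, 10**9)

# 100 surviving drives each give up only 100 MB/s to the rebuild.
many = many_to_many_seconds(REBUILD_BYTES, 100, 10**8)

print(f"many-to-one:  {one:,.0f} s")
print(f"many-to-many: {many:,.0f} s")
```

With these placeholder numbers, each drive in the many-to-many case contributes a tenth of the bandwidth the spare does, yet the rebuild finishes ten times sooner.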

Eliminating the Controller Bottleneck

Declustering RAID from designated spare disks parallelizes the rebuild process and eliminates the bottleneck of having to write all the rebuilt data to one spare device. This is a good thing, but there’s an old saying among system architects: “You never eliminate a bottleneck, you just shift it somewhere else.” For most storage systems, declustering RAID shifts the bottleneck to the two controllers that are SAS-connected to the devices being rebuilt.

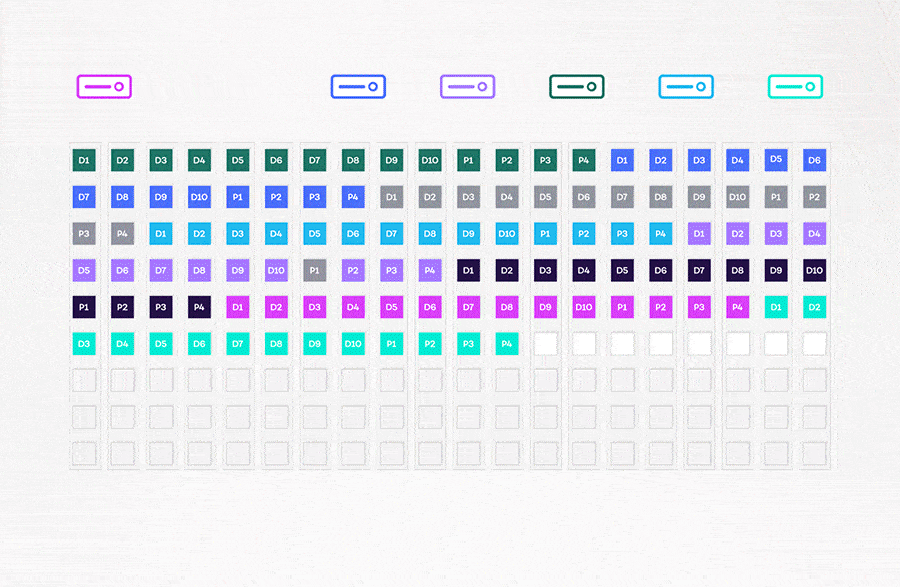

VAST’s DASE architecture parallelizes the rebuild not only across all the SSDs in the system but, just as importantly, across all the VAST Servers in the system. As you can see in the diagram below, each VAST Server reads three surviving data strips (one quarter of the 10 data strips, rounded up to 3) and the four parity strips from a stripe, rebuilds the data strip from the failed SSD, and writes the rebuilt data to a strip on an SSD that’s not already participating in the stripe being rebuilt.
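The strip count in that example is simple arithmetic, sketched below. The function only reproduces the rule stated in the text (one quarter of the data strips, rounded up, plus all parity strips). It does not implement the erasure code itself, and its name is hypothetical.

```python
import math


def strips_read_for_rebuild(data_strips: int, parity_strips: int) -> int:
    """Strips read to rebuild one lost data strip with local decoding.

    Assumption taken from the text: a quarter of the data strips,
    rounded up, plus every parity strip.
    """
    return math.ceil(data_strips / 4) + parity_strips


# The 10D+4P stripe in the example: 3 data strips + 4 parity strips.
print(strips_read_for_rebuild(10, 4))   # 7

# The same rule applied to a 150D+4P stripe.
print(strips_read_for_rebuild(150, 4))  # 42
```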

With the VAST Servers working independently to rebuild each stripe, there’s none of the east-west internode chatter that can clog the networks of shared-nothing systems, and a lot more horsepower available than a dual-controller system could spare.

A Server sees in the system metadata that there’s data to rebuild, claims a stripe, rebuilds it, writes the result to flash, and updates the metadata pointer to that strip. A large VAST system will have dozens of VAST Servers sharing the load and shortening rebuild time.
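The claim-and-rebuild loop can be sketched with threads standing in for VAST Servers. The `RebuildQueue` class below is a hypothetical, in-memory stand-in for the shared system metadata, and the rebuild step is a placeholder. The point is only that workers coordinate through shared state rather than by talking to each other.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class RebuildQueue:
    """Stand-in for shared metadata listing stripes that need rebuilding."""

    def __init__(self, stripe_ids):
        self._pending = list(stripe_ids)
        self._lock = threading.Lock()
        self.rebuilt_by = {}  # stripe_id -> name of the server that rebuilt it

    def claim(self):
        """Atomically hand out one pending stripe, or None when none remain."""
        with self._lock:
            return self._pending.pop() if self._pending else None

    def mark_rebuilt(self, stripe_id, server):
        """Record completion; a real system would update the strip pointer."""
        with self._lock:
            self.rebuilt_by[stripe_id] = server


def server_loop(name, queue):
    """Each server works independently: claim, rebuild, record, repeat."""
    count = 0
    while (stripe_id := queue.claim()) is not None:
        # Placeholder for reading surviving strips and reconstructing the lost one.
        queue.mark_rebuilt(stripe_id, name)
        count += 1
    return count


queue = RebuildQueue(range(1000))
servers = [f"server-{i}" for i in range(8)]
with ThreadPoolExecutor(max_workers=len(servers)) as pool:
    counts = list(pool.map(lambda s: server_loop(s, queue), servers))

print(sum(counts), len(queue.rebuilt_by))  # 1000 1000
```

Because each stripe is claimed exactly once, adding servers shortens the rebuild without requiring any server-to-server messages in this model.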

Since a system with D+nP protection only loses data when the (n+1)th device fails before the first failed device has been rebuilt, rebuild time is an important factor in any data loss probability calculation.
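To see how strongly rebuild time enters that calculation, here is a deliberately simplified model. It assumes independent device failures at a constant rate and a single fixed rebuild window, and it ignores the fact that later failures start rebuilds of their own. The MTBF figure is an illustrative placeholder, not a vendor specification.

```python
import math


def prob_loss_during_rebuild(
    devices: int, parity: int, rebuild_hours: float, mtbf_hours: float
) -> float:
    """P(at least `parity` more devices fail before the first rebuild ends).

    Simplified binomial model: each surviving device fails independently
    within the rebuild window with probability p = 1 - exp(-t / MTBF).
    """
    p = -math.expm1(-rebuild_hours / mtbf_hours)
    others = devices - 1
    total = 0.0
    for k in range(parity, others + 1):
        total += math.comb(others, k) * p**k * (1.0 - p) ** (others - k)
    return total


MTBF_HOURS = 1_000_000  # placeholder value for illustration only

slow = prob_loss_during_rebuild(154, 4, rebuild_hours=24, mtbf_hours=MTBF_HOURS)
fast = prob_loss_during_rebuild(154, 4, rebuild_hours=1, mtbf_hours=MTBF_HOURS)

print(f"24-hour rebuild: {slow:.3e}")
print(f" 1-hour rebuild: {fast:.3e}")
print(f"ratio: {slow / fast:,.0f}")
```

In this model, with four parity strips the loss probability scales roughly with the fourth power of the rebuild window, so shortening rebuilds pays off far more than linearly.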

1+1=3 for Erasure Codes Plus Architecture

As we saw in part 1, locally decodable erasure codes allow VAST to provide a much higher level of data protection (typical Mean Time to Data Loss of 44 million years) while requiring only a fraction, typically one quarter, of the data to recover from a device failure. VAST's unique Disaggregated Shared Everything (DASE) architecture further accelerates rebuilds by distributing the rebuild load across all the VAST Servers and all the SSDs in the cluster.

The result is that the VAST Data Platform, like many cloud environments, manages failures in place. A device failure in a VAST cluster reduces available capacity a little, but with N+4 or higher levels of protection, a device failure is just a low-level event in your support ticket tracking system, not the crisis-inducing moment that failures so often are in conventional storage systems.